Log(Graph): A Near-Optimal High-Performance Graph Representation

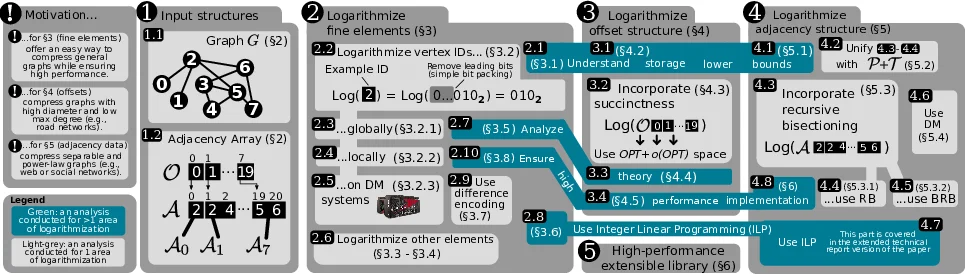

Today’s graphs used in domains such as machine learning or social network analysis may contain hundreds of billions of edges. Yet, they are not necessarily stored efficiently, and standard graph representations such as adjacency lists waste a significant number of bits while graph compression schemes such as WebGraph often require time-consuming decompression. To address this, we propose Log(Graph): a graph representation that combines high compression ratios with very low-overhead decompression to enable cheaper and faster graph processing. The key idea is to encode a graph so that the parts of the representation approach or match the respective storage lower bounds. We call our approach “graph logarithmization” because these bounds are usually logarithmic. Our high-performance Log(Graph) implementation based on modern bitwise operations and state-of-the-art succinct data structures achieves high compression ratios as well as performance. For example, compared to the tuned Graph Algorithm Processing Benchmark Suite (GAPBS), it reduces graph sizes by 20-35% while matching GAPBS’ performance or even delivering speedups due to reducing amounts of transferred data. It approaches the compression ratio of the established WebGraph compression library while enabling speedups of up to more than 2x. Log(Graph) can improve the design of various graph processing engines or libraries on single NUMA nodes as well as distributed-memory systems.

💡 Research Summary


The paper “Log(Graph): A Near‑Optimal High‑Performance Graph Representation” addresses the growing challenge of storing and processing massive graphs that contain billions of edges. Traditional adjacency‑list representations waste a large amount of memory, while state‑of‑the‑art compression schemes such as WebGraph achieve high compression ratios at the cost of expensive decompression, which hurts the performance of graph algorithms that need fast random access (e.g., edge existence checks, triangle counting).

Log(Graph) proposes a new design paradigm called “graph logarithmization”. The central idea is to encode each component of a graph (vertex identifiers, offset arrays, adjacency data, edge weights) using a number of bits that matches the information‑theoretic lower bound ⌈log |S|⌉, where |S| is the number of possible values for that component. By doing so, the representation approaches the optimal storage size while keeping the decoding cost essentially zero.
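As a rough illustration of the bound ⌈log |S|⌉ (base 2), the following minimal sketch computes how many bits a component needs and how that compares with a fixed machine word. The helper names `bits_needed` and `saving_vs_word` are hypothetical and do not come from the paper.

```python
def bits_needed(num_values: int) -> int:
    """Bits required to distinguish num_values distinct values: ceil(log2(num_values))."""
    if num_values < 1:
        raise ValueError("num_values must be positive")
    # For k >= 1, (k - 1).bit_length() equals ceil(log2(k)) exactly, with no floating point.
    return (num_values - 1).bit_length()


def saving_vs_word(num_values: int, word_bits: int = 32) -> float:
    """Fraction of a fixed-size word left unused when only bits_needed() bits are stored."""
    return 1.0 - bits_needed(num_values) / word_bits


if __name__ == "__main__":
    n = 1_000_000_000  # illustrative vertex count, not a figure from the paper
    print(bits_needed(n), "bits per vertex ID instead of 32 or 64")
    print(f"unused share of a 64-bit word: {saving_vs_word(n, 64):.1%}")
```

Under these assumptions, a graph with one billion vertices needs 30 bits per vertex ID, so a 64-bit identifier leaves more than half of each word unused.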

The authors split the approach into three orthogonal layers:

- Fine‑grained element logarithmization (Section 3). Vertex IDs are first stored globally with ⌈log n⌉ bits per identifier. A more aggressive local scheme stores each neighbor identifier of a vertex v with only as many bits as the largest ID in its neighbor set Nᵥ requires, that is, ⌈log max(Nᵥ)⌉ bits. The local scheme adds a small per‑vertex metadata field that records the bit‑length, but the overall overhead is negligible. Edge weights are similarly compressed to ⌈log Wₘₐₓ⌉ bits, where Wₘₐₓ is the maximum edge weight.

- Offset array compression (Section 4). Traditional adjacency arrays keep an offset array that occupies O(n log n) bits. Log(Graph) replaces it with a succinct bit‑vector that supports rank and select in O(1) time, combined with a simple delta‑encoding. This reduces the offset structure to O(m + o(m)) bits, essentially the information‑theoretic optimum, while preserving constant‑time random access.

- Adjacency data compression (Section 5). The adjacency data is compressed by exploiting structural properties of real‑world graphs (e.g., power‑law degree distribution, separability). The authors recursively bisect the graph, reorder vertex IDs using an Integer Linear Programming (ILP) heuristic, and then store the neighbor identifiers with the locally optimal bit‑length. The result is an adjacency block in which each edge occupies only the bit‑length chosen for its vertex, achieving compression ratios comparable to WebGraph but without a costly decompression phase.
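The fixed-width packing behind the local scheme can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes the per-vertex width is taken from the largest neighbor ID, uses a Python integer as the bit buffer (a real implementation would operate on machine words), and the names `local_width`, `pack_neighbors`, and `get_neighbor` are hypothetical.

```python
def local_width(neighbors):
    """Bits needed for the largest neighbor ID of one vertex (at least 1)."""
    if not neighbors:
        return 1
    return max(max(neighbors).bit_length(), 1)


def pack_neighbors(neighbors):
    """Pack all neighbor IDs of one vertex into a single integer, `width` bits each."""
    width = local_width(neighbors)
    packed = 0
    for i, v in enumerate(neighbors):
        packed |= v << (i * width)  # the i-th neighbor occupies bits [i*width, (i+1)*width)
    return packed, width


def get_neighbor(packed, width, i):
    """Random access to the i-th neighbor with one shift and one mask, no decompression pass."""
    mask = (1 << width) - 1
    return (packed >> (i * width)) & mask


if __name__ == "__main__":
    nbrs = [3, 17, 42, 5]
    packed, width = pack_neighbors(nbrs)
    print(width, [get_neighbor(packed, width, i) for i in range(len(nbrs))])
```

For the neighbor list `[3, 17, 42, 5]`, the largest ID is 42, so each entry takes 6 bits and the whole list takes 24 bits rather than 128 bits with 32-bit IDs. Access stays a shift and a mask, which is why decoding is cheap.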

The paper also extends the scheme to distributed‑memory systems (Section 3.2.3). Vertex IDs are split into an intra‑node part (encoded either globally or locally) and an inter‑node part that identifies the node in the cluster. This yields a compact representation that minimizes inter‑node communication while preserving the same per‑node compression guarantees.
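One way to picture the intra‑node/inter‑node split is shown below. This sketch assumes vertices are distributed in equal, power‑of‑two sized blocks, so that the high bits of a global ID name the owning node and the low bits give the position within that node. The summary does not specify the paper's actual layout, and `split_id` and `join_id` are hypothetical helpers.

```python
def split_id(global_id: int, local_bits: int):
    """Split a global vertex ID into (node, local_id) under a block distribution."""
    node = global_id >> local_bits                   # inter-node part: which node owns the vertex
    local_id = global_id & ((1 << local_bits) - 1)   # intra-node part: index within that node
    return node, local_id


def join_id(node: int, local_id: int, local_bits: int) -> int:
    """Inverse of split_id: rebuild the global ID from its two parts."""
    return (node << local_bits) | local_id


if __name__ == "__main__":
    local_bits = 20  # assumed: 2**20 vertices per node
    gid = join_id(3, 12345, local_bits)
    print(gid, split_id(gid, local_bits))
```

With this layout, edges that stay inside one node only need the `local_bits`-wide part, and the node part is stored or sent only for edges that cross node boundaries.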

Implementation and performance.

Log(Graph) is implemented in modern C++ using SIMD‑friendly bit‑wise operations, succinct data‑structure libraries, and a lightweight ILP solver. The authors evaluate the system on a variety of synthetic (uniform random, power‑law, road networks) and real‑world graphs (web crawls, social networks) and on a broad set of algorithms: BFS, PageRank, Connected Components, Betweenness Centrality, Triangle Counting, and Single‑Source Shortest Path (SSSP).

Key results include:

- Memory reduction of 20-35% compared with a tuned GAPBS (Graph Algorithm Processing Benchmark Suite) adjacency‑list baseline.

- Offset compression alone reduces offset storage by more than 90 % without any measurable slowdown.

- Full adjacency compression brings total graph size within a few percent of WebGraph’s best‑case ratios, while delivering up to 2.2× speed‑ups on BFS and SSSP due to reduced data movement.

- The succinct offset structure provides rank/select queries in constant time, leading to virtually identical traversal performance to a plain offset array.

- In distributed settings, the intra/inter ID split cuts inter‑node traffic, enabling the same speed‑up trends on multi‑node runs.
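To make the succinct offset structure mentioned in these results more concrete, here is a minimal sketch of one standard bit‑vector encoding of offsets. Each vertex contributes a 1 followed by one 0 per neighbor, so the offset of vertex v equals `select1(v) - v`. It is an illustration under stated assumptions rather than the paper's exact scheme: `select1` is a linear scan here, whereas succinct libraries answer it in constant time with a small auxiliary index, and all function names are hypothetical.

```python
def build_offset_bits(degrees):
    """Encode degrees as a bit list: a 1 per vertex, then one 0 per neighbor, plus a final sentinel 1."""
    bits = []
    for d in degrees:
        bits.append(1)
        bits.extend([0] * d)
    bits.append(1)  # sentinel, so that offset(n) equals the total number of edges
    return bits


def select1(bits, k):
    """Position of the k-th set bit (k counts from 0). Naive O(length) scan for illustration."""
    seen = 0
    for pos, b in enumerate(bits):
        if b:
            if seen == k:
                return pos
            seen += 1
    raise IndexError("fewer than k + 1 set bits")


def offset(bits, v):
    """Start of vertex v's neighborhood in the adjacency array."""
    # The v-th 1 is preceded by v ones and offset(v) zeros, so its position is offset(v) + v.
    return select1(bits, v) - v


if __name__ == "__main__":
    degrees = [2, 0, 3, 1]
    bits = build_offset_bits(degrees)
    print(len(bits), [offset(bits, v) for v in range(len(degrees) + 1)])
```

For degrees `[2, 0, 3, 1]`, the bit‑vector has n + m + 1 = 11 bits and yields the offsets 0, 2, 2, 5, 6. A vertex's degree is the difference between consecutive offsets.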

The authors also provide a thorough theoretical analysis of the storage lower bounds for each component, proving that Log(Graph) achieves O(OPT + o(OPT)) bits, where OPT is the optimal information‑theoretic bound. They discuss the trade‑offs between global and local vertex‑ID encoding, the impact of vertex degree distribution on compression, and the overhead of the ILP reordering (which is linear in the number of edges and performed only once as a preprocessing step).

Impact and conclusions.

Log(Graph) demonstrates that it is possible to simultaneously approach optimal graph compression and retain high‑performance random access, overturning the conventional belief that compression necessarily incurs a heavy decompression penalty. By carefully aligning each graph component with its theoretical lower bound and using succinct data structures that support constant‑time queries, the authors achieve a practical system that can be dropped into existing graph processing pipelines with minimal code changes. The open‑source implementation, modular design, and extensive evaluation make Log(Graph) a compelling candidate for inclusion in future high‑performance graph analytics frameworks, especially on NUMA‑aware single‑node machines and large distributed clusters where memory bandwidth and network traffic dominate execution time.
