Learning Personalized Discretionary Lane-Change Initiation for Fully Autonomous Driving Based on Reinforcement Learning

In this article, the authors present a novel method to learn the personalized tactic of discretionary lane-change initiation for fully autonomous vehicles through human-computer interactions. Instead of learning from human-driving demonstrations, a reinforcement learning technique is employed to learn how to initiate lane changes from traffic context, the action of a self-driving vehicle, and in-vehicle user feedback. The proposed offline algorithm rewards the action-selection strategy when the user gives positive feedback and penalizes it when negative feedback. Also, a multi-dimensional driving scenario is considered to represent a more realistic lane-change trade-off. The results show that the lane-change initiation model obtained by this method can reproduce the personal lane-change tactic, and the performance of the customized models (average accuracy 86.1%) is much better than that of the non-customized models (average accuracy 75.7%). This method allows continuous improvement of customization for users during fully autonomous driving even without human-driving experience, which will significantly enhance the user acceptance of high-level autonomy of self-driving vehicles.

💡 Research Summary

The paper introduces a reinforcement‑learning (RL) framework that learns a driver’s personal discretionary lane‑change initiation strategy through direct human‑computer interaction rather than from large collections of human‑driving demonstrations. The authors formulate the problem as a discrete‑action Markov decision process: the state consists of a multi‑dimensional traffic context (relative distances and speeds of surrounding vehicles, lane density, inferred intents of neighboring cars, and the autonomous vehicle’s own acceleration/braking state), and the action space contains only two choices – “initiate lane change” or “maintain current lane”.

Instead of a handcrafted reward, the system uses explicit user feedback as the sole source of reinforcement. After each lane‑change decision the occupant can indicate whether the maneuver felt “comfortable” (positive feedback) or “uncomfortable” (negative feedback). Positive feedback yields a reward of +1, negative feedback –1. This binary reward directly encodes the driver’s subjective preference, enabling the learned policy to align with individual comfort criteria.

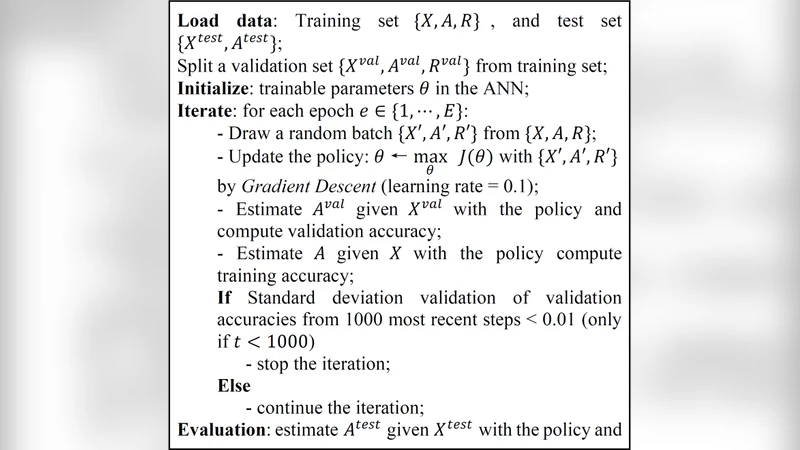

Training is performed offline. Collected (state, action, reward) tuples are fed into a policy‑gradient algorithm derived from REINFORCE. To improve sample efficiency, importance‑sampling weights correct for the shift between the behavior policy used during data collection and the current target policy, while a baseline function reduces variance. Each driver receives a personalized parameter vector θᵤ; the algorithm can start from a generic policy trained on all users and then fine‑tune it with that driver’s feedback, allowing rapid convergence even when feedback is sparse.

A key contribution is the multi‑dimensional scenario model. The authors quantify three competing costs associated with a lane‑change decision: (1) time loss (extra travel time), (2) safety risk (probability of conflict with surrounding vehicles), and (3) discomfort (jerk, lateral acceleration). These costs are combined into a weighted sum C = w₁·Δt + w₂·Risk + w₃·Discomfort, where the weights reflect the driver’s relative importance of each factor. By incorporating C into the reward signal, the RL agent learns a policy that simultaneously balances efficiency, safety, and ride comfort—far beyond the binary “change / stay” logic of many prior works.

The experimental protocol involved 30 participants interacting with a high‑fidelity driving simulator. Each participant experienced a series of traffic situations and provided immediate feedback after every lane‑change initiation. The dataset was split into training, validation, and test sets (approximately 5:1:1). Two model families were evaluated: (a) personalized models trained individually for each driver, and (b) a non‑personalized model trained on the pooled data. The personalized models achieved an average classification accuracy of 86.1 % (σ = 4.2 %) in predicting whether a driver would approve a lane‑change, whereas the generic model reached 75.7 % (σ = 5.6 %). Statistical tests confirmed the superiority of personalization (p < 0.01). Moreover, drivers who supplied frequent feedback converged to >80 % accuracy within five training epochs, while those with sparse feedback still benefited from the pre‑trained generic policy as a warm start.

The authors discuss several limitations. First, feedback latency: in real‑world driving, occupants may not be able to provide instantaneous evaluations, creating a temporal mismatch between the action and its reward. Second, feedback subjectivity: the same traffic situation may receive contradictory ratings depending on the driver’s mood or physiological state. Third, the current formulation only decides when to start a lane change; the subsequent execution (trajectory planning, longitudinal control) remains outside the scope.

Future work is outlined along three lines: (1) enriching the reward with continuous confidence scores and multimodal affective signals (facial expression, heart‑rate variability) to capture nuanced comfort levels; (2) integrating online learning so that the policy can adapt continuously during deployment; and (3) extending the action space to include full lane‑change execution, thereby learning end‑to‑end personalized maneuvering.

In conclusion, the study demonstrates that reinforcement learning driven by explicit user feedback can effectively capture and reproduce individual discretionary lane‑change tactics without requiring massive human‑driving datasets. The personalized policies outperform generic ones by a substantial margin, suggesting that such feedback‑centric adaptation could be a pivotal factor in increasing user acceptance of Level‑4/5 autonomous vehicles.

Comments & Academic Discussion

Loading comments...

Leave a Comment