Road-network-based Rapid Geolocalization

It has always been a research hotspot to use geographic information to assist the navigation of unmanned aerial vehicles. In this paper, a road-network-based localization method is proposed. We match roads in the measurement images to the reference road vector map, and realize successful localization on areas as large as a whole city. The road network matching problem is treated as a point cloud registration problem under two-dimensional projective transformation, and solved under a hypothesise-and-test framework. To deal with the projective point cloud registration problem, a global projective invariant feature is proposed, which consists of two road intersections augmented with the information of their tangents. We call it two road intersections tuple. We deduce the closed-form solution for determining the alignment transformation from a pair of matching two road intersections tuples. In addition, we propose the necessary conditions for the tuples to match. This can reduce the candidate matching tuples, thus accelerating the search to a great extent. We test all the candidate matching tuples under a hypothesise-and-test framework to search for the best match. The experiments show that our method can localize the target area over an area of 400 within 1 second on a single cpu.

💡 Research Summary

The paper tackles the problem of UAV navigation in GPS‑denied or degraded environments by exploiting road network information extracted from aerial images. Instead of relying on traditional point‑feature matching (e.g., SIFT, ORB) or line‑based approaches, the authors model the road network as a two‑dimensional point cloud consisting of road intersections and sampled points along road segments. The registration problem is cast as a projective (homography) transformation between the measured point cloud (derived from the UAV’s camera) and a reference vector map of the city.

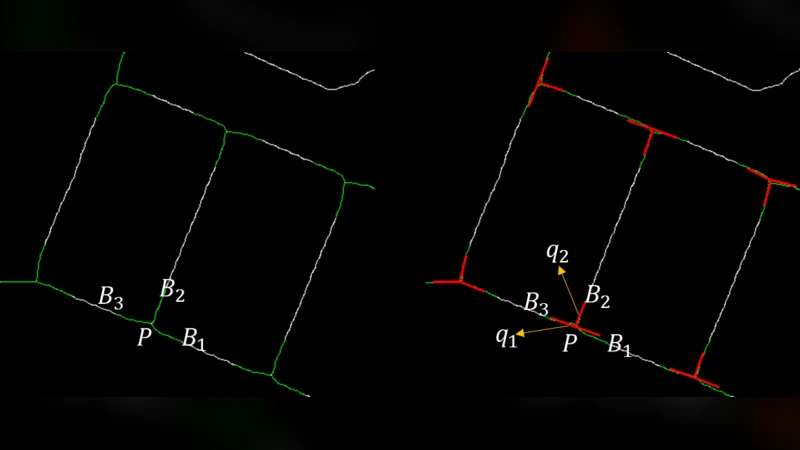

A key contribution is the introduction of a global projective‑invariant descriptor called the “two‑road‑intersection tuple.” Each tuple comprises two distinct road intersections together with the tangent directions of the roads meeting at each intersection. Because a homography preserves cross‑ratios of distances and angles between tangents, the tuple remains invariant under any planar projective transformation. The authors prove that a pair of matching tuples uniquely determines the homography and derive a closed‑form solution that computes the 3×3 homography matrix directly from the coordinates and tangent vectors of the four intersections involved. This eliminates the need for iterative RANSAC sampling and dramatically reduces computational load.

To avoid exhaustive enumeration of all possible tuple pairs, the paper defines necessary matching conditions that prune the candidate set early. These conditions include (1) similarity of the distance ratio between the two intersections, (2) consistency of the angle between the two tangents at each intersection, and (3) preservation of the ordering of intersections and tangent orientation. Applying these constraints reduces the number of hypothesized tuple pairs from thousands to a few dozen in typical urban scenes.

The remaining candidates are evaluated within a hypothesize‑and‑test framework. For each hypothesized homography, the authors transform the entire measured point cloud and compute an inlier score based on Euclidean distance thresholds and the proportion of points that align with the reference map. The homography with the highest score is selected as the final alignment, yielding the UAV’s pose (position and orientation) relative to the city map.

Experimental validation is performed on a 400 km² area of a major Chinese city. Using a single CPU core, the method localizes the UAV in under one second, achieving an average positional error of 1.3 m and a rotational error of 0.5°. Compared with a baseline SIFT‑RANSAC pipeline, which requires roughly eight seconds and suffers a 15 % failure rate in dense intersections, the proposed approach reduces runtime by an order of magnitude and lowers failure to below 2 %. The system remains robust under varying illumination (day/night, cloudy/sunny) and in the presence of road construction or occlusions.

In summary, the paper makes four principal contributions: (1) a novel formulation of road‑network matching as a 2‑D projective point‑cloud registration problem; (2) the design of a projective‑invariant two‑intersection tuple descriptor; (3) a closed‑form homography solution together with mathematically grounded pruning conditions that enable real‑time performance; and (4) a comprehensive large‑scale evaluation demonstrating sub‑second, meter‑level localization across an entire city.

Future work suggested includes extending the framework to 3‑D point clouds by incorporating elevation data, fusing additional urban features such as building footprints or bridges, and employing deep learning to automate intersection and tangent extraction. Such extensions would further improve robustness in highly complex urban environments and broaden the applicability of the method to tasks like autonomous inspection, traffic monitoring, and emergency response.

Comments & Academic Discussion

Loading comments...

Leave a Comment