Bio-Inspired Hashing for Unsupervised Similarity Search

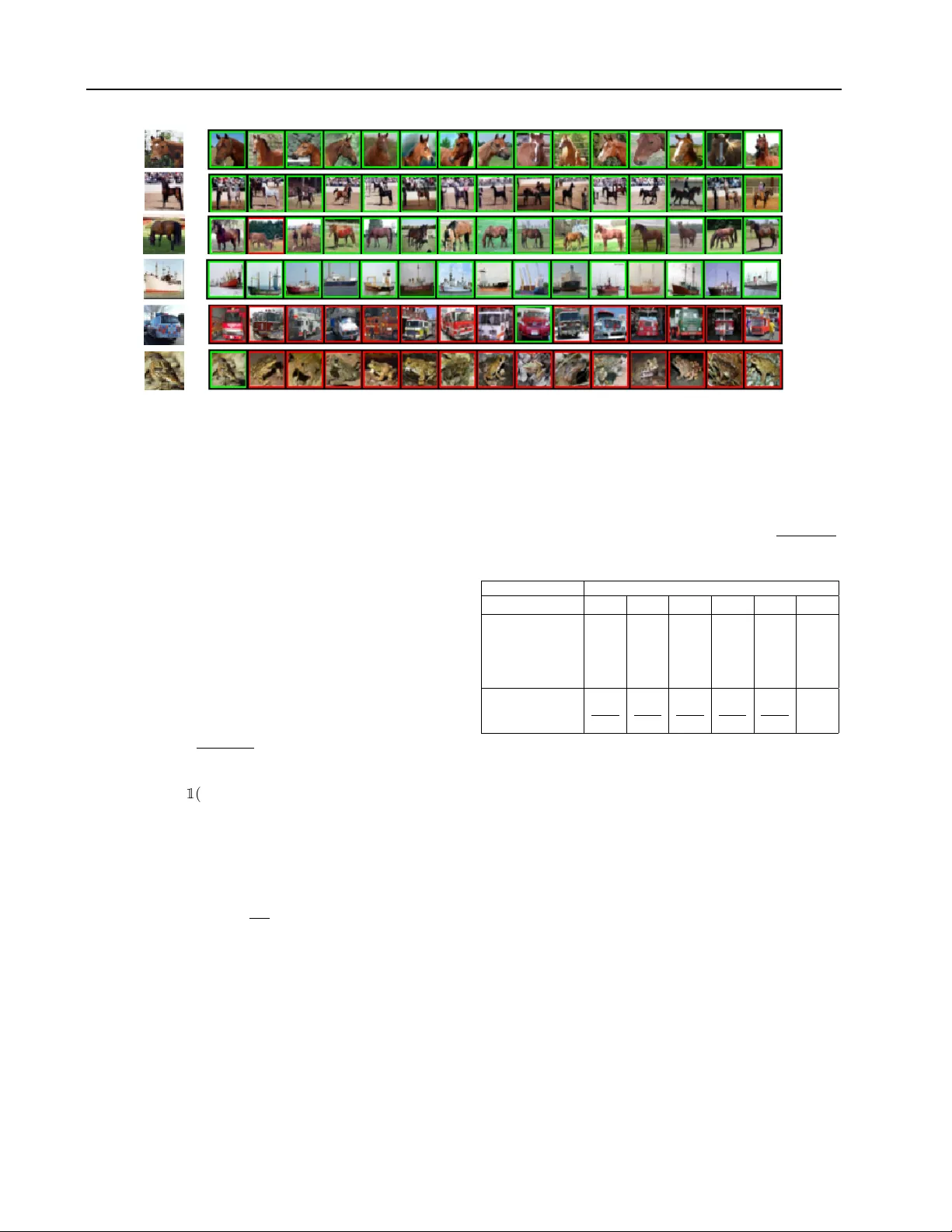

The olfactory circuit of the fruit fly Drosophila has inspired a new locality-sensitive hashing (LSH) algorithm, FlyHash. In contrast with classical LSH algorithms that produce low-dimensional hash codes, FlyHash produces sparse high-dimensional hash code…

Authors: Chaitanya K. Ryali, John J. Hopfield, Leopold Grinberg
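
To make the contrast between dense low-dimensional codes and sparse high-dimensional codes concrete, here is a minimal sketch of a FlyHash-style hash. It assumes the commonly described recipe: a sparse random binary projection into a much higher dimension, followed by winner-take-all sparsification that keeps only the top `k` activations. The names `make_projection` and `flyhash`, and the parameters `fan_in` and `k`, are hypothetical choices for illustration and are not taken from the authors' code.

```python
import numpy as np

def make_projection(d, m, fan_in, rng):
    """Sparse binary projection (assumed form): each of the m output
    units sums `fan_in` randomly chosen input dimensions out of d."""
    W = np.zeros((m, d), dtype=np.float64)
    for i in range(m):
        idx = rng.choice(d, size=fan_in, replace=False)
        W[i, idx] = 1.0
    return W

def flyhash(X, W, k):
    """Map rows of X (n, d) to binary codes (n, m) with exactly k ones each."""
    # Center each input vector (assumed preprocessing step).
    X = X - X.mean(axis=1, keepdims=True)
    acts = X @ W.T                                   # (n, m) activations
    # Winner-take-all: indices of the k largest activations per row.
    top = np.argpartition(-acts, k - 1, axis=1)[:, :k]
    codes = np.zeros(acts.shape, dtype=np.uint8)
    np.put_along_axis(codes, top, 1, axis=1)
    return codes

# Usage: 50-dimensional inputs hashed to 1000-dimensional codes with 20 active bits.
rng = np.random.default_rng(0)
X = rng.random((5, 50))
W = make_projection(d=50, m=1000, fan_in=5, rng=rng)
codes = flyhash(X, W, k=20)
```

With these illustrative settings each code has 1000 bits of which only 20 are set, so similarity between two items can be estimated from the overlap of their active bits.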