Problems and Prospects for Intimate Musical Control of Computers

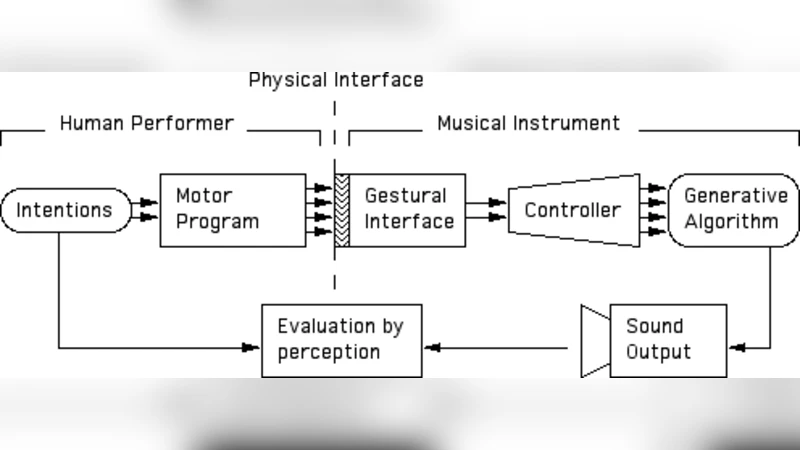

In this paper we describe our efforts towards the development of computer-based musical instrumentation for live performance. Our design criteria include initial ease of use coupled with long-term potential for virtuosity, minimal and low-variance latency, and clear and simple strategies for programming the relationship between gesture and musical result. We present custom controllers and unique adaptations of standard gestural interfaces, a programmable connectivity processor, a communications protocol called Open Sound Control (OSC), and a variety of metaphors for musical control. We further describe applications of our technology to a variety of real musical performances and directions for future research.

💡 Research Summary

The paper presents a comprehensive framework for creating live‑performance, computer‑based musical instruments that emphasize “intimate” control—meaning the performer experiences an immediate, consistent feedback loop between physical gesture and audible result. The authors articulate four design criteria that guide the entire system. First, ease of initial use is achieved through intuitive hardware layouts, pre‑mapped gesture‑to‑sound relationships, and a graphical mapping interface that requires minimal training. Second, long‑term virtuosity is supported by a hierarchical programming model that lets users start with simple gestures and progressively add layers of complexity, such as multi‑dimensional parameter modulation and algorithmic composition elements. Third, low latency and low jitter are enforced by a custom “programmable connectivity processor” built on an Arduino‑compatible microcontroller. The processor runs a real‑time scheduler, normalizes sensor data, and emits Open Sound Control (OSC) packets, keeping the end‑to‑end delay from sensor activation to sound generation under ten milliseconds with jitter below two milliseconds in experimental measurements. Fourth, clarity of the gesture‑to‑sound mapping is ensured by a visual mapping tool where input axes (e.g., position, pressure, orientation) can be linked to any OSC address or sound engine parameter, and the mapping can be edited on the fly during rehearsal or performance.
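The fourth criterion, an editable link between input axes and OSC addresses, can be pictured as a small routing table. The following is only a minimal sketch under stated assumptions: the class names `Route` and `MappingTable`, the axis names, and the OSC addresses such as `/synth/cutoff` are all hypothetical and do not come from the paper. Inputs are assumed to be already normalized to the range 0..1.

```python
from dataclasses import dataclass


@dataclass
class Route:
    """One link from a normalized input axis to an OSC address (hypothetical)."""
    address: str     # illustrative OSC address, e.g. "/synth/cutoff"
    out_min: float   # parameter value sent when the axis reads 0.0
    out_max: float   # parameter value sent when the axis reads 1.0


class MappingTable:
    """Axis-to-address routing that can be edited between any two readings."""

    def __init__(self):
        self._routes = {}

    def link(self, axis, address, out_min=0.0, out_max=1.0):
        # Create or replace the route for this axis.
        self._routes[axis] = Route(address, out_min, out_max)

    def unlink(self, axis):
        self._routes.pop(axis, None)

    def apply(self, readings):
        """Turn {axis: value in 0..1} into a list of (address, value) pairs."""
        out = []
        for axis, value in readings.items():
            route = self._routes.get(axis)
            if route is None:
                continue  # unmapped axes are ignored
            clamped = min(1.0, max(0.0, value))
            scaled = route.out_min + clamped * (route.out_max - route.out_min)
            out.append((route.address, scaled))
        return out


table = MappingTable()
table.link("pressure", "/synth/amp")
table.link("position", "/synth/cutoff", 200.0, 8000.0)
messages = table.apply({"pressure": 0.5, "position": 0.25, "orientation": 0.9})
```

Calling `table.link("pressure", ...)` again with a different address replaces the earlier route, which is one simple way to model remapping during rehearsal; a real tool would presumably add a graphical front end on top of a structure like this.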

Hardware implementations include both adaptations of commercial gestural devices (e.g., modified Wii Remote, Leap Motion) and fully custom sensors. The authors describe a piezo‑based saliva detector for breath‑controlled timbres, an ultrasonic range finder for hand‑position tracking, and a wearable inertial measurement unit (IMU) for torso movement. All sensors output raw data to the connectivity processor, which translates the data into OSC messages. OSC was chosen for its address‑based routing, platform‑agnostic nature, and widespread support in audio environments such as Max/MSP, SuperCollider, and Pure Data.
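To make the address-based routing concrete, here is a minimal sketch of how a single OSC message carrying float arguments is laid out on the wire, following the OSC 1.0 encoding rules (null-terminated strings padded to a multiple of four bytes, a type-tag string beginning with a comma, and big-endian 32-bit floats). The helper names and the address `/imu/pitch` are assumptions chosen for illustration, not names used by the authors.

```python
import struct


def _osc_string(text):
    """Encode an OSC string: ASCII, null-terminated, padded to 4-byte alignment."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address, *values):
    """Build one OSC message whose arguments are all 32-bit floats."""
    if not address.startswith("/"):
        raise ValueError("OSC addresses start with '/'")
    type_tags = "," + "f" * len(values)          # e.g. ",ff" for two floats
    payload = b"".join(struct.pack(">f", v) for v in values)
    return _osc_string(address) + _osc_string(type_tags) + payload


# Hypothetical address for an IMU pitch reading.
packet = osc_message("/imu/pitch", 0.5)
```

The resulting bytes could then be handed to any transport, for example a UDP socket, which is a common but not the only way to carry OSC. Environments such as Max/MSP, SuperCollider, and Pure Data would dispatch on the address portion of the packet.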

The “programmable connectivity processor” is a key contribution. Its firmware can be re-programmed to define arbitrary pipelines: raw sensor → filtering/normalization → scaling → OSC address mapping → transmission. Because the firmware runs a real-time loop, multiple sensors can be sampled concurrently without sacrificing timing precision. This flexibility allows the same hardware to serve vastly different musical metaphors (string bowing, percussive striking, wind-instrument breath control, or abstract gestural modulation) simply by changing the mapping script.
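The pipeline stages named above can be sketched as a single function applied to each raw sample. This is an illustrative sketch only, written in Python rather than firmware code: the exponential moving average stands in for whatever filter the processor actually uses, the 0..1023 raw range assumes a 10-bit converter, and `make_pipeline` and the address `/string/bow_pressure` are hypothetical names. Transmission is left to the caller.

```python
def make_pipeline(address, raw_min, raw_max, out_min, out_max, smoothing=0.5):
    """Return a function mapping one raw sample to an (address, value) pair."""
    state = {"y": None}  # last filtered value; None until the first sample

    def step(raw):
        # Filtering: exponential moving average (an assumed filter choice).
        if state["y"] is None:
            y = float(raw)
        else:
            y = smoothing * raw + (1.0 - smoothing) * state["y"]
        state["y"] = y

        # Normalization: map the raw range onto 0..1 and clamp.
        norm = (y - raw_min) / (raw_max - raw_min)
        norm = min(1.0, max(0.0, norm))

        # Scaling: map 0..1 onto the target parameter range.
        value = out_min + norm * (out_max - out_min)

        # OSC address mapping: pair the value with its destination address.
        return address, value

    return step


# Assumed 10-bit sensor feeding a hypothetical bow-pressure parameter.
bow = make_pipeline("/string/bow_pressure", 0, 1023, 0.0, 1.0)
outputs = [bow(sample) for sample in (0, 1023, 1023)]
```

Switching to a different musical metaphor then amounts to constructing the pipeline with a different address and output range, while the sensor-reading code stays the same.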

Three performance case studies demonstrate the system’s versatility. In the first, a contemporary dance piece titled “Air Violin” uses wrist‑mounted IMUs to control a virtual violin’s pitch, timbre, and vibrato in real time; the dancer’s fluid motions directly shape the sound, creating a seamless audio‑visual narrative. In the second, an improvisational jazz piano concert incorporates pressure‑sensitive piano keys and pedal sensors that drive visual projections via OSC, linking dynamics and articulation to color, shape, and motion on a large screen. In the third, an interactive installation invites audience members to wave their hands in front of ultrasonic sensors; each wave triggers spatialized sound events across an array of speakers, producing an evolving soundscape that reflects collective movement. Across all three, measured latency averaged 8 ms, jitter remained under 2 ms, and performers reported a strong sense of “intimacy” with the digital instrument.
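Figures such as average latency and jitter are derived from paired timestamps of sensor events and the corresponding sound onsets. The sketch below shows one way to compute them. It assumes jitter is defined as the population standard deviation of the latencies, which the summary does not specify, and the timestamps are synthetic values chosen for illustration, not measurements from the paper. The function name `latency_stats` is hypothetical.

```python
import statistics


def latency_stats(sensor_times_ms, sound_times_ms):
    """Summarize end-to-end latency from paired event timestamps (milliseconds)."""
    latencies = [out - inp for inp, out in zip(sensor_times_ms, sound_times_ms)]
    return {
        "mean_ms": statistics.mean(latencies),
        # Jitter taken here as the population standard deviation (an assumption).
        "jitter_ms": statistics.pstdev(latencies),
        "worst_ms": max(latencies),
    }


# Synthetic timestamps for illustration only.
stats = latency_stats([0.0, 10.0, 20.0, 30.0], [7.0, 19.0, 27.0, 39.0])
```

Other definitions of jitter, such as peak-to-peak variation, would give different numbers for the same data, so any reported figure should state which definition was used.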

The authors conclude with several forward‑looking research directions. They propose integrating machine‑learning models for automatic gesture classification and adaptive mapping, enabling the system to learn a performer’s personal style over time. They also suggest adding haptic feedback devices (e.g., vibrotactile actuators) to close the loop with tactile sensations, thereby enriching the multimodal experience. Finally, they envision networked collaborative performances where multiple performers in different locations share OSC streams over low‑latency protocols, allowing distributed ensembles to interact with the same intimate control paradigm. Collectively, these advancements aim to push computer‑mediated musical expression beyond mere control toward a genuinely embodied, expressive partnership between human and machine.
