An Analysis of Control Parameters of MOEA/D Under Two Different Optimization Scenarios

💡 Research Summary


This paper conducts a systematic parameter study of the decomposition‑based multi‑objective evolutionary algorithm MOEA/D, focusing on three key control parameters: population size (µ), scalarizing function (g), and the penalty parameter (θ) of the Penalty‑based Boundary Intersection (PBI) function. The novelty lies in evaluating these parameters under two distinct performance‑assessment scenarios, the second of which had not previously been used in a parameter study of MOEA/D: (1) the traditional final‑population scenario, where only the nondominated solutions present in the final population are evaluated, and (2) the reduced UEA scenario, where a predefined number of nondominated solutions are selected from an unbounded external archive (UEA) that stores all nondominated solutions discovered during the run.
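The two scenarios differ only in where the evaluated solutions come from. The sketch below is a minimal illustration, assuming minimization: an unbounded archive that keeps every nondominated objective vector seen during a run, plus a reduction step that picks a fixed number k of them. The summary does not say how the authors select that subset, so the greedy farthest-point rule in `select_subset` is a hypothetical stand-in, and all names here are illustrative.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Pareto dominance for minimization: a is no worse in every objective and better in one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


class UnboundedArchive:
    """Keeps every nondominated objective vector found so far, with no size limit."""

    def __init__(self) -> None:
        self.members: List[Point] = []

    def add(self, f: Sequence[float]) -> bool:
        p = tuple(f)
        # Reject p if it duplicates or is dominated by an existing member.
        if any(m == p or dominates(m, p) for m in self.members):
            return False
        # Remove members that p dominates, then keep p.
        self.members = [m for m in self.members if not dominates(p, m)]
        self.members.append(p)
        return True


def select_subset(archive: List[Point], k: int) -> List[Point]:
    """Hypothetical reduction step: greedily pick k well-spread members."""
    if k >= len(archive):
        return list(archive)

    def dist2(a: Point, b: Point) -> float:
        return sum((x - y) ** 2 for x, y in zip(a, b))

    # Seed with the member that is best in the first objective (an arbitrary choice).
    chosen = [min(archive, key=lambda m: m[0])]
    while len(chosen) < k:
        # Add the member whose nearest chosen neighbour is farthest away.
        rest = [m for m in archive if m not in chosen]
        chosen.append(max(rest, key=lambda m: min(dist2(m, c) for c in chosen)))
    return chosen


if __name__ == "__main__":
    archive = UnboundedArchive()
    for f in [(1.0, 4.0), (2.0, 2.0), (4.0, 1.0), (3.0, 3.0), (1.5, 3.0)]:
        archive.add(f)  # (3.0, 3.0) is rejected: (2.0, 2.0) dominates it
    print(archive.members)
    print(select_subset(archive.members, 2))  # [(1.0, 4.0), (4.0, 1.0)]
```

In the final‑population scenario only the current population would be evaluated; in the reduced UEA scenario the archive grows for the whole run and is trimmed to k solutions only at assessment time.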

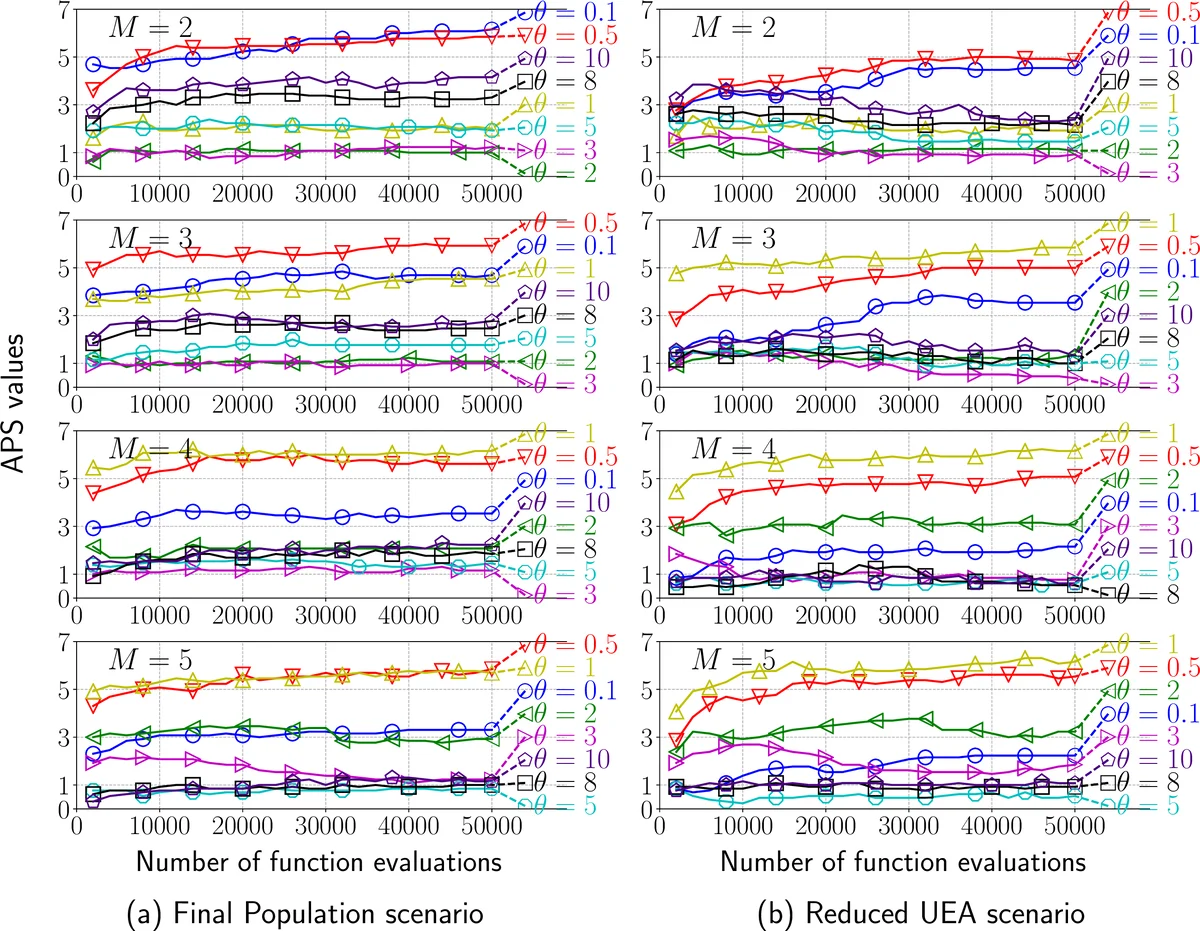

The authors test 13 benchmark problems (four DTLZ and nine WFG instances) with M = 2, 3, 4, and 5 objectives. Performance is aggregated with the Average Performance Score (APS): on each problem, a configuration's score is the number of competing configurations that perform significantly better than it over the independent runs, and APS averages this count over all problems, giving a single value per parameter configuration (lower is better).
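The APS computation itself is simple once the pairwise significance decisions are known. The sketch below assumes the usual definition (per problem, count the configurations that are significantly better; then average over problems) and takes those decisions as precomputed input, for example from a rank-sum test on a quality indicator over the runs. The function name and the toy data are hypothetical.

```python
from typing import List, Sequence


def average_performance_score(beats: Sequence[Sequence[Sequence[bool]]]) -> List[float]:
    """APS for each configuration (lower is better).

    beats[p][i][j] is True when configuration j is significantly better than
    configuration i on problem p (the statistical test is assumed to be done already).
    """
    n_problems = len(beats)
    n_configs = len(beats[0])
    scores = []
    for i in range(n_configs):
        # Performance score on problem p: how many other configurations beat i there.
        per_problem = [sum(1 for j in range(n_configs) if j != i and beats[p][i][j])
                       for p in range(n_problems)]
        # APS: average of those per-problem scores.
        scores.append(sum(per_problem) / n_problems)
    return scores


if __name__ == "__main__":
    T, F = True, False
    beats = [
        [[F, T, T], [F, F, T], [F, F, F]],  # problem 0: config 2 beats both others, 1 beats 0
        [[F, F, F], [T, F, F], [F, F, F]],  # problem 1: only config 0 beats config 1
    ]
    print(average_performance_score(beats))  # [1.0, 1.0, 0.0]
```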

Population size (µ). In the final‑population scenario, modest sizes (µ ≈ 20–40) yield the best trade‑off between convergence speed and diversity, confirming earlier recommendations. In contrast, the reduced‑UEA scenario benefits from substantially larger populations (µ ≥ 80). A larger µ creates more sub‑problems, leading to finer coverage of the objective space and consequently a richer set of nondominated solutions stored in the archive, which improves APS.
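In MOEA/D each individual owns one subproblem, defined by a weight vector, so µ equals the number of weight vectors. The summary does not state how the weights are generated; the sketch below assumes the common simplex-lattice (Das and Dennis) design, under which µ = C(H + M − 1, M − 1) for a lattice resolution H and therefore cannot be chosen freely when M ≥ 3. The function name is hypothetical.

```python
from itertools import combinations
from math import comb
from typing import List, Tuple


def simplex_lattice_weights(m: int, h: int) -> List[Tuple[float, ...]]:
    """All m-dimensional weight vectors with entries in {0, 1/h, ..., 1} that sum to 1."""
    weights = []
    # Stars and bars: placing m - 1 bars among h + m - 1 slots splits h units into m parts.
    for bars in combinations(range(h + m - 1), m - 1):
        parts, prev = [], -1
        for b in bars:
            parts.append(b - prev - 1)  # units between consecutive bars
            prev = b
        parts.append(h + m - 2 - prev)  # units after the last bar
        weights.append(tuple(p / h for p in parts))
    return weights


if __name__ == "__main__":
    # One weight vector per subproblem, so the lattice fixes the population size mu.
    for m, h in [(2, 99), (3, 12), (5, 4)]:
        mu = len(simplex_lattice_weights(m, h))
        assert mu == comb(h + m - 1, m - 1)
        print(f"M={m}, H={h}: mu={mu}")  # mu = 100, 91, 70
```

Under this design a two-objective problem admits any µ (H = µ − 1), whereas for M = 5 the nearest lattice sizes around the ranges quoted above are 70 (H = 4) and 126 (H = 5).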

Scalarizing functions. Three widely used scalarizing functions are examined: the weighted Chebyshev function in which the weights multiply the objective deviations (g_chm), its variant in which the deviations are divided by the weights (g_chd), and the PBI function (g_pbi). g_chm performs adequately on simple, convex Pareto fronts but struggles with the non‑convex, multimodal WFG problems. g_chd, which aligns each subproblem's search direction with its weight vector, provides more evenly distributed search directions and consistently outperforms g_chm on both the DTLZ and WFG suites. g_pbi's performance is highly dependent on θ; when θ is appropriately set, g_pbi achieves the best balance of convergence and diversity across most test cases.
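The three functions can be written compactly. The forms below are the conventional definitions that the names suggest (z is the ideal point, w a weight vector, minimization assumed); whether the paper uses exactly these variants, for example how g_chd handles zero weights, is an assumption.

```python
import math
from typing import Sequence

Vec = Sequence[float]


def g_chm(f: Vec, w: Vec, z: Vec) -> float:
    """Weighted Chebyshev, weights multiply the deviations: max_i w_i * |f_i - z_i|."""
    return max(wi * abs(fi - zi) for fi, wi, zi in zip(f, w, z))


def g_chd(f: Vec, w: Vec, z: Vec, eps: float = 1e-6) -> float:
    """Chebyshev variant that divides by the weights: max_i |f_i - z_i| / w_i."""
    # eps (an assumption) keeps zero weights from causing a division by zero.
    return max(abs(fi - zi) / max(wi, eps) for fi, wi, zi in zip(f, w, z))


def g_pbi(f: Vec, w: Vec, z: Vec, theta: float = 5.0) -> float:
    """Penalty-based boundary intersection: d1 + theta * d2."""
    norm_w = math.sqrt(sum(wi * wi for wi in w))
    u = [wi / norm_w for wi in w]  # unit search direction
    diff = [fi - zi for fi, zi in zip(f, z)]
    d1 = abs(sum(d * ui for d, ui in zip(diff, u)))  # distance along the direction
    d2 = math.sqrt(sum((d - d1 * ui) ** 2 for d, ui in zip(diff, u)))  # distance off it
    return d1 + theta * d2
```

With the uniform weight (0.5, 0.5), g_chm and g_chd rank solutions identically. They diverge for unequal weights: the g_chm optimum for weight w lies along the direction (1/w_1, ..., 1/w_M), whereas the g_chd optimum lies along w itself, which is why a uniform set of weights gives more evenly spaced search directions under g_chd.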

Penalty parameter (θ). For the final‑population scenario, medium to high values (θ ≈ 5–10) are optimal, emphasizing diversity to compensate for the limited number of solutions retained in the final population. In the reduced‑UEA scenario, lower values (θ ≈ 1–3) are preferable because the archive already supplies abundant diversity; a smaller θ therefore accelerates convergence without sacrificing spread.
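A small hypothetical two-objective example, not taken from the paper, shows how θ shifts PBI's preference. Take the ideal point z* = (0, 0), the unit weight direction λ = (1, 1)/√2, a candidate A = (1, 1) lying exactly on that direction, and a candidate B = (0.2, 0.9) that is closer to the ideal point but off the direction:

```latex
\begin{aligned}
g^{\mathrm{pbi}}(\mathbf{f}\mid\boldsymbol{\lambda},\mathbf{z}^*) &= d_1 + \theta\, d_2,\qquad
d_1 = (\mathbf{f}-\mathbf{z}^*)^{\top}\boldsymbol{\lambda},\qquad
d_2 = \bigl\lVert \mathbf{f}-\mathbf{z}^* - d_1\boldsymbol{\lambda} \bigr\rVert,\\
A=(1,\,1)&:\quad d_1=\sqrt{2},\quad d_2=0,\quad g^{\mathrm{pbi}}(A)=\sqrt{2},\\
B=(0.2,\,0.9)&:\quad d_1=0.55\sqrt{2},\quad d_2=0.35\sqrt{2},\quad g^{\mathrm{pbi}}(B)=(0.55+0.35\,\theta)\sqrt{2},\\
g^{\mathrm{pbi}}(B)<g^{\mathrm{pbi}}(A) &\iff 0.55+0.35\,\theta<1 \iff \theta<\tfrac{9}{7}\approx 1.29.
\end{aligned}
```

So θ = 1 prefers B while θ = 5 prefers A, even though B dominates A. A large θ keeps each subproblem's solution close to its own search direction, which helps spread but can hold back convergence; a small θ rewards proximity to the ideal point.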

Parameter interactions. The study reveals significant interactions among µ, g, and θ. When g_pbi is used, a large µ combined with a high θ can overly dilute selection pressure and slow convergence, while a small µ with a low θ converges quickly but covers the Pareto front poorly. The choice of scalarizing function modulates these effects: g_chd has no penalty parameter and is therefore unaffected by θ, whereas g_pbi is highly sensitive to it.

Key findings and practical guidelines.

- Final‑population scenario: Use µ ≈ 20–40, θ ≈ 5–10, and prefer g_chd or g_pbi. This configuration yields stable APS across all tested problems.

- Reduced‑UEA scenario: Adopt a larger µ (80–100), a low θ (1–3), and g_pbi. This setup exploits the archive’s capacity to store many nondominated solutions while maintaining fast convergence.
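The two guidelines can be recorded as a small configuration table, for example to seed a tuning run. The sketch below only restates the ranges above; the names `Guideline`, `GUIDELINES`, and `starting_point` are hypothetical, and using range midpoints as defaults is an arbitrary illustrative choice.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Guideline:
    mu_range: Tuple[int, int]         # population size (number of subproblems)
    theta_range: Tuple[float, float]  # PBI penalty parameter; ignored by g_chd
    scalarizing: Tuple[str, ...]      # preferred scalarizing functions


# Ranges restated from the two guidelines in the text.
GUIDELINES: Dict[str, Guideline] = {
    "final_population": Guideline((20, 40), (5.0, 10.0), ("g_chd", "g_pbi")),
    "reduced_uea": Guideline((80, 100), (1.0, 3.0), ("g_pbi",)),
}


def starting_point(scenario: str, g: str = "g_pbi") -> Dict[str, object]:
    """Midpoint of each recommended range; theta is set only when g uses it."""
    rule = GUIDELINES[scenario]
    if g not in rule.scalarizing:
        raise ValueError(f"{g} is not recommended for {scenario}")
    setting: Dict[str, object] = {"mu": sum(rule.mu_range) // 2, "g": g}
    if g == "g_pbi":
        setting["theta"] = sum(rule.theta_range) / 2
    return setting


if __name__ == "__main__":
    print(starting_point("final_population"))          # {'mu': 30, 'g': 'g_pbi', 'theta': 7.5}
    print(starting_point("reduced_uea"))                # {'mu': 90, 'g': 'g_pbi', 'theta': 2.0}
    print(starting_point("final_population", "g_chd"))  # {'mu': 30, 'g': 'g_chd'}
```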

The authors argue that optimal parameter settings are scenario‑dependent; a configuration tuned for the final‑population scenario does not necessarily transfer to the reduced‑UEA scenario. Consequently, practitioners should first decide which evaluation framework aligns with their application needs before performing parameter tuning.

The paper concludes by highlighting the need for adaptive parameter control mechanisms that can dynamically adjust µ, g, and θ based on the chosen scenario and problem characteristics. Future work may explore self‑adapting strategies, extensions to higher‑dimensional objective spaces, and real‑world case studies where computational budgets and archive management constraints differ markedly from benchmark settings.
