Byzantine Fault-Tolerance in Decentralized Optimization under Minimal Redundancy

This paper considers the problem of Byzantine fault-tolerance in multi-agent decentralized optimization. In this problem, each agent has a local cost function. The goal of a decentralized optimization algorithm is to allow the agents to cooperatively compute a common minimum point of their aggregate cost function. We consider the case when a certain number of agents may be Byzantine faulty. Such faulty agents may not follow a prescribed algorithm, and they may share arbitrary or incorrect information with other non-faulty agents. Presence of such Byzantine agents renders a typical decentralized optimization algorithm ineffective. We propose a decentralized optimization algorithm with provable exact fault-tolerance against a bounded number of Byzantine agents, provided the non-faulty agents have a minimal redundancy.

💡 Research Summary

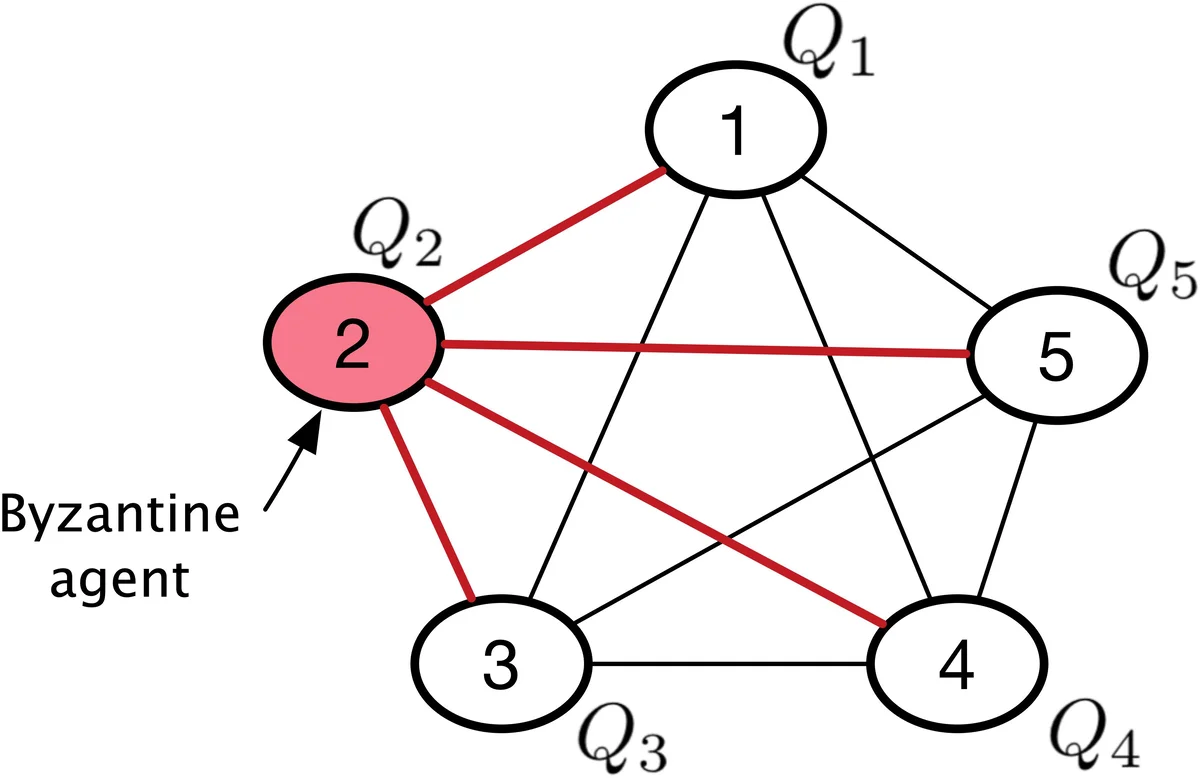

The paper tackles the challenging problem of Byzantine‑fault‑tolerant decentralized optimization in a multi‑agent setting. Each of the n agents holds a smooth, convex local cost function Q_i : ℝ^d → ℝ, and the collective goal is to compute the minimizer of the aggregate cost Σ_i Q_i(x). When up to f agents behave arbitrarily (Byzantine), they can send inconsistent or malicious messages, breaking standard consensus‑based optimization methods.

The authors introduce the notion of exact fault‑tolerance: despite the presence of Byzantine agents, every non‑faulty agent must compute the exact minimizer of the aggregate cost formed only by the non‑faulty agents, denoted x_H^*. They prove that exact fault‑tolerance is impossible without a structural redundancy condition, which they call 2f‑redundancy. This condition requires that any subset of at least n−2f non‑faulty agents shares the same minimizer as the whole set of non‑faulty agents. In practice, such redundancy appears in distributed sensing (multiple sensors observing the same phenomenon) or homogeneous learning (identical models trained on different data shards).

The core contribution is a Projected Consensus algorithm equipped with a Comparative Elimination (CE) filter (Algorithm 1). In each synchronous round:

- Broadcast (S1) – Every non‑faulty agent i broadcasts its current estimate x_i^t to all other agents. Byzantine agents may send arbitrary vectors or remain silent (treated as a zero vector).

- CE Filter (S2) – Agent i computes the Euclidean distances between its own estimate and all received estimates, sorts them, and discards the f farthest values. The remaining n−f−1 estimates (plus its own) form the filter set F_i^t.

- Projected Consensus (S3) – Agent i forms a weighted average of the differences x_i^t – m_i^t(j) over j∈F_i^t, scales it by a step size η, subtracts this term from x_i^t, and finally projects the result onto the set X_i = arg min Q_i(x) (the minimizer set of its own cost). The projection is a Euclidean projection onto a closed convex set and is non‑expansive.

The algorithm operates on a complete communication graph, ensuring every agent can directly receive messages from all others.

The theoretical analysis rests on three standard assumptions about the non‑faulty agents:

- Existence – The global minimizer x_H^* exists and is finite.

- Lipschitz smoothness – Each gradient ∇Q_i is µ‑Lipschitz continuous.

- Strong convexity – The average non‑faulty cost Q_H(x) = (1/|H|) Σ_{i∈H} Q_i(x) is λ‑strongly convex.

Under these assumptions and the 2f‑redundancy property, the authors derive a recursive inequality for the squared distance V_i^t = ‖x_i^t – x_H^*‖². By exploiting the non‑expansiveness of the projection and the geometry of the CE filter, they obtain

V_i^{t+1} ≤ V_i^t – 2η φ_i^t + η² ψ_i^t,

where φ_i^t is the norm of the averaged disagreement after filtering, and ψ_i^t is its squared norm. Using strong convexity (λ) and Lipschitz smoothness (µ) they bound φ_i^t from below and ψ_i^t from above, yielding a linear contraction

max_{i∈H} V_i^{t+1} ≤ ρ max_{i∈H} V_i^{t}, ρ∈

Comments & Academic Discussion

Loading comments...

Leave a Comment