Object condensation: one-stage grid-free multi-object reconstruction in physics detectors, graph and image data

High-energy physics detectors, images, and point clouds share many similarities in terms of object detection. However, while detecting an unknown number of objects in an image is well established in computer vision, even machine learning assisted object reconstruction algorithms in particle physics almost exclusively predict properties on an object-by-object basis. Traditional approaches from computer vision either impose implicit constraints on the object size or density and are not well suited for sparse detector data or rely on objects being dense and solid. The object condensation method proposed here is independent of assumptions on object size, sorting or object density, and further generalises to non-image-like data structures, such as graphs and point clouds, which are more suitable to represent detector signals. The pixels or vertices themselves serve as representations of the entire object, and a combination of learnable local clustering in a latent space and confidence assignment allows one to collect condensates of the predicted object properties with a simple algorithm. As proof of concept, the object condensation method is applied to a simple object classification problem in images and used to reconstruct multiple particles from detector signals. The latter results are also compared to a classic particle flow approach.

💡 Research Summary

**

The paper introduces a novel “object condensation” (OC) technique for simultaneous multi‑object reconstruction in high‑energy physics detectors, images, and point‑cloud data. Traditional computer‑vision detectors rely on anchor boxes or two‑stage pipelines that assume objects have well‑defined sizes, aspect ratios, and densities. Such assumptions break down for sparse, irregular detector hits where objects (particles) can heavily overlap and have widely varying spatial extents.

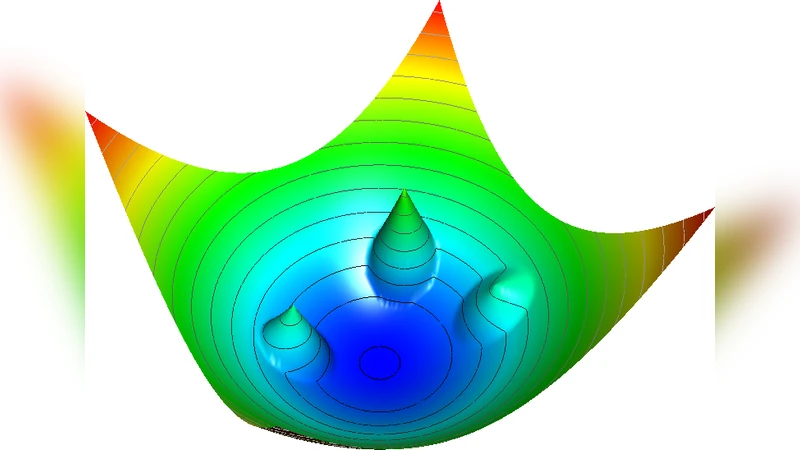

OC treats every pixel (image) or vertex (graph/point‑cloud) as a potential “condensation point” that can represent an entire object. A neural network predicts, for each vertex i, (1) a scalar β_i ∈ (0,1) indicating how likely the vertex is a condensation point, and (2) the usual object properties (class, position, momentum, etc.). β_i is transformed into a charge q_i = arctanh(2β_i) + q_min, where q_min is a small positive hyper‑parameter. The charge defines a potential field V_i(x) ∝ q_i in a learnable latent clustering space x. Vertices belonging to the same true object are attracted toward the vertex with the highest charge (α_k) via an attractive term ‖x‑x_α‖²·q_α, while vertices belonging to other objects experience a repulsive hinge term max(0,1‑‖x‑x_α‖)·q_α.

The loss combines three parts: (i) a property loss L_p weighted by arctanh(2β_i) to focus learning on high‑β vertices, (ii) a condensation loss L_β that forces exactly one high‑β vertex per object and suppresses background (β≈0) vertices, and (iii) a potential loss L_V that implements the attractive/repulsive forces. Hyper‑parameters s_c and s_B balance the relative importance of property versus clustering terms, while q_min controls the trade‑off between pure segmentation (high q_min) and precise property regression (low q_min).

Training uses a simple ground‑truth assignment: each vertex is assigned to the object it belongs to (or to background) and the vertex with maximal charge in each object becomes the target condensation point. This avoids costly edge‑wise matching or binary edge classification, keeping computational complexity linear in the number of vertices.

During inference, vertices with β above a threshold are taken as condensation‑point candidates, sorted by descending β, and each candidate gathers all vertices within a radius t_d (≈0.1–1 in the latent space). The gathered cluster inherits the property predictions of its seed condensation point, yielding a complete object description in a single pass.

The authors validate OC on two fronts. First, a toy image classification task (MNIST‑style) demonstrates that a one‑stage OC detector achieves comparable accuracy to conventional two‑stage detectors while using far fewer parameters and less compute. Second, a realistic particle‑flow reconstruction problem shows that OC can recover multiple overlapping particles from simulated detector hits with performance on par with classic Particle Flow algorithms, but with a simpler, end‑to‑end trainable pipeline. Notably, OC handles overlapping objects without requiring explicit object centers, and it naturally accommodates variable object sizes and densities.

Key insights include:

- The charge‑based potential formulation provides a differentiable clustering mechanism that is rotation‑symmetric and avoids saddle‑point traps by focusing on the highest‑charge vertex.

- The β‑driven background suppression term eliminates the need for separate noise‑filtering stages.

- The method is architecture‑agnostic; any network capable of producing per‑vertex embeddings and β can be used (e.g., CNNs for images, Graph Neural Networks for detector graphs).

Overall, object condensation offers a unified, grid‑free, single‑stage framework for multi‑object detection that bridges computer vision, high‑energy physics, and 3‑D point‑cloud analysis. Its flexibility suggests promising extensions to LiDAR perception, medical imaging, and real‑time hardware implementations where memory and latency constraints are critical. Future work may explore adaptive latent‑space dimensionality, dynamic β thresholds, and integration with attention mechanisms to further improve scalability and robustness.

Comments & Academic Discussion

Loading comments...

Leave a Comment