A dependency look at the reality of constituency

A comment on “Neurophysiological dynamics of phrase-structure building during sentence processing” by Nelson et al. (2017), Proceedings of the National Academy of Sciences USA 114(18), E3669-E3678.

💡 Research Summary

This paper offers a critical commentary on Nelson et al.’s 2017 study “Neurophysiological dynamics of phrase‑structure building during sentence processing.” Nelson and colleagues interpret their results as neural evidence for phrase‑structure building in the brain. The authors of this commentary argue that the same evidence is better explained by a dependency‑based view of syntax. They begin by contrasting the two dominant frameworks in syntactic theory: the traditional X‑bar phrase‑structure approach, which posits hierarchical tree structures, and the dependency approach, which treats syntax as a network of pairwise word‑to‑word relations. Citing Mel’čuk (2011) and other dependency scholars, they claim that phrase‑structure trees are merely epiphenomena of underlying dependencies and that the universality of constituency is questionable, especially across typologically diverse languages (Evans & Levinson, 2009).
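One way to make the “epiphenomenon” claim concrete is to read constituent‑like units directly off a dependency tree. The projection of a word (the word together with everything that depends on it, directly or indirectly) behaves like a phrase. The minimal sketch below assumes a tree encoded as a list of head indices, with `None` marking the root. The encoding and the function name `projections` are illustrative assumptions, not taken from the commentary or from Nelson et al.

```python
from typing import Dict, List, Optional


def projections(heads: List[Optional[int]]) -> Dict[int, List[int]]:
    """Return, for each word index, the sorted indices of the subtree it heads.

    heads[i] is the index of word i's head, or None for the root.
    """
    children: Dict[int, List[int]] = {i: [] for i in range(len(heads))}
    for dep, head in enumerate(heads):
        if head is not None:
            children[head].append(dep)

    def collect(node: int) -> List[int]:
        # A word's projection is the word itself plus the projections of its dependents.
        span = [node]
        for child in children[node]:
            span.extend(collect(child))
        return sorted(span)

    return {i: collect(i) for i in range(len(heads))}


# Assumed analysis: "ten" and "sad" both depend on "students", the root of this fragment.
words = ["ten", "sad", "students"]
heads = [2, 2, None]
for i, span in projections(heads).items():
    print(words[i], "->", " ".join(words[j] for j in span))
```

Under this analysis only “students” projects a multi‑word unit, and that unit is the familiar constituent “ten sad students.” For non‑projective trees a projection need not be contiguous, which is one place where the two representations come apart.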

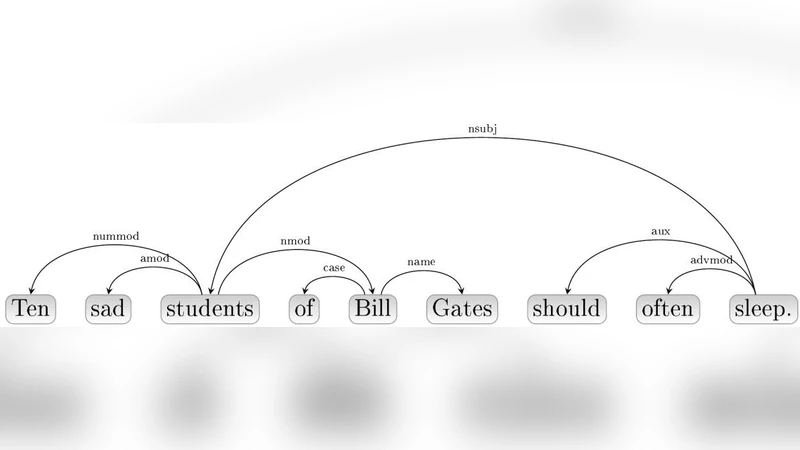

The authors then focus on methodological issues in the Nelson et al. experiment. In the original study, participants read sentences containing phrases such as “ten sad students,” and Nelson et al. report a transient decrease in neural activity that they attribute to the construction of a phrase‑structure constituent. The commentary points out, however, that the parser used by Nelson et al. is a constituency parser that temporarily treats the phrase as a unit before fully integrating it, whereas a standard dependency parser would link “ten” and “sad” directly to their head “students” as soon as that word is read. Figure 1 in the commentary illustrates this difference, showing that the dependency parser closes the open dependencies at a point where the constituency parser still holds them open. This discrepancy suggests that the observed neural dynamics may be an artifact of the chosen parsing model rather than evidence for a universal phrase‑structure mechanism.
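The dependency side of this contrast can be approximated with a short sketch. It assumes an analysis in which “ten” and “sad” both attach to “students” and counts, after each word, how many words already read are still waiting for their head. The function name `open_dependencies` and the head‑index encoding are hypothetical, chosen for illustration rather than taken from either paper.

```python
from typing import List, Optional


def open_dependencies(heads: List[Optional[int]]) -> List[int]:
    """After each word i, count the words read so far whose head has not yet appeared.

    heads[i] is the index of word i's head, or None for the root (never counted as open).
    Only dependents waiting for a later head are counted; heads waiting for
    right-hand dependents are ignored in this simplified measure.
    """
    counts = []
    for i in range(len(heads)):
        pending = sum(
            1 for j in range(i + 1)
            if heads[j] is not None and heads[j] > i
        )
        counts.append(pending)
    return counts


words = ["ten", "sad", "students"]
heads = [2, 2, None]  # "ten" -> "students", "sad" -> "students"
print(list(zip(words, open_dependencies(heads))))
# [('ten', 1), ('sad', 2), ('students', 0)]
```

Both pending links close on “students,” which is the point at which the commentary argues a dependency parser has nothing left open. A fuller incremental measure would also track heads that are still waiting for dependents to their right.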

A second major critique concerns the language models employed as baselines. Nelson et al. compare neural responses to predictions from a bigram (2‑gram) model. Because a bigram model conditions each word only on the word immediately before it, it can capture only dependencies between adjacent words, which make up roughly half of all dependencies (Liu, 2008). The commentary therefore argues that bigrams are an insufficient baseline: they ignore the long‑range dependencies that are crucial for syntactic processing. The authors advocate higher‑order n‑gram models (trigrams, 4‑grams, 5‑grams) or, better yet, neural language models that capture hierarchical structure. They also note that Nelson et al. tested two “syntactic” n‑gram variants: an unbounded model based on part‑of‑speech categories, and a syntactic n‑gram whose definition and implementation are not clearly described. The former discards lexical information, which may explain its poor performance, while the latter’s opacity hinders reproducibility and raises doubts about how realistic it is.
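The window argument can be illustrated with a minimal sketch. It assumes a dependency tree given as a list of head indices (`None` for the root) and reports what fraction of its dependencies span at most n − 1 words, i.e. fit inside a single n‑gram window. Fitting inside the window is necessary but not sufficient: an n‑gram model records co‑occurrence statistics and does not represent the dependency itself. The function name `ngram_window_coverage` is hypothetical.

```python
from typing import List, Optional


def ngram_window_coverage(heads: List[Optional[int]], n: int) -> float:
    """Fraction of dependencies whose two words fit inside one n-gram window.

    An n-gram model conditions each word on the previous n - 1 words, so a
    dependency spanning a linear distance d can only fall in view when d <= n - 1.
    """
    distances = [abs(dep - head) for dep, head in enumerate(heads) if head is not None]
    if not distances:
        return 0.0
    return sum(1 for d in distances if d <= n - 1) / len(distances)


heads = [2, 2, None]  # assumed analysis of "ten sad students"
for n in (2, 3):
    print(n, ngram_window_coverage(heads, n))
# 2 0.5
# 3 1.0
```

In this toy phrase a bigram window reaches only the “sad”→“students” link, while a trigram window also reaches “ten”→“students.” Raising n widens the window but never removes the fixed cutoff, which is why the commentary points toward neural language models as the stronger baseline.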

The commentary further highlights the limited ecological validity of the stimulus set. All sentences in the original study are ten words or fewer, which does not reflect the complexity of natural language where sentences often exceed this length and contain multiple embedded clauses. Dependency theory predicts that longer sentences will exhibit richer, more varied dependency patterns, and the authors suggest that future experiments should incorporate longer, more naturalistic sentences to test whether the neural signatures observed by Nelson et al. persist under more realistic conditions.

Finally, the authors synthesize their arguments to claim that dependency grammar offers a more parsimonious and empirically grounded account of syntactic processing. Dependency relations are directly tied to the merge operation posited by minimalist syntax, align with recent cognitive findings (Gómez‑Rodríguez, 2016), and have been shown to correlate with processing difficulty (Ferrer‑i‑Cancho, 2004). They recommend that subsequent neuro‑cognitive studies adopt dependency parsers, employ higher‑order language models, and use corpora that better reflect natural language use. By doing so, researchers can more accurately assess whether neural dynamics truly reflect phrase‑structure building or are better explained by the activation of dependency relations. This commentary thus calls for a methodological shift toward dependency‑centric analyses in the study of the neural basis of syntax.
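The link between dependency structure and processing difficulty is often operationalized through the linear distance between each word and its head. The sketch below is a minimal illustration, not the measure used in any of the cited studies. It computes mean dependency distance for two word orders of the same tree. The example sentences, their head assignments (with the particle “out” attached to “threw”), and the function names are assumptions made for illustration.

```python
from typing import List, Optional


def dependency_lengths(heads: List[Optional[int]]) -> List[int]:
    """Linear distance between each word and its head; the root (head None) is skipped."""
    return [abs(dep - head) for dep, head in enumerate(heads) if head is not None]


def mean_dependency_distance(heads: List[Optional[int]]) -> float:
    """Average dependency length over all non-root words."""
    lengths = dependency_lengths(heads)
    if not lengths:
        raise ValueError("no dependencies to average")
    return sum(lengths) / len(lengths)


# Two orders of the same illustrative tree (indices are word positions).
particle_adjacent = [1, None, 1, 4, 1]  # John threw out the trash
particle_final = [1, None, 3, 1, 1]     # John threw the trash out
print(mean_dependency_distance(particle_adjacent))  # 1.5
print(mean_dependency_distance(particle_final))     # 1.75
```

The particle‑final order has the longer average distance, the kind of difference that dependency‑length accounts associate with greater processing cost.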
