Evolutionary Architecture Search for Graph Neural Networks

💡 Research Summary

The paper introduces Genetic‑GNN, an evolutionary neural architecture search (NAS) framework designed specifically for graph neural networks (GNNs). Most NAS research has targeted convolutional networks for grid‑like data such as images. Only a few works have tackled GNN architecture search, and those (e.g., GraphNAS, Auto‑GNN) rely on reinforcement learning (RL) to generate candidate structures. These RL‑based methods have two major drawbacks. First, they hold the learning hyper‑parameters (learning rate, dropout, etc.) fixed during the search, which can yield sub‑optimal models because GNN performance is highly sensitive to these settings. Second, they must train a controller RNN and evaluate candidates sequentially, which makes the search computationally expensive and hard to scale to large search spaces.

Genetic‑GNN addresses these issues with a population‑based evolutionary algorithm that alternates between evolving GNN structures and evolving their learning hyper‑parameters. For each layer, the structure search space includes a choice of aggregator (GCN, GAT, GraphSAGE, etc.), an activation function (ReLU, LeakyReLU, ELU, etc.), and a hidden dimension size. The hyper‑parameter space covers the learning rate, dropout probability, and other training settings. The search starts from an initial population of random architectures (S₀) and associated parameter settings (P₀).
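To make the search space concrete, here is a minimal Python sketch of how the per‑layer choices and the initial population S₀/P₀ might be encoded. The candidate lists (`AGGREGATORS`, `HIDDEN_SIZES`, `LEARNING_RATES`, etc.), the `Layer`/`Params` classes, and `init_population` are hypothetical illustrations rather than the paper's actual encoding; the summary names only some options and elides the rest with "etc.". Pairing one parameter setting with each architecture is also an assumption.

```python
import random
from dataclasses import dataclass

# Hypothetical candidate values; the summary lists only some options ("etc.").
AGGREGATORS = ["gcn", "gat", "sage"]
ACTIVATIONS = ["relu", "leaky_relu", "elu"]
HIDDEN_SIZES = [16, 32, 64, 128]
LEARNING_RATES = [1e-2, 5e-3, 1e-3, 5e-4]
DROPOUTS = [0.0, 0.2, 0.4, 0.6]


@dataclass
class Layer:
    aggregator: str
    activation: str
    hidden: int


@dataclass
class Params:
    lr: float
    dropout: float


def random_structure(num_layers: int, rng: random.Random) -> list[Layer]:
    # One independent choice of aggregator, activation, and width per layer.
    return [
        Layer(rng.choice(AGGREGATORS), rng.choice(ACTIVATIONS), rng.choice(HIDDEN_SIZES))
        for _ in range(num_layers)
    ]


def random_params(rng: random.Random) -> Params:
    return Params(rng.choice(LEARNING_RATES), rng.choice(DROPOUTS))


def init_population(size: int, num_layers: int, seed: int = 0):
    # S0: random architectures; P0: one parameter setting per architecture (assumption).
    rng = random.Random(seed)
    s0 = [random_structure(num_layers, rng) for _ in range(size)]
    p0 = [random_params(rng) for _ in range(size)]
    return s0, p0


S0, P0 = init_population(size=10, num_layers=2)
```

The fixed `num_layers` argument reflects the fixed‑depth restriction the summary mentions among the limitations.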

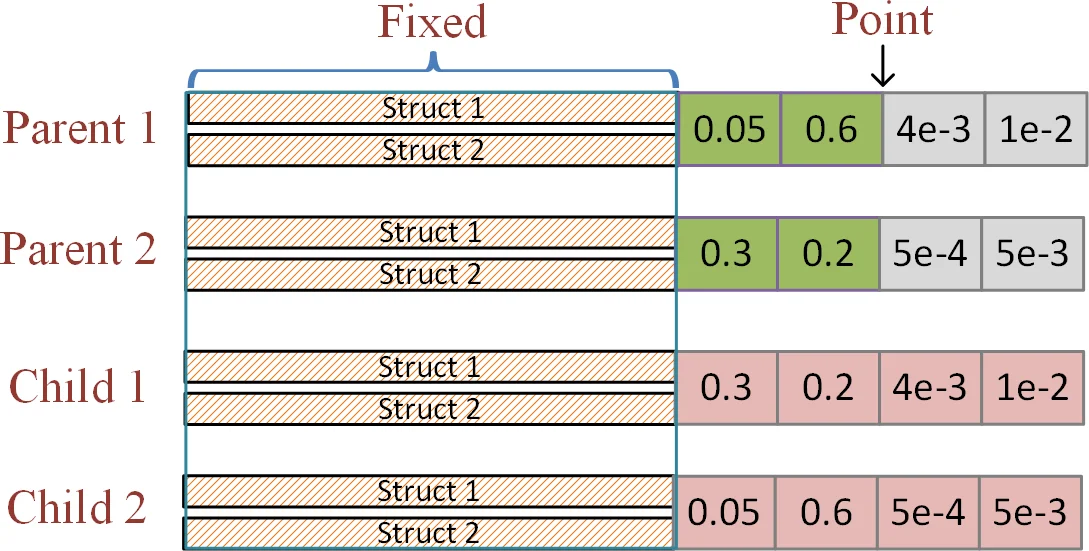

The algorithm proceeds in two alternating phases repeated for I rounds. In the parameter‑evolution phase, the current set of structures is fixed while a genetic process optimizes learning rates, dropout rates, and other parameters across the whole population; fitness is measured on a validation set. In the structure‑evolution phase, the best parameter configuration is fixed, and genetic operators (selection, crossover, mutation) modify the architectures: crossover swaps whole layers between parents, mutation replaces aggregators, changes activation functions, or adjusts hidden sizes. This alternating scheme allows structures and parameters to co‑adapt, mitigating the “structure‑parameter mismatch” that plagues fixed‑parameter searches.
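The alternating scheme can be sketched as a toy Python loop. Several assumptions are made here and labeled in the code: `toy_val_score` is a hypothetical stand‑in for training a GNN and measuring validation accuracy; truncation selection with elitism is just one possible selection operator; crossover is layer‑wise uniform (each child layer is copied whole from one parent); and in the parameter phase each setting is scored on the current best structure. None of these choices is confirmed to be the paper's.

```python
import random

# Hypothetical search space; layers are (aggregator, activation, hidden) tuples
# and parameter settings are (learning_rate, dropout) tuples.
AGGREGATORS = ["gcn", "gat", "sage"]
ACTIVATIONS = ["relu", "leaky_relu", "elu"]
HIDDEN_SIZES = [16, 32, 64, 128]
LRS = [1e-2, 5e-3, 1e-3]
DROPOUTS = [0.0, 0.3, 0.6]


def toy_val_score(structure, params):
    """Hypothetical stand-in for training a GNN and measuring validation accuracy."""
    lr, dropout = params
    s = sum((agg == "gat") + (act == "elu") - abs(hid - 64) / 128 for agg, act, hid in structure)
    return s - abs(lr - 5e-3) * 50 - abs(dropout - 0.6)


def crossover(a, b, rng):
    # Layer-wise uniform crossover: each position is copied whole from one parent.
    # Works for structures (list of layers) and parameter tuples alike.
    return type(a)(rng.choice(pair) for pair in zip(a, b))


def mutate_structure(structure, rng, p=0.2):
    out = []
    for agg, act, hid in structure:
        if rng.random() < p:
            agg = rng.choice(AGGREGATORS)   # replace the aggregator
        if rng.random() < p:
            act = rng.choice(ACTIVATIONS)   # change the activation function
        if rng.random() < p:
            hid = rng.choice(HIDDEN_SIZES)  # adjust the hidden size
        out.append((agg, act, hid))
    return out


def mutate_params(params, rng, p=0.3):
    lr, dropout = params
    if rng.random() < p:
        lr = rng.choice(LRS)
    if rng.random() < p:
        dropout = rng.choice(DROPOUTS)
    return (lr, dropout)


def evolve(pop, fitness, cross, mut, rng, elite=2):
    # Truncation selection with elitism (assumption): keep the top `elite`,
    # breed the rest of the next generation from the top half.
    ranked = sorted(pop, key=fitness, reverse=True)
    parents = ranked[: max(2, len(pop) // 2)]
    children = ranked[:elite]
    while len(children) < len(pop):
        a, b = rng.sample(parents, 2)
        children.append(mut(cross(a, b, rng), rng))
    return children


def genetic_gnn(rounds=5, pop_size=8, num_layers=2, seed=0):
    rng = random.Random(seed)
    structs = [
        [(rng.choice(AGGREGATORS), rng.choice(ACTIVATIONS), rng.choice(HIDDEN_SIZES))
         for _ in range(num_layers)]
        for _ in range(pop_size)
    ]
    params = [(rng.choice(LRS), rng.choice(DROPOUTS)) for _ in range(pop_size)]
    best_p = params[0]
    for _ in range(rounds):
        # Parameter phase: structures fixed; score each setting on the current best structure.
        best_s = max(structs, key=lambda s: max(toy_val_score(s, p) for p in params))
        params = evolve(params, lambda p: toy_val_score(best_s, p), crossover, mutate_params, rng)
        best_p = max(params, key=lambda p: toy_val_score(best_s, p))
        # Structure phase: best parameter setting fixed; evolve the architectures.
        structs = evolve(structs, lambda s: toy_val_score(s, best_p), crossover, mutate_structure, rng)
    best_s = max(structs, key=lambda s: toy_val_score(s, best_p))
    return best_s, best_p, toy_val_score(best_s, best_p)


best_structure, best_params, score = genetic_gnn()
```

Using a single `evolve` function for both phases reflects the summary's description that the same genetic machinery drives both searches; only the encoding, the mutation operator, and the fitness function change between phases.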

Because each individual model is evaluated independently, the method scales naturally to parallel GPU clusters, unlike RL‑based approaches that evaluate candidates sequentially. Experiments were conducted on standard transductive node‑classification benchmarks (Cora, Citeseer, Pubmed) and an inductive benchmark (PPI). Genetic‑GNN achieved accuracy and F1 scores comparable to or better than state‑of‑the‑art methods such as GraphNAS, Auto‑GNN, GCN, and GAT, while reducing search time by roughly 30‑50% at comparable population sizes. The parameter‑evolution component proved especially valuable: it automatically found learning rates and dropout values that prevented over‑fitting and improved generalization.
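Because candidates are independent, scoring a population reduces to a parallel map. The sketch below assigns individuals to devices round‑robin and uses a thread pool for illustration. `evaluate` is a hypothetical placeholder with a toy score rather than real GNN training, and the `cuda:i` device strings are only labels here; nothing is actually run on a GPU.

```python
from concurrent.futures import ThreadPoolExecutor


def evaluate(job):
    """Hypothetical placeholder: a real version would train the candidate GNN on
    `device` and return its validation score; here a toy formula stands in."""
    device, (structure, (lr, dropout)) = job
    score = sum(hid for _, _, hid in structure) / 1000 - 0.1 * dropout - lr
    return device, score


def evaluate_population(population, num_gpus=4):
    # Round-robin assignment: individual i is scheduled on cuda:(i % num_gpus).
    jobs = [(f"cuda:{i % num_gpus}", ind) for i, ind in enumerate(population)]
    # Candidates are independent, so they can be scored concurrently;
    # pool.map returns results in input order, aligned with `population`.
    with ThreadPoolExecutor(max_workers=num_gpus) as pool:
        return list(pool.map(evaluate, jobs))


population = [
    ([("gcn", "relu", 64)], (0.01, 0.5)),
    ([("gat", "elu", 128), ("gcn", "relu", 16)], (0.005, 0.2)),
    ([("sage", "elu", 32)], (0.001, 0.0)),
]
results = evaluate_population(population, num_gpus=2)
```

For CPU‑heavy pure‑Python evaluation, `ProcessPoolExecutor` from the same `concurrent.futures` module is the usual drop‑in alternative, since threads share one interpreter lock.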

The authors highlight three main contributions: (1) formalizing GNN architecture search as a joint optimization of structure and hyper‑parameters within an evolutionary framework; (2) proposing the alternating evolution mechanism that dynamically fits structures to parameters and vice versa; and (3) providing extensive empirical evidence that the approach is both effective and scalable for transductive and inductive graph learning tasks.

Noted limitations include sensitivity to evolutionary meta‑parameters (population size, crossover and mutation probabilities) and the current restriction to a fixed number of layers. Future work could explore adaptive layer depth and extend the method beyond node classification to tasks such as graph classification (a graph‑level task) and link prediction (an edge‑level task). In summary, Genetic‑GNN offers a practical, efficient, and high‑performing approach to automated GNN design, advancing NAS for graph‑structured data.
