A Survey of FPGA-Based Robotic Computing

💡 Research Summary

This survey paper provides a comprehensive overview of the state‑of‑the‑art in FPGA‑based acceleration for robotic computing. It begins by highlighting the rapid advances in robotics across algorithms, mechanics, and hardware, and points out the dual challenges of high computational demand and stringent power budgets that modern robots—such as manipulators, legged platforms, drones, and autonomous vehicles—must confront. While CPUs offer flexibility and GPUs deliver massive parallelism, both consume on the order of tens to hundreds of watts, which is often prohibitive for edge‑mounted robotic platforms.

The authors argue that field‑programmable gate arrays (FPGAs) occupy a unique niche: they can be customized at the hardware level to match the specific data‑flow characteristics of robotic workloads, achieving far lower latency and dramatically higher energy efficiency. A key advantage discussed is partial reconfiguration (PR), which allows portions of an FPGA to be re‑programmed at runtime without disrupting the rest of the system. PR enables time‑sharing of hardware resources among multiple robotic tasks, thereby reducing overall power consumption while preserving performance.
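The time-sharing trade-off behind PR can be illustrated with a toy model. This is only a sketch under stated assumptions: a single reconfigurable region, a fixed reconfiguration cost per swap, and made-up task names and timings. None of these numbers come from the survey.

```python
def run_time_shared(task_sequence, exec_ms, reconfig_ms):
    """Toy model of one reconfigurable region shared by several accelerators.

    task_sequence: order in which tasks request the region (hypothetical names)
    exec_ms:       dict of task name -> execution time in ms (illustrative values)
    reconfig_ms:   assumed fixed cost of swapping the region's bitstream
    """
    loaded = None      # accelerator currently configured in the region
    total_ms = 0.0
    swaps = 0
    for task in task_sequence:
        if task != loaded:
            # The region must be reprogrammed before this task can run.
            total_ms += reconfig_ms
            swaps += 1
            loaded = task
        total_ms += exec_ms[task]
    return total_ms, swaps


# Illustrative workload: two hypothetical accelerators sharing one region.
exec_ms = {"feature_extraction": 2.0, "stereo_matching": 5.0}
sequence = ["feature_extraction", "feature_extraction",
            "stereo_matching", "feature_extraction"]
total_ms, swaps = run_time_shared(sequence, exec_ms, reconfig_ms=3.0)
print(f"total time: {total_ms} ms, reconfigurations: {swaps}")
```

In this model, batching requests for the same accelerator reduces the number of swaps, which is the kind of scheduling decision a PR runtime would have to make.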

The paper structures the robotic software stack into four principal stages—sensing, perception, localization, and planning & control—and reviews how each stage can be mapped onto FPGA fabrics. In the sensing stage, FPGAs directly interface with high‑rate sensors (high‑resolution cameras, RGB‑D devices, GNSS/IMU, LiDAR, radar, sonar) and perform early‑stage preprocessing, thus alleviating I/O bottlenecks that would otherwise burden CPUs or GPUs.

For perception, the survey covers classic pipelines (HOG‑SVM, CRF‑based segmentation) as well as modern deep‑learning models (Faster‑RCNN, YOLO, SSD, Fully Convolutional Networks, PSPNet). It details techniques such as data‑flow pipelining, quantization, and on‑chip memory reuse that allow these convolutional networks to run on FPGAs with up to tenfold improvements in energy efficiency while maintaining competitive accuracy and throughput.
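As a rough illustration of the quantization idea mentioned above, the following sketch applies symmetric per-tensor quantization to a handful of weights. It is one common scheme, not necessarily the one used by any design in the survey; the function names and the sample weights are assumptions made for the example.

```python
def quantize_symmetric(weights, bits=8):
    """Map floats to signed integers using a single shared scale factor."""
    qmax = 2 ** (bits - 1) - 1                  # 127 for 8-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the integer codes."""
    return [v * scale for v in q]


weights = [0.52, -1.27, 0.003, 0.9]             # illustrative values only
q, scale = quantize_symmetric(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Narrow integer arithmetic of this kind is what lets a convolution map onto compact fixed-point multipliers; the price is a rounding error of at most half a quantization step per weight.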

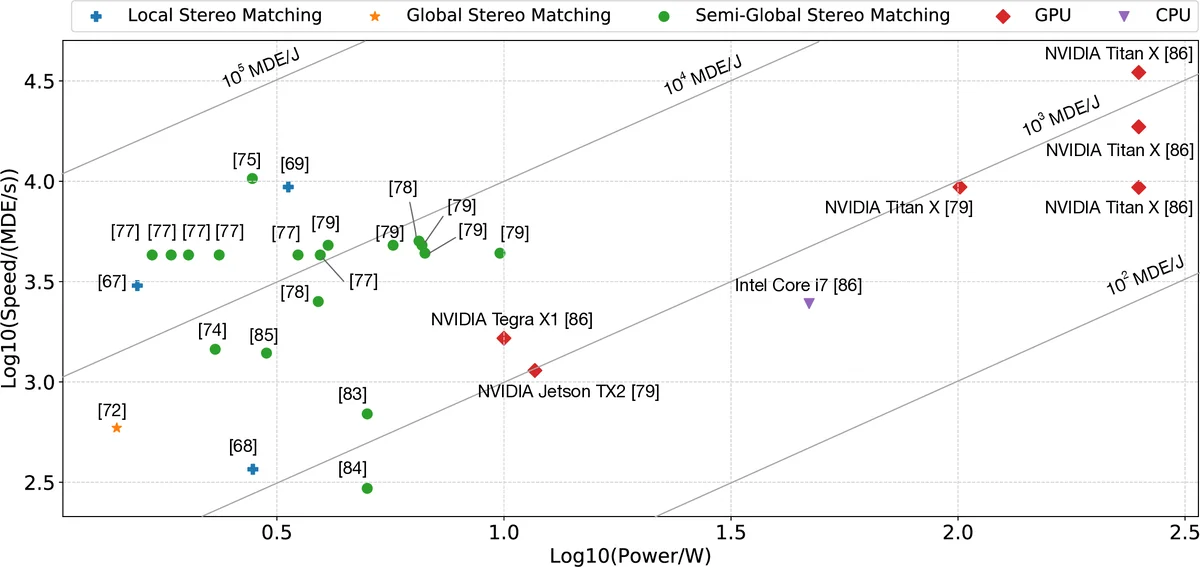

Localization is examined through sensor‑fusion algorithms (Kalman filters, particle filters), LiDAR‑based SLAM, and vision‑based stereo triangulation. The authors illustrate FPGA implementations that sustain update rates exceeding 100 Hz, essential for high‑speed drones and autonomous cars, and discuss robustness issues such as weather‑induced LiDAR degradation and GNSS signal blockage.
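The predict/update cycle at the heart of Kalman-filter sensor fusion can be sketched for the simplest case, a single scalar state. This is a minimal textbook form, assuming a constant-state model with hand-picked noise variances; the measurement sequence is synthetic and not taken from the survey.

```python
def kalman_1d(measurements, meas_var, process_var, x0=0.0, p0=1.0):
    """Scalar Kalman filter for a state assumed to be (nearly) constant.

    meas_var and process_var are assumed noise variances chosen for illustration.
    Returns the list of estimates and the final estimate variance.
    """
    x, p = x0, p0
    estimates = []
    for z in measurements:
        # Predict: the state is unchanged, but uncertainty grows.
        p = p + process_var
        # Update: blend prediction and measurement using the Kalman gain.
        k = p / (p + meas_var)
        x = x + k * (z - x)
        p = (1.0 - k) * p
        estimates.append(x)
    return estimates, p


# Synthetic measurements of a true value 5.0 with alternating +/-0.3 error.
measurements = [5.0 + (0.3 if i % 2 == 0 else -0.3) for i in range(50)]
estimates, final_var = kalman_1d(measurements, meas_var=1.0, process_var=1e-4)
print(estimates[-1], final_var)
```

Each iteration is a short, fixed sequence of multiply-add operations, which is one reason such filters lend themselves to pipelined hardware at high update rates.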

In the planning and control domain, the paper surveys both low‑dimensional graph‑search methods (A*, Dijkstra) and high‑dimensional sampling‑based planners (RRT, PRM). It also reviews decision‑making frameworks based on Markov Decision Processes (MDPs) and Partially Observable MDPs (POMDPs), as well as reinforcement‑learning approaches (Q‑learning, actor‑critic, policy gradients). FPGA implementations of these algorithms achieve control‑loop latencies on the order of tens of microseconds, well below those of typical CPU‑based solutions.
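For readers unfamiliar with the graph-search planners named above, here is a compact software sketch of A* on a 4-connected grid with a Manhattan-distance heuristic. It is a plain reference version and says nothing about how the surveyed FPGA designs are organized; the grid layout and function name are assumptions made for the example.

```python
import heapq


def astar(grid, start, goal):
    """A* on a grid given as a list of strings, where '#' marks an obstacle.

    Returns the list of (row, col) cells from start to goal, or None if
    the goal is unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance: admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]   # entries are (f = g + h, g, cell)
    best_cost = {start: 0}
    came_from = {}
    while open_heap:
        _, cost, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = [cell]
            while cell in came_from:
                cell = came_from[cell]
                path.append(cell)
            return path[::-1]
        if cost > best_cost[cell]:
            continue                     # stale heap entry, already improved
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#":
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (new_cost + h(nxt), new_cost, nxt))
    return None


grid = ["..#.",
        "..#.",
        "...."]
path = astar(grid, start=(0, 0), goal=(0, 3))
print(path)
```

The priority-queue operations and neighbor expansion dominate the run time of this loop, so they are the natural candidates for hardware acceleration.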

The authors present two real‑world case studies. The first describes PerceptIn’s commercial autonomous‑micromobility vehicles, where an FPGA‑centric architecture handles heterogeneous sensor synchronization and accelerates critical perception pipelines, achieving a 30% reduction in power consumption and a 40% cut in end‑to‑end latency. The second focuses on space‑grade FPGAs used in satellites and planetary rovers, emphasizing radiation tolerance, rapid design cycles, and cost savings while delivering real‑time image processing and path planning in harsh environments.

Finally, the survey identifies open research challenges: (1) the need for more mature high‑level synthesis (HLS) tools to bridge algorithmic description and efficient hardware generation; (2) runtime scheduling and security mechanisms for dynamic partial reconfiguration; (3) heterogeneous integration of FPGAs with ASIC‑based neural‑processing units to exploit the strengths of both; and (4) the establishment of standardized robotic workload benchmarks to enable fair performance comparison. By addressing these issues, the authors contend that FPGAs will evolve from niche accelerators to a central computing substrate capable of replacing or complementing CPUs and GPUs across the full spectrum of robotic applications.
