Audio Watermarking with Error Correction

In recent years, communication over the Internet has greatly facilitated the distribution of multimedia data. Although this is indubitably a boon, it has also fueled the notorious problem of online music piracy. Copyrighted audio can also be deliberately altered and thus misused. Copyright violation through unauthorized distribution, as well as unauthorized tampering with copyrighted audio data, is an important technological and research issue. Audio watermarking has been proposed as a solution to this issue. Its main purpose is to protect audio data against such threats: in case of copyright violation or unauthorized tampering, the embedded watermark allows the authenticity of the data to be verified.

💡 Research Summary

The paper addresses the problem of protecting digital audio from copyright infringement and unauthorized tampering by embedding a watermark into audio signals and providing a mechanism for error‑corrected transmission. After a broad introduction that highlights the proliferation of multimedia distribution over the Internet and the consequent rise in piracy, the authors position audio watermarking as an “imperceptible, robust and secure” means of asserting ownership and detecting illicit modifications.

The core contribution is a set of three embedding scenarios: (1) Audio‑in‑Audio, where both the host (cover) and the watermark are audio streams; (2) Audio‑in‑Image, where an audio watermark is hidden inside a still image; and (3) Image‑in‑Audio, where an image is embedded into an audio carrier. For each scenario the authors propose two technical approaches: (a) simple interleaving of watermark samples among host samples, and (b) manipulation in the Discrete Cosine Transform (DCT) domain. In the DCT‑based method the high‑frequency DCT coefficients of the host are replaced with low‑frequency coefficients of the watermark (or vice‑versa, depending on the scenario). The inverse DCT (IDCT) is then applied to reconstruct the watermarked signal.
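The summary does not specify the interleaving pattern. As a minimal sketch, assuming (hypothetically) that one watermark sample is inserted after every `step` host samples and that the receiver knows `step` and the watermark length, embedding and recovery could look like this. The names `interleave` and `deinterleave` are illustrative, not taken from the paper.

```python
from typing import List, Tuple


def interleave(host: List[float], mark: List[float], step: int) -> List[float]:
    """Insert one watermark sample after every `step` host samples.

    Hypothetical pattern: the paper does not specify its interleaving layout.
    """
    if step < 1:
        raise ValueError("step must be >= 1")
    if len(mark) > len(host) // step:
        raise ValueError("host is too short to carry the whole watermark")
    out: List[float] = []
    k = 0  # index of the next watermark sample to insert
    for i, sample in enumerate(host):
        out.append(sample)
        # After each complete group of `step` host samples, slot in one mark sample
        if (i + 1) % step == 0 and k < len(mark):
            out.append(mark[k])
            k += 1
    return out


def deinterleave(signal: List[float], step: int, n_mark: int) -> Tuple[List[float], List[float]]:
    """Undo interleave(): split the signal back into host and watermark samples."""
    host: List[float] = []
    mark: List[float] = []
    pos = 0
    while pos < len(signal):
        group = signal[pos:pos + step]
        host.extend(group)
        pos += len(group)
        # A mark sample follows each full group until all n_mark are recovered
        if len(group) == step and len(mark) < n_mark:
            mark.append(signal[pos])
            pos += 1
    return host, mark
```

With `host = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6]`, `mark = [0.9, 0.8]` and `step = 2`, the watermarked stream is `[0.1, 0.2, 0.9, 0.3, 0.4, 0.8, 0.5, 0.6]`. Note that this form of interleaving lengthens the signal by one sample per `step` host samples, and recovery is exact only if the channel leaves the samples untouched.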

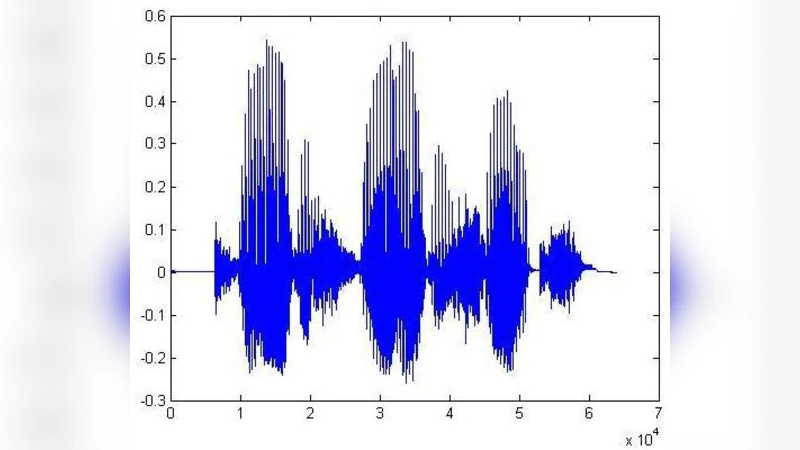
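The DCT-domain variant can likewise be sketched in plain Python. This sketch assumes an orthonormal DCT-II over the whole signal (no framing), that the `n_coeffs` highest-frequency host coefficients are overwritten by the `n_coeffs` lowest-frequency watermark coefficients, and a hypothetical scaling factor `alpha` standing in for the undisclosed embedding strength. None of these choices is confirmed by the paper, and `embed_dct`/`extract_dct` are illustrative names.

```python
import math
from typing import List


def _scale(k: int, n: int) -> float:
    """Orthonormal DCT scaling factor for coefficient k of an n-point transform."""
    return math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)


def dct(x: List[float]) -> List[float]:
    """Orthonormal DCT-II (direct O(N^2) sum, for clarity rather than speed)."""
    n = len(x)
    return [
        _scale(k, n) * sum(x[i] * math.cos(math.pi * (2 * i + 1) * k / (2 * n)) for i in range(n))
        for k in range(n)
    ]


def idct(c: List[float]) -> List[float]:
    """Inverse of dct(): the orthonormal DCT-III."""
    n = len(c)
    return [
        sum(_scale(k, n) * c[k] * math.cos(math.pi * (2 * i + 1) * k / (2 * n)) for k in range(n))
        for i in range(n)
    ]


def embed_dct(host: List[float], mark: List[float], n_coeffs: int, alpha: float = 1.0) -> List[float]:
    """Overwrite the n_coeffs highest-frequency host coefficients with the
    n_coeffs lowest-frequency watermark coefficients, scaled by alpha (hypothetical)."""
    if not 0 <= n_coeffs <= min(len(host), len(mark)):
        raise ValueError("n_coeffs must fit in both host and watermark")
    h = dct(host)
    w = dct(mark)
    h[len(h) - n_coeffs:] = [alpha * v for v in w[:n_coeffs]]
    return idct(h)


def extract_dct(watermarked: List[float], n_coeffs: int, mark_len: int, alpha: float = 1.0) -> List[float]:
    """Read back the stored coefficients and rebuild the watermark via the IDCT."""
    c = dct(watermarked)
    w = [0.0] * mark_len  # coefficients that were not embedded stay zero
    w[:n_coeffs] = [v / alpha for v in c[len(c) - n_coeffs:]]
    return idct(w)
```

Because the watermark coefficients are stored directly (apart from `alpha`), extraction in this sketch does not need the original host. If `n_coeffs` is smaller than the watermark length, only a low-pass approximation of the watermark is recovered. A practical implementation would use a fast transform such as `scipy.fft.dct(x, norm="ortho")` and process the audio frame by frame.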

Performance evaluation relies exclusively on Mean Squared Error (MSE). The authors argue that MSE is simple, memory‑less, physically meaningful, convex, and widely used in signal‑processing optimization. Reported MSE values are on the order of 10⁻⁴ to 10⁻³ for audio‑in‑audio interleaving and DCT embedding, and as low as 2.47 × 10⁻⁹ for the image‑related cases. Spectral plots of the original, watermarked, and recovered signals are provided, but no other objective quality metrics (e.g., PSNR, SNR), subjective listening scores (MOS), or statistical significance tests are presented.
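For reference, the MSE between a reference signal x and a test signal y of length N is (1/N) Σ (x[n] − y[n])². The sketch below computes it alongside SNR and PSNR, two of the metrics noted above as missing. The PSNR helper assumes samples normalized to a peak amplitude of 1.0; that is an assumption, not a detail from the paper.

```python
import math
from typing import Sequence


def _check(ref: Sequence[float], test: Sequence[float]) -> None:
    if len(ref) != len(test) or len(ref) == 0:
        raise ValueError("signals must be non-empty and equal in length")


def mse(ref: Sequence[float], test: Sequence[float]) -> float:
    """Mean squared error: average of the squared sample differences."""
    _check(ref, test)
    return sum((a - b) ** 2 for a, b in zip(ref, test)) / len(ref)


def snr_db(ref: Sequence[float], test: Sequence[float]) -> float:
    """Signal-to-noise ratio in dB, treating (test - ref) as the noise."""
    _check(ref, test)
    noise = sum((a - b) ** 2 for a, b in zip(ref, test))
    if noise == 0:
        return math.inf
    signal = sum(a * a for a in ref)
    if signal == 0:
        return -math.inf  # silent reference: any error dominates
    return 10.0 * math.log10(signal / noise)


def psnr_db(ref: Sequence[float], test: Sequence[float], peak: float = 1.0) -> float:
    """Peak SNR in dB; peak=1.0 assumes samples normalized to [-1, 1]."""
    err = mse(ref, test)
    if err == 0:
        return math.inf
    return 10.0 * math.log10(peak * peak / err)
```

Because SNR normalizes the error by the signal energy, it makes results comparable across signals of different loudness, which a raw MSE value does not.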

To address channel errors, the authors incorporate a (15, 11) Hamming code, which can correct any single‑bit error within a 15‑bit codeword (correcting single‑bit errors and detecting double‑bit errors at the same time would require the extended (16, 11) code). They simulate the addition of noise to the transmitted watermarked signal and compare the recovered audio with and without error correction. The MSE after Hamming decoding is shown to be substantially lower, and waveform figures show a visibly cleaner reconstruction. However, the noise model is not described (e.g., Gaussian or burst noise, SNR range), and no comparison with other coding schemes (e.g., Reed‑Solomon, convolutional codes) is made.
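A (15, 11) Hamming code can be sketched with the classic positional construction: parity bits at positions 1, 2, 4 and 8, data bits at the remaining eleven positions, and a syndrome equal to the XOR of the positions of all set bits. This is a generic textbook construction, not necessarily the bit layout the authors used; the function names are illustrative.

```python
from typing import List, Tuple

PARITY_POS = (1, 2, 4, 8)  # powers of two hold the parity bits
DATA_POS = [p for p in range(1, 16) if p not in PARITY_POS]  # the other 11 positions


def hamming15_encode(data: List[int]) -> List[int]:
    """Encode 11 data bits into a 15-bit Hamming codeword (positions 1..15)."""
    if len(data) != 11 or any(b not in (0, 1) for b in data):
        raise ValueError("expected exactly 11 bits")
    code = [0] * 16  # index 0 unused so list index == bit position
    for pos, bit in zip(DATA_POS, data):
        code[pos] = bit
    for p in PARITY_POS:
        # Parity bit p gives even parity over all positions whose index has bit p set
        code[p] = sum(code[i] for i in range(1, 16) if i & p) % 2
    return code[1:]


def hamming15_decode(word: List[int]) -> Tuple[List[int], int]:
    """Correct up to one flipped bit; return (11 data bits, syndrome)."""
    if len(word) != 15:
        raise ValueError("expected exactly 15 bits")
    code = [0] + list(word)
    syndrome = 0
    for i in range(1, 16):
        if code[i]:
            syndrome ^= i  # XOR of set positions is 0 for a valid codeword
    if syndrome:
        code[syndrome] ^= 1  # a single error sits exactly at the syndrome position
    return [code[p] for p in DATA_POS], syndrome
```

Flipping any one of the 15 bits yields a syndrome equal to that bit's position, so the decoder restores the data. With two flipped bits the syndrome is still nonzero and points at a third, correct bit, which the decoder then flips; this is why the plain (15, 11) code cannot correct single errors and detect double errors simultaneously.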

The paper’s strengths lie in its clear, step‑by‑step description of the embedding pipelines and the inclusion of a basic error‑correction layer, which together form a complete transmission chain from watermark generation to reception. The use of both interleaving and DCT demonstrates awareness of trade‑offs between computational simplicity and perceptual robustness.

Nevertheless, several critical shortcomings limit the scientific impact:

- Novelty – The combination of interleaving or DCT‑based embedding with Hamming codes has been explored in earlier works (e.g., Liu & Lu 2009; Yan et al. 2009). The paper does not articulate a distinct algorithmic improvement or a new theoretical insight.

- Evaluation Scope – Relying solely on MSE is insufficient for audio watermarking, where human auditory perception is paramount. Without PSNR, SNR, Bit Error Rate (BER) for the extracted watermark, or listening tests (Mean Opinion Score), it is impossible to judge imperceptibility and robustness.

- Robustness Tests – No experiments are conducted under common attacks such as MP3/AAC compression, resampling, low‑pass filtering, or time‑scale modification. Consequently, the claim of “robustness” remains unsubstantiated.

- Parameter Disclosure – Critical parameters such as the embedding strength, quantization step, proportion of DCT coefficients replaced, and payload rate (bits per second) are omitted, preventing reproducibility.

- Error‑Correction Detail – The paper mentions a (15, 11) Hamming code but does not explain how the watermark bits are mapped to codewords, nor does it discuss latency, overhead, or the impact on payload capacity.

- Presentation Quality – The manuscript contains numerous typographical errors, inconsistent terminology, and duplicated text, which detracts from its credibility.
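Two of the missing quantities are straightforward to state. BER is the fraction of watermark bits that differ after extraction, and the (15, 11) code carries 11 information bits per 15 transmitted bits (rate 11/15 ≈ 0.733), i.e. 4 parity bits per 11 data bits, roughly 36% overhead. The sketch below computes both; the 1500‑bit raw capacity in the example is a hypothetical figure, not one reported in the paper.

```python
from fractions import Fraction
from typing import Sequence


def bit_error_rate(sent: Sequence[int], received: Sequence[int]) -> float:
    """Fraction of positions where the extracted bit differs from the embedded bit."""
    if len(sent) != len(received) or not sent:
        raise ValueError("bit sequences must be non-empty and equal in length")
    errors = sum(1 for a, b in zip(sent, received) if a != b)
    return errors / len(sent)


def code_rate(n: int = 15, k: int = 11) -> Fraction:
    """Information bits per transmitted bit for an (n, k) block code."""
    return Fraction(k, n)


def payload_bits(raw_bits: int, n: int = 15, k: int = 11) -> int:
    """Information bits carried when raw_bits channel bits are split into
    whole (n, k) codewords; leftover channel bits are left unused."""
    return (raw_bits // n) * k


# Hypothetical example: a watermark channel with 1500 raw bits of capacity.
raw = 1500
print(code_rate())            # 11/15
print(payload_bits(raw))      # 1100
print(Fraction(15 - 11, 11))  # parity overhead per data bit: 4/11
```

Reporting BER on the extracted watermark bits, before and after decoding, together with the effective payload after coding overhead, would make the error‑correction results directly comparable with other schemes.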

In conclusion, while the work presents a functional framework for audio watermarking with a simple error‑correction layer, it falls short of delivering a rigorous, novel contribution to the field. Future research should integrate perceptual models for adaptive embedding, evaluate against a comprehensive set of attacks, employ richer performance metrics, and compare alternative forward error‑correction schemes to substantiate any claimed improvements in robustness and audio quality.
