Improved Modeling of 3D Shapes with Multi-view Depth Maps

💡 Research Summary

The paper proposes a simple yet powerful framework for modeling 3D shapes using multi‑view depth maps, leveraging recent advances in 2D image generation networks. The authors argue that depth maps provide a memory‑efficient, image‑like representation that can be processed directly with convolutional neural networks (CNNs), avoiding the heavy computational costs associated with voxel grids, point clouds, meshes, or implicit functions.

The architecture consists of two main components: an Identity Encoder and a Viewpoint Generator. The Identity Encoder receives one or more depth maps of an object and produces a view‑independent latent vector. During training, several randomly chosen viewpoints of the same object are encoded and their embeddings are averaged, which serves as an empirical estimate of the expected embedding over viewpoints. The resulting single identity code is meant to capture shape information while discarding viewpoint cues. The encoder follows a standard hierarchical Conv‑Block design, progressively halving spatial resolution until a 4×4 feature map is reached.
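The averaging step can be illustrated with a minimal sketch. It assumes each view has already been encoded into a fixed-length vector; the function name `average_identity_code` and the 4-dimensional toy embeddings are hypothetical and not taken from the paper.

```python
from typing import List, Sequence


def average_identity_code(view_embeddings: Sequence[Sequence[float]]) -> List[float]:
    """Element-wise mean of per-view embeddings, used as one identity code."""
    if not view_embeddings:
        raise ValueError("need at least one view embedding")
    dim = len(view_embeddings[0])
    if any(len(e) != dim for e in view_embeddings):
        raise ValueError("all embeddings must have the same dimension")
    n = len(view_embeddings)
    return [sum(e[i] for e in view_embeddings) / n for i in range(dim)]


# Hypothetical 4-dimensional embeddings of three views of the same object.
views = [
    [1.0, 0.0, 2.0, 4.0],
    [3.0, 0.0, 2.0, 0.0],
    [2.0, 3.0, 2.0, 2.0],
]
identity = average_identity_code(views)  # [2.0, 1.0, 2.0, 2.0]
```

With a single input view the mean reduces to that view's embedding, which is consistent with the encoder accepting one or more depth maps.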

The Viewpoint Generator is built upon the StyleGAN‑v2 architecture. Instead of starting from a random latent vector, it begins with a learned constant tensor (256×4×4) and injects the identity code via a multi‑layer perceptron (MLP) that produces a style vector. This style vector modulates each synthesis block through Adaptive Instance Normalization (AdaIN). A separate learned category embedding (also 256×4×4) enables class‑conditional generation, allowing a single model to handle multiple object categories. The generator outputs a discrete depth map: depth values are quantized to 8‑bit integers (0‑255), and a softmax layer produces a probability distribution over these 256 possible values. Training uses label‑smoothed cross‑entropy (implemented as KL‑divergence) to mitigate over‑confidence and improve stability.
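The discrete output and its loss can be sketched for a single pixel as follows. This is an illustration under stated assumptions, not the authors' implementation: the depth range `near`/`far`, the smoothing strength `eps = 0.1`, and all function names are hypothetical, and the smoothing mass is assumed to be spread uniformly over the other 255 bins.

```python
import math
from typing import List, Sequence

NUM_BINS = 256  # 8-bit depth levels, as described above


def quantize_depth(depth: float, near: float, far: float) -> int:
    """Map a continuous depth in [near, far] to an integer bin in [0, 255]."""
    t = (depth - near) / (far - near)
    t = min(max(t, 0.0), 1.0)  # clamp depths outside the assumed range
    return round(t * (NUM_BINS - 1))


def smoothed_target(bin_index: int, eps: float = 0.1) -> List[float]:
    """Label-smoothed one-hot target: 1 - eps on the true bin, eps shared by the rest."""
    off_value = eps / (NUM_BINS - 1)
    target = [off_value] * NUM_BINS
    target[bin_index] = 1.0 - eps
    return target


def log_softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable log-softmax over the 256 depth bins."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def kl_divergence(target: Sequence[float], log_probs: Sequence[float]) -> float:
    """KL(target || predicted) for one pixel, skipping zero-probability target bins."""
    return sum(p * (math.log(p) - lq) for p, lq in zip(target, log_probs) if p > 0.0)


# A pixel at the far plane falls into the last bin (index 255).
target = smoothed_target(quantize_depth(2.0, near=1.0, far=2.0))
uniform_logits = [0.0] * NUM_BINS
loss = kl_divergence(target, log_softmax(uniform_logits))
```

For a fixed smoothed target, the KL divergence differs from the cross-entropy only by the target's entropy, which is constant with respect to the network, so both give the same gradients.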

Key technical innovations include:

- Discrete depth representation – Quantizing continuous depth to 256 levels dramatically reduces memory consumption and simplifies the loss formulation.

- Averaging heuristic for identity extraction – By averaging embeddings from multiple viewpoints, the encoder is forced to learn view‑invariant shape features without explicit supervision.

- Adaptation of StyleGAN‑v2 to depth‑map generation – The use of AdaIN and a constant input tensor allows the network to inherit the high‑quality synthesis capabilities of modern 2D generators while remaining fully compatible with depth‑map data.

- Implicit Maximum Likelihood Estimation (IMLE) for generative modeling – After training the encoder‑decoder, the authors fit an IMLE model to the latent identity space, enabling sampling of novel shapes without the instability issues of GANs (mode collapse, vanishing gradients).
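The core matching step of IMLE, mentioned in the last item above, can be sketched as follows: draw a pool of noise vectors, map them through the generator, and pair every real identity code with its nearest generated sample. The sketch is a simplification under assumptions; `toy_generator`, the squared Euclidean distance, and the pool size are hypothetical, and the gradient update of a real generator is omitted.

```python
import random
from typing import Callable, List, Sequence, Tuple

Vector = List[float]


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def imle_matches(
    targets: Sequence[Vector],
    generator: Callable[[Vector], Vector],
    noise_dim: int,
    num_samples: int,
    rng: random.Random,
) -> List[Tuple[Vector, float]]:
    """For each target code, return the noise vector whose generated sample
    is nearest to it, together with that squared distance."""
    noises = [[rng.gauss(0.0, 1.0) for _ in range(noise_dim)] for _ in range(num_samples)]
    samples = [generator(z) for z in noises]
    matches = []
    for target in targets:
        dists = [squared_distance(target, s) for s in samples]
        best = min(range(num_samples), key=dists.__getitem__)
        matches.append((noises[best], dists[best]))
    return matches


def toy_generator(z: Vector) -> Vector:
    """Hypothetical stand-in for a learned generator network."""
    return [2.0 * v for v in z]


identity_codes = [[0.0, 0.0], [1.0, -1.0]]  # toy stand-ins for encoder outputs
matches = imle_matches(identity_codes, toy_generator, noise_dim=2,
                       num_samples=8, rng=random.Random(0))
imle_loss = sum(d for _, d in matches) / len(matches)
```

In training, `imle_loss` would be minimized with respect to the generator's parameters. Because every real code is matched to some sample, no data point can be ignored, which is the usual argument for why IMLE avoids mode collapse.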

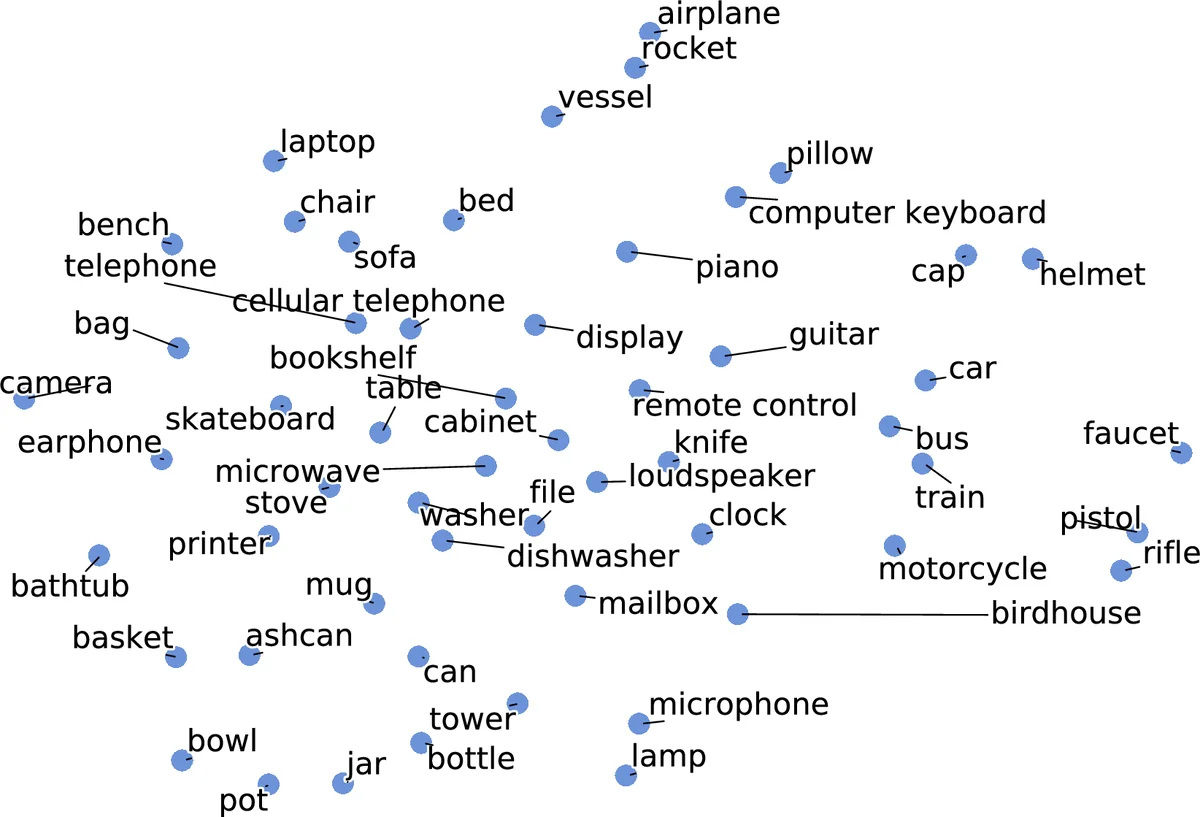

Experiments are conducted on ShapeNet across several categories (chairs, tables, cars, etc.). On quantitative metrics (Chamfer Distance, Earth Mover’s Distance, Intersection‑over‑Union), the proposed method outperforms the voxel‑based 3DGAN, the point‑cloud‑based PointFlow, and the implicit‑function‑based DeepSDF and Occupancy Networks, while using far less GPU memory. The reported training configuration uses a batch size of 32 and a learning rate of 0.004. The method also matches or exceeds class‑specific models despite being trained as a single class‑conditional network.
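For readers unfamiliar with the first metric, the following is a minimal brute-force sketch of a symmetric Chamfer Distance between two point sets. Conventions differ between papers (squared versus unsquared distances, sums versus means), so the variant below, the mean squared nearest-neighbour distance in both directions, is an assumption and not necessarily the one used in the evaluation.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def _sq_dist(p: Point, q: Point) -> float:
    return sum((a - b) ** 2 for a, b in zip(p, q))


def chamfer_distance(a: Sequence[Point], b: Sequence[Point]) -> float:
    """Mean squared nearest-neighbour distance from a to b plus from b to a."""
    if not a or not b:
        raise ValueError("both point sets must be non-empty")
    a_to_b = sum(min(_sq_dist(p, q) for q in b) for p in a) / len(a)
    b_to_a = sum(min(_sq_dist(q, p) for p in a) for q in b) / len(b)
    return a_to_b + b_to_a


cloud_a = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
cloud_b = [(0.0, 0.0, 0.0), (1.0, 0.0, 1.0)]
print(chamfer_distance(cloud_a, cloud_b))  # 1.0
```

The brute-force search is quadratic in the number of points; practical evaluations typically use a spatial index or a GPU implementation.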

Qualitative results demonstrate that a single input depth map suffices to reconstruct a dense multi‑view depth representation, and that linear interpolation between two identity vectors yields smooth shape morphing. The authors also visualize generated depth maps by converting them to point clouds and rendering these with a standard renderer; the renderings show that high‑frequency surface details are preserved.
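The interpolation between identity vectors amounts to a convex combination of the two codes. The sketch below uses hypothetical helper names and toy 3-dimensional codes; in the actual pipeline each interpolated code would be passed to the Viewpoint Generator to produce depth maps.

```python
from typing import List, Sequence


def lerp_codes(a: Sequence[float], b: Sequence[float], t: float) -> List[float]:
    """Linear interpolation (1 - t) * a + t * b between two identity codes."""
    if len(a) != len(b):
        raise ValueError("codes must have the same dimension")
    return [(1.0 - t) * x + t * y for x, y in zip(a, b)]


def interpolation_path(a: Sequence[float], b: Sequence[float], steps: int) -> List[List[float]]:
    """Evenly spaced codes from a to b, endpoints included."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    return [lerp_codes(a, b, i / (steps - 1)) for i in range(steps)]


code_a = [0.0, 4.0, -2.0]  # toy identity code of shape A
code_b = [2.0, 0.0, 2.0]   # toy identity code of shape B
path = interpolation_path(code_a, code_b, steps=5)
```

Smooth morphing along such a path suggests that the latent identity space has no large gaps between the two shapes.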

Limitations discussed include reliance on a fixed set of 20 predefined camera viewpoints, which may restrict generalization to arbitrary viewpoints, and the need for post‑processing to fill small holes after depth‑to‑point‑cloud conversion. Future work could explore dynamic viewpoint sampling, higher‑resolution quantization, and multimodal training that combines RGB and depth (2.5D) data, especially given the proliferation of depth sensors on modern smartphones.

Overall, the paper convincingly shows that 2D CNN architectures can be repurposed for 3D shape modeling by treating multi‑view depth maps as the primary representation, achieving high‑quality reconstruction and synthesis with minimal computational overhead.
