A review of Gaussian Markov models for conditional independence

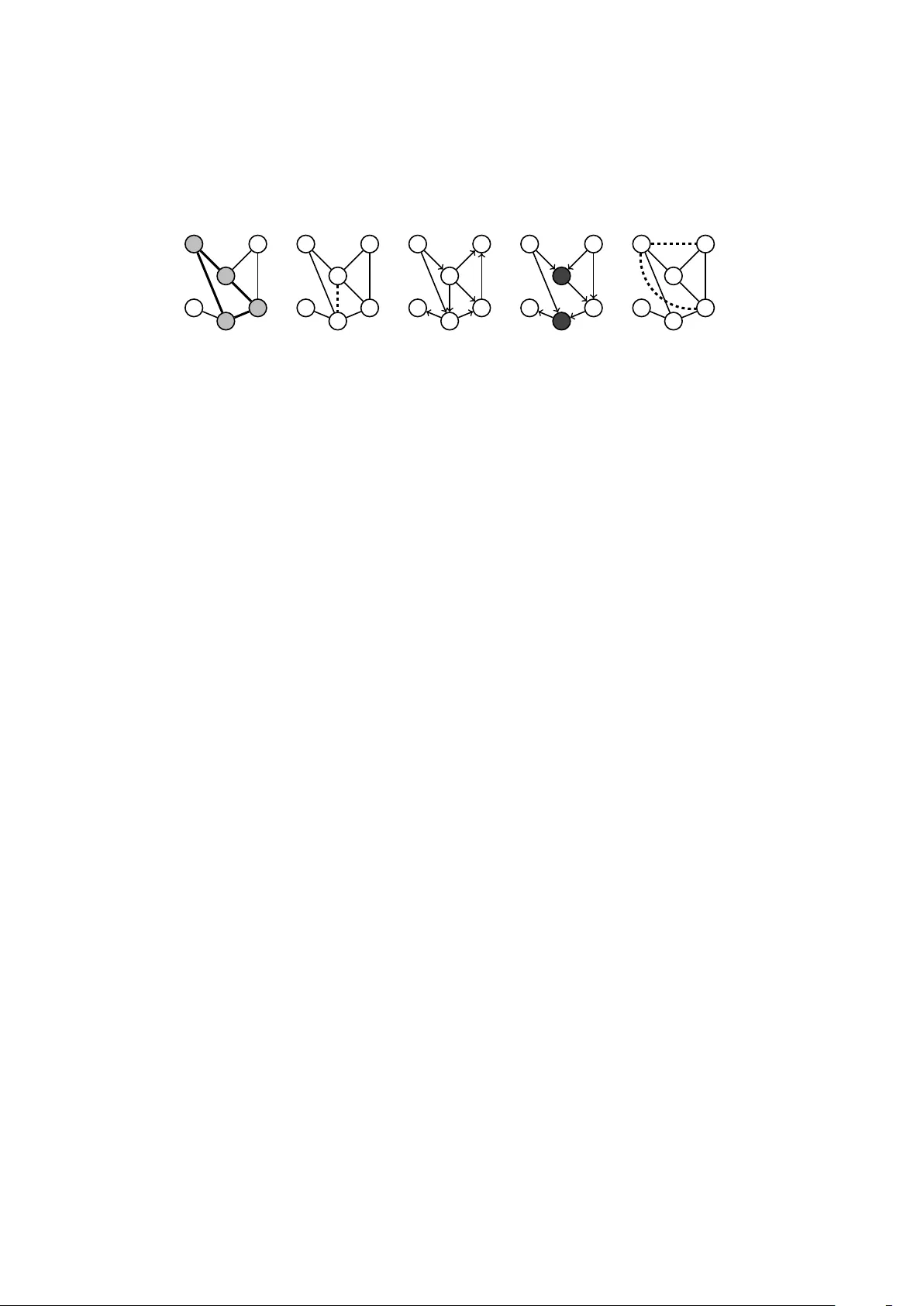

Markov models lie at the interface between statistical independence in a probability distribution and graph separation properties. We review model selection and estimation in directed and undirected Markov models with Gaussian parametrization, emphas…

Authors: Irene Cordoba, Concha Bielza, Pedro Larrañaga