Face Recognition Using Discrete Cosine Transform for Global and Local Features

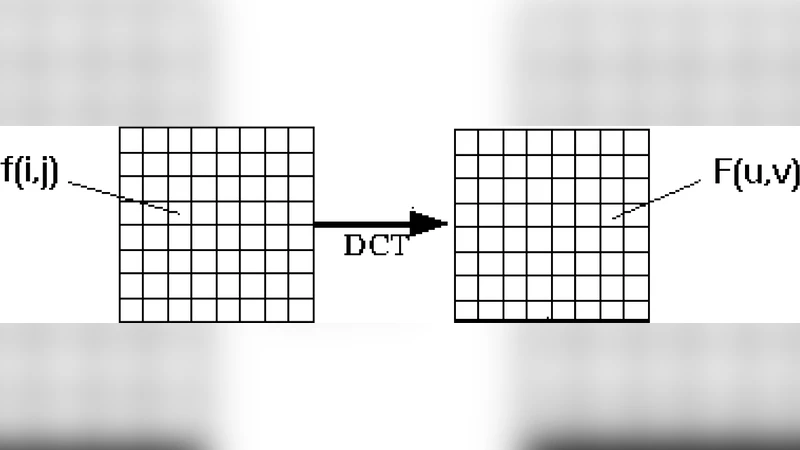

Face Recognition using Discrete Cosine Transform (DCT) for Local and Global Features involves recognizing the corresponding face image from the database. The face image obtained from the user is cropped such that only the frontal face image is extracted, eliminating the background. The image is restricted to a size of 128 x 128 pixels. All images in the database are gray level images. DCT is applied to the entire image. This gives DCT coefficients, which are global features. Local features such as eyes, nose and mouth are also extracted and DCT is applied to these features. Depending upon the recognition rate obtained for each feature, they are given weightage and then combined. Both local and global features are used for comparison. By comparing the ranks for global and local features, the false acceptance rate for DCT can be minimized.

💡 Research Summary

The paper proposes a hybrid face‑recognition approach that combines global image information with local facial components (eyes, nose, mouth) using the Discrete Cosine Transform (DCT). Each face image is first cropped to a frontal 128 × 128 gray‑scale region with the background removed. A 2‑D DCT of the entire image produces the frequency coefficients that serve as global features. In parallel, four local regions are extracted manually: left eye (16 × 16), right eye (16 × 16), nose (25 × 40) and mouth (30 × 50). Each patch is also transformed with a 2‑D DCT. The resulting coefficient matrices are linearised with a zig‑zag scan, which places low‑frequency coefficients first. Of the roughly 16 k (128 × 128 = 16,384) coefficients of the full image, only the 50–64 most significant are retained for matching.
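The feature-extraction step described above can be sketched as follows. This is a minimal illustration, not the authors' code: the function names (`dct_matrix`, `dct2`, `zigzag_indices`, `dct_features`) are hypothetical, the DCT is assumed to be the orthonormal DCT-II, and "most significant" is assumed to mean the first coefficients in zig-zag order.

```python
import numpy as np


def dct_matrix(n: int) -> np.ndarray:
    """Return the n x n orthonormal DCT-II basis matrix."""
    k = np.arange(n).reshape(-1, 1)  # frequency index, one per row
    x = np.arange(n).reshape(1, -1)  # sample index, one per column
    basis = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * x + 1) * k / (2 * n))
    basis[0, :] = np.sqrt(1.0 / n)  # the DC row uses a different scale factor
    return basis


def dct2(block) -> np.ndarray:
    """Separable 2-D DCT-II of a (possibly non-square) gray-level block."""
    block = np.asarray(block, dtype=float)
    rows, cols = block.shape
    return dct_matrix(rows) @ block @ dct_matrix(cols).T


def zigzag_indices(rows: int, cols: int) -> list:
    """(row, col) pairs in zig-zag order, lowest frequencies first."""
    def key(rc):
        r, c = rc
        diagonal = r + c
        # Odd anti-diagonals run top-to-bottom, even ones bottom-to-top.
        return (diagonal, r if diagonal % 2 else c)

    return sorted(((r, c) for r in range(rows) for c in range(cols)), key=key)


def dct_features(image, n_coeffs: int = 64) -> np.ndarray:
    """First n_coeffs DCT coefficients of the image in zig-zag order."""
    coeffs = dct2(image)
    order = zigzag_indices(*coeffs.shape)[:n_coeffs]
    return np.array([coeffs[r, c] for r, c in order])
```

Calling `dct_features(face, 64)` on a 128 × 128 array returns a 64-element vector. The same function applies to the rectangular nose and mouth patches because `dct2` builds a separate basis matrix for each axis.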

Because illumination varies across captures, the authors normalise each test image to the average intensity of the corresponding registered image. The normalisation factor is the ratio of the registered image’s mean pixel value to that of the test image; the test image is multiplied by this factor before DCT is applied. This step reduces the sensitivity of DCT coefficients to lighting changes.
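As a rough sketch of this normalisation, assuming the images are NumPy arrays of gray levels: the function name `normalise_intensity` is hypothetical, and whether the authors clip the scaled values back to the 0–255 range is not stated, so no clipping is applied here.

```python
import numpy as np


def normalise_intensity(test_image, registered_image) -> np.ndarray:
    """Scale the test image so its mean matches the registered image's mean."""
    test = np.asarray(test_image, dtype=float)
    registered = np.asarray(registered_image, dtype=float)
    test_mean = test.mean()
    if test_mean == 0:
        raise ValueError("test image is entirely black; cannot normalise")
    # Factor = mean of registered image / mean of test image.
    factor = registered.mean() / test_mean
    return test * factor  # assumption: no clipping to [0, 255]
```

Because the factor depends on the registered image, the test image has to be re-normalised for each database entry it is compared against before the DCT is taken.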

Matching is performed by computing the Euclidean distance between the selected coefficient vectors of a test image and each registered image in the database. For each pair, the distances of the chosen coefficients are summed, yielding a scalar “X”. With 15 registered subjects, each test image generates 15 X‑values, which are sorted in ascending order. The smallest X corresponds to rank‑1; if rank‑1 matches the true identity, the test is counted as correct. Recognition rate is defined as the number of correctly identified subjects divided by the total number of test images.
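The ranking and scoring procedure might look like the sketch below. The names `rank_gallery` and `recognition_rate` are illustrative, and the distance is assumed to be the plain Euclidean distance between coefficient vectors; the paper's exact summation may differ.

```python
import numpy as np


def rank_gallery(probe, gallery: dict) -> list:
    """Return (identity, distance) pairs sorted by ascending distance.

    gallery maps each registered identity to its coefficient vector.
    """
    probe = np.asarray(probe, dtype=float)
    distances = {
        identity: float(np.linalg.norm(probe - np.asarray(vector, dtype=float)))
        for identity, vector in gallery.items()
    }
    return sorted(distances.items(), key=lambda item: item[1])


def recognition_rate(probes: list, gallery: dict) -> float:
    """Fraction of probes whose rank-1 match is the true identity.

    probes is a list of (true_identity, coefficient_vector) pairs.
    """
    correct = sum(
        1 for true_id, vector in probes
        if rank_gallery(vector, gallery)[0][0] == true_id
    )
    return correct / len(probes)
```

The first element of the list returned by `rank_gallery` is the rank‑1 candidate, that is, the registered image with the smallest X.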

Experiments use a small subset of the BioID database: 25 individuals, each with one registered image and four test images (total 100 test cases). Four experimental configurations are evaluated:

- Global features only – 64 DCT coefficients from the whole face. Without intensity normalisation: 88.25 % recognition. With normalisation: 92.5 %.

- Local features only – DCT coefficients from the four facial patches combined. Without normalisation: 87.18 %. With normalisation: 90.2 %.

- Logical AND of global and local ranks – a subject is accepted only if both global and local matching produce rank‑1. This yields a false‑acceptance rate of zero, but recognition drops to 80.52 % (without normalisation) and 82.35 % (with normalisation).

- Weighted combination of global and local coefficients – the authors assign different weights to global and each local patch and sum the weighted distances. The exact weighting scheme is not detailed, but the combined system achieves a recognition rate comparable to the best global‑only result (≈92 %).
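The two fusion strategies in the last two configurations can be illustrated with a short sketch. Both function names are hypothetical, and the weights are placeholders: the summary notes that the paper does not give its weighting scheme, so nothing here should be read as the authors' values.

```python
def and_rule(global_dists: dict, local_dists: dict):
    """Accept an identity only if it is rank-1 for both feature types.

    Each argument maps identity -> distance. Returns the agreed identity,
    or None when the two rankings disagree (the probe is rejected).
    """
    best_global = min(global_dists, key=global_dists.get)
    best_local = min(local_dists, key=local_dists.get)
    return best_global if best_global == best_local else None


def weighted_fusion(dists_by_feature: dict, weights: dict) -> dict:
    """Combine per-feature distances into one weighted score per identity.

    dists_by_feature maps feature name -> {identity: distance};
    weights maps feature name -> weight (placeholder values, see above).
    """
    identities = next(iter(dists_by_feature.values())).keys()
    return {
        identity: sum(
            weights[name] * dists[identity]
            for name, dists in dists_by_feature.items()
        )
        for identity in identities
    }
```

The AND rule trades recognition rate for fewer false acceptances because any disagreement becomes a rejection, whereas weighted fusion always returns a ranking and leaves the accept/reject decision to a later step.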

The paper also references four well‑known face‑recognition techniques—Principal Component Analysis (PCA), Independent Component Analysis (ICA), Spectroface, and Gabor‑jet—suggesting that the proposed DCT‑based method could be integrated with or compared to these, but no quantitative comparison is presented.

Key technical observations

- DCT suitability – DCT provides a compact representation because most image energy concentrates in low‑frequency coefficients, which are retained for matching. It is computationally efficient (especially with pre‑computed 8 × 8 basis functions). The coefficients are real‑valued in general but can be quantised to integers, which simplifies storage and distance calculations.

- Normalization impact – Aligning average intensities improves recognition by roughly 2–4 percentage points across the reported configurations (for example, from 88.25 % to 92.5 % for global features), confirming DCT’s sensitivity to global illumination.

- Local vs. global trade‑off – Local patches capture fine‑grained structural details that are less affected by pose or expression changes, while global features retain overall facial geometry. Their combination can reduce false matches, as demonstrated by the AND logic experiment, albeit at the cost of lower overall recognition.

- Manual region extraction – The study extracts eyes, nose, and mouth manually, which limits real‑world applicability. An operational system would require automatic landmark detection; the performance of the DCT pipeline would then depend on the accuracy of that detector.

- Dataset size and evaluation rigor – Only 25 subjects are used, which is insufficient for statistically robust conclusions. Moreover, the paper does not report standard deviations, confidence intervals, or cross‑validation, making it difficult to assess the reliability of the reported rates.

- Comparison with other methods – While the authors list PCA, ICA, Spectroface, and Gabor‑jet as benchmarks, they do not provide side‑by‑side results. Consequently, it is unclear whether the DCT‑based approach offers a genuine advantage over these established techniques.

- Computational considerations – Applying DCT to the full image plus four separate patches increases processing time and memory usage compared with a single‑image approach. For real‑time video streams, optimization (e.g., selecting fewer coefficients, using fast DCT algorithms, or parallel processing) would be necessary.
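To make the point about evaluation rigor concrete, the sketch below computes a Wilson score interval for a recognition rate. This is not something the paper reports. The function name `wilson_interval` and the example count of 92 correct out of 100 are assumptions chosen only to resemble the scale of the experiment.

```python
import math


def wilson_interval(correct: int, total: int, z: float = 1.96) -> tuple:
    """Wilson score interval for a proportion (z = 1.96 gives about 95 %)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = correct / total
    denom = 1 + z * z / total
    centre = (p + z * z / (2 * total)) / denom
    half_width = z * math.sqrt(
        p * (1 - p) / total + z * z / (4 * total * total)
    ) / denom
    return centre - half_width, centre + half_width


# Hypothetical example: 92 correct rank-1 matches out of 100 test images.
low, high = wilson_interval(92, 100)
```

For this hypothetical count the interval spans about 85 % to 96 %, wide enough that differences of a few percentage points between configurations may not be statistically meaningful with 100 test cases.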

Conclusions and future directions

The study demonstrates that DCT can be effectively used to extract both global and local facial features, and that a simple weighted fusion of these features can achieve recognition rates above 90 % on a modest dataset when intensity normalisation is applied. However, to transition from a proof‑of‑concept to a deployable system, several challenges must be addressed: (1) automatic detection of facial landmarks, (2) systematic optimisation of feature weights, (3) extensive testing on larger, more diverse databases, and (4) rigorous statistical evaluation against state‑of‑the‑art methods. Addressing these issues would clarify the true potential of DCT‑based hybrid feature fusion in modern biometric applications.
