Prestige of scholarly book publishers: an investigation into criteria, processes, and practices across countries

Numerous national research assessment policies set the goal of promoting “excellence” and incentivise scholars to publish their research in the most prestigious journals or with the most prestigious book publishers. We investigate the practicalities of assessing book outputs based on the prestige of book publishers in five systems: Denmark, Finland, Flanders, Lithuania, and Norway. Additionally, we test whether such judgments are transparent and yield consistent results. We show inconsistencies in the levelling of publishers, such as the same publisher being ranked as prestigious and not-so-prestigious in different countries or in consecutive years within the same country. Likewise, we find that verification of compliance with the mandatory prerequisites is not always possible because of the lack of transparency. Our findings support doubts about whether the assessment of books based on a judgement about their publisher yields acceptable outcomes. Currently used rankings of publishers focus on evaluating the gatekeeping role of publishers but do not assess other essential stages in scholarly book publishing. Our suggestion for future research is to develop approaches to evaluate books by accounting for the value added to every book at every publishing stage, which is vital for the quality of book outputs from research assessment and scholarly communication perspectives.

💡 Research Summary

The paper investigates how several national research assessment systems—specifically those of Denmark, Finland, Flanders (the Dutch‑speaking part of Belgium), Lithuania, and Norway—use the prestige of scholarly book publishers as a proxy for research quality. By mapping each country’s policy documents, data sources, and ranking criteria, the authors reveal a striking lack of consistency and transparency. The same publisher can be classified as “high‑prestige” in one jurisdiction while being deemed “low‑prestige” in another, and even within a single country the ranking of a publisher may shift dramatically from year to year. For example, Springer appears among the top tier in Norway’s 2022 assessment but is omitted from the elite list in the 2023 round.

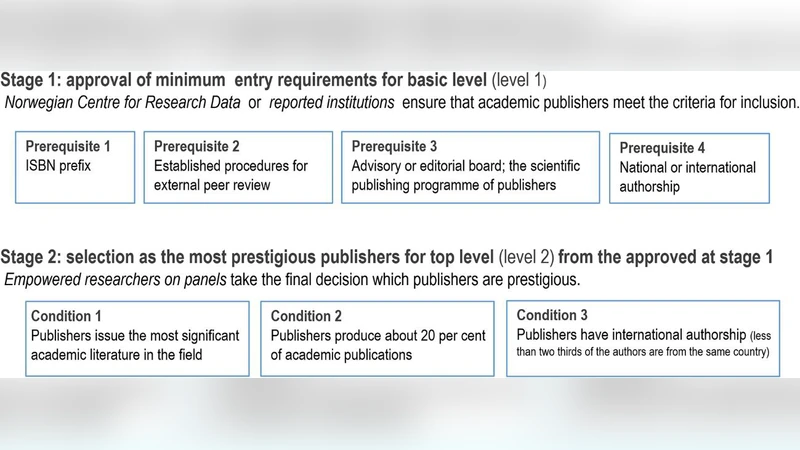

The study quantifies these fluctuations by constructing a cross‑national matrix of about 150 publishers over the 2020‑2023 period. On average, a publisher’s rank changes by 1.3 levels, with a maximum swing of three levels. Such volatility stems from divergent national definitions of “mandatory prerequisites” (ISBN presence, peer‑review status, annual output, citation metrics) and from the fact that many of these prerequisites are not verifiable in the publicly available data. The authors attempted to audit the underlying databases and found that most assessment agencies do not disclose the exact lists or the algorithmic rules used to assign prestige scores, making external replication impossible.
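The kind of consistency check described above can be sketched in a few lines. This is a minimal illustration, not the authors' procedure: the publisher names, the level values, and the definition of "swing" as the gap between the highest and lowest level assigned to one publisher are all assumptions made for the example.

```python
from statistics import mean

# Hypothetical level assignments keyed by (country code, year).
# These values are invented for illustration and are NOT data from the paper.
levels = {
    "Publisher A": {("NO", 2022): 2, ("NO", 2023): 1, ("FI", 2022): 2, ("DK", 2022): 1},
    "Publisher B": {("NO", 2022): 1, ("FI", 2022): 1},
    "Publisher C": {("FI", 2022): 2, ("LT", 2022): 0},
}


def level_swing(assignments):
    """Return the gap between the highest and lowest level given to one publisher.

    Assumption: "swing" is defined here as max minus min across all
    countries and years; the paper may define rank change differently.
    """
    values = list(assignments.values())
    return max(values) - min(values)


# Swing per publisher, then simple summaries across the whole matrix.
swings = {name: level_swing(a) for name, a in levels.items()}
inconsistent = sorted(name for name, swing in swings.items() if swing > 0)
average_swing = mean(swings.values())
max_swing = max(swings.values())

print(swings)
print("Inconsistently ranked:", inconsistent)
print("Average swing:", average_swing, "Maximum swing:", max_swing)
```

A swing of zero means every system that lists the publisher places it at the same level. Note that raw levels are compared directly here, which ignores the fact that national scales may differ in their number of levels; a real comparison would first need to map them onto a common scale.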

A central critique is that current publisher rankings focus almost exclusively on the gatekeeping function (whether a publisher accepts or rejects a manuscript) while ignoring the value-adding stages that follow acceptance. Scholarly books undergo editorial work, copy-editing, design, marketing, and distribution, and only then generate impact through citations, course adoption, and public engagement. By reducing a book’s assessment to a single judgment about the level of its publisher, the existing systems fail to capture the scholarly contribution of the individual work.

To address this shortcoming, the authors propose a “Value‑Added at Every Publishing Stage” model. The model identifies five key dimensions: (1) manuscript selection and peer‑review quality, (2) editorial and copy‑editing standards, (3) design and readability, (4) marketing and dissemination effectiveness, and (5) post‑publication impact (citations, usage statistics, curriculum integration). Each dimension would be measured with a mix of quantitative indicators (error rates, design scores, reach metrics) and qualitative expert assessments, producing a composite score for each book rather than a blanket publisher rating.
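One way such a per-book composite could be computed is a weighted average over the five dimensions. The sketch below is an assumption-laden illustration: the dimension keys, the equal weights, and the requirement that every score is already normalised to the range 0 to 1 are choices made for the example, since the summary does not specify a weighting scheme.

```python
# Hypothetical keys for the five dimensions named in the text.
DIMENSIONS = (
    "selection_and_peer_review",
    "editing",
    "design",
    "dissemination",
    "post_publication_impact",
)

# Assumption: equal weights. The source does not state how dimensions are weighted.
EQUAL_WEIGHTS = {dimension: 1.0 for dimension in DIMENSIONS}


def composite_score(scores, weights=EQUAL_WEIGHTS):
    """Return the weighted average of per-dimension scores for one book.

    Each score is assumed to be normalised to [0, 1] beforehand, whether it
    comes from a quantitative indicator or from an expert assessment.
    """
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    for dimension in weights:
        if not 0.0 <= scores[dimension] <= 1.0:
            raise ValueError(f"score for {dimension} must be within [0, 1]")
    total_weight = sum(weights.values())
    return sum(weights[d] * scores[d] for d in weights) / total_weight


# Invented scores for a single book, for illustration only.
example_book = {
    "selection_and_peer_review": 0.8,
    "editing": 0.6,
    "design": 0.7,
    "dissemination": 0.5,
    "post_publication_impact": 0.9,
}

print(round(composite_score(example_book), 3))
```

Because the score is attached to the book rather than to the publisher, two titles from the same publisher can receive different results, which is the point of the proposed model. The choice of weights is itself a policy decision and would need to be published alongside the criteria.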

The paper concludes with three policy recommendations: (1) increase transparency by publishing the full criteria, data sources, and weighting schemes used in prestige rankings; (2) integrate multi‑stage value‑added metrics into national assessment frameworks to evaluate books on their actual scholarly merit; and (3) foster international collaboration to develop a harmonised, cross‑border evaluation framework that reduces contradictory rankings. Implementing these changes would enable researchers to make informed publishing decisions, improve the fairness of research assessments, and ultimately strengthen the role of scholarly monographs in the research ecosystem.