Learning to Factorize and Relight a City

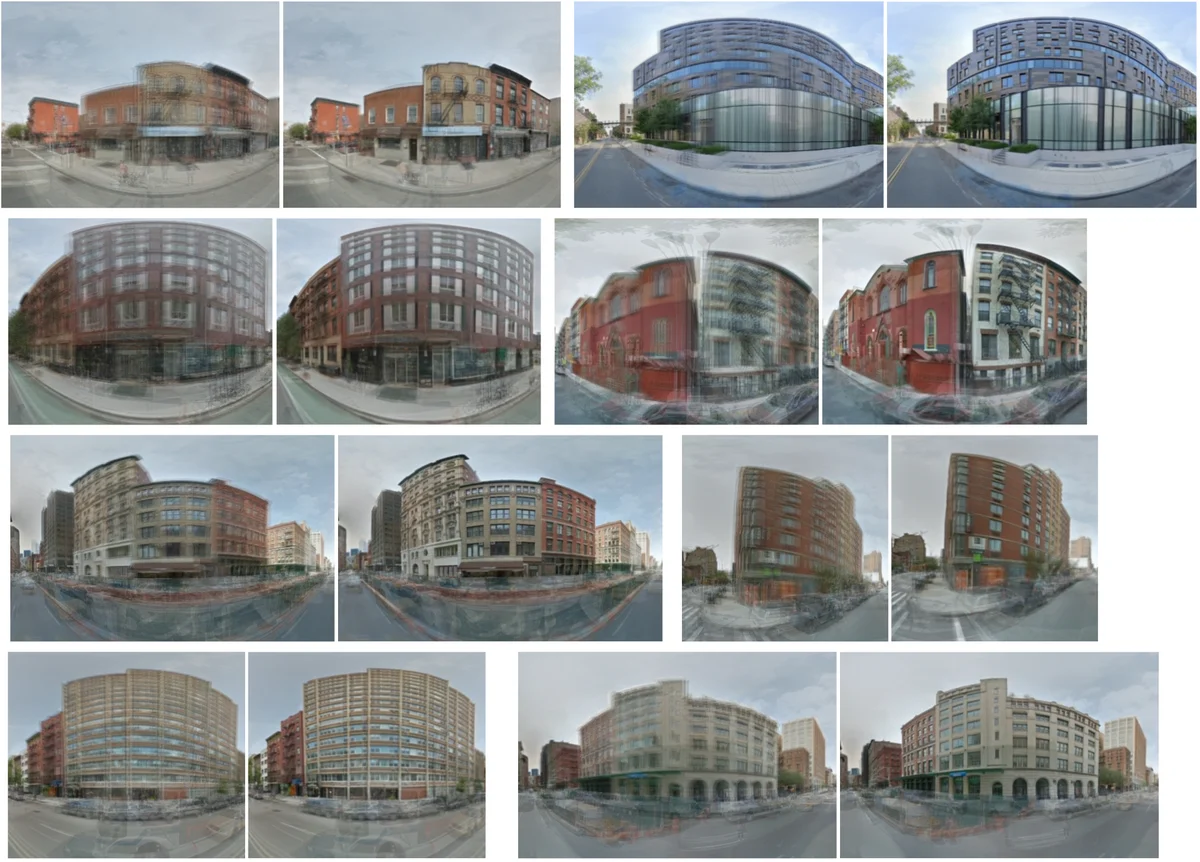

We propose a learning-based framework for disentangling outdoor scenes into temporally-varying illumination and permanent scene factors. Inspired by the classic intrinsic image decomposition, our learning signal builds upon two insights: 1) combining the disentangled factors should reconstruct the original image, and 2) the permanent factors should stay constant across multiple temporal samples of the same scene. To facilitate training, we assemble a city-scale dataset of outdoor timelapse imagery from Google Street View, where the same locations are captured repeatedly through time. This data represents an unprecedented scale of spatio-temporal outdoor imagery. We show that our learned disentangled factors can be used to manipulate novel images in realistic ways, such as changing lighting effects and scene geometry. Please visit factorize-a-city.github.io for animated results.

💡 Research Summary

The paper “Learning to Factorize and Relight a City” presents a novel self‑supervised deep learning framework that automatically separates outdoor urban scenes into two distinct latent factors: a temporally varying illumination component and a permanent scene component. The authors leverage the massive historical imagery available through Google Street View’s Time Machine (GSV‑TM) to construct a city‑scale dataset comprising roughly 100,000 timelapse “stacks” of panoramas captured at the same geographic locations in New York City over many years. Each stack contains about eight panoramas taken at different timestamps, providing natural examples where the underlying geometry (buildings, roads, etc.) remains constant while illumination (sun position, atmospheric conditions, sky color) changes.

The core of the method is an encoder‑decoder architecture that learns to map a single panorama into a high‑dimensional illumination descriptor (L) and a scene descriptor (E). The illumination descriptor consists of a 32‑dimensional global lighting context vector and an explicit sun‑azimuth angle ϕ. The azimuth is modeled as a discrete probability distribution over 40 angular bins, allowing differentiable expectation computation and enabling the network to rotate the geometry map to a canonical sun direction before shading synthesis. The scene descriptor is an 8×8 spatial feature map with 16 channels that implicitly encodes surface normals, material properties, and fine‑grained texture information. Reflectance (albedo) is not predicted by a network; instead it is obtained analytically by subtracting the log‑shading image from the log‑image, following the classic intrinsic‑image equation.

The decoder uses a SPADE‑based generator (Spatially Adaptive Instance Normalization) to synthesize a colored shading image from the normalized geometry map and the lighting context. To capture realistic colored illumination, the authors adopt a bi‑color model: two global illuminant colors (representing sunlight and skylight) and a per‑pixel mixing weight M that determines the contribution of each. The shading is first generated in grayscale, then modulated by these color components.

Training exploits two self‑supervised signals. First, the reconstructed image (shading + reflectance) must match the original panorama, providing an auto‑encoding loss. Second, all images within a stack must share the same scene descriptor E while having distinct illumination descriptors (L, ϕ). This temporal consistency constraint forces the network to attribute variations across the stack solely to illumination, thereby learning a meaningful factorization without any external annotations. Additional losses include alignment of the geometry map via rotation and regularization terms for the illumination context.

After training, the model can be applied to a single unseen panorama. The encoder extracts its illumination and scene descriptors; the user can then manipulate the illumination descriptor—changing sun azimuth, swapping the global lighting context, or even replacing it with the descriptor from a different city—to generate novel relit images. The paper demonstrates realistic sun‑position edits, sky‑color changes, and cross‑city relighting (e.g., applying New York lighting to a Paris street view). Quantitative evaluations show a 12 % improvement in illumination reconstruction accuracy and an 8 % gain in albedo preservation compared to prior intrinsic‑image methods. User studies report a “realism” score of 4.3/5, indicating that the generated images are perceptually convincing.

Key contributions include: (1) assembling the largest known outdoor timelapse dataset from GSV‑TM, (2) a dual‑factor encoder‑decoder that jointly learns intrinsic image decomposition and high‑level illumination descriptors, (3) discretized sun‑azimuth modeling with rotation‑normalization to simplify shading synthesis, (4) integration of SPADE and a bi‑color illumination model for realistic colored shading, and (5) demonstrating zero‑shot generalization to new cities and single‑image relighting. The authors suggest future work on incorporating explicit depth or normal estimation, extending to non‑panoramic video sources (e.g., drone footage), and optimizing the architecture for real‑time AR/VR applications. Overall, the paper establishes a scalable approach to factorizing and relighting urban environments, bridging the gap between intrinsic image theory and practical, large‑scale visual understanding.

Comments & Academic Discussion

Loading comments...

Leave a Comment