Recommending Themes for Ad Creative Design via Visual-Linguistic Representations

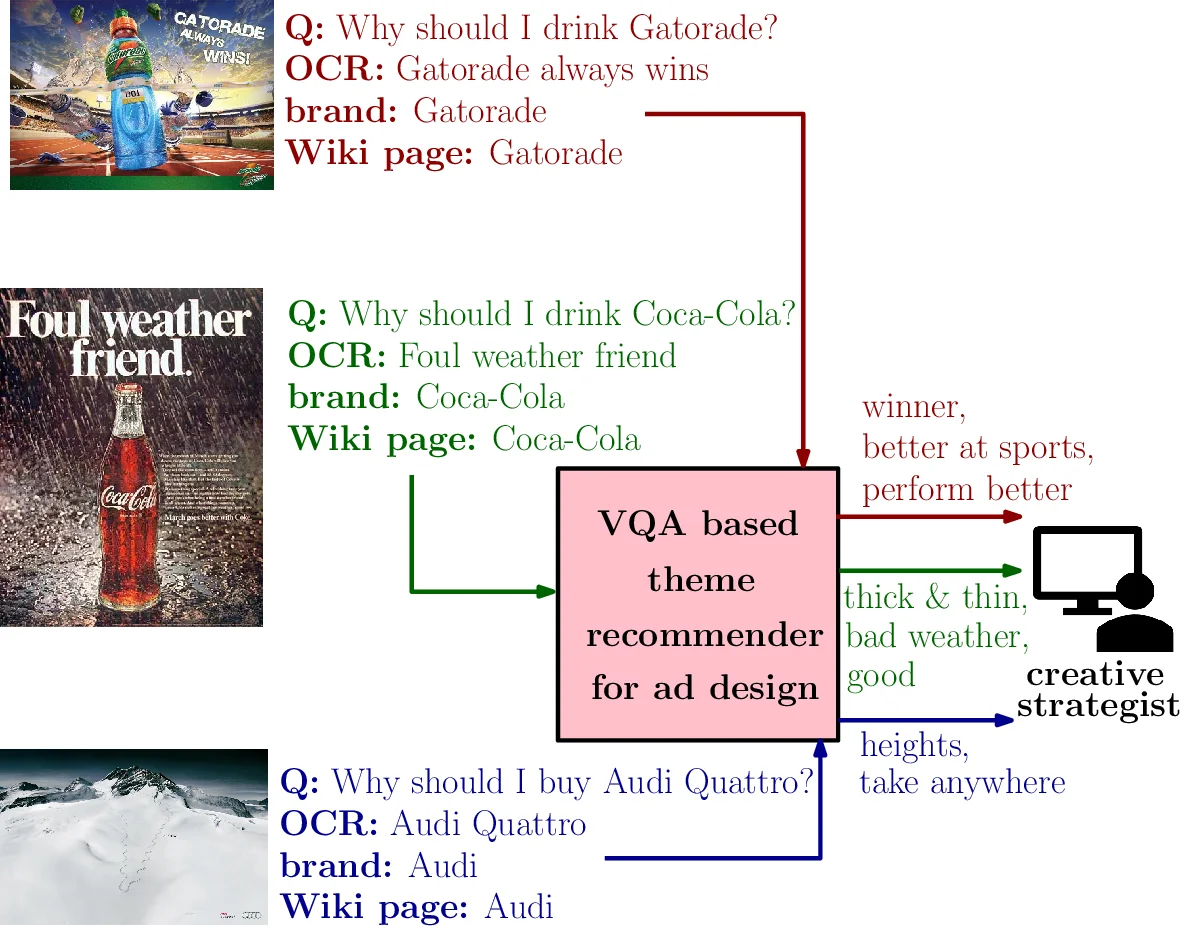

There is a perennial need in the online advertising industry to refresh ad creatives, i.e., the images and text used to entice online users towards a brand. Such refreshes are required to reduce the likelihood of ad fatigue among online users, and to incorporate insights from other successful campaigns in related product categories. Given a brand, coming up with themes for a new ad is a painstaking and time-consuming process for creative strategists. Strategists typically draw inspiration from the images and text used in past ad campaigns, as well as world knowledge about the brands. To automatically infer ad themes from such multimodal sources of information in past ad campaigns, we propose a theme (keyphrase) recommender system for ad creative strategists. The theme recommender is based on aggregating results from a visual question answering (VQA) task, which ingests the following: (i) ad images, (ii) text associated with the ads as well as Wikipedia pages on the brands in the ads, and (iii) questions around the ad. We leverage transformer-based cross-modality encoders to train visual-linguistic representations for our VQA task. We study two formulations of the VQA task, along the lines of classification and ranking; via experiments on a public dataset, we show that cross-modal representations lead to significantly better classification accuracy and ranking precision-recall metrics than separate image and text representations. In addition, the use of multimodal information shows a significant lift over using only textual or visual information.

💡 Research Summary

This paper addresses a persistent challenge in the online advertising industry: the need to continuously refresh ad creatives (images and text) to combat ad fatigue and incorporate insights from successful past campaigns. Manually brainstorming new themes is time-consuming and creatively demanding for strategists. To automate this, the authors propose a theme (keyphrase) recommendation system for ad creative design that leverages multimodal data from historical ad campaigns.

The core innovation lies in framing the theme recommendation problem as a Visual Question Answering (VQA) task. The system takes multiple inputs: (1) the ad image itself, (2) associated text including in-image OCR text and questions posed about the ad (e.g., “Why should I buy this product?”), and (3) external world knowledge in the form of the Wikipedia page for the brand featured in the ad. The model’s goal is to answer these questions with appropriate keyphrases, which are then aggregated per brand to serve as recommended themes for new creatives.
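The per-brand aggregation step can be illustrated with a minimal sketch. The summary does not say how keyphrases are aggregated, so frequency counting is an assumption here, and the function name `recommend_themes`, the brand names, and the sample keyphrases are all hypothetical.

```python
from collections import Counter, defaultdict


def recommend_themes(predictions, top_k=3):
    """Aggregate predicted keyphrases per brand and return the most frequent ones.

    predictions: iterable of (brand, keyphrase) pairs, one per answered question.
    Frequency-based aggregation is an assumption made for this sketch.
    """
    counts = defaultdict(Counter)
    for brand, keyphrase in predictions:
        # Normalize case and whitespace so "Safety " and "safety" count together.
        counts[brand][keyphrase.strip().lower()] += 1

    themes = {}
    for brand, counter in counts.items():
        # Sort by descending count, then alphabetically for a deterministic order.
        ranked = sorted(counter.items(), key=lambda item: (-item[1], item[0]))
        themes[brand] = [keyphrase for keyphrase, _ in ranked[:top_k]]
    return themes


# Hypothetical VQA outputs for two made-up brands.
sample_predictions = [
    ("BrandA", "safety"),
    ("BrandA", "Safety "),
    ("BrandA", "family"),
    ("BrandA", "safety"),
    ("BrandA", "family"),
    ("BrandB", "adventure"),
]
sample_themes = recommend_themes(sample_predictions, top_k=2)
# sample_themes == {"BrandA": ["safety", "family"], "BrandB": ["adventure"]}
```

Other aggregation choices, such as weighting each keyphrase by the model's confidence, would fit the same interface.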

Technically, the model employs transformer-based cross-modality encoders to learn unified visual-linguistic representations. Image features are extracted using Faster R-CNN, providing region-of-interest features and their spatial locations. Textual inputs are tokenized and embedded. These separate visual and linguistic embeddings are then processed through a cross-modality encoder architecture (inspired by LXMERT), which uses cross-attention layers to model deep interactions between the two modalities, producing a fused representation.
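To make the cross-attention idea concrete, here is a minimal single-head sketch in plain Python. It is not the paper's implementation: it assumes identity projections (no learned query, key, or value weights), omits multi-head attention, residual connections, and layer normalization, and uses tiny made-up vectors in place of real token and region embeddings.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys_values):
    """Scaled dot-product attention from one modality onto the other.

    Each query vector is replaced by a weighted average of the vectors in
    keys_values, with weights given by softmax(q . k / sqrt(d)).
    """
    dim = len(queries[0])
    attended = []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys_values
        ]
        weights = softmax(scores)
        attended.append(
            [sum(w * v[j] for w, v in zip(weights, keys_values)) for j in range(dim)]
        )
    return attended


# Toy embeddings (hypothetical): 2 text tokens and 3 image regions, dimension 2.
text_tokens = [[1.0, 0.0], [0.0, 1.0]]
image_regions = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

# Language attends to vision, and vision attends to language.
text_with_visual_context = cross_attention(text_tokens, image_regions)
regions_with_text_context = cross_attention(image_regions, text_tokens)
```

Each output keeps the shape of the querying modality, which is why the two directions can be stacked layer after layer in a cross-modality encoder.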

The authors explore two formulations of the VQA-based recommender: classification and ranking. The classification model selects the most probable keyphrase from a predefined vocabulary, while the ranking model (using a DRMM architecture) orders keyphrases by their relevance to the input. In experiments on a public dataset of ad creatives, the proposed cross-modal representations perform significantly better than separate image and text representations, and the multimodal model shows a significant lift over baselines that use only image features or only text features, in terms of both classification accuracy and ranking precision-recall metrics. This result underscores the importance of jointly modeling visual context, ad copy, and brand background knowledge for the subjective task of inferring advertising themes.
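The two kinds of metrics mentioned above can be sketched as follows. The exact definitions used in the paper are not given in this summary, so this is an assumption-laden illustration: a classification prediction counts as correct if it matches any annotated keyphrase, and ranking quality is measured with precision and recall at a cutoff `k`. The function names and sample keyphrases are hypothetical.

```python
def accuracy(predicted, gold_sets):
    """Fraction of examples whose predicted keyphrase is in the annotated set.

    Counting a match against any annotated keyphrase is an assumption of this sketch.
    """
    hits = sum(1 for pred, gold in zip(predicted, gold_sets) if pred in gold)
    return hits / len(predicted)


def precision_recall_at_k(ranked, relevant, k):
    """Precision@k and recall@k for one ranked list of keyphrases.

    ranked: keyphrases ordered from most to least relevant by the model.
    relevant: non-empty set of annotated (ground-truth) keyphrases.
    """
    top_k = ranked[:k]
    hits = sum(1 for keyphrase in top_k if keyphrase in relevant)
    return hits / k, hits / len(relevant)


# Hypothetical ranked output and ground truth for a single ad.
ranked_keyphrases = ["fun", "safe", "cheap", "fast"]
relevant_keyphrases = {"safe", "fast"}

p_at_2, r_at_2 = precision_recall_at_k(ranked_keyphrases, relevant_keyphrases, k=2)
# p_at_2 == 0.5 and r_at_2 == 0.5: one of the top two is relevant,
# and one of the two relevant keyphrases is retrieved.
```

Averaging these per-ad values over a test set gives dataset-level figures that can be compared across image-only, text-only, and multimodal models.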

In conclusion, this work presents a practical and effective data-driven solution to assist creative strategists. By automatically generating brand-specific thematic suggestions derived from multimodal analysis of past campaigns, it reduces the manual effort in the initial ideation phase. The research highlights the efficacy of modern visual-linguistic AI models, particularly cross-modal transformers, in tackling complex, real-world problems in advertising and marketing, paving the way for more AI-augmented creative processes.
