Speeding up VP9 Intra Encoder with Hierarchical Deep Learning Based Partition Prediction

In VP9 video codec, the sizes of blocks are decided during encoding by recursively partitioning 64$\times$64 superblocks using rate-distortion optimization (RDO). This process is computationally intensive because of the combinatorial search space of …

Authors: Somdyuti Paul, Andrey Norkin, Alan C. Bovik

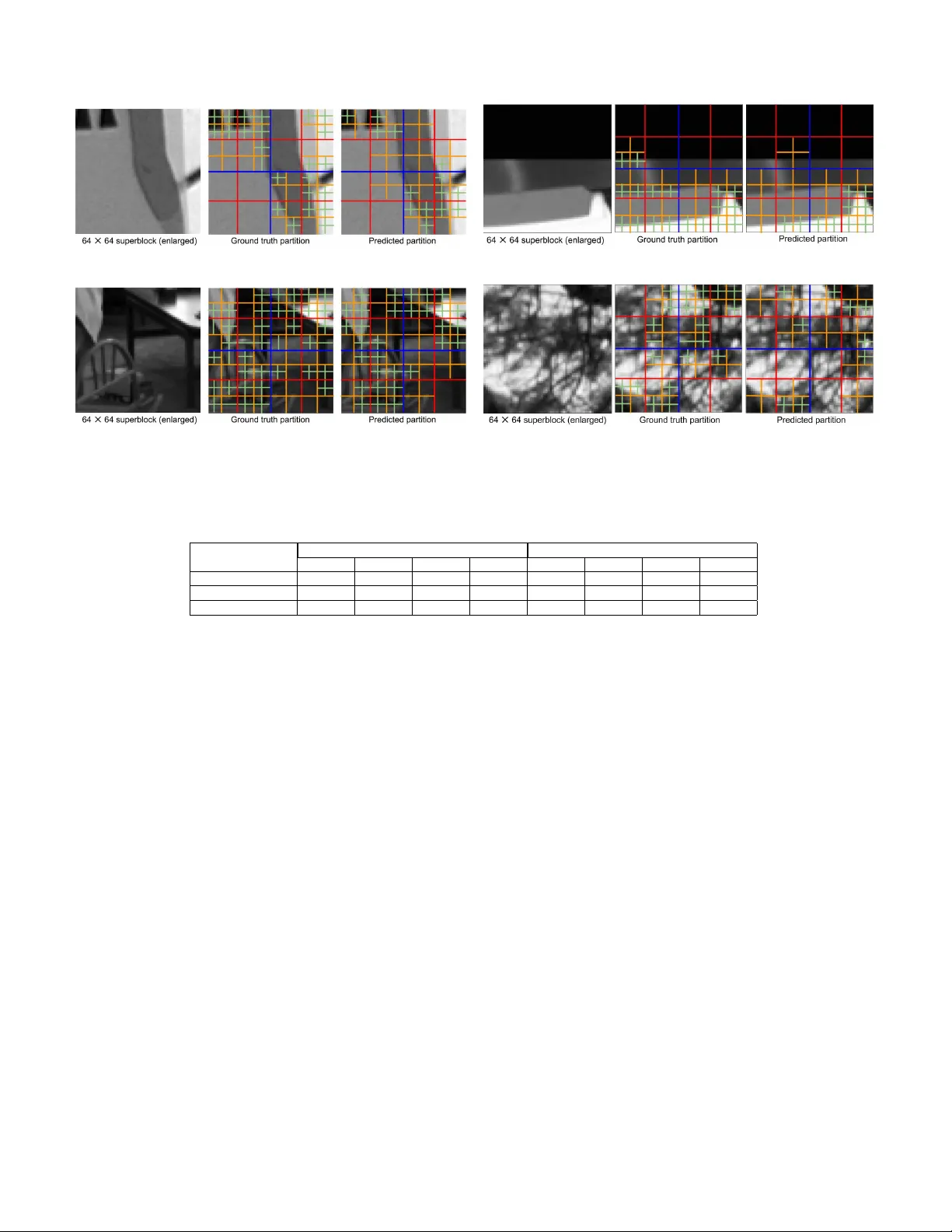

IEEE TRANSACTIONS ON IMAGE PROCESSING

Abstract: In VP9 video codec, the sizes of blocks are decided during encoding by recursively partitioning 64 × 64 superblocks using rate-distortion optimization (RDO). This process is computationally intensive because of the combinatorial search space of possible partitions of a superblock. Here, we propose a deep learning based alternative framework to predict the intra-mode superblock partitions in the form of a four-level partition tree, using a hierarchical fully convolutional network (H-FCN). We created a large database of VP9 superblocks and the corresponding partitions to train an H-FCN model, which was subsequently integrated with the VP9 encoder to reduce the intra-mode encoding time. The experimental results establish that our approach speeds up intra-mode encoding by 69.7% on average, at the expense of a 1.71% increase in the Bjøntegaard-Delta bitrate (BD-rate). While VP9 provides several built-in speed levels which are designed to provide faster encoding at the expense of decreased rate-distortion performance, we find that our model is able to outperform the fastest recommended speed level of the reference VP9 encoder for the good quality intra encoding configuration, in terms of both speedup and BD-rate.

Index Terms: VP9, video encoding, block partitioning, intra prediction, convolutional neural networks, machine learning.

I. INTRODUCTION

VP9 has been developed by Google [1] as an alternative to mainstream video codecs such as H.264/AVC [2] and High Efficiency Video Coding (HEVC) [3] standards. VP9 is supported in many web browsers and on Android devices, and is used by online video streaming service providers such as Netflix and YouTube.
As compared to both its predecessor, VP8 [4], and H.264/AVC video codecs, VP9 allows larger prediction blocks, up to size 64 × 64, which results in a significant improvement in coding efficiency. In VP9, sizes of prediction blocks are decided by a recursive splitting of non-overlapping spatial units of size 64 × 64, called superblocks. This recursive partition takes place at four hierarchical levels, possibly down to 4 × 4 blocks, through a search over the possible partitions at each level, guided by a rate-distortion optimization (RDO) process. The coding tree units (CTUs) in HEVC, which are analogous to VP9's superblocks, have the same default maximum size of 64 × 64 and minimum size of 8 × 8, which can be further split into smaller partitions (4 × 4 in the intra-prediction case). However, while HEVC intra-prediction only supports partitioning a block into four square quadrants, VP9 intra-prediction also allows rectangular splits. Thus, there are four partition choices at each of the four levels of the VP9 partition tree for each block at that level: no split, horizontal split, vertical split and four-quadrant split. This results in a combinatorial complexity of the partition search space since the square partitions can be split further. A diagram of the recursive partition structure of VP9 is shown in Fig. 1.

Fig. 1. Recursive partition of VP9 superblock at four levels showing the possible types of partition at each level.

S. Paul and A. C. Bovik are with the Department of Electrical and Computer Engineering, University of Texas at Austin, Austin, TX, 78712 USA (email: somdyuti@utexas.edu, bovik@ece.utexas.edu). A. Norkin is with Netflix Inc. Los Gatos, CA, 95032 USA (email: anorkin@netflix.com). This work is supported by Netflix Inc.
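To give a sense of the size of the partition search space described above, the following minimal sketch counts the distinct partition trees of one superblock. It assumes that every block has the four choices listed in the text, that only the quadrants of a four-quadrant split can be partitioned further, and that 8 × 8 blocks are the smallest blocks carrying a partition decision (their quadrants being the 4 × 4 blocks). The function name `count_partitions` is illustrative and is not part of libvpx.

```python
def count_partitions(block_size: int, min_size: int = 8) -> int:
    """Count distinct partition trees of a square block.

    Assumes four choices per block: no split, horizontal split,
    vertical split, or four-quadrant split. Only the quadrants of a
    four-quadrant split may be partitioned further, and blocks of
    min_size are the smallest blocks that carry a partition decision.
    """
    if block_size == min_size:
        return 4  # none, horizontal, vertical, or split into 4x4 blocks
    quadrant = count_partitions(block_size // 2, min_size)
    # Three non-recursive choices plus every combination of the four quadrants.
    return 3 + quadrant ** 4


for size in (8, 16, 32, 64):
    print(size, count_partitions(size))
```

Under these assumptions a 16 × 16 block already admits 259 partitions, and the count for a 64 × 64 superblock exceeds 10^38, which illustrates the combinatorial growth that the RDO-based search has to cope with.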
Although the large search space of partitions in VP9 is instrumental to achieving its rate-distortion (RD) performance, it causes the RDO based search to incur more computational overhead as compared to VP8 or H.264/AVC, making the encoding process slower. Newer video codecs, such as AV1 [5] and the future Versatile Video Coding (VVC) [6], allow for prediction units of sizes from 128 × 128 to 4 × 4, giving rise to even deeper partition trees. As ultra high-definition (UHD) videos become more popular, the need for faster encoding algorithms will only escalate. One important way to approach this issue is to reduce the computational complexity of the RDO-based partition search in video coding.

While state of the art performance in visual data processing technologies, such as computer vision, relies heavily on deep learning, mainstream video coding and compression technology remains dominated by traditional block-based hybrid codecs. However, deep learning based image compression techniques such as [7]–[9] are also being actively explored, and have shown promise. A few such image based deep learning techniques have also been extended to video compression with promising results [10]–[12]. A second category of work uses deep learning to enhance specific aspects of video coding, such as block prediction [13], [14], motion compensation [15], [16], in-loop filtering [17]–[19], and rate control [20], with the objective of improving the efficiency of specific coding tools.

© 2020 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media, including reprinting/republishing this material for advertising or promotional purposes, creating new collective works, for resale or redistribution to servers or lists, or reuse of any copyrighted component of this work in other works.
Given these developments, there is a possibility of a future paradigm shift in the domain of video coding towards deep learning based techniques. Our present work is motivated by the success of deep learning-based techniques such as [21], [22], on the current and highly practical task of predicting the HEVC partition quad-trees. In this paper, we take a step in this direction by developing a method of predicting VP9 intra-mode superblock partitions in a novel bottom-up way, by employing a hierarchical fully convolutional network (H-FCN). Unlike previous methods of HEVC partition prediction, which recursively split blocks starting with the largest prediction units, our method predicts block merges recursively, starting with the smallest possible prediction units, which are 4 × 4 blocks in VP9. The bottom-up approach optimized using an H-FCN model allows us to use a much smaller network than [22]. By integrating the trained model with the reference VP9 encoder, we are able to substantially speed up intra-mode encoding at a reasonably low RD cost, as measured by the Bjøntegaard delta bitrate (BD-rate) [23], which quantifies differences in bitrate at a fixed encoding quality level relative to another reference encode. Our method also surpasses the higher speed levels of VP9 in terms of speedup, while maintaining a lower BD-rate, as we show in the experimental results.

The main steps of our work presented in this paper can be summarized as follows:

1) We created a large and diverse database of VP9 intra encoded superblocks and their corresponding partition trees using video content from the Netflix library.
2) We developed a fast H-FCN model that efficiently predicts VP9 intra-mode superblock partition trees using a bottom-up approach.
3) We integrated the trained H-FCN model with the VP9 encoder to demonstrably speed up intra-mode encoding.

These steps are summarized by the block diagram in Fig. 2.
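Since BD-rate is the RD measure used throughout, a minimal sketch of one common way to compute it may be helpful. The sketch assumes the widely used cubic-polynomial formulation of Bjøntegaard's method with PSNR as the quality measure; the paper does not state which variant or tool was used, and the function name `bd_rate` and the rate and PSNR points below are made up for illustration.

```python
import numpy as np


def bd_rate(rate_ref, psnr_ref, rate_test, psnr_test):
    """Average bitrate difference (%) of a test encode vs. a reference
    encode at equal quality, using cubic fits of log-rate vs. PSNR."""
    log_ref = np.log10(np.asarray(rate_ref, dtype=float))
    log_test = np.log10(np.asarray(rate_test, dtype=float))
    psnr_ref = np.asarray(psnr_ref, dtype=float)
    psnr_test = np.asarray(psnr_test, dtype=float)

    # Fit log-rate as a cubic polynomial of quality for each curve.
    poly_ref = np.polyfit(psnr_ref, log_ref, 3)
    poly_test = np.polyfit(psnr_test, log_test, 3)

    # Integrate both fits over the overlapping quality interval.
    lo = max(psnr_ref.min(), psnr_test.min())
    hi = min(psnr_ref.max(), psnr_test.max())
    anti_ref = np.polyint(poly_ref)
    anti_test = np.polyint(poly_test)
    int_ref = np.polyval(anti_ref, hi) - np.polyval(anti_ref, lo)
    int_test = np.polyval(anti_test, hi) - np.polyval(anti_test, lo)

    # Mean log-rate gap over the interval, converted to a percentage.
    avg_log_diff = (int_test - int_ref) / (hi - lo)
    return (10.0 ** avg_log_diff - 1.0) * 100.0


# Made-up points: the test encode spends 10% more bits at every quality level.
psnr = [32.0, 35.0, 38.0, 41.0]
reference_rates = [1000.0, 2000.0, 4000.0, 8000.0]
test_rates = [1100.0, 2200.0, 4400.0, 8800.0]
print(bd_rate(reference_rates, psnr, test_rates, psnr))
```

With these made-up points the function returns approximately 10, meaning the test encode needs about 10% more bitrate than the reference for the same quality. A positive BD-rate therefore indicates a loss in coding efficiency.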
The source code of our model implementation, including the modifications made to the reference VP9 decoder and encoder for creating the database of superblock partitions, and using the trained H-FCN model for faster intra encoding, respectively, is available online at [24].

The rest of the paper is organized as follows. In Section II, we briefly review earlier works relevant to the current task. Section III describes the VP9 partition database that we created to drive our deep learning approach. Section IV elaborates the proposed method. Experimental results are presented in Section V. Finally, we draw conclusions and provide directions for future work in Section VI.

II. RELATED WORK

The earliest machine learning based methods that were designed to infer the block partition structures of coded videos from pixel data relied heavily on feature design. A decision tree based approach was used to predict HEVC partition quadtrees for intra frames from features derived from the first and second order block moments in [25], reportedly achieving a 28% reduction in computational complexity along with a 0.6% increase in BD-rate. Using a support vector machine (SVM) classifier on features derived from measurements of the variance, color and gradient distributions of blocks, a 36.8% complexity reduction was gained against a 3% increase in BD-rate in [26], on the screen content coding extension of HEVC in the intra-mode.

With the advent of deep learning techniques in recent years, significant further breakthroughs were achieved [21], [22], [27]. In [21], a parallel convolutional neural network (CNN) architecture was employed to reduce HEVC intra encoding time by 61.1% at the expense of a 2.67% increase in BD-rate. Three separate CNN models were used to learn the three-level intra-mode partition structure of HEVC in [27], obtaining an average savings of 62.2% of encoding time against a BD-rate increase of 2.12%.
This approach was extended in [22] to reduce the encoding time of both intra and inter modes using a combination of a CNN with a long short-term memory (LSTM) architecture. This approach reduced the average intra-mode encoding time by 56.9%-66.5% against an increase of 2.25% in BD-rate, while in the inter mode, a 43.8%-62.9% average reduction was obtained versus an increase of 1.50% in BD-rate.

However, there has been little work reported on the related problem of reducing the computational complexity of RDO based superblock partition decisions in VP9, and even less work employing machine learning techniques. A multi-level SVM based early termination scheme for VP9 block partitioning was adopted in [28], which reduced encoding time by 20%-25% against less than a 0.03% increase in BD-rate in the inter mode. Although superblock partition decisions using RDO consume the bulk of the computational expense of intra-mode encoding in VP9, to the best of our knowledge, there has been no prior work on predicting the complete partition trees of VP9 superblocks.

The problem of VP9 superblock partition prediction is a hierarchical decision process, which involves choosing one of four types of partitions for each block, at every level of the partition tree. A hierarchical structure was introduced in [29], using a two-level CNN that was trained to perform coarse-to-fine category classification, achieving improvements in the classification accuracy. Based on similar principles, [30] extended the hierarchical classification approach to more than two levels, using a branched CNN model with multiple output layers yielding coarse-to-fine category predictions. The ability of these architectures to capture the hierarchical relationships inherent in visual data motivates our model design. At the same time, unlike global image tasks such as classification, inferring partition trees from superblock pixels is a spatially dense prediction task.
For example, on a 64 × 64 superblock, there can be a maximum of 64 blocks of size 8 × 8 whose partitions are to be inferred. This means that as many as 64 localized predictions must be made on a 64 × 64 superblock at the lowest level of the partition tree.

Fig. 2. Flow diagram of our VP9 partition prediction. The inputs to the database are schematically shown for each superblock.

Fully convolutional networks (FCNs) have been shown to perform remarkably well on a variety of other spatially dense prediction tasks, such as semantic segmentation [31], [32], depth estimation [33], saliency detection [34], object detection [35], visual tracking [36] and dense captioning [37]. Moreover, convolution layers are typically faster than fully connected layers for similar input and output sizes. Likewise, we have found that the H-FCN model that we have developed and explain here imparts the ability to simultaneously handle hierarchical prediction and dense prediction with significant speedup.

III. VP9 INTRA-MODE SUPERBLOCK PARTITION DATABASE

In order to facilitate a data-driven training of our H-FCN model, we constructed a large database of VP9 intra encoded superblocks, corresponding QP values, and partition trees. In the absence of a publicly available superblock partition database for VP9 similar to the one developed in [22] for HEVC CTU partitions, this was a necessary first step for our work.

A. Partition Tree Representation

Since the number of possible partition trees of a superblock is too large to be represented as distinct classes in a multi-class classification problem, we need a simple and concise description of the partition tree to ensure effective learning. The partition tree representation we adopt is similar to [22], but represents block merges instead of splits to facilitate bottom-up prediction.
In our approach, the partition tree is represented by four matrices $M_0, \cdots, M_3$ that correspond to the four levels of the VP9 partition tree. An example of a superblock partition tree is illustrated in Fig. 3. The four possible merges of the blocks at each level (including the possibility of no merge) are indicated by the numbers 0 to 3 as shown in Fig. 3. Each element of the matrices indicates the type of merge of the group of four blocks corresponding to that element's location at that level. For example, each element of the 8 × 8 matrix $M_0$ indicates how the four 4 × 4 blocks at corresponding locations are merged at level 0. Similarly, $M_1$, $M_2$ and $M_3$ represent merges of non-overlapping groups of four 8 × 8, 16 × 16 and 32 × 32 blocks, respectively, at the higher levels. We denote the partition tree of a superblock by $P = \{M_0, \cdots, M_3\}$. This succinct representation allows us to formulate the problem as a multi-level, multi-class classification task, with 4 levels, and 4 classes corresponding to each matrix element.

Fig. 3. Matrix representation of the four-level partition tree.

B. Database Creation

Our database was created using content from the Netflix video catalog, encoded using the reference VP9 encoder from the libvpx package [38], in VP9 profile 0 (8 bits/sample and 4:2:0 chroma subsampling), using speed level 1 and the quality setting good. The contents were drawn from 89 cinematic productions and 17 television episodes, each from a unique television series. The contents selected were drawn from different genres, such as action, drama, animation, etc. We encoded each content at three resolutions: 1920 × 1080, 1280 × 720 and 960 × 540. The partition pattern selected by the RDO on each superblock depends on both its visual content, as well as the QP value chosen for that superblock.
Thus, we modified the VP9 decoder from the libvpx package to record the computed partition tree $P$ of each superblock, in the form described in Section III-A, along with the corresponding QP value $Q$ while decoding the intra frames of the VP9 encoded bitstreams. In order to obtain the raw pixel data corresponding to the superblocks of each VP9 encoded video, the source videos were converted to a YCbCr 4:2:0 8-bit representation, then downsampled to the encode resolution via Lanczos resampling, if the source and the encode were at different resolutions. Following this process, the luma channels of non-overlapping 64 × 64 blocks were extracted from the source frames at the encode resolution. These steps constitute the preprocessing stage shown in Fig. 2. Denote this superblock pixel data by $S$. Thus, each sample of our database may be expressed by a tuple $(S, Q, P)$.

If the frame width or height (or both) is not exactly divisible by 64, the VP9 encoder zero pads the frame boundaries to construct superblocks of size 64 × 64 at the boundaries during encoding. However, we excluded the boundary superblocks with partial zero padding from our database.

The HEVC intra-mode partition database [22] is limited to only 4 QP values. By contrast, our database encompasses internal QP values in the range 8-105, where the internal QP value range for VP9 is 0-255 (the corresponding external QP value range is 0-63). The range of QP values used in our database is a practical range used for encoding intra frames in adaptive streaming, where rather than using higher QP values to stream videos at low target bitrates, a lower resolution video is streamed, which is suitably upsampled later at the viewers' end [39]. We refer the reader to Appendix B for a comparison of the encoding performance on our database against that on the intra-mode database of [22]. Our new database contains content from only a single episode of each television series.
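The superblock extraction step of the preprocessing stage, including the exclusion of boundary superblocks, can be sketched as follows. This is a minimal NumPy illustration and not the authors' code: the function name `extract_superblocks` and the placeholder frame are assumptions, and the color conversion and Lanczos resampling steps are presumed to have been applied already.

```python
import numpy as np


def extract_superblocks(luma: np.ndarray, size: int = 64) -> np.ndarray:
    """Return the non-overlapping size x size blocks fully inside the frame.

    luma is a 2-D array (height x width) holding the 8-bit luma plane.
    Blocks that would need padding at the right or bottom boundary are
    dropped, mirroring the exclusion of boundary superblocks.
    """
    rows, cols = luma.shape[0] // size, luma.shape[1] // size
    cropped = luma[: rows * size, : cols * size]
    # Split both axes into (block index, offset within block), then group
    # the two block indices together so blocks come out in raster order.
    blocks = cropped.reshape(rows, size, cols, size).swapaxes(1, 2)
    return blocks.reshape(rows * cols, size, size)


frame = np.zeros((1080, 1920), dtype=np.uint8)  # placeholder luma plane
superblocks = extract_superblocks(frame)
print(superblocks.shape)  # (480, 64, 64): 16 rows x 30 columns
```

For a 1920 × 1080 frame, the height is not divisible by 64, so the last partial row of superblocks is discarded and 16 × 30 = 480 complete superblocks remain per frame.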
We divided our database into training and validation sets, as summarized in Table I, where the letters M and E indicate cinematic "movies" and television "episodes," respectively. The division was conducted such that there was no overlap in content between the training and validation sets, i.e., entirely different movies and television series were used for each set. Table I also specifies the percentage of computer graphics image (CGI) content in each set. Although the superblock database of Netflix content cannot be made publicly available (due to the content licenses), we do provide the code for the modified VP9 decoder at [24], which can be used to generate a similar database from a set of VP9 encoded bitstreams and their original source videos. The test set was created from publicly available content, different from the Netflix contents used in the training and validation sets, as described later in Section V-D.

TABLE I
SUMMARY OF VP9 INTRA-MODE SUPERBLOCK PARTITION DATABASE

| Database | Contents | % of CGI content | # of samples |
|---|---|---|---|
| Training | 62 (M) + 12 (E) | 12.16 | 11,990,384 |
| Validation | 27 (M) + 5 (E) | 12.50 | 4,698,195 |

IV. PROPOSED METHOD

As mentioned earlier, in our approach the partition tree is constructed in a bottom-up manner, where merges of the smallest possible blocks, which are of size 4 × 4 in VP9, are predicted first at the lowest level of the partition tree, followed by merge predictions on larger blocks at the upper levels. This is unlike the coarse-to-fine approaches used in many image analysis applications, but it is well-motivated here. In a multi-layered CNN model, the early layers learn simple low level features depicting local image characteristics, such as edges and corners. These simple features are progressively combined by the deeper layers to form textures and more complex features representing higher level global semantics. The intuition behind our bottom-up block merge strategy follows from this observation, which is supported by feature visualization techniques applied to successive CNN layers [40]. Since the smaller blocks are more spatially localized, low-level local features, such as edges and corners that are learned by the early layers of a CNN, should be adequate to predict the merge types of 4 × 4 blocks, i.e., the partition types of 8 × 8 blocks.

The most notable benefit of this approach is that early prediction of the merge types of the smaller blocks at the lower levels of the partition tree saves computation time and network parameters, which can instead be invested in predicting the merge types of larger blocks at the upper levels, on which the CNN needs to learn more complex patterns. This allows us to design a deeper network for the higher levels while having many fewer trainable parameters than, for example, the ETH-CNN of [22], which has three parallel branches and 1,287,189 trainable parameters, yet uses just three convolutional layers at each level of prediction. A deeper network is able to learn additional levels of hierarchical abstractions of the data, which is relevant in the context of hierarchical partition prediction in VP9. An analysis of the efficacy of this bottom-up prediction strategy is given in Appendix A, by comparing it with an alternative top-down strategy.

A. H-FCN Architecture

The architecture of our H-FCN model is shown in Fig. 4. It consists of four output branches and a trunk from which the branches emanate. This branched architecture is similar to [30]. However, unlike [30], our model is fully convolutional and uses a bottom-up prediction scheme. The inputs to the model are the superblocks $S$ and corresponding QP values $Q$.
The trunk has a conventional CNN structure with convolutional layers followed by rectified linear unit (ReLU) nonlinearities [41] and batch normalization [42]. Also, 2 × 2 max pooling is applied following every two convolutional layers in the trunk. The outputs of the model are the matrices $M_0, \cdots, M_3$, which constitute the partition tree $P$. Each branch predicts one matrix from the lowest to the highest level of the partition tree, as shown in Fig. 4.

Fig. 4. H-FCN model architecture.

The inputs to each branch are the features produced by the convolutional layers, processed by ReLU, batch normalization and max pooling, at each depth of the trunk. At the first layer of each branch, a convolutional layer having filters with spatial kernel dimensions of 4 × 4 and stride 4 is used, making it possible to isolate the features corresponding to adjacent blocks at that level. The QP value is fed at the input of the second convolutional layer of each branch by concatenating a matrix of identical elements of value $Q$ to the output of the first convolutional layer (after ReLU and batch normalization operations). The size of this matrix at each branch is chosen to match the spatial dimensions of the output of the first convolutional layer of that branch, which allows the two to be concatenated along their third dimension. Since the features that correspond to adjacent blocks are isolated by the first convolutional layer of each branch, feeding in the QP value in this manner also ensures that there is a copy of the QP value corresponding to the prediction of each block at the subsequent layers of that level.

Unlike CNNs that make global predictions using fully connected output layers, we need to make structured local predictions at each branch, except for the last one (at the topmost level of the partition tree).
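The two mechanisms described above for the first layers of a branch, namely block isolation by a 4 × 4 convolution with stride 4 and QP injection by concatenating a constant plane, can be illustrated with a small NumPy sketch. The feature-map size, channel counts and helper names (`conv4x4_stride4`, `append_qp`) are assumptions chosen for illustration and are not taken from Fig. 4; bias terms and batch normalization are omitted.

```python
import numpy as np


def conv4x4_stride4(features: np.ndarray, kernels: np.ndarray) -> np.ndarray:
    """4x4 convolution with stride 4 (no padding) on an H x W x C map.

    kernels has shape (4, 4, C, K). Because the kernel size equals the
    stride, every output location sees exactly one non-overlapping patch.
    """
    h, w, c = features.shape
    patches = features.reshape(h // 4, 4, w // 4, 4, c).transpose(0, 2, 1, 3, 4)
    return np.tensordot(patches, kernels, axes=([2, 3, 4], [0, 1, 2]))


def append_qp(features: np.ndarray, qp: float) -> np.ndarray:
    """Concatenate a constant QP plane along the channel (third) axis."""
    plane = np.full(features.shape[:2] + (1,), qp, dtype=features.dtype)
    return np.concatenate([features, plane], axis=-1)


rng = np.random.default_rng(0)
trunk_out = rng.standard_normal((32, 32, 8))   # illustrative trunk features
kernels = rng.standard_normal((4, 4, 8, 16))   # illustrative filter bank
branch = np.maximum(conv4x4_stride4(trunk_out, kernels), 0.0)  # ReLU
branch_with_qp = append_qp(branch, qp=40.0)
print(branch.shape, branch_with_qp.shape)  # (8, 8, 16) (8, 8, 17)
```

Each of the 8 × 8 output locations depends on a single 4 × 4 patch of the input, so features belonging to adjacent blocks never mix, and every location carries its own copy of the QP value in the extra channel.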
The subsequent output layers of these branches are designed as convolutional layers having 1 × 1 × M filters, where M is the "depth" dimension of the input to the layers. This design serves to maintain isolation between features that correspond to adjacent blocks by forming local connections to the outputs of the previous layers. At the last branch, the spatial dimension of the input to the first 1 × 1 convolutional layer is also 1 × 1, which makes it functionally equivalent to a fully connected layer. Finally, the output of the last 1 × 1 convolutional layer of each branch is fed to a softmax function, yielding a set of class probabilities.

Using 1 × 1 × M convolutions on the features derived from each block at a particular convolutional layer of each branch is akin to having a fully connected layer (in the "depth" dimension) for every block at the same level while sharing the weights. This design has two important consequences. First, it increases the inference and training speed as compared to using fully connected layers because of the greatly reduced number of connections. Second, it further speeds up convergence during training by reducing the number of trainable parameters and by "augmenting" the data, since blocks of the same size but at different spatial locations within a superblock are used to train the convolution parameters.

It should be noted that, although we experimented with larger model architectures, we found the architecture of Fig. 4 to be best suited to the task, as elaborated later in Section V. We will refer to the model as shown in Fig. 4 as the "H-FCN model" in the rest of the paper, unless otherwise stated.

B. Loss Function

Since there are four possible types of merges for each group of four blocks at each level, predicting the elements of the matrices $M_0, \cdots, M_3$ is a multi-class classification with four classes. By slicing each of the four matrices into their constituent elements, we obtain a total of 85 outputs.
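The count of 85 follows from the matrix sizes given in Section III-A: 8 × 8, 4 × 4, 2 × 2 and 1 × 1 elements for $M_0$ to $M_3$. The slicing can be sketched as below; the helper name `slice_outputs` and the uniform placeholder probabilities are illustrative assumptions, not the authors' Keras layers.

```python
import numpy as np

NUM_CLASSES = 4
# Spatial sizes of M0..M3: 8x8, 4x4, 2x2 and 1x1 merge decisions.
LEVEL_SIZES = (8, 4, 2, 1)


def slice_outputs(level_probs):
    """Flatten per-level class-probability maps into one list of outputs.

    level_probs holds one array of shape (n, n, NUM_CLASSES) per level;
    each returned item is the class-probability vector of one element.
    """
    outputs = []
    for probs in level_probs:
        outputs.extend(probs.reshape(-1, NUM_CLASSES))
    return outputs


# Placeholder predictions: uniform probabilities at every element.
uniform = [np.full((n, n, NUM_CLASSES), 0.25) for n in LEVEL_SIZES]
outputs = slice_outputs(uniform)
print(len(outputs))  # 64 + 16 + 4 + 1 = 85
```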
A categorical cross-entropy loss is then applied to each output:

$$L_q(w) = -\frac{1}{N} \sum_{i=1}^{N} \sum_{j=1}^{K} y_{i,j} \log\left(p^{q}_{i,j}(w)\right), \qquad q = 1, \cdots, 85 \tag{1}$$

where $N$ is the batch size, $K = 4$ is the number of classes, $w$ represents the weights of the network, $y_{i,j}$ is the one-hot ground truth label of the $i$th sample for the $j$th class at that output, and $p^{q}_{i,j}(w)$ is the softmax probability of the $i$th sample, predicted for the $j$th class at the $q$th output of the network. The net loss of the network is then $L(w) = \sum_{q=1}^{85} L_q(w)$.

C. Integration with VP9 Encoder

We integrated the trained H-FCN model with the reference VP9 encoder implementation, available in the libvpx package [38]. By this integration, we replaced the RDO based partition search of the VP9 encoder with the H-FCN model prediction, which, as it turns out, makes intra encoding much faster. While encoding each frame, the partition trees of all 64 × 64 superblocks completely contained within the frame boundaries were collectively predicted as a batch, using the integrated model.

Since the different levels of the superblock partition are modeled independently of one another, the predictions of any two adjacent levels might be mutually inconsistent, producing an invalid partition tree. For example, a "full merge" may be predicted for a group of four 16 × 16 blocks at level 2, whereas the four 8 × 8 subblocks within one of these 16 × 16 blocks can be predicted to have no merge in level 1. This is inconsistent, because in this case the merger of four 16 × 16 blocks indicates that a 32 × 32 block is not split, and thus the 16 × 16 subblocks within it cannot have a split either, although a split is required by the "no merge" prediction of the corresponding group of 8 × 8 blocks. Thus, it may be necessary to correct the predictions in $P$ to obtain a valid partition tree that can be used while encoding. We devised a top-down correction procedure that is illustrated in Fig.
5, where the colored regions are inconsistent with predictions at other levels. Consistency is enforced between adjacent levels by correcting any of the other three merge predictions to "full merge," at all such blocks at the lower of the two levels enclosed by a larger block predicted to have "full merge" at the higher level. Beginning with level 2, predictions at each level are successively corrected to be consistent with its corrected next higher level in this manner. In other words, first $M_2$ at level 2 is corrected to be consistent with $M_3$; let the corrected matrix at level 2 be $M'_2$. At the next step, $M_1$ is corrected to be consistent with $M'_2$ to obtain $M'_1$, and so on. At the end of this procedure, we have $P' = \{M_3, M'_2, M'_1, M'_0\}$, where $P'$ is a valid partition tree.

Fig. 5. Top-down inconsistency correction.

Although the predictions made by the H-FCN are independent at each level, they were found to be remarkably consistent across levels. Our experiments revealed that only about 5.17% of the predicted superblock partition trees from the validation set were inconsistent, and thus needed the aforementioned correction. This suggests that although the consistency requirement was not explicitly enforced, the H-FCN model implicitly learns to make consistent merge predictions most of the time. The motivation behind our choice of the inconsistency correction approach is explained in Section V-D.

Predictions in $P'$ are then ordered to form a preorder traversal of the partition tree from left to right and top to bottom, which corresponds to the order in which the blocks are encoded in VP9. The predictions thus ordered are then recursively used to replace the RDO module of the encoder to decide the partitions of all the superblocks except those extending beyond frame boundaries. Since these boundary cases were not included in our database, we simply invoke the RDO module to encode them.

V. EXPERIMENTAL RESULTS

In order to experimentally evaluate the performance of our H-FCN model relative to RDO, we compared the encoding time and RD performance of the two approaches on a set of test video sequences at three different resolutions. To further validate the efficacy of our approach, we also extended the comparison to include the highest recommended speed level of VP9 for our encoding configuration (cpu-used 4 at good quality).

Fig. 6. H-FCN loss with training progress.

A. System Settings

We developed and trained our H-FCN model using TensorFlow (version 1.12) with the Keras API. The model was trained on a system with an Intel Core i7-6700K CPU @4 GHz, with 8 cores and 32 GB RAM running a 64 bit Ubuntu 16.04 operating system. The training was accelerated with a Nvidia Titan X Pascal GPU with 12 GB of memory. Since the loss is not calculated during inference, the matrix slicing operation to generate multiple outputs is unnecessary, and removing it reduces the inference time by a significant fraction in our Keras implementation. Thus, we removed the slicing layers during inference as indicated by the dashed box in Fig. 4, directly obtaining the matrices $M_0, \cdots, M_3$ as the network outputs. The trained Keras model was then converted to a TensorFlow computation graph prior to deployment in the VP9 encoder.

Integration of the trained model with the reference VP9 encoder was done using libvpx version 1.6.0, which is the same as the one used to encode the VP9 bitstreams to create the partition trees in our database. Since the libvpx implementation of VP9 is in C language, we used the TensorFlow C API, which was compiled with support for optimizations that use Intel's Math Kernel Library for Deep Neural Networks. This enabled us to seamlessly embed our model within the VP9 encoder.
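As an illustration of the top-down inconsistency correction of Section IV-C, which runs on the model outputs inside the encoder, the following NumPy sketch follows the textual description literally: wherever a higher-level element is a "full merge," the four elements it encloses at the next lower level are set to "full merge." The class index `FULL_MERGE = 3` is a hypothetical choice, since the actual label assignment is defined in Fig. 3, and the function name `correct_top_down` is likewise an assumption rather than the authors' implementation.

```python
import numpy as np

FULL_MERGE = 3  # hypothetical label; the real class indices are given in Fig. 3


def correct_top_down(m0, m1, m2, m3, full_merge=FULL_MERGE):
    """Make lower-level merge predictions consistent with higher levels.

    Inputs are integer class matrices of shapes 8x8, 4x4, 2x2 and 1x1.
    Each level is corrected against the already corrected level above it.
    """
    corrected = [np.array(m3)]
    for lower in (m2, m1, m0):
        upper = corrected[-1]
        # Upsample the full-merge mask by 2 so it aligns with the lower level.
        mask = np.repeat(np.repeat(upper == full_merge, 2, axis=0), 2, axis=1)
        corrected.append(np.where(mask, full_merge, np.asarray(lower)))
    m3c, m2c, m1c, m0c = corrected
    return m0c, m1c, m2c, m3c


# Example: one 32x32 block is predicted as a full merge at level 2,
# while the lower levels predict class 0 everywhere.
pred_m3 = np.array([[0]])
pred_m2 = np.array([[FULL_MERGE, 0], [0, 0]])
pred_m1 = np.zeros((4, 4), dtype=int)
pred_m0 = np.zeros((8, 8), dtype=int)
fixed_m0, fixed_m1, fixed_m2, fixed_m3 = correct_top_down(
    pred_m0, pred_m1, pred_m2, pred_m3
)
print(fixed_m1)
```

In this example the top-left 2 × 2 group of `fixed_m1` and the top-left 4 × 4 group of `fixed_m0` become full merges, while all other predictions are left unchanged.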
The TensorFlow C API was also found to be considerably faster than both Keras and TensorFlow's native Python API for inference, which is an added advantage of this choice. While a GPU was used for training, all encoding tests were performed without a GPU on a single core Intel Core i7-7500U CPU @2.70GHz with 8 GB of RAM running 64 bit Ubuntu 16.04.

Fig. 7. Superblock partitions predicted by the trained H-FCN model compared with ground truth partitions on four superblocks encoded with different QP values: (a) QP=25, (b) QP=36, (c) QP=42, (d) QP=63.

TABLE II
PREDICTION ACCURACY OF H-FCN MODEL

| # of parameters | Training (%), Level 0 | Training (%), Level 1 | Training (%), Level 2 | Training (%), Level 3 | Validation (%), Level 0 | Validation (%), Level 1 | Validation (%), Level 2 | Validation (%), Level 3 |
|---|---|---|---|---|---|---|---|---|
| 336,608 | 91.96 | 86.63 | 85.70 | 90.54 | 91.43 | 85.70 | 84.60 | 90.80 |
| 70,408 | 91.89 | 86.46 | 85.33 | 90.23 | 91.34 | 85.53 | 84.28 | 90.81 |
| 26,336 | 91.73 | 86.07 | 84.42 | 89.42 | 91.18 | 85.13 | 83.47 | 90.27 |

B. Training Details and Hyperparameters

The H-FCN model as depicted in Fig. 4 has 26,336 trainable parameters and executes ∼10.8M floating point operations (FLOPs) per sample, at a per sample inference time of ∼1 ms, where each sample in the inference batch is a 64 × 64 superblock. We also experimented with two larger versions of the model, having 336,608 and 70,408 trainable parameters, which were identical to that in Fig. 4, except that we used a larger number of filters in certain layers.¹ The number of training and validation samples are as mentioned in Table I. The H-FCN model was trained with a batch size of 128 using the Adam optimizer [43], with a step size of 0.001 for over $10^7$ iterations. The weights of each layer were initialized with randomly drawn samples from uniform distributions determined by the size of the inputs to each layer [44]. Fig.
6 illustrates the variation of the training and validation losses of the H-FCN model against the number of training iterations, where the validation loss was ev aluated on 10 5 samples from the validation set at the end of ev ery 1000 iterations. C. Prediction P erformance W e ev aluated the prediction accuracy of the trained model on the v alidation set. The average accuracies of the model at 1 The architectures of the larger models are provided at https://drive.google. com/file/d/1nDP42TjoeZhoW AlfhGlHlCMi04- CmKUE/view?usp=sharing the four lev els of prediction were ev aluated on 10 5 randomly drawn samples of the v alidation set, and are summarized in T able II. The corresponding performance on an equal number of random samples from the training set is also provided for reference. In T able II, we also include the performance results of the tw o lar ger models with 336 , 608 and 70 , 408 parameters respectiv ely , to show that our model design allows for smaller architectures with no significant impairment of prediction accuracy . From T able II, we observe that the maximum decline in accuracy on the validation set between the largest and the smallest models was 1.13%, which occured at level 2. The impact of this decline in accuracy on the BD-rate is examined in Section V -D. The partitions predicted by the H-FCN model are visualized in Fig. 7, against the corresponding ground truth partitions, on four randomly selected superblocks encoded at different QP values from the validation set, with the 64 × 64 superblocks shown enlarged for visual clarity . These visualizations serve to demonstrate that the global structure and density of the superblock partitions predicted by our H-FCN model are in good agreement with ground truth, although some local blocks may be partitioned differently between the two. D. 
Encoding P erformance Although the encoding time can be reduced by predicting the block partitions using a trained model instead of con- 8 IEEE TRANSA CTIONS ON IMA GE PR OCESSING T ABLE III E N CO D I N G P E RF O R M AN C E O F H - FC N M O DE L Sequence V ideo Source Resolution # of frames ∆ T (%) BD-rate (%) 336,608 70,408 26,336 336,608 70,408 26,336 Sintel trailer Blender 1920 × 1080 300 -0.1 27.8 56.0 2.78 2.78 3.39 P edestrian ar ea TUM 375 36.6 41.6 61.8 2.08 2.12 2.45 Sunflower TUM 500 18.8 34.4 55.0 2.61 2.76 3.07 Cr owd run VQEG 500 45.2 49.7 65.3 0.99 1.13 1.16 Ducks takeoff VQEG 500 43.6 48.6 67.9 1.42 1.55 1.72 Narrator Netflix El fuente 300 36.6 55.8 73.3 0.32 0.25 0.58 F ood market Netflix El fuente 300 37.0 46.4 68.5 1.51 1.50 1.63 T oddler fountain Netflix Chimera 420 47.8 48.9 70.2 1.37 1.35 1.33 Rainr oses EBU 500 57.9 64.2 73.6 0.83 0.86 0.92 Kidssoccer EBU 500 74.6 76.5 83.5 0.65 0.59 0.74 A verage - - 39.8 49.4 67.5 1.46 1.49 1.70 Big buc k bunny Blender 1280 × 720 300 37.6 55.2 69.3 2.26 2.22 3.21 P ark run TUM 504 24.0 50.4 65.2 1.29 2.06 1.74 Shields TUM 504 54.2 62.9 69.3 1.61 1.88 1.73 Into tree VQEG 500 52.3 69.4 77.0 1.10 1.23 1.27 Old town cross VQEG 500 59.4 67.8 77.5 0.38 0.71 0.82 Cr osswalk Netflix El fuente 300 42.4 63.3 74.4 0.91 1.07 1.32 T ango Netflix El fuente 294 35.8 49.6 75.4 1.52 1.61 1.73 Driving PO V Netflix Chimera 500 35.6 55.9 70.1 1.25 1.24 1.46 Dancers Netflix Chimera 500 25.5 49.9 71.9 3.08 1.68 2.47 V egicandle EBU 500 47.1 54.0 71.6 1.47 1.61 1.74 A verage - - 41.4 57.8 72.2 1.49 1.53 1.75 Elephant’ s dr eam Blender 960 × 540 545 37.6 54.7 59.9 1.68 1.72 1.82 Eur o T ruck Simulator 2 T witch 300 48.0 68.0 73.1 1.65 1.63 1.69 Station TUM 313 64.0 65.4 80.7 2.01 2.08 2.27 Rush hour TUM 500 27.3 50.3 66.4 1.71 1.92 1.98 T ouchdown pass VQEG 570 45.2 63.7 72.8 1.45 1.49 1.62 Snow mnt VQEG 570 35.1 50.8 64.6 1.09 1.05 1.04 Roller coaster Netflix El fuente 500 46.1 53.6 73.9 1.40 1.46 1.68 Dinner scene Netflix 
Chimera 500 23.9 54.0 65.7 1.48 1.38 1.70 Boxing practice Netflix Chimera 254 43.1 58.4 68.6 1.43 1.42 1.50 Meridian Netflix Meridian 500 42.4 57.9 69.6 1.36 1.46 1.47 A verage - - 41.3 57.7 69.5 1.53 1.56 1.68 Overall average 40.8 55.0 69.7 1.49 1.53 1.71 ducting RDO-based exhaustiv e search, the RD performance of learned models may suf fer due to incorrect partition pre- dictions. Thus, to ev aluate the performance of our trained model, it is also necessary to assess its RD performance with respect to that of the reference RDO. Accordingly , we encoded sev eral test video sequences with the original VP9 encoder using the RDO based partition search, and also with the modified VP9 encoder using the integrated H-FCN model to conduct partition prediction. The test video sequences were obtained as raw videos from multiple publicly av ailable sources commonly used for ev aluating video codecs. 2 All of the test sequences were con verted to the YCbCr 4:2:0 8- bit format prior to encoding. The lengths of the sequences varied between 254 to 570 frames. Sequences which were originally longer were clipped to less than 550 frames (the number of frames used for each test sequence are reported in T able III). As mentioned earlier in Section III-B, our model was jointly trained on three dif ferent spatial resolutions. For each resolution, the test sequences chosen were at the same or higher resolution. The sequences that were originally at higher resolutions were downsampled to the required resolution using Lanczos resampling. A set of fiv e internal QP values gi ven by { 15 , 31 , 47 , 70 , 99 } (corresponding to external QP values of { 20 , 30 , 35 , 40 , 45 } 2 Our test video sequences were sourced from https://media.xiph.org/video/ derf/ and https://tech.ebu.ch/hdtv/hdtv test- sequences. respectiv ely) was selected to represent a practical quality range of intra-frames used in adaptiv e streaming. 
Each sequence was then encoded at these five QP values in one pass, using constant quality intra-mode, one tile per frame, speed level 1 and the good quality setting. These settings were chosen to be compatible with the encoding configuration used for our database, as mentioned in Section III-B, although our model can equally be trained for other configurations and can be parallelized over multiple tiles. The RDO based VP9 encoder at these settings thus formed the baseline for our approach. We used the same encoding settings as the baseline for the modified encoder with the integrated H-FCN model.

The percentage speedup of our method with respect to the RDO baseline for a test sequence is calculated as:

$$\Delta T = \frac{T_{\text{RDO}} - T_{\text{H-FCN}}}{T_{\text{RDO}}} \times 100 \qquad (2)$$

where $T_{\text{RDO}}$ and $T_{\text{H-FCN}}$ are the total times taken to encode the sequence at all five QP values with the RDO baseline encoder and the H-FCN integrated encoder, respectively. Since our model is integrated with the encoder, $T_{\text{H-FCN}}$ includes the total inference time of the H-FCN model on the superblock batches from all of the frames of the video sequence to be encoded, as well as the encoding time without the RDO procedures for superblock partition decision. Thus, a positive value of $\Delta T$ represents a speedup with respect to the RDO. To measure the RD performance, we also computed the BD-rate relative to the RDO baseline, using peak signal-to-noise ratio (PSNR) as the distortion metric.

Table III reports the $\Delta T$ and BD-rate values of the test sequences when using the H-FCN models with 336,608, 70,408 and 26,336 parameters. The test videos in Table III comprise 30 distinct contents, equally divided between the three resolutions. In Table III, the first sequences of the $1920 \times 1080$ and $1280 \times 720$ categories, as well as the first two sequences of the $960 \times 540$ category, are CGI content.
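As a small worked example of Eq. (2), the sketch below sums per-QP encoding times into the two totals and evaluates the percentage speedup. The function names and the timing values are hypothetical and chosen only for illustration; they are not measurements from the paper.

```python
from typing import Sequence


def total_time(per_qp_seconds: Sequence[float]) -> float:
    """Total encoding time of one sequence over all tested QP values."""
    return float(sum(per_qp_seconds))


def speedup_percent(t_rdo: float, t_hfcn: float) -> float:
    """Eq. (2): positive values mean the H-FCN encoder is faster than RDO."""
    if t_rdo <= 0:
        raise ValueError("baseline encoding time must be positive")
    return (t_rdo - t_hfcn) / t_rdo * 100.0


# Hypothetical timings in seconds, one entry per QP in {15, 31, 47, 70, 99}.
rdo_times = [120.0, 110.0, 100.0, 90.0, 80.0]
# For the H-FCN encoder each entry is assumed to already include inference time.
hfcn_times = [36.0, 33.0, 30.0, 27.0, 25.5]

delta_t = speedup_percent(total_time(rdo_times), total_time(hfcn_times))
print(f"Delta T = {delta_t:.1f}%")
```

A negative result, such as the -0.1% entry for Sintel trailer with the largest model in Table III, indicates that the modified encoder was slower than the baseline on that sequence.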
As would be expected, the smallest model, with just 26,336 parameters, achieved the highest speedup over all the test videos. Although the model with 336,608 parameters achieved the highest accuracy on the validation set, we observe that for some test sequences, the smaller models achieved lower BD-rate values. The overall BD-rates achieved by the three models were 1.49%, 1.53% and 1.71%. The smallest model was thus found to maintain a reasonable BD-rate, while achieving the most speedup. All of the analysis that we provide was conducted using the smallest H-FCN model having 26,336 parameters, unless otherwise mentioned.

In order to show that the H-FCN model is able to generalize irrespective of the QP values chosen for evaluation within the range of the database, we also report the performance measures for an additional set of four internal QP values $\{10, 40, 60, 88\}$ in Table IV. The speedup and BD-rate values reported in Table IV are close to the corresponding values from Table III, indicating that the performance is consistent across the range of QP values used in the database. For each of the nine QP values in the combined set $\{10, 15, 31, 40, 47, 60, 70, 88, 99\}$, the average changes in encoding time, bitrate and PSNR with respect to the RDO baseline, denoted by $\Delta t$, $\Delta$bitrate and $\Delta$PSNR respectively, are also plotted in Fig. 8 for the three resolutions. The curves in Fig. 8 illustrate that a substantial speedup is obtained at every QP value, for a relatively small bitrate increase and PSNR reduction with respect to the RDO baseline. Although the encoding time generally decreased as the QP value increased, for both the RDO baseline and the proposed method, the average encoding time of the RDO baseline on the 540p sequences was found to increase at the higher end of the range of QP values, while the proposed method was still faster at these QPs.
Consequently, the average $\Delta t$ values for the 540p sequences increased at the higher end of the QP range, unlike the trend observed for the other two resolutions. Further, the $\Delta t$, $\Delta$bitrate and $\Delta$PSNR values obtained at the adjacent QP values tested are generally quite close. Subsequent results are hence reported using the initial set of five QP values.

TABLE IV
ENCODING PERFORMANCE ON ADDITIONAL QP VALUES

| Resolution | $\Delta T$ (%) | BD-rate (%) |
|---|---|---|
| 1080p | 69.0 | 1.80 |
| 720p | 67.8 | 1.37 |
| 540p | 71.9 | 1.41 |
| Overall | 70.7 | 1.61 |

We also evaluated the performance trade-off achieved by the top-down inconsistency correction scheme described in Section IV-C, by exploring an alternative approach of resolving inconsistencies, whereby the RDO was invoked to decide the partitioning of a superblock if its predicted partition tree was determined to be inconsistent. Among all approaches that could be devised to handle inconsistencies, assigning superblocks having inconsistent partition predictions to the RDO in this manner would ensure the best performance in terms of BD-rate, albeit at the possible expense of a decline in the speedup achieved. This trade-off is summarized in Table V, in terms of the average BD-rate and speedup of our inconsistency correction approach against those obtained when the RDO is employed to resolve inconsistencies, for each of the three resolutions considered in our work. By using the RDO to handle inconsistencies, an overall improvement of 0.4% in BD-rate was achieved at the expense of a 6.3% reduction in speedup on the sequences tested. Naturally, the improvement in RD performance that can be achieved by any other correction scheme is upper bounded by 0.4%, and more sophisticated correction approaches, such as those based on prediction probabilities, indeed achieved negligible gains. Thus, due to its simplicity, we found the top-down approach described in Section IV-C to be a good choice for inconsistency correction.
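The two ways of handling an inconsistent prediction that are compared here can be sketched as a simple dispatch. Everything in the sketch is an illustrative assumption rather than the authors' implementation: the label encoding, the level indexing (level 3 taken as the $64 \times 64$ root and level 0 as the $8 \times 8$ matrix), and in particular the consistency rule, since the actual rule and the correction procedure are defined in Section IV-C and are represented here only by the `correct` callback.

```python
from typing import Callable, Dict, List, Tuple

Matrix = List[List[int]]
Tree = Dict[int, Matrix]  # level -> square matrix of partition labels

# Hypothetical label encoding, assumed for illustration only.
NONE, HORZ, VERT, SPLIT = 0, 1, 2, 3


def blank_tree() -> Tree:
    """Four levels: level 0 is 8x8, level 1 is 4x4, level 2 is 2x2, level 3 is 1x1."""
    return {lvl: [[NONE] * (8 >> lvl) for _ in range(8 >> lvl)] for lvl in range(4)}


def is_consistent(tree: Tree) -> bool:
    """Assumed rule: a block may carry a partition only if its parent is SPLIT."""
    for level in (3, 2, 1):
        parent, child = tree[level], tree[level - 1]
        for r, row in enumerate(child):
            for c, label in enumerate(row):
                if label != NONE and parent[r // 2][c // 2] != SPLIT:
                    return False
    return True


def decide_partition(
    tree: Tree,
    correct: Callable[[Tree], Tree],
    run_rdo: Callable[[], Tree],
    use_rdo_fallback: bool,
) -> Tuple[Tree, str]:
    """Keep consistent predictions; otherwise correct them or defer to the RDO."""
    if is_consistent(tree):
        return tree, "predicted"
    if use_rdo_fallback:
        return run_rdo(), "rdo"
    return correct(tree), "corrected"


good = blank_tree()
good[3][0][0] = SPLIT   # root is split, so its 32x32 children may be partitioned
good[2][0][0] = HORZ

bad = blank_tree()
bad[2][0][0] = SPLIT    # a 32x32 child is split although the root is NONE

print(decide_partition(good, lambda t: t, blank_tree, True)[1])
print(decide_partition(bad, lambda t: t, blank_tree, True)[1])
print(decide_partition(bad, lambda t: t, blank_tree, False)[1])
```

Setting `use_rdo_fallback` to `True` corresponds to the alternative evaluated in this paragraph, in which only the superblocks with inconsistent predictions pay the cost of the RDO search.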
TABLE V
PERFORMANCE TRADE-OFF OF THE INCONSISTENCY CORRECTION APPROACH WITH RESPECT TO USING RDO TO RESOLVE INCONSISTENCIES

| Resolution | $\Delta T$ (%) Top-down | $\Delta T$ (%) RDO | BD-rate (%) Top-down | BD-rate (%) RDO |
|---|---|---|---|---|
| 1080p | 67.5 | 63.7 | 1.70 | 1.40 |
| 720p | 72.2 | 62.3 | 1.75 | 1.13 |
| 540p | 69.5 | 64.1 | 1.68 | 1.40 |
| Overall | 69.7 | 63.4 | 1.71 | 1.31 |

E. Comparison with VP9 Speed Levels

The VP9 encoder provides speed control settings which allow skipping certain RDO search options and using early termination for faster encoding at the expense of RD performance. Thus, it essentially works towards the same goal as our H-FCN based partition prediction design. VP9 has 9 speed levels designated by the numerals 0-8. To use a particular speed level, the cpu-used parameter is set to the corresponding number while encoding. The recommended speed levels for the best and good quality settings are 0-4, whereas levels 5-8 are reserved for use with the realtime quality setting. Speed level 0 is the slowest and is rarely used in practice, whereas speed level 1 provides a good quality versus speed trade-off.$^3$ As already pointed out earlier in this section, speed level 1 with the good quality setting is the baseline for our method, and the fastest encoding for this configuration occurs by setting the speed level to 4.

$^3$ See http://wiki.webmproject.org/ffmpeg/vp9-encoding-guide for recommended settings.

The experimental results in Table III reveal that our method is 69.7% faster than the baseline, with an increase of 1.71% in BD-rate in intra-mode. However, any approach that claims to accelerate VP9 encoding is only useful in practice if it performs better than the available speed control setting. Thus, we compared the performance of our model with that of speed level 4, which is the fastest speed level of VP9 intended for this configuration (i.e. with the good quality setting).

Fig. 8. Variation of $\Delta t$, $\Delta$bitrate and $\Delta$PSNR across the range of QP values at different resolutions.

TABLE VI
COMPARISON OF SPEEDUP VERSUS BD-RATE TRADEOFF OF OUR APPROACH WITH VP9 SPEED LEVEL 4

| Sequence | Res. | $\Delta T$ (%) speed 4 | $\Delta T$ (%) H-FCN | BD-rate (%) speed 4 | BD-rate (%) H-FCN | BD-PSNR (dB) speed 4 | BD-PSNR (dB) H-FCN |
|---|---|---|---|---|---|---|---|
| Sintel trailer | 1080p | 46.3 | 56.0 | 4.46 | 3.39 | -0.21 | -0.17 |
| Pedestrian area | 1080p | 62.8 | 61.8 | 2.94 | 2.45 | -0.12 | -0.10 |
| Sunflower | 1080p | 50.2 | 55.0 | 6.74 | 3.07 | -0.28 | -0.13 |
| Crowd run | 1080p | 62.8 | 65.3 | 2.30 | 1.16 | -0.21 | -0.11 |
| Ducks takeoff | 1080p | 63.2 | 67.9 | 1.88 | 1.72 | -0.18 | -0.17 |
| Narrator | 1080p | 59.5 | 73.3 | 3.72 | 0.58 | -0.13 | -0.02 |
| Food market | 1080p | 55.9 | 68.5 | 2.39 | 1.63 | -0.18 | -0.12 |
| Toddler fountain | 1080p | 61.7 | 70.2 | 1.73 | 1.33 | -0.14 | -0.11 |
| Rainroses | 1080p | 72.9 | 73.5 | 2.50 | 0.92 | -0.14 | -0.04 |
| Kidssoccer | 1080p | 85.1 | 83.5 | 0.86 | 0.74 | -0.10 | -0.08 |
| Average | 1080p | 62.0 | 67.5 | 2.95 | 1.70 | -0.17 | -0.10 |
| Big buck bunny | 720p | 58.4 | 69.3 | 7.92 | 3.21 | -0.51 | -0.21 |
| Parkrun | 720p | 61.1 | 65.1 | 3.63 | 1.74 | -0.46 | -0.22 |
| Shields | 720p | 70.4 | 69.3 | 2.84 | 1.73 | -0.24 | -0.14 |
| Into tree | 720p | 75.6 | 77.0 | 1.57 | 1.27 | -0.13 | -0.11 |
| Old town cross | 720p | 75.5 | 77.5 | 2.00 | 0.82 | -0.15 | -0.06 |
| Crosswalk | 720p | 71.8 | 74.4 | 3.75 | 1.32 | -0.15 | -0.05 |
| Tango | 720p | 62.4 | 75.3 | 2.39 | 1.73 | -0.11 | -0.08 |
| Driving POV | 720p | 62.6 | 70.1 | 2.00 | 1.46 | -0.16 | -0.12 |
| Dancers | 720p | 70.4 | 71.9 | 12.26 | 2.47 | -0.09 | -0.00 |
| Vegicandle | 720p | 74.1 | 71.6 | 2.85 | 1.74 | -0.14 | -0.08 |
| Average | 720p | 68.2 | 72.2 | 4.12 | 1.75 | -0.21 | -0.11 |
| Elephants dream | 540p | 59.2 | 59.9 | 2.13 | 1.82 | -0.18 | -0.16 |
| Euro Truck Simulator 2 | 540p | 72.0 | 73.1 | 1.89 | 1.69 | -0.23 | -0.20 |
| Station | 540p | 76.5 | 80.7 | 2.06 | 2.27 | -0.13 | -0.15 |
| Rush hour | 540p | 59.9 | 66.4 | 2.68 | 1.98 | -0.11 | -0.08 |
| Touchdown pass | 540p | 70.1 | 72.8 | 2.56 | 1.62 | -0.15 | -0.09 |
| Snow mnt | 540p | 65.2 | 64.6 | 2.31 | 1.04 | -0.30 | -0.14 |
| Roller coaster | 540p | 71.1 | 73.9 | 2.21 | 1.68 | -0.15 | -0.11 |
| Dinner Scene | 540p | 60.1 | 65.7 | 3.91 | 1.70 | -0.09 | -0.03 |
| Boxing practice | 540p | 65.8 | 68.6 | 2.03 | 1.50 | -0.14 | -0.10 |
| Meridian | 540p | 59.5 | 69.6 | 1.98 | 1.47 | -0.11 | -0.08 |
| Average | 540p | 65.9 | 69.5 | 2.38 | 1.68 | -0.16 | -0.12 |
| Overall average | | 65.4 | 69.7 | 3.15 | 1.71 | -0.18 | -0.11 |
Table VI summarizes the speedups, BD-rates, and BD-PSNRs of our method and those of speed level 4, where all quantities were computed with respect to the baseline. The results in Table VI reveal that on the sequences tested, our H-FCN based approach achieved a 4.3% higher speedup on average than did the RDO at speed level 4, while incurring a 1.44% smaller BD-rate penalty. Speed level 4 suffered an average BD-PSNR change of -0.18 dB, as compared to -0.11 dB for our method. Our learned model delivers greater speedup, while also yielding better RD performance than the available speed control setting of the VP9 codec operating in intra-mode. The computational efficiency of the H-FCN model, combined with the effectiveness of the partitions that it predicts while maintaining good RD performance, implies that it better optimizes this important trade-off than does the VP9 speed control mechanism.

Our approach consistently surpasses the RDO at speed level 4 in terms of speedup achieved over the common baseline, across the practical range of QP values for adaptive streaming considered in our work. To emphasize this point further, Fig. 9 plots the net speedup achieved on the test videos against the QP values, for each of the three spatial resolutions considered. In every instance, the net speedup obtained by using the speed level 4 setting of the VP9 encoder fell below that achieved by our system, over all QP values, although at 540p resolution and a QP value of 99, it was close to that of our method. This strongly supports the utility of our method for practical encoding scenarios that use a range of QP values, rather than a few specific ones.

Fig. 9. Plot of speedup achieved by H-FCN and RDO at speed 4 relative to baseline against QP value, for three spatial resolutions.
TABLE VII
PERFORMANCE COMPARISON OF ETH-CNN [22] AGAINST OUR APPROACH ON OUR TEST SET

| Sequence | Res. | $\Delta T$ (%) ETH-CNN | $\Delta T$ (%) H-FCN | BD-rate (%) ETH-CNN | BD-rate (%) H-FCN |
|---|---|---|---|---|---|
| Sintel trailer | 1080p | 83.1 | 74.6 | 6.40 | 5.00 |
| Pedestrian area | 1080p | 76.6 | 71.1 | 5.12 | 4.60 |
| Sunflower | 1080p | 79.4 | 73.9 | 2.99 | 2.65 |
| Crowd run | 1080p | 54.6 | 56.1 | 1.26 | 1.11 |
| Ducks takeoff | 1080p | 58.7 | 66.7 | 2.90 | 1.49 |
| Narrator | 1080p | 81.0 | 73.2 | 3.87 | 3.52 |
| Food market | 1080p | 60.0 | 59.9 | 2.02 | 1.97 |
| Toddler fountain | 1080p | 53.4 | 57.6 | 2.15 | 1.61 |
| Rainroses | 1080p | 69.8 | 70.1 | 4.05 | 3.23 |
| Kidssoccer | 1080p | 43.7 | 41.9 | 1.14 | 1.08 |
| Average | 1080p | 66.0 | 64.5 | 3.19 | 2.63 |
| Big buck bunny | 720p | 76.8 | 71.2 | 3.21 | 2.29 |
| Parkrun | 720p | 47.2 | 47.5 | 1.64 | 1.43 |
| Shields | 720p | 51.7 | 51.2 | 2.04 | 1.67 |
| Into tree | 720p | 68.1 | 65.9 | 1.89 | 1.35 |
| Old town cross | 720p | 57.2 | 53.9 | 1.57 | 1.44 |
| Crosswalk | 720p | 75.8 | 70.1 | 3.17 | 2.85 |
| Tango | 720p | 69.7 | 64.8 | 3.69 | 3.52 |
| Driving POV | 720p | 53.8 | 52.8 | 1.71 | 1.58 |
| Dancers | 720p | 84.4 | 74.2 | 4.96 | 4.84 |
| Vegicandle | 720p | 65.6 | 61.7 | 2.69 | 2.39 |
| Average | 720p | 65.6 | 61.7 | 2.69 | 2.39 |
| Overall average | | 65.8 | 63.1 | 2.94 | 2.51 |

F. HEVC Encoding Performance

In this section, we assess the performance of the H-FCN model in reducing the intra-mode encoding complexity of HEVC. The H-FCN model used for this purpose was identical to the model shown in Fig. 4, except that the first branch with 64 outputs was removed to account for the 3-level partition structure of CTUs into coding units (CUs) in the default configuration of the HM reference encoder [45] for HEVC in the intra mode. Also, the size of the last convolutional layer of each of the remaining three branches was changed from $1 \times 1 \times 4$ to $1 \times 1 \times 2$, since the split labels are binary for HEVC intra mode CUs. Thus, minimal changes were made to adapt the H-FCN model for HEVC partition prediction. The resulting model had 21,418 parameters, and was trained using the publicly available CPH-Intra database for HEVC introduced in [22]. The CPH-Intra database was created using four QP values $\{22, 27, 32, 37\}$ and has 2,446,725 and 143,925 sample CTUs for training and validation respectively [22].
The hyperparameters used for training were identical to the ones mentioned in Section V-B for VP9. Training the modified H-FCN model in the above manner enabled us to compare the performance of our model with that of the ETH-CNN model of [22] trained on the same database$^4$ for predicting the intra mode partition of CTUs into CUs. Although it might be possible to improve performance by also predicting the splits of the smallest $8 \times 8$ CUs into $4 \times 4$ prediction units (PUs), the CPH-Intra database does not include the corresponding split labels for $8 \times 8$ CUs, and thus our model does not make this prediction either, which allows for a fair comparison with [22].

The accuracy values obtained by the H-FCN model on the CPH-Intra validation set were 86.90%, 86.87% and 91.39% at levels 3, 2 and 1 respectively, while the corresponding accuracy values for the ETH-CNN model with 1,287,189 parameters were 90.98%, 86.42% and 80.42%. The average accuracy of the H-FCN model across all three levels was 88.38%, which was thus better than that of the much larger ETH-CNN model [22], which averaged 85.94% across all levels.

The set of test video sequences from Table III were then encoded at QP values $\{22, 27, 32, 37\}$ with the reference HEVC encoder from the HM 16.5 software [45] using the main profile in intra mode. Each test sequence was encoded thrice, using the RDO based CTU partition decisions of the HM encoder, the partitions predicted by the ETH-CNN model of [22] and our H-FCN model respectively. The 540p test sequences listed in Table III were excluded in this case, as the HM reference encoder requires the frame height to be a multiple of the minimum CU size; since the minimum CU size is 8 in the default configuration, this condition is not satisfied for 540p sequences.
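The two pieces of arithmetic in this passage can be checked with a short sketch. It assumes the averages are plain unweighted means of the three per-level accuracies, and that the encoder constraint amounts to the frame height being divisible by 8; the variable names are illustrative.

```python
from statistics import mean

# Per-level validation accuracies (%) quoted above, for levels 3, 2 and 1.
hfcn_acc = [86.90, 86.87, 91.39]
eth_cnn_acc = [90.98, 86.42, 80.42]

hfcn_mean = mean(hfcn_acc)
eth_cnn_mean = mean(eth_cnn_acc)
print(f"H-FCN mean accuracy:   {hfcn_mean:.2f}%")
print(f"ETH-CNN mean accuracy: {eth_cnn_mean:.2f}%")

# Frame heights of the three test resolutions, checked against a block size of 8.
MIN_BLOCK = 8
for height in (1080, 720, 540):
    remainder = height % MIN_BLOCK
    status = "ok" if remainder == 0 else f"not a multiple (remainder {remainder})"
    print(f"height {height}: {status}")
```

The mean of the rounded per-level values for H-FCN comes out marginally above the reported 88.38%, which is presumably due to rounding of the individual accuracies. The height check shows why only the 1080p and 720p sequences are usable here: 540 leaves a remainder of 4 when divided by 8.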
The comparison of the encoding performance of our approach with that of [22], provided in Table VII, indicates that our method attains a slightly smaller speedup than ETH-CNN on average, but is able to consistently achieve better BD-rates on all the test sequences. In this case, we performed the inference purely in Python to allow for a fair comparison with [22], which does not integrate the ETH-CNN model with the HM encoder, and performs an external inference step using Python. Integrating the trained model with the encoder using the optimized C API, as we have done for VP9, should further improve the speedup achieved by our approach. The encodes used to compute the RD performance of the approach of [22] were obtained using four different trained ETH-CNN models, each separately trained for one QP value, whereas our method was able to obtain better RD performance with a single H-FCN model jointly trained for all four QP values. The performance tradeoff achieved by our approach seems to be particularly promising for the 1080p sequences, where a 0.56% lower BD-rate was achieved for a 1.5% increase in encoding complexity by our approach. The higher speedup attained by the approach of [22] can be explained by the early termination scheme of the ETH-CNN model.
On average, our approach reduced encoding time by 63.1% for a 2.51% increase in BD-rate, while the method of [22] resulted in a 65.8% reduction in encoding time for a 2.94% increase in BD-rate.

$^4$ The implementation of ETH-CNN as well as pretrained models are provided at https://github.com/tianyili2017/HEVC-Complexity-Reduction

Using the same experimental setup that was just described, we also compared the encoding performance of our approach against that of [22] on the 8-bit sequences having resolutions of at least 720p from the JCT-VC test set [46], as reported in Table VIII. Such deep learning based complexity reduction schemes are more beneficial at higher resolutions, as indicated by the lower speedup values reported on resolutions smaller than 720p in [22]. Therefore, we only report the results on the highest three resolutions from the JCT-VC test set in Table VIII. On average, our approach achieved a lower BD-rate on this set of test sequences as well, while being marginally slower than the method of [22].

TABLE VIII
PERFORMANCE COMPARISON OF ETH-CNN [22] AGAINST OUR APPROACH ON THE JCT-VC TEST SET

| Class | Resolution | Sequence | $\Delta T$ (%) ETH-CNN | $\Delta T$ (%) H-FCN | BD-rate (%) ETH-CNN | BD-rate (%) H-FCN |
|---|---|---|---|---|---|---|
| A | 2560 × 1600 | PeopleOnStreet | 56.0 | 59.3 | 2.37 | 2.53 |
| A | 2560 × 1600 | Traffic | 62.8 | 64.4 | 2.55 | 2.63 |
| B | 1920 × 1080 | BasketBallDrive | 63.7 | 65.0 | 4.26 | 3.60 |
| B | 1920 × 1080 | BQTerrace | 54.1 | 54.0 | 1.84 | 1.78 |
| B | 1920 × 1080 | Cactus | 59.9 | 60.9 | 2.26 | 2.32 |
| B | 1920 × 1080 | Kimono | 79.5 | 74.9 | 2.59 | 1.90 |
| B | 1920 × 1080 | Parkscene | 62.52 | 64.62 | 1.96 | 1.80 |
| E | 1280 × 720 | FourPeople | 60.6 | 61.2 | 3.11 | 3.13 |
| E | 1280 × 720 | Johnny | 73.2 | 67.6 | 3.82 | 3.64 |
| E | 1280 × 720 | KristenAndSara | 70.9 | 66.1 | 3.46 | 3.26 |
| Average | | | 64.31 | 63.80 | 2.82 | 2.66 |

VI. CONCLUSION

We have developed and explained a deep learning based partition prediction method for VP9 superblocks, which is implemented using a hierarchical fully convolutional network.
We constructed a large database of VP9 superblocks and corresponding partitions on real streaming video content from the Netflix library, which we used to train the H-FCN model. The trained model was found to produce consistent partition trees yielding good prediction accuracy on VP9 superblocks. By integrating the trained H-FCN model into the VP9 encoder, we were able to show that VP9 intra-mode encoding time can be reduced by 69.7% on average, at the cost of an increase of 1.71% in BD-rate, by using the partitions predicted by the model instead of invoking an RDO-based partition search during encoding. Further, by selectively employing the RDO to decide the partitions of superblocks that are predicted with inconsistent partitions, the BD-rate was reduced to 1.31% with a corresponding speedup of 63.4%. The experiments we conducted comparing our model against the fastest recommended speed level of VP9 for the good quality setting further corroborated its effectiveness relative to the faster speed settings available in the reference encoder. This strongly suggests that the framework developed here offers an attractive alternative approach to accelerate VP9 intra-prediction partition search.

We also believe that our approach is applicable to other video codecs, such as HEVC and AV1, that employ hierarchical block partitioning at multiple levels. Our approach can be improved to avoid the inconsistency correction step, by deploying a simple mechanism, such as early termination, to enforce consistent partition predictions at all levels. Another immediate next step is to extend our approach to the prediction of inter-mode superblock partitions, a task which encounters the additional challenge of spatiotemporal inference.
Further, computing the partition tree, as well as other decisions made by the RDO, may be perceptually suboptimal, since they are generally driven by the goal of optimizing the mean squared error (MSE) with respect to the reference video. Since the MSE is generally a poor indicator of the perceptual quality of images and videos [47], other more perceptually relevant criteria could be considered. By instead optimizing the partition decision process in terms of a suitable perceptual quality model like SSIM [48], MS-SSIM [49], VIF [50], ST-RRED [51], or VMAF [52], [53], RD performance could potentially be further improved, which is a direction that we intend to explore as part of our future work. Finally, it would also be interesting to extend this approach to optimize the AV1 codec, which, due to its even deeper partition tree and more computationally intensive RDO search process, could benefit even more from the speedup offered by our system model.

ACKNOWLEDGMENT

We thank Anush K. Moorthy and Christos G. Bampis of Netflix for their help in creating the database.

APPENDIX A
COMPARISON WITH TOP-DOWN PARTITION PREDICTION

In order to substantiate the efficacy of our bottom-up hierarchical block merge prediction scheme, we designed a top-down version of the H-FCN model, to predict block splits instead of merges, starting with the split type of the $64 \times 64$ superblocks. While performing top-down prediction with the hierarchical model, spatial isolation between features from the adjacent smallest blocks needs to be maintained until the end of the trunk, which limits the number of max-pooling stages that can be used in the trunk. We thus modified the H-FCN architecture of Fig. 4 such that some of the max pooling layers were removed from the trunk and instead added to the branches, so that the output of each branch is a matrix of the desired size.
However, the number of convolutional filters used in a branch relative to the number of outputs predicted by that branch was the same as in Fig. 4. A diagram illustrating the architecture of the top-down H-FCN model is available at [54]. The top-down model has 31,456 parameters, which is comparable to the 26,336 parameters in the bottom-up model from Fig. 4 used in our work. The hyperparameters used when training the top-down model were the same as described in Section V-B.

TABLE IX
PERFORMANCE OF THE TOP-DOWN AND BOTTOM-UP MODEL DESIGNS

| Resolution | $\Delta T$ (%) Bottom-up (70,408) | $\Delta T$ (%) Bottom-up (26,336) | $\Delta T$ (%) Top-down (31,456) | BD-rate (%) Bottom-up (70,408) | BD-rate (%) Bottom-up (26,336) | BD-rate (%) Top-down (31,456) |
|---|---|---|---|---|---|---|
| 1080p | 49.4 | 67.5 | 47.4 | 1.49 | 1.70 | 1.60 |
| 720p | 57.8 | 72.2 | 53.3 | 1.53 | 1.75 | 1.63 |
| 540p | 57.7 | 69.5 | 40.2 | 1.56 | 1.68 | 1.63 |
| Overall | 55.0 | 69.7 | 46.8 | 1.53 | 1.71 | 1.62 |

The accuracy values of the trained top-down H-FCN model on $10^5$ samples from the validation set were 89.35%, 82.80%, 85.12%, and 91.47%, from level 3 to level 0 of the partition tree. As compared to the corresponding values of 90.27%, 83.47%, 85.13% and 91.18% obtained using the bottom-up model with 26,336 parameters, the accuracy improved at the lowest level, but declined at the other three levels. In fact, the reduction in accuracy was largest at the highest level of the partition tree. This is consistent with our observation that global semantics learned by the deeper convolutional layers aid the partition decisions on larger blocks.

The encoding performance of the top-down and bottom-up partition prediction approaches are compared in Table IX, where the numbers in parentheses denote the number of parameters of each model. The values in Table IX were calculated by encoding the set of test video sequences listed in Table III.
While the top-down model has a better BD-rate performance as compared to the bottom-up model with 26,336 parameters, the speedup it can achieve is considerably less. However, a higher average speedup and a lower BD-rate than those obtained by the top-down model can be achieved by using a bottom-up model with 70,408 parameters, as shown in the second and fifth columns of Table IX. If the number of parameters of the top-down model is reduced, its speedup will approach the value obtained by the bottom-up model with 70,408 parameters, but the BD-rate will increase further. This suggests that the hierarchical model is more efficient when predictions are made in a bottom-up fashion.

The key idea behind the bottom-up partition decision is to reduce the number of computations by introducing max pooling at each stage, which progressively reduces the spatial dimensions of the feature maps that propagate along the trunk of the network. However, as the top-down approach predicts the partitions of the smallest blocks at the last stage, the number of max pooling stages that can be introduced in the trunk is limited by the need to prevent the feature maps from the adjacent smallest blocks from combining. Hence, the prediction of the largest $8 \times 8$ matrix representing the split types of $8 \times 8$ blocks at the last branch requires a larger feature map to be carried through the trunk as compared to the bottom-up approach, which limits its speed. The results from Table IX support the intuition behind adopting a bottom-up merge prediction scheme. While other frameworks can be designed that are amenable to top-down split prediction, our hierarchical model is thus found to be more suitable for bottom-up merge prediction.
APPENDIX B
EVALUATION ON CPH-INTRA DATABASE

In order to evaluate the effect of the contents chosen to train the H-FCN model, we also created a VP9 partition database from the images provided as part of the CPH-Intra database introduced in [22] for HEVC intra-mode partition prediction. The CPH-Intra database is comprised of 2000 images, with 1700 images provided for training and the rest for validation and testing. As in our original database described in Section III, the contents were encoded at three different resolutions (1080p, 720p and 540p). The preprocessing stage was also the same as described in Section III, where at each encode resolution, all the images of the same or higher resolution were used as sources after Lanczos resampling (for contents that were originally at a higher resolution), and conversion to YCbCr 4:2:0 8-bit representation. However, in this case the content was a set of images, each with a distinct visual content, unlike a set of videos characterized by substantial temporal correlation between frames, as in our original database. Thus, we encoded each image individually as an intra-frame using the libvpx reference encoder for VP9 to ensure maximal utilization of the available content. The encoding configuration, and the process of extracting superblocks from the sources and partition trees from the encodes, were exactly the same as in Section III-B. The final database, which we refer to as the CPH-VP9 database, consists of 841,500 training samples.

The H-FCN model trained with this CPH-VP9 database was evaluated using the set of test sequences from Table III. At each resolution, we compared the average BD-rate obtained using the CPH-VP9 database and our larger database from Section III, as reported in Table X. The average speedup is essentially the same as in Table III, since the same H-FCN model was trained on both databases.
TABLE X
COMPARISON OF BD-RATE ON CPH-INTRA DATABASE AND OUR DATABASE

| Resolution | CPH-VP9 (%) | Ours (%) |
|------------|-------------|----------|
| 1080p      | 1.65        | 1.70     |
| 720p       | 1.58        | 1.75     |
| 540p       | 2.36        | 1.68     |
| Overall    | 1.86        | 1.71     |

As compared to the H-FCN model trained on our database, the model trained on the CPH-VP9 database achieved a lower average BD-rate on the 1080p and 720p resolutions, while its average BD-rate at 540p was higher. The overall BD-rate performance of the model trained with our database was a little better. Nevertheless, the H-FCN model was able to generalize well on the smaller CPH-VP9 database.

REFERENCES

[1] D. Mukherjee et al., “The latest open-source video codec VP9 - An overview and preliminary results,” in Proc. IEEE Picture Coding Symp., 2013, pp. 390–393.
[2] T. Wiegand, G. J. Sullivan, G. Bjontegaard, and A. Luthra, “Overview of the H.264/AVC video coding standard,” IEEE Trans. Circuits Syst. Video Technol., vol. 13, no. 7, pp. 560–576, 2003.
[3] G. J. Sullivan, J.-R. Ohm, W.-J. Han, and T. Wiegand, “Overview of the high efficiency video coding (HEVC) standard,” IEEE Trans. Circuits Syst. Video Technol., vol. 22, no. 12, pp. 1649–1668, 2012.
[4] J. Bankoski, P. Wilkins, and Y. Xu, “Technical overview of VP8, an open source video codec for the web,” in Proc. IEEE Int. Conf. Multimedia Expo, 2011, pp. 1–6.
[5] Y. Chen et al., “An overview of core coding tools in the AV1 video codec,” in Proc. IEEE Picture Coding Symp., 2018, pp. 41–45.
[6] B. Bross, J. Chen, and S. Liu, “Versatile video coding (draft 5),” JVET Document JVET-N1001, Geneva, CH, Mar. 2019.
[7] G. Toderici et al., “Full resolution image compression with recurrent neural networks,” in Proc. IEEE Conf. Comput. Vision Pattern Recogn., 2017, pp. 5306–5314.
[8] E. Agustsson, M. Tschannen, F. Mentzer, R. Timofte, and L. Van Gool, “Generative adversarial networks for extreme learned image compression,” arXiv preprint, 2018.
[9] J. Ballé, D. Minnen, S. Singh, S. J. Hwang, and N. Johnston, “Variational image compression with a scale hyperprior,” arXiv preprint arXiv:1802.01436, 2018.
[10] T. Chen, H. Liu, Q. Shen, T. Yue, X. Cao, and Z. Ma, “Deepcoder: a deep neural network based video compression,” in Proc. IEEE Visual Commun. Image Process., 2017, pp. 1–4.
[11] S. Kim et al., “Adversarial video compression guided by soft edge detection,” arXiv preprint, 2018.
[12] C.-Y. Wu, N. Singhal, and P. Krahenbuhl, “Video compression through image interpolation,” in Proc. Euro. Conf. Comput. Vision, 2018, pp. 416–431.
[13] Z. Zhao, S. Wang, S. Wang, X. Zhang, S. Ma, and J. Yang, “Enhanced bi-prediction with convolutional neural network for high efficiency video coding,” IEEE Trans. Circuits Syst. Video Technol., Oct. 2018 (Early Access).
[14] Y. Wang, X. Fan, C. Jia, D. Zhao, and W. Gao, “Neural network based inter prediction for HEVC,” in Proc. IEEE Int. Conf. Multimedia Expo, 2018, pp. 1–6.
[15] J. Liu, S. Xia, W. Yang, M. Li, and D. Liu, “One-for-all: Grouped variation network-based fractional interpolation in video coding,” IEEE Trans. Image Process., vol. 28, no. 5, pp. 2140–2151, May 2019.
[16] Z. Zhao, S. Wang, S. Wang, X. Zhang, S. Ma, and J. Yang, “CNN-based bi-directional motion compensation for high efficiency video coding,” in Proc. IEEE Int. Symp. Circuits Syst., 2018, pp. 1–4.
[17] C. Jia et al., “Content-aware convolutional neural network for in-loop filtering in high efficiency video coding,” IEEE Trans. Image Process., vol. 28, no. 7, pp. 3343–3356, Jul. 2019.
[18] Y. Zhang, T. Shen, X. Ji, Y. Zhang, R. Xiong, and Q. Dai, “Residual highway convolutional neural networks for in-loop filtering in HEVC,” IEEE Trans. Image Process., vol. 27, no. 8, pp. 3827–3841, Mar. 2018.
[19] J. Kang, S. Kim, and K. M. Lee, “Multi-modal/multi-scale convolutional neural network based in-loop filter design for next generation video codec,” in Proc. IEEE Int. Conf. Image Process., 2017, pp. 26–30.
[20] Y. Li, B. Li, D. Liu, and Z. Chen, “A convolutional neural network-based approach to rate control in HEVC intra coding,” in Proc. IEEE Visual Commun. Image Process., 2017, pp. 1–4.
[21] Z. Liu, X. Yu, Y. Gao, S. Chen, X. Ji, and D. Wang, “CU partition mode decision for HEVC hardwired intra encoder using convolution neural network,” IEEE Trans. Image Process., vol. 25, no. 11, pp. 5088–5103, Nov. 2016.
[22] M. Xu, T. Li, Z. Wang, X. Deng, R. Yang, and Z. Guan, “Reducing complexity of HEVC: A deep learning approach,” IEEE Trans. Image Process., vol. 27, no. 10, pp. 5044–5059, Oct. 2018.
[23] G. Bjontegaard, “Calculation of average PSNR differences between RD-curves,” Proc. of the ITU-T Video Coding Experts Group (VCEG) Thirteenth Meeting, Apr. 2001.
[24] H-FCN Based VP9 Intra Encoding. Accessed: May 27, 2019. [Online]. Available: https://github.com/Somdyuti2/H-FCN
[25] D. Ruiz-Coll, V. Adzic, G. Fernandez-Escribano, H. Kalva, J. L. Martinez, and P. Cuenca, “Fast partitioning algorithm for HEVC intra frame coding using machine learning,” in Proc. IEEE Int. Conf. Image Process., 2014, pp. 4112–4116.
[26] F. Duanmu, Z. Ma, and Y. Wang, “Fast CU partition decision using machine learning for screen content compression,” in Proc. IEEE Int. Conf. Image Process., 2015, pp. 4972–4976.
[27] T. Li, M. Xu, and X. Deng, “A deep convolutional neural network approach for complexity reduction on intra-mode HEVC,” in Proc. IEEE Int. Conf. Multimedia Expo, 2017, pp. 1255–1260.
[28] Y. Xian, Y. Wang, Y. Tian, Y. Xu, and J. Bankoski, “Multi-level machine learning-based early termination in VP9 partition search,” Electron. Imaging, vol. 2018, no. 2, pp. 1–5, 2018.
[29] Z. Yan et al., “HD-CNN: hierarchical deep convolutional neural networks for large scale visual recognition,” in Proc. IEEE Int. Conf. Comput. Vision, 2015, pp. 2740–2748.
[30] X. Zhu and M. Bain, “B-CNN: Branch convolutional neural network for hierarchical classification,” arXiv preprint, 2017.
[31] J. Long, E. Shelhamer, and T. Darrell, “Fully convolutional networks for semantic segmentation,” in Proc. IEEE Conf. Comput. Vision Pattern Recogn., 2015, pp. 3431–3440.
[32] V. Badrinarayanan, A. Kendall, and R. Cipolla, “SegNet: A deep convolutional encoder-decoder architecture for image segmentation,” IEEE Trans. Pattern Anal. Mach. Intell., vol. 39, no. 12, pp. 2481–2495, Dec. 2017.
[33] I. Laina, C. Rupprecht, V. Belagiannis, F. Tombari, and N. Navab, “Deeper depth prediction with fully convolutional residual networks,” in Proc. 4th Int. Conf. 3D Vision, 2016, pp. 239–248.
[34] L. Wang, L. Wang, H. Lu, P. Zhang, and X. Ruan, “Saliency detection with recurrent fully convolutional networks,” in Proc. Euro. Conf. Comput. Vision, 2016, pp. 825–841.
[35] J. Dai, Y. Li, K. He, and J. Sun, “R-FCN: Object detection via region-based fully convolutional networks,” in Proc. Adv. Neural Info. Process. Syst., 2016, pp. 379–387.
[36] L. Wang, W. Ouyang, X. Wang, and H. Lu, “Visual tracking with fully convolutional networks,” in Proc. IEEE Int. Conf. Comput. Vision, 2015, pp. 3119–3127.
[37] J. Johnson, A. Karpathy, and L. Fei-Fei, “DenseCap: Fully convolutional localization networks for dense captioning,” in Proc. IEEE Conf. Comput. Vision Pattern Recogn., 2016, pp. 4565–4574.
[38] libvpx: VP8/VP9 Codec SDK (version 1.6.0). Accessed: May 27, 2019. [Online]. Available: https://chromium.googlesource.com/webm/libvpx/
[39] A. Aaron, Z. Li, M. Manohara, J. De Cock, and D. Ronca. Per-title encode optimization. Accessed: May 27, 2019. [Online]. Available: https://medium.com/netflix-techblog/per-title-encode-optimization-7e99442b62a2
[40] M. Zeiler and R. Fergus, “Visualizing and understanding convolutional networks,” in Proc. Euro. Conf. Comput. Vision, 2014, pp. 818–833.
[41] X. Glorot, A. Bordes, and Y. Bengio, “Deep sparse rectifier neural networks,” in Proc. 14th Int. Conf. Artif. Intell. Statist., 2011, pp. 315–323.
[42] S. Ioffe and C. Szegedy, “Batch normalization: Accelerating deep network training by reducing internal covariate shift,” arXiv preprint arXiv:1502.03167, 2015.
[43] D. P. Kingma and J. Ba, “Adam: A method for stochastic optimization,” arXiv preprint arXiv:1412.6980, 2014.
[44] K. He, X. Zhang, S. Ren, and J. Sun, “Delving deep into rectifiers: Surpassing human-level performance on imagenet classification,” in Proc. IEEE Int. Conf. Comput. Vision, 2015, pp. 1026–1034.
[45] JCT-VC. HM Software. Accessed: Nov. 26, 2019. [Online]. Available: https://hevc.hhi.fraunhofer.de/svn/svn_HEVCSoftware/tags/HM-16.5/
[46] F. Bossen. (2013) Common test conditions and software reference configurations. Accessed: May 18, 2020. [Online]. Available: https://www.itu.int/wftp3/av-arch/jctvc-site/2010_07_B_Geneva/JCTVC-B300.doc
[47] Z. Wang and A. C. Bovik, “Mean squared error: Love it or leave it? A new look at signal fidelity measures,” IEEE Signal Process. Mag., vol. 26, no. 1, pp. 98–117, Jan. 2009.
[48] Z. Wang, A. C. Bovik, H. R. Sheikh, and E. P. Simoncelli, “Image quality assessment: from error visibility to structural similarity,” IEEE Trans. Image Process., vol. 13, no. 4, pp. 600–612, Apr. 2004.
[49] Z. Wang, E. P. Simoncelli, and A. C. Bovik, “Multiscale structural similarity for image quality assessment,” in Proc. 37th Asilomar Conf. Signals, Syst. Comput., vol. 2, 2003, pp. 1398–1402.
[50] H. R. Sheikh and A. C. Bovik, “Image information and visual quality,” IEEE Trans. Image Process., vol. 15, no. 2, pp. 430–444, Feb. 2006.
[51] R. Soundararajan and A. C. Bovik, “Video quality assessment by reduced reference spatio-temporal entropic differencing,” IEEE Trans. Circuits Syst. Video Technol., vol. 23, no. 4, pp. 684–694, 2012.
[52] Z. Li, A. Aaron, I. Katsavounidis, A. Moorthy, and M. Manohara. Toward A Practical Perceptual Video Quality Metric. Accessed: May 27, 2019. [Online]. Available: https://medium.com/netflix-techblog/toward-a-practical-perceptual-video-quality-metric-653f208b9652
[53] Z. Li et al. VMAF: The Journey Continues. Accessed: May 27, 2019. [Online]. Available: https://medium.com/netflix-techblog/vmaf-the-journey-continues-44b51ee9ed12
[54] Top-down H-FCN architecture. Accessed: Nov. 26, 2019. [Online]. Available: https://drive.google.com/file/d/1k4PnhKaUXLTmmiTo38BsnCBz09VgYIT7/view?usp=sharing
