Soft Computing approaches on the Bandwidth Problem

The Matrix Bandwidth Minimization Problem (MBMP) seeks a simultaneous reordering of the rows and the columns of a square matrix such that the nonzero entries are collected within a band of small width close to the main diagonal. The MBMP is an NP-complete problem with applications in many scientific domains, such as linear systems and artificial intelligence, and in real-life situations in industry, logistics, and information retrieval. Because such complex problems are hard to solve, any attempt to improve their solutions is beneficial. Genetic algorithms and ant-based systems are the Soft Computing methods used in this paper to solve a set of MBMP instances. Our approach is based on a learning agent-based model involving a local search procedure. The algorithm is compared with the classical Cuthill-McKee algorithm and with a hybrid genetic algorithm, using several instances from the Matrix Market collection. Computational experiments confirm a good performance of the proposed algorithms on the considered set of MBMP instances. On a Soft Computing basis, we also propose a new theoretical Reinforcement Learning model for solving the MBMP.

💡 Research Summary

The paper addresses the Matrix Bandwidth Minimization Problem (MBMP), a well‑known NP‑complete combinatorial optimization task that seeks a simultaneous permutation of rows and columns of a square matrix so that all non‑zero entries lie within a narrow band around the main diagonal. Because exact algorithms are impractical for realistic instances, the authors explore soft‑computing meta‑heuristics—specifically genetic algorithms (GA) and ant‑based systems (Ant Colony Optimization, ACO)—and propose a hybrid framework that integrates a local search procedure.
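The objective can be made concrete with a small helper. The sketch below assumes a dense list-of-lists matrix, and its helper name `bandwidth` is hypothetical. It computes β = max{|π(i) − π(j)| : a_ij ≠ 0} for a reordering π that is applied to both rows and columns.

```python
from typing import Optional, Sequence


def bandwidth(adj: Sequence[Sequence[float]], perm: Optional[Sequence[int]] = None) -> int:
    """Bandwidth of a square matrix after a symmetric reordering (hypothetical helper).

    perm[k] is the original row/column index moved to position k; the same
    permutation is applied to rows and columns. perm=None means no reordering.
    """
    n = len(adj)
    order = list(range(n)) if perm is None else list(perm)
    pos = [0] * n
    for new_index, old_index in enumerate(order):
        pos[old_index] = new_index  # where each original index ends up
    width = 0
    for i in range(n):
        for j in range(n):
            if adj[i][j] != 0:
                width = max(width, abs(pos[i] - pos[j]))
    return width


# A path 0-2-1-3 stored with a poor labelling (diagonal entries included).
A = [[1, 0, 1, 0],
     [0, 1, 1, 1],
     [1, 1, 1, 0],
     [0, 1, 0, 1]]
print(bandwidth(A))                # 2
print(bandwidth(A, [0, 2, 1, 3]))  # 1
```

Reordering the same matrix so that the path is laid out consecutively shrinks the band from width 2 to width 1. This is exactly the improvement the MBMP heuristics search for.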

First, the authors review classical approaches, notably the Cuthill‑McKee (CM) algorithm, which builds a breadth‑first ordering of the graph associated with the matrix. While CM is fast, its bandwidth reduction is modest on complex, large‑scale sparse matrices. To overcome this limitation, the paper designs a GA in which each chromosome encodes a single permutation applied to both rows and columns. Standard crossover (order‑based) and mutation (inversion) operators generate new candidates, and the fitness function is defined as the inverse of the resulting bandwidth, with a penalty term for highly discontinuous permutations. Crucially, after each GA generation a local search refines the best individuals by swapping adjacent positions (a simple 2‑opt‑style move). This accelerates convergence and helps the population escape shallow local minima.
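The classical baseline and the GA building blocks described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' code, and all function names are hypothetical. `cuthill_mckee` starts each breadth-first search from an unvisited vertex of minimum degree. That is a common simplification; library implementations usually pick a pseudo-peripheral start vertex. `order_crossover` is a simplified order crossover that fills the free slots left to right. The fitness `1 / (1 + bandwidth)` omits the discontinuity penalty, because its form is not specified.

```python
import random
from collections import deque
from typing import List, Sequence, Tuple

Matrix = Sequence[Sequence[float]]


def bandwidth(adj: Matrix, perm: Sequence[int]) -> int:
    """Max |pos(i) - pos(j)| over non-zero entries; perm[k] = index at position k."""
    pos = {v: k for k, v in enumerate(perm)}
    n = len(adj)
    return max((abs(pos[i] - pos[j]) for i in range(n) for j in range(n) if adj[i][j] != 0), default=0)


def neighbours(adj: Matrix, i: int) -> List[int]:
    return [j for j in range(len(adj)) if j != i and adj[i][j] != 0]


def cuthill_mckee(adj: Matrix) -> List[int]:
    """Breadth-first ordering, visiting neighbours by increasing degree."""
    n = len(adj)
    deg = [len(neighbours(adj, i)) for i in range(n)]
    visited = [False] * n
    order: List[int] = []
    for start in sorted(range(n), key=lambda v: deg[v]):  # one BFS per component
        if visited[start]:
            continue
        visited[start] = True
        queue = deque([start])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in sorted(neighbours(adj, v), key=lambda u: deg[u]):
                if not visited[w]:
                    visited[w] = True
                    queue.append(w)
    return order


def fitness(adj: Matrix, perm: Sequence[int]) -> float:
    # Assumed fitness: inverse bandwidth (+1 avoids division by zero); no penalty term.
    return 1.0 / (1 + bandwidth(adj, perm))


def order_crossover(p1: List[int], p2: List[int], rng: random.Random) -> List[int]:
    """Copy a random slice of p1, fill the other slots with p2's genes in p2's order."""
    n = len(p1)
    a, b = sorted(rng.sample(range(n), 2))
    child: List[int] = [-1] * n
    child[a:b + 1] = p1[a:b + 1]
    kept = set(p1[a:b + 1])
    filler = iter([g for g in p2 if g not in kept])
    return [g if g != -1 else next(filler) for g in child]


def inversion_mutation(perm: List[int], rng: random.Random) -> List[int]:
    """Reverse a random segment of the permutation."""
    a, b = sorted(rng.sample(range(len(perm)), 2))
    return perm[:a] + perm[a:b + 1][::-1] + perm[b + 1:]


def adjacent_swap_search(adj: Matrix, perm: Sequence[int]) -> Tuple[List[int], int]:
    """First-improvement hill climbing over swaps of neighbouring positions."""
    perm = list(perm)
    best = bandwidth(adj, perm)
    improved = True
    while improved:
        improved = False
        for k in range(len(perm) - 1):
            perm[k], perm[k + 1] = perm[k + 1], perm[k]
            width = bandwidth(adj, perm)
            if width < best:
                best, improved = width, True
            else:
                perm[k], perm[k + 1] = perm[k + 1], perm[k]  # undo the swap
    return perm, best
```

A full GA would combine these pieces in a loop. It would select parents by `fitness`, produce offspring with `order_crossover` and `inversion_mutation`, and polish the best offspring with `adjacent_swap_search`.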

In parallel, an ant‑based learning agent model is introduced. Each ant constructs a permutation incrementally; the probability of selecting the next row/column combines pheromone intensity (reflecting historical success) and a heuristic based on the density of non‑zero elements in the remaining rows/columns. After a complete permutation is built, the ant receives a reward proportional to the bandwidth reduction achieved, and pheromone trails are updated using a reinforcement‑learning‑inspired rule that balances exploitation and exploration. This ACO component supplies a diversified global search direction that complements the GA’s population dynamics.
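A minimal sketch of the ant construction step and pheromone update follows. It makes several assumptions, and every name is hypothetical. Pheromone `tau[p][v]` scores placing index `v` at position `p`. The heuristic is `1 / (1 + off-diagonal non-zeros in row v)`, which favours sparse rows. The reward is the bandwidth reduction relative to the identity ordering, normalised by that baseline. The update uses a standard evaporate-then-deposit ACO rule (with a small floor `tau_min`) rather than the paper's reinforcement-learning-inspired rule, whose exact form is not given here.

```python
import random
from typing import List, Sequence, Tuple

Matrix = Sequence[Sequence[float]]


def bandwidth(adj: Matrix, perm: Sequence[int]) -> int:
    pos = {v: k for k, v in enumerate(perm)}
    n = len(adj)
    return max((abs(pos[i] - pos[j]) for i in range(n) for j in range(n) if adj[i][j] != 0), default=0)


def construct_permutation(adj: Matrix, tau: List[List[float]], alpha: float, beta: float,
                          rng: random.Random) -> List[int]:
    """One ant fills positions 0..n-1, sampling with probability ~ tau^alpha * eta^beta."""
    n = len(adj)
    # Assumed heuristic: prefer rows with few off-diagonal non-zeros.
    eta = [1.0 / (1 + sum(1 for j in range(n) if j != i and adj[i][j] != 0)) for i in range(n)]
    remaining = list(range(n))
    perm: List[int] = []
    for p in range(n):
        weights = [(tau[p][v] ** alpha) * (eta[v] ** beta) for v in remaining]
        v = rng.choices(remaining, weights=weights, k=1)[0]
        perm.append(v)
        remaining.remove(v)
    return perm


def update_pheromone(tau: List[List[float]], ants: List[List[int]], adj: Matrix,
                     reference: int, rho: float, tau_min: float = 1e-3) -> None:
    """Evaporate, then deposit a reward proportional to each ant's bandwidth reduction."""
    n = len(tau)
    for p in range(n):
        for v in range(n):
            tau[p][v] = max(tau_min, (1.0 - rho) * tau[p][v])
    for perm in ants:
        reward = max(0, reference - bandwidth(adj, perm)) / max(1, reference)
        for p, v in enumerate(perm):
            tau[p][v] += reward


def aco_bandwidth(adj: Matrix, n_ants: int = 10, n_iter: int = 50, alpha: float = 1.0,
                  beta: float = 2.0, rho: float = 0.1, seed: int = 0) -> Tuple[List[int], int]:
    rng = random.Random(seed)
    n = len(adj)
    tau = [[1.0] * n for _ in range(n)]
    best_perm = list(range(n))
    best_bw = reference = bandwidth(adj, best_perm)  # identity ordering as the baseline
    for _ in range(n_iter):
        ants = [construct_permutation(adj, tau, alpha, beta, rng) for _ in range(n_ants)]
        update_pheromone(tau, ants, adj, reference, rho)
        for perm in ants:
            width = bandwidth(adj, perm)
            if width < best_bw:
                best_perm, best_bw = perm, width
    return best_perm, best_bw
```

The exponents `alpha` and `beta` and the evaporation rate `rho` are illustrative defaults. They correspond to the pheromone-versus-heuristic weighting and the evaporation sensitivity that the discussion below mentions.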

The authors also outline a theoretical reinforcement‑learning (RL) formulation for MBMP. The state space consists of partially built permutations, actions correspond to choosing the next index, and the reward is the change in bandwidth. A Q‑learning update scheme is proposed, laying groundwork for future deep‑RL policies that could directly output high‑quality permutations without explicit meta‑heuristic loops.
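The Q-learning update named in this formulation is `Q(s,a) ← Q(s,a) + α·[r + γ·max_a' Q(s',a') − Q(s,a)]`. The tabular sketch below is one possible reading, under assumptions that are not taken from the paper. States are tuples of already-placed indices. The reward for a placement is the negated increase of the bandwidth restricted to placed rows and columns, so the rewards of an episode sum to minus the final bandwidth. The hyperparameters are illustrative, and the function names are hypothetical. Because the state space grows factorially, this is only practical for tiny matrices. That limitation is one reason the text points toward deep-RL policies.

```python
import random
from typing import Dict, List, Sequence, Tuple

Matrix = Sequence[Sequence[float]]
State = Tuple[int, ...]  # indices placed so far, in order


def partial_bandwidth(adj: Matrix, state: State) -> int:
    """Bandwidth restricted to the rows/columns already placed."""
    pos = {v: k for k, v in enumerate(state)}
    return max((abs(pos[i] - pos[j]) for i in state for j in state if adj[i][j] != 0), default=0)


def actions(n: int, state: State) -> List[int]:
    placed = set(state)
    return [v for v in range(n) if v not in placed]


def q_learning(adj: Matrix, episodes: int = 3000, alpha: float = 0.5, gamma: float = 1.0,
               epsilon: float = 0.2, seed: int = 0) -> Dict[Tuple[State, int], float]:
    rng = random.Random(seed)
    n = len(adj)
    q: Dict[Tuple[State, int], float] = {}  # unseen pairs default to 0.0

    def value(state: State) -> float:
        # max_a' Q(s', a'); 0.0 at the terminal (complete) permutation
        return max((q.get((state, a), 0.0) for a in actions(n, state)), default=0.0)

    for _ in range(episodes):
        state: State = ()
        while len(state) < n:
            acts = actions(n, state)
            if rng.random() < epsilon:  # explore
                a = rng.choice(acts)
            else:  # exploit
                a = max(acts, key=lambda x: q.get((state, x), 0.0))
            nxt = state + (a,)
            # Assumed reward: negated bandwidth increase caused by this placement.
            r = -(partial_bandwidth(adj, nxt) - partial_bandwidth(adj, state))
            old = q.get((state, a), 0.0)
            q[(state, a)] = old + alpha * (r + gamma * value(nxt) - old)
            state = nxt
    return q


def greedy_permutation(adj: Matrix, q: Dict[Tuple[State, int], float]) -> List[int]:
    """Roll out the learned policy by always taking the highest-valued action."""
    n = len(adj)
    state: State = ()
    while len(state) < n:
        acts = actions(n, state)
        state = state + (max(acts, key=lambda a: q.get((state, a), 0.0)),)
    return list(state)
```

Initialising unseen Q-values to 0 is optimistic here, because every reward is non-positive. Untried actions therefore look attractive, which encourages exploration during the greedy steps.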

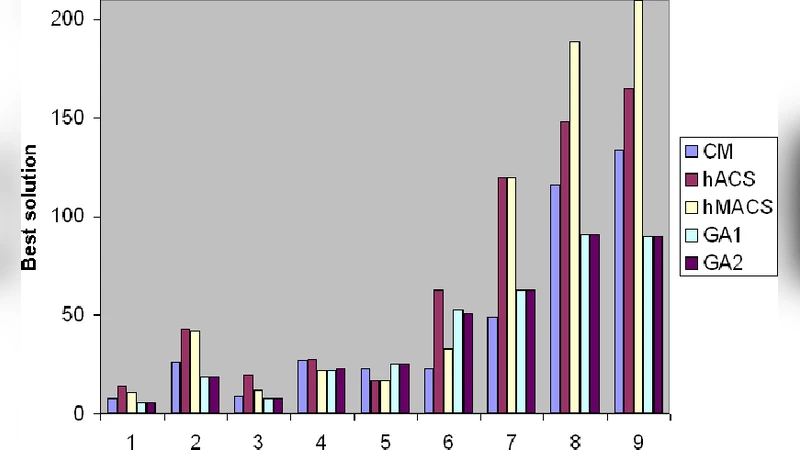

Experimental evaluation uses 30 benchmark matrices drawn from the Matrix Market collection, with dimensions from 100 to 2000 and densities (fraction of non‑zero entries) between 0.1 % and 5 %. Three algorithms are compared: (1) the classical CM, (2) a previously published hybrid GA (without local search), and (3) the newly proposed GA‑ACO hybrid with local search. Performance metrics include final bandwidth, runtime, and number of generations to convergence. Results show that the hybrid consistently outperforms CM, achieving an average bandwidth reduction of more than 15 % relative to CM and bandwidths 10‑20 % smaller than those of the earlier GA. Runtime analysis indicates that the inclusion of ACO accelerates convergence by roughly 20 % compared with the GA alone, especially on medium‑sized matrices (500–1000 rows). For the largest matrices (close to 2000 rows), memory consumption for pheromone tables becomes a bottleneck and leads to longer execution times, yet the bandwidth obtained remains superior to CM.
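To make the reported percentages easier to reproduce, the sketch below shows one way to compute matrix density and average relative bandwidth reduction. The function names are hypothetical. The study does not state whether it averages per-instance ratios or compares totals, so per-instance averaging is an assumption here.

```python
from statistics import mean
from typing import Sequence


def density_percent(nnz: int, n: int) -> float:
    """Share of non-zero entries in an n x n matrix, in percent."""
    return 100.0 * nnz / (n * n)


def mean_relative_reduction(baseline: Sequence[int], candidate: Sequence[int]) -> float:
    """Mean per-instance bandwidth reduction of `candidate` versus `baseline`, in percent.

    Assumes the average is taken over per-instance ratios (not a ratio of sums)
    and that every baseline bandwidth is positive.
    """
    if len(baseline) != len(candidate) or not baseline:
        raise ValueError("need two equally long, non-empty bandwidth lists")
    if any(b <= 0 for b in baseline):
        raise ValueError("baseline bandwidths must be positive")
    return mean(100.0 * (b - c) / b for b, c in zip(baseline, candidate))
```

Averaging per-instance ratios gives every matrix equal weight. A ratio of summed bandwidths would instead be dominated by the largest instances.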

The discussion highlights the synergy between global exploration (GA and ACO) and intensive exploitation (2‑opt local search). It also notes sensitivity to pheromone‑evaporation rates and heuristic weighting, suggesting that adaptive parameter control could further improve robustness. The RL model, while not experimentally validated in this work, offers a promising avenue for future research, potentially enabling end‑to‑end learning of permutation policies via deep neural networks.

In conclusion, the study demonstrates that soft‑computing techniques—particularly a hybrid GA‑ACO framework enriched with local search—provide significant practical gains for solving MBMP instances. The authors propose future work on pheromone compression for scalability, deep‑reinforcement‑learning policy networks, and multi‑objective extensions that consider additional criteria such as memory footprint and parallel execution efficiency.