I hear, I forget. I do, I understand: a modified Moore-method mathematical statistics course

Moore introduced a method for graduate mathematics instruction that consisted primarily of individual student work on challenging proofs (Jones, 1977). Cohen (1982) described an adaptation with less explicit competition suitable for undergraduate stu…

Authors: Nicholas Jon Horton

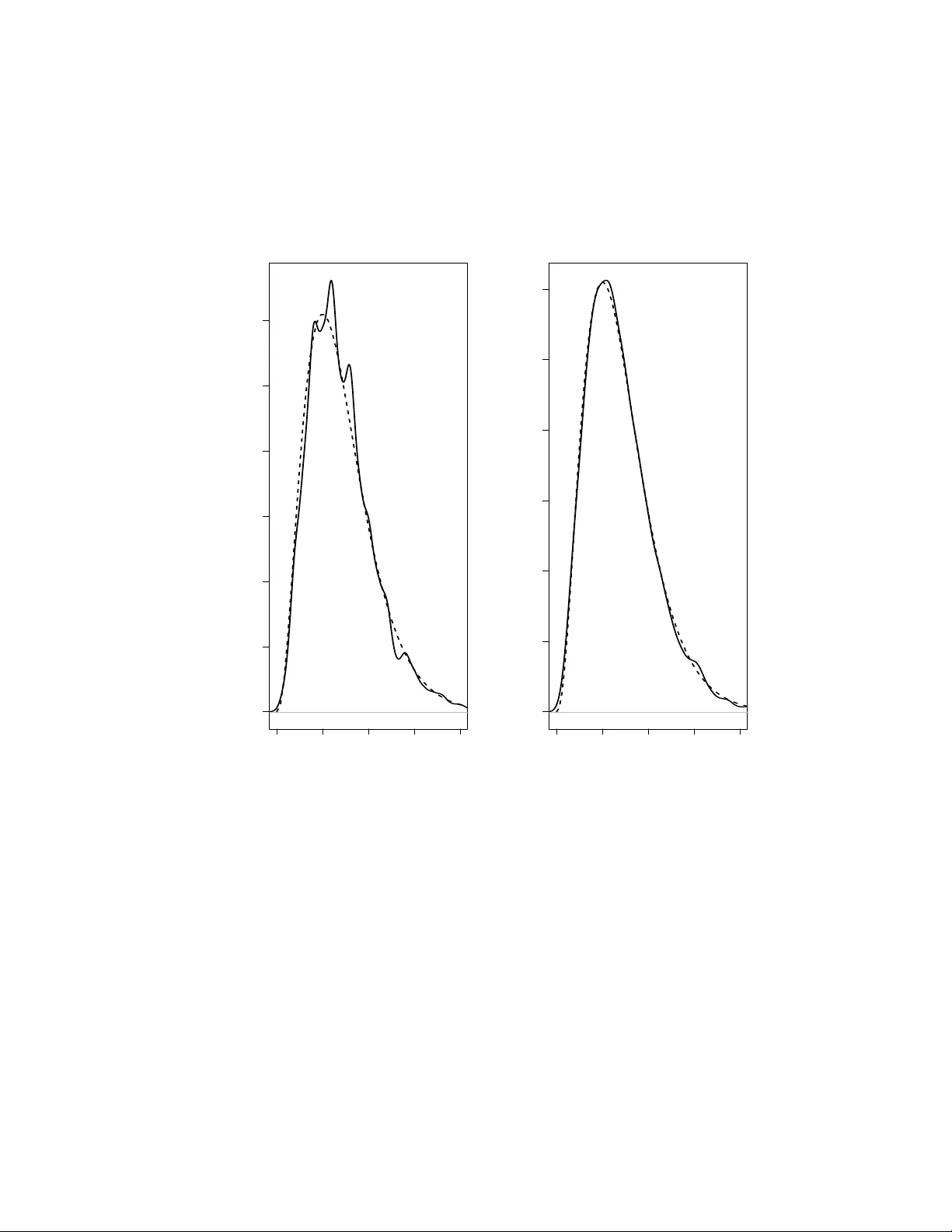

Nicholas J. Horton∗
Department of Mathematics, Amherst College, Amherst, MA
October 1, 2013

∗ Address for correspondence: Dept of Mathematics, Seeley Mudd, Amherst College, Amherst, MA 01002-5000. Phone: 413-542-5655, email: nhorton@amherst.edu

Abstract

Moore introduced a method for graduate mathematics instruction that consisted primarily of individual student work on challenging proofs (Jones 1977). Cohen (1992) described an adaptation with less explicit competition suitable for undergraduate students at a liberal arts college. This paper details an adaptation of this modified Moore-method to teach mathematical statistics, and describes ways that such an approach helps engage students and foster the teaching of statistics. Groups of students worked a set of 3 difficult problems (some theoretical, some applied) every two weeks. Class time was devoted to coaching sessions with the instructor, group meeting time, and class presentations. R was used to estimate solutions empirically where analytic results were intractable, as well as to provide an environment to undertake simulation studies with the aim of deepening understanding and complementing analytic solutions. Each group presented comprehensive solutions to complement oral presentations. Development of parallel techniques for empirical and analytic problem solving was an explicit goal of the course, which also attempted to communicate ways that statistics can be used to tackle interesting problems. The group problem solving component and use of technology allowed students to attempt much more challenging questions than they could otherwise solve.

Keywords: capstone course, empirical problem solving, intermediate statistics, R software, RStudio integrated development environment, reproducible analysis, simulation studies, statistical computing, statistical education

1 Introduction

In this paper, an implementation of a mathematical statistics course is described with the goal of developing a combination of analytic and empirical problem-solving skills through the solution of challenging problems and complex case studies. The course, offered at the Department of Mathematics and Statistics at Smith College in spring 2007 and spring 2011, adapted the approach of R. L. Moore (Jones 1977), using modifications suggested by Cohen (1992). A similar mathematical statistics course is described by McLoughlin (2008).

In the next subsection, recent developments in statistics education are described, followed by an overview of the modified Moore-Cohen method. Section 2 describes specific details of the course, Section 3 provides two example problems (with empirical as well as analytic solutions), Section 4 describes grading and assessment, while Section 5 provides additional discussion and closing thoughts.

1.1 Developments in statistical education

Extensive curricular reforms in undergraduate statistics education have transformed our programs and courses in recent decades (Cobb 1992, Moore, Cobb, Garfield & Meeker 1995, Cobb 2011). The Guidelines for Assessment and Instruction for Statistics Education (GAISE) report (GAISE College Group 2005), which succinctly described these changes, recommended that introductory statistics courses:

• Emphasize statistical literacy and develop statistical thinking,
• Use real data,
• Stress conceptual understanding rather than mere knowledge of procedures,
• Foster active learning in the classroom,
• Use technology for developing conceptual understanding and analyzing data, and
• Use assessments to improve and evaluate student learning.

Other related efforts have attempted to broaden the types of questions that statistics students grapple with (Brown & Kass 2009, Gould 2010), increase the use of case studies (Barrows & Tamblyn 1980, Nolan & Speed 2000, Nolan 2003) and take advantage of sophisticated computing technologies and environments such as R (Ihaka & Gentleman 1996) or Matlab (Kaplan 2003) to buttress understanding of statistical concepts (Buttrey, Nolan & Temple Lang 2001, Nolan & Temple Lang 2003, Horton, Brown & Qian 2004, Froelich 2008, Nolan & Temple Lang 2010, Lazar, Reeves & Franklin 2011).

The mathematical statistics course has undergone many transformations during this same period. A lively panel in 2003 with the provocative title “Is the Math Stat Course obsolete?” (Rossman & Chance 2003) provided a glimpse into ways that this intermediate level statistics course is adapting to a changing landscape. One idea raised was that the math stat course (still a common entry point to the field for many students studying mathematics) should convey the excitement of the discipline (“even if they don’t go on [in statistics], we want them to leave thinking statistics is interesting”). Another was that modeling, computing and problem-solving are key components of such a course.

Cobb (2011) provides a series of capsule summaries of innovations in the teaching of mathematical statistics, and discusses key tensions that underlie our courses in terms of what we want students to learn:

Surely the most common answer must be that we want our students to learn to analyze data, and certainly I share that goal. But for some students, particularly those with a strong interest and ability in mathematics, I suggest a complementary goal, one that in my opinion has not received enough explicit attention: We want these mathematically inclined students to learn to solve methodological problems.
I call the two goals complementary because, as I shall argue in detail, there are essential tensions between the goals of helping students learn to analyze data and helping students learn to solve methodological problems. For a ready example of the tension, consider the role of simple, artificial examples. For teaching data analysis, these “toy” examples are often and deservedly regarded with contempt. But for developing an understanding of a methodological challenge, the ability to create a dialectical succession of toy examples and exploit their evolution is critical (p. 32).

1.2 Moore and Cohen methods

Moore was noted (Halmos 1985) for quoting the Chinese proverb “I hear, I forget. I see, I remember. I do, I understand.” He provided classes with a list of definitions and theorems which they would subsequently prove individually and then share with the rest of the class. Competition was a key driving force in the course (Jones 1977), with efforts to ensure that student background was as homogeneous as possible. The overall goal was to build student capacity to create structure from an axiomatic basis and communicate this to others. Smith, Yoo & Nichols (2009) described possible evaluations and assessment of Moore method mathematics courses.

Cohen (1992) modified Moore’s approach using three guiding principles:

• Students understand better and remember longer what they discover themselves than what is told to them,
• People master an idea thoroughly when they teach it to someone else, and
• Effective writing and clear thinking are inextricably linked (p. 474).

A fourth principle incorporated in this mathematical statistics course involved the use of R (R Core Team 2013) and RStudio (an open source integrated development environment for R) to facilitate parallel empirical and analytic problem solving techniques.

Much of the class time is spent with students working as a group and individually to solve sets of challenging problems, writing up solutions, and presenting them to the class as a whole. While each group tackled problems from each of the major units of the course, group members would tend to learn their assigned problems in more detail and rely on their classmates to convey understanding of the other problems.

The Moore and Cohen approaches deal more with pedagogy than with curriculum. Moore used his method to teach proofs in topology. Cohen used his method for linear algebra. Here we borrow Cohen’s pedagogy for a course in mathematical statistics. Students are not given theorems to prove as in Moore’s courses; instead they are given challenging problems to solve.

These problems are chosen according to four criteria. The first two are critical to the pedagogy: each problem should be easy to grasp, and each should be hard enough that solving it poses a genuine challenge. The first criterion helps ensure that all students in a group can participate; the second helps ensure that stronger students will not be able to cut off discussion with a quick solution. A third criterion is that the problems should have links to actual applications. This is in the spirit of the GAISE recommendations. Fourth, the problems should lend themselves to parallel and complementary pairs of solutions, one based on simulation and the other based on theory. The parallel solutions constitute a recurring theme to the course, one that is central to the curriculum. This criterion is in some ways incidental to the pedagogy, although it helps ensure that students with different strengths and backgrounds can contribute actively to group work.

2 Details of the course

For the sections (officially titled “Seminar in Mathematical Statistics”) taught by the author in Spring 2007 and Spring 2011, the class met three times per week for 80 minutes per session for thirteen weeks.
While the only required prerequisite for the class was probability, most students in the course had also taken introductory statistics and linear algebra. No specific knowledge of statistics was assumed.

At the beginning of the course, the students took the 40 item multiple choice CAOS (Comprehensive Assessment of Outcomes in a first statistics course) test (DelMas, Garfield, Ooms & Chance 2006). While designed to assess student reasoning after a first course in statistics (and not a mathematical statistics class), the CAOS focuses on conceptual understanding of variability and uncertainty. The average score for the mathematical statistics students on the CAOS pre-test was 67.2% correct with a standard deviation of 13.6% (values ranged from 43% to 90%).

The structure of the course included (almost) no lectures. Instead, the material was broken down into a number of problem sets. These questions were designed to be sufficiently difficult to provide a challenge to students, but still amenable (with some assistance) to solution.

During the first offering, four groups of 3 students were created, with seven groups of 3 students for the second offering. Each group would work a different set of problems for each problem set (with an occasional problem assigned to all groups). Throughout the semester, these groups were reshuffled twice, with no two individuals being in the same group twice. The re-balancing helped to address issues with groups that consisted of only weak students (as well as to provide a release valve for problems with group dynamics).

Most class sessions consisted of a series of “coaching” sessions several days after the initial presentation of the problems. These coaching sessions, described in detail in Cohen (1992), are critical in helping to guide students towards the desired solution without providing the answer.
All students in a group attended a given coaching session, and discussed their preliminary attempts at the problems. In some cases they may have solved their problems. More commonly, additional guidance was needed for them to make progress or to elaborate on their solutions. Early on in the course, much of this coaching involved support and scaffolding for the use of computing (to allow them to gradually build their skills in terms of simulation and exploration).

Each student created a draft of their preliminary solution in preparation for a second coaching session. To ensure that all students were engaged and making good faith efforts, these were reviewed by the instructor. One per group was graded in detail, to provide general feedback for all students.

The second coaching session was used to help answer any remaining questions and assist with preparations of the solutions (“weekly papers”). Other assistance was provided outside of the regular class meeting times by email or during office hours.

Before the final class session for a given set of problems, each group created a single clear and comprehensive solution, which was made available to the class. Finally, each group gave a 15 minute oral presentation that reviewed their solution, with questions and answers as needed.

2.1 Textbooks and topics

The approach suggested by Cohen (1992) to teach analysis of linear algebra provides students several pages of axioms, definitions, theorems and problems. This serves as the foundation from which all of the remaining material is derived. Because of the need for more extensive material to support student work in a range of mathematical statistical topics, the text by Rice (1995) was used for background reading as well as the source of many of the problems. In addition, several modules (including case studies with advanced data analysis) were integrated from Nolan & Speed (2000).

The course began with a series of challenging probability problems, covering selected topics and highlights from Chapters 1 through 5 of Rice (1995). The next set of problems related to descriptive and graphical visualization (covering Chapter 10 of Rice (1995) and the “Maternal smoking and infant health” module from Nolan & Speed (2000)). Two sets of problems were devoted to estimation and the bootstrap (Chapter 8 of Rice (1995) and the “Patterns in DNA” and “Who plays video games?” modules from Nolan & Speed (2000)). Testing hypotheses and assessing goodness of fit (Chapter 9 of Rice (1995)) comprised two sets of problems, while Chapter 11 was used as a basis for a set on two sample comparisons. The first time the course was offered, it closed with a set of problems entitled “Bayesian inference: a big idea”, based loosely on Chapter 15 of Rice (1995) and Section 2.5 of Lavine (2013), while the second offering closed with precursors of informal inference and simulation studies of inference rules (Wild, Pfannkuch, Regan & Horton 2010).

2.2 Real data and mathematical statistics

While the course did not focus on advanced analysis of multivariate datasets, real data was regularly incorporated into the course, primarily as a component of problems assigned to the students throughout the semester. The textbooks by Rice as well as Nolan & Speed are notable for the number and variety of motivating examples provided throughout, including the exercises.

As an example, students might be asked to find the method of moments estimator for $\theta$ for a Pareto distribution with known scale parameter $x_0$, and compare this to the maximum likelihood estimator for $\theta$. After finding the analytic results, and simulating to compare the variance of the estimators, they would be asked to calculate and interpret the sample statistic using data from an economic survey.

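
The comparison described here can be sketched in R as follows. This is a minimal illustration rather than a solution used in the course: the sample size, number of simulations, true value of $\theta$ and seed are assumptions chosen for convenience, and the Pareto density is assumed to be $f(x) = \theta x_0^{\theta} / x^{\theta + 1}$ for $x \ge x_0$ (with $\theta > 1$ so that the mean exists).

```r
# Sketch: compare method-of-moments and maximum likelihood estimators of the
# Pareto shape parameter theta when the scale x0 is known.
# Assumed density: f(x) = theta * x0^theta / x^(theta + 1), for x >= x0.
set.seed(42)            # arbitrary seed, for reproducibility
numsim = 2000           # number of simulated samples (assumption)
n = 50                  # observations per sample (assumption)
x0 = 1                  # known scale parameter (assumption)
theta = 3               # true shape; theta > 2 keeps the variance finite

mom = numeric(numsim)   # stash method-of-moments estimates
mle = numeric(numsim)   # stash maximum likelihood estimates
for (i in 1:numsim) {
  # inverse-cdf draw: F(x) = 1 - (x0/x)^theta, so x = x0 * U^(-1/theta)
  x = x0 * runif(n)^(-1/theta)
  xbar = mean(x)
  mom[i] = xbar / (xbar - x0)      # solves xbar = theta * x0 / (theta - 1)
  mle[i] = n / sum(log(x / x0))    # maximizes the log-likelihood in theta
}
results = rbind(mom = c(mean = mean(mom), var = var(mom)),
                mle = c(mean = mean(mle), var = var(mle)))
results
```

Asymptotic theory suggests that the maximum likelihood estimator should have the smaller variance; a simulation of this kind lets students check whether that holds at a modest sample size.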
Another set of problems related to the analysis of cell probabilities expected by genetic theories, through estimation of underlying parameters. Students were assessed both on their ability to report in context on the underlying applied statistical question, as well as on the relevant statistical derivations or simulations that they carried out.

As outlined by the GAISE guidelines, use of real data is essential to the introductory course, and central also to any statistics curriculum as a whole. Nevertheless, for certain individual courses that serve as elements of a larger statistics curriculum, real data may be less essential. There is an inherent complementarity between analysis of data using existing methods and the development of new methods (Cobb 2011). We need a curriculum that teaches students to engage, appreciate and enjoy both data analytic and methodological challenges. In a course such as the one described here, although connections to real data are important, the balance is weighted towards problems of a more abstract sort.

2.3 Technology

This approach would not be feasible without the use of computing technology to facilitate analysis and simulation. R (Ihaka & Gentleman 1996) and RStudio (http://rstudio.org) provided a flexible and adaptable environment for exploration (Horton et al. 2004, Pruim 2011). RStudio is an open-source integrated development environment that provides a consistent and powerful interface for R (an open source general purpose statistical package, http://r-project.org) that is easier to install, learn and run than standard R.

LaTeX (Lamport 2011) within the Sweave (Leisch 2002) system was used as the formatting environment for the solutions, with an annotated example distributed to all students during the initial class meeting. This included examples of tables, figures, cross referencing, bibliography and other useful attributes.
Submissions were made available to students as both Sweave source and PDF files to allow students to borrow working code. RStudio is particularly attractive because it simplifies the user interface and has tightly integrated support for Sweave (including a single button click to Compile PDF from the source document). In future offerings, the Markdown system within the knitr package (Xie 2012) will be used, as it provides simplified functionality and does not require knowledge of LaTeX.

The course intentionally introduced students to concepts of reproducible analysis (Gentleman & Temple Lang 2007), where computation, code and results of an analysis are integrated. Being able to re-run a set of simulations and regenerate a report with a single click is a powerful motivator for students used to error-prone processes of cutting and pasting output and figures. Reproducible analysis systems are becoming standard in industry and academia, have the potential to help ensure better statistical analysis, and should be incorporated in the statistics curriculum.

To help simplify the learning curve for these somewhat complex systems, a number of examples and idioms were provided by the instructor, to help build students’ repertoire of useful techniques to attack problems. Students were encouraged to write their initial solutions using pseudo-code (an informal description that could later be turned into working R code). These were also posted to the course management system to facilitate re-use in other problems and settings.

3 Example problems and solutions

To give a better sense of the course, we describe two problems that were completed by the students, along with model solutions and commentary (additional examples are found in the online supplement). Each group would generally work 3 or 4 problems per assignment. These problems feature both empirical (simulations in R) and analytic (closed-form) solutions by the groups.
They range from easier to more challenging, but illustrate the approaches taken by students in two separate application areas. The general level of difficulty is similar to that of Rice (1995)∗.

∗ Rice states on page xi that “This book includes a fairly large number of problems, some of which will be quite difficult for students.” My students confirmed this assertion.

3.1 Pooled blood sera sampling

It is known that 5% of the members of a population have disease X, which can be discovered by a blood test (that is assumed to perfectly identify both diseased and nondiseased populations). Suppose that $N$ people are to be tested, and the cost of the test is non-trivial. The testing can be done in two ways: (A) everyone can be tested separately; or (B) the blood samples of $k$ people are pooled to be analyzed. Assume that $N = nk$ with $n$ an integer. If the test is negative, all the people tested are healthy (that is, just this one test is needed). If the test result is positive, each of the $k$ people must be tested separately (that is, a total of $k + 1$ tests are needed for that group)†.

Questions:

i. For fixed $k$, what is the expected number of tests needed in (B)?

ii. Find the $k$ that will minimize the expected number of tests in (B).

iii. Using the $k$ that minimizes the number of tests, on average how many tests does (B) save in comparison with (A)?

Be sure to check your answer using an empirical simulation.

3.1.1 Empirical (simulation-based) solution

We attempted to gain a better understanding of the problem by simulation. First, we set $k = 10$, $n = 500$ and $P(\text{infected}) = p = 0.05$ (refer to Figure 1 for code). Given these specific values for each of the variables, we found the expected number of tests to be approximately 2501.9. We then used this value to help us check our analytic solution. Next, we tried different values of $k$ and $n$ such that $N$ (the number of people to be tested) equaled 5000.
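
The search over pool sizes described here can be sketched by wrapping the simulation from Figure 1 in a function. This is an illustrative sketch rather than the students' code: the function name `simtests`, the seed, and the use of `colSums` in place of `apply` are assumptions, and the closed-form line uses the expression $E[T_B] = N(1/k + 1 - (1-p)^k)$ obtained in the analytic solution of Section 3.1.2.

```r
# Sketch: repeat the pooled-testing simulation for each candidate pool size,
# keeping N = 5000 and p = 0.05 as in the problem statement.
set.seed(2013)              # arbitrary seed, for reproducibility
numsim = 1000               # simulations per pool size
p = 0.05                    # probability of infection
N = 5000                    # total number of people
kvals = c(2, 4, 5, 8, 10)   # pool sizes between 2 and 10 that divide N

simtests = function(k) {    # hypothetical helper, not from the paper
  n = N / k                                     # number of pools
  res = numeric(numsim)                         # stash results
  for (i in 1:numsim) {
    disease = rbinom(N, size=1, prob=p)
    poolmat = matrix(disease, nrow=k, ncol=n)   # one column per pool
    positive = colSums(poolmat) >= 1            # pools that test positive
    res[i] = n + sum(positive) * k              # pooled tests plus retests
  }
  mean(res)
}

empirical = sapply(kvals, simtests)
analytic = N * (1/kvals + 1 - (1 - p)^kvals)    # closed-form expectation
cbind(k = kvals, empirical, analytic)
```

Tabulating the two columns side by side makes it easy to see whether the simulated means agree with the closed-form values for every pool size, not just for $k = 10$.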
We did this to find the value of $k$ that minimized the expected number of tests. Given that $N = 5000$, possible integer values for $k$ were 2, 4, 5, 8, and 10. We found the expected number of tests for each of these $k$ values, respectively, were 2988.7, 2178.9, 2126.5, 2306.2, and 2501.9. For this example, the minimum value is found when $k = 5$.

3.1.2 Analytic (closed-form) solution

Approach (A): the expected number of tests needed is $E[T_A] = N = nk$, because we would be testing each individual exactly once.

† This assumes that all of the tests are run at the same time. Otherwise, if the pool tested positive and the first $k - 1$ tests were negative, there would be no need to test the final member of the pool.

    numsim = 1000
    p = 0.05    # probability of infection
    k = 10      # size of pool
    n = 500     # number of pools
    N = n * k   # total number of people
    # for Approach (A), E[# tests] = N
    # for Approach (B), find E[# tests] if pooled?
    res = numeric(numsim)   # stash results
    for (i in 1:numsim) {
      disease = rbinom(N, size=1, prob=p)
      poolmat = matrix(disease, nrow=k, ncol=n)
      Y = apply(poolmat, 2, sum)>=1
      res[i] = n+sum(Y) * k
    }
    mean(res)
    sd(res)
    curve(N/x+x * (N/x) * (1-(1-p)^x), xlab="number in pool (k)",
          ylab="expected number", from=2, to=10)

Figure 1: R code to generate empirical estimates using Approach (B)

For Approach (B):

i. Let $Y$ = the number of pools infected and $T_B$ = the total number of tests needed. Assuming independence, we have that $E[Y] = n(1 - .95^k)$ and $E[T_B] = n + kE[Y] = n + k(n(1 - .95^k))$. With $N = 5000$ people, this simplifies to: $E[T_B] = 5000(1/k + (1 - .95^k))$. When $k = 10$, $n = 500$ and $P(\text{infected}) = p = 0.05$, $E[T_B] = 2506.3$, which closely matches the results from the simulation.

ii. We find the derivative of $E[T_B]$ with respect to $k$ and solve (using a symbolic mathematics package such as Maple or Wolfram Alpha), which yields a positive solution of $k = 5.022$ (see Figure 2).
When $k = 5$ (so that $n = 1000$), $E[T_B] = n + 5(n(1 - .95^5)) = n + 1.13n = 2.13n = 2130$. This result is similar to that shown in the empirical simulations.

iii. We compare the expected number of tests needed for each of the approaches when $k = 5$ and $p = 0.05$: $E[T_A]/E[T_B] = 5n/2.13n = 2.35$. Approach (A) requires an average of 2.35 times as many tests as Approach (B). Figure 2 demonstrates that this ratio is greater than 1 for pool sizes between 2 and 10, given a prevalence of 0.05.

Figure 2: Display of expected number of blood tests required as a function of pool size ($k$), with $N = 5000$, $p = 0.05$ (horizontal axis: number in pool (k); vertical axis: expected number)

3.1.3 Commentary

This problem was part of a series of probability questions at the start of the course. While more efficient programming approaches could be used, the empirical solution features a number of idioms and tricks of the trade that are repeated throughout the class. This example demonstrates a setting where the analytic solution is straightforward using basic properties of expectations, but where the empirical solution provides a useful check on the results. This type of question helps students build confidence in using knowledge from the prerequisite course in new ways.

3.2 Sampling from a probability distribution

Questions [from Evans & Rosenthal (2004)]:

i. Is it possible to find a tractable expression for the cdf of a distribution with density given by $f(y) = c(1 + |y|)^3 \exp(-y^4)$, where $c$ is a normalizing constant and $y$ is defined on the whole real line? If not, can you find $c$?

ii. Show how to generate a sample of observations from this distribution.

iii. Describe how this is useful in Bayesian inference.

3.2.1 Solution

i. While it is possible to find a closed-form solution for the cdf of this distribution, it is not easily solvable.
Note that because the density is a function of the absolute value of $y$, the integral can be broken into two symmetric parts. To find $c$, we evaluate twice the integral over $[0, \infty)$ in R:

    > f = function(x){exp(-x^4) * (1+abs(x))^3}
    > integral = integrate(f, 0, Inf)
    > 2 * integral$value
    [1] 6.809611

Hence $c = 1/6.809611 \cong 0.15$.

ii. We created a Markov Chain Monte Carlo sampler, using the Metropolis-Hastings algorithm. The premise for this algorithm is that it chooses proposal probabilities so that, after the process has converged, draws are generated from the desired distribution. A further discussion for enthusiasts can be found on page 610 of Evans & Rosenthal (2004).

We find the acceptance probability $\alpha(x, y)$ in terms of two densities, our $f(y)$ and $q(x, y)$, a normal proposal density with mean $x$ and variance 1, so that

$$\alpha(x, y) = \min\left\{1, \frac{f(y)\,q(y, x)}{f(x)\,q(x, y)}\right\} = \min\left\{1, \frac{c \exp(-y^4)(1 + |y|)^3 (2\pi)^{-1/2} \exp(-(y - x)^2/2)}{c \exp(-x^4)(1 + |x|)^3 (2\pi)^{-1/2} \exp(-(x - y)^2/2)}\right\} = \min\left\{1, \frac{\exp(-y^4 + x^4)(1 + |y|)^3}{(1 + |x|)^3}\right\}$$

Pick an arbitrary value for $X_1$. The Metropolis-Hastings algorithm then computes the value $X_{n+1}$ as follows:

1. Generate $Y_{n+1}$ from a normal($X_n$, 1).
2. Let $y = Y_{n+1}$, compute $\alpha(x, y)$ as before.
3. With probability $\alpha(x, y)$, let $X_{n+1} = Y_{n+1} = y$ (use proposal value). Otherwise, with probability $1 - \alpha(x, y)$, let $X_{n+1} = X_n = x$ (keep previous value).

The code (displayed in Figure 3) uses the first 100,000 iterations as a burn-in period, then generates 100,000 samples. A histogram is displayed in Figure 4.

iii. The Metropolis-Hastings algorithm is a form of Markov Chain Monte Carlo (MCMC) and is particularly attractive when the posterior density function does not have a familiar integral (such as when $f(x)$ is a posterior density that does not correspond to a conjugate prior).
Simulation is a central part of applied Bayesian analysis, because of the relative ease with which samples can be generated from a probability distribution, even when the density function cannot be explicitly integrated (see page 25 of Gelman, Carlin, Stern & Rubin (2004)).

    x = seq(from=-3, to=3, length=200)
    pdfval = 1/6.809610784 * exp(-x^4) * (1+abs(x))^3
    par(mfrow=c(2, 1)); plot(x, pdfval, type="n"); lines(x, pdfval)
    title("Actual pdf")
    alphafun = function(x, y) {
      return(exp(-y^4+x^4) * (1+abs(y))^3 * (1+abs(x))^-3)
    }
    numvals = 100000; burnin = 100000
    i = 1
    xn = 3   # arbitrary value to start
    while (i <= burnin) {
      propy = rnorm(1, mean=xn, sd=1)
      alpha = min(1, alphafun(xn, propy))
      unif = runif(1) < alpha
      xn = unif * propy + (1-unif) * xn
      i = i + 1
    }
    i = 1
    res = rep(0, numvals)
    while (i <= numvals) {
      propy = rnorm(1, mean=xn, sd=1)
      alpha = min(1, alphafun(xn, propy))
      unif = runif(1) < alpha
      xn = unif * propy + (1-unif) * xn
      res[i] = xn
      i = i + 1
    }
    hist(res, nclass=20, main="Metropolis-Hastings samples",
         xlab="x", freq=FALSE)

Figure 3: R code to generate Metropolis-Hastings samples

Figure 4: True density and simulated draws from probability distribution (top panel: "Actual pdf", pdfval against x; bottom panel: "Metropolis-Hastings samples", density histogram of x)

3.2.2 Commentary

This problem was taken from the final set of problems, entitled “Bayesian statistics: a big idea”, which was intended to introduce students to more sophisticated simulations that are necessary to get answers for more complex models. Because the students had no prior experience with MCMC, a preliminary mini-lecture on the topic was provided along with supporting readings from Lavine (2013). This included some classic examples with conjugate priors. Throwing the nasty density function at students was initially off-putting, but it helped to motivate MCMC methods and introduce Bayesian ideas and methods.
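
The sampler in Figure 3 can also be written as a function and checked against numerical integration, in keeping with the course's emphasis on parallel empirical and analytic solutions. The sketch below is an illustration under stated assumptions, not the students' code: the names `target` and `mhsample`, the shorter run lengths, the seed, and the choice to compare $P(|X| < 0.5)$ are all hypothetical.

```r
# Sketch: Metropolis-Hastings sampler as a function, with two simple checks.
set.seed(610)           # arbitrary seed, for reproducibility
target = function(y) exp(-y^4) * (1 + abs(y))^3   # unnormalized density

mhsample = function(numvals, burnin, start=3) {   # hypothetical helper
  xn = start
  res = numeric(numvals)                          # stash retained draws
  accepted = 0
  for (i in 1:(burnin + numvals)) {
    propy = rnorm(1, mean=xn, sd=1)               # symmetric normal proposal
    alpha = min(1, target(propy) / target(xn))    # proposal densities cancel
    if (runif(1) < alpha) {
      xn = propy                                  # accept the proposal
      if (i > burnin) accepted = accepted + 1
    }
    if (i > burnin) res[i - burnin] = xn          # keep post-burn-in values
  }
  list(draws=res, accept=accepted / numvals)
}

out = mhsample(numvals=20000, burnin=2000)
const = 2 * integrate(target, 0, Inf)$value       # normalizing constant, 1/c
analytic = integrate(target, -0.5, 0.5)$value / const
empirical = mean(abs(out$draws) < 0.5)
c(accept=out$accept, empirical=empirical, analytic=analytic)
```

The acceptance rate is reported because a very low or very high value would suggest that the proposal standard deviation of 1 is poorly matched to the target density.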
The goal of this section was to give students a glimpse into a flexible and sophisticated set of models that can tackle problems far outside the realm of a traditional math stat class.

4 Grading and assessment

Assessment of students in the course was done in several ways. Students completed 7 sets of problems over the course of the semester (each one approximately 2 weeks apart). Grades on preliminary solutions and weekly papers constituted 35% of the grade, with class participation, attendance and oral presentations an additional 20%. Two midterm exams accounted for 40% while 5% reflected good faith effort towards completion of low-stakes online assessments. The midterm exams had in-class and take-home components. They included problems similar to those undertaken by the groups, albeit with simpler solutions.

An informal mid-semester evaluation was undertaken approximately halfway through the course. For the first offering of the course, a colleague met with the class during the last 15 minutes of a class session (without the instructor present). Feedback from this assessment indicated great worries about the structure of the take-home midterm (would the problems be as hard as Rice?) and queries about other forms of assessment.

For the second offering, a more formal evaluation was undertaken where a staff member from the college learning center spent the last 20 minutes of a class session with students in focus groups. The students appreciated the structure of the course and the opportunities for revision. They “like that we get lectures on background, the collaboration and group work” and “like that we do analytic and empirical solutions.” Students sought more input from the instructor, with a desire for more lectures to “put things into perspective.” Some students suggested that the instructor “tell us what are the key points to absolutely know from each problem set.”

The final question from the focus groups related to the students’ roles as learners. Students revealed that they understand that they have to prepare more thoroughly for class, improve their own class participation and assume additional responsibilities outside of class. The students acknowledged that they should read the text more carefully, read other groups’ problems before the presentations, and “try harder” with Rice.

The outside evaluator summarized the report with the following quote:

As you made clear to me in our discussion, although your students may want you to tell them “the key points to absolutely know,” you believe strongly that they must work their way towards knowledge mastery in this course. To assist them in achieving this end, you have structured the course in ways that require them to work individually and collaboratively – with guidance from you – as they become more expert and reflective learners. Many of your students are uneasy with this approach and unsure of themselves: they want to know the right answers, the correct way to think, hence their request for more input from you. Their unease marks them as less sophisticated about real learning and/or timid about undertaking independent intellectual journeys. You might have an explicit discussion with your students about your pedagogy and your learning goals for them. I suspect they would be quite responsive to this kind of communication given their high regard for you and this course: they know you believe in them. And, since their answers to the third question reveal that they are aware of their own responsibilities as students, you could also use this discussion to reinforce their own good insights on becoming more active and inquisitive learners.

The students also completed the CAOS post-test at the end of the class, with a mean of 72.5% correct (sd=13%, min=43%, max=90%).
There was a statistically significant increase in scores compared to the pre-test (paired t-test p=0.01, df=30, 95% confidence interval from 1.4 to 9.2 point increase). Figure 5 displays the relationship between pre and post scores (with a solid scatterplot smoother plus dashed POST = PRE line). There is some indication of larger improvement for students with lower pre-test scores, which is consistent with a ceiling effect. Given that the CAOS test is intended to assess outcomes from a first course, this is not surprising.

[Figure 5 image: scatterplot of pre and post scores, both axes running from 50 to 90.]

Figure 5: Relationship between student outcomes on the CAOS (Comprehensive Assessment of Outcomes in a first Statistics course) from the class in 2007 and 2011 (plus smoothed line and POST = PRE line).

5 Discussion

This paper describes an implementation of a modified Moore-Cohen method mathematical statistics course at an undergraduate liberal arts college. The course featured a series of challenging problems, some theoretical, others data-driven, designed to help teach mathematical statistics using applications. A key idea is that the use of technology (R/RStudio and reproducible analysis tools) has opened up new possibilities.

An attractive aspect of the proposed course was how it intentionally dovetailed with the GAISE recommendations (GAISE College Group 2005). In particular, it was designed to encourage statistical thinking through empirical problem solving, use real data to motivate methods, stress conceptual understanding, foster active learning and use technology to develop conceptual understanding. The course is consistent with the American Statistical Association guidelines for statistics programs (Workgroup on Undergraduate Statistics 2000), which call for students to develop effective technical writing, presentation skills, teamwork and collaboration, in addition to knowledge of statistics.
5.1 Comparisons, advantages and limitations

This approach differs significantly from the traditional Moore-method, which was developed for a definition-theorem-proof type course and relies primarily on individual work and competition as a motivator. The modified Moore-Cohen method uses group work to facilitate engagement, with stronger students able to plunge more deeply into their solutions while still ensuring that weaker students can receive assistance as needed. This modification might be better thought of as a species split-off, where rather than competing, students are supported to go beyond their expectations and discover something in themselves.

A primary challenge of teaching is to engage students in the material being studied. Cohen (1992) noted that the method effectively raises the level of communication between students and that most students are stimulated by the change from passive to active learning. Lazar et al. (2011) described the importance of capstone courses in statistics. Structuring the class with multiple, challenging problems that were not amenable to quick individual solution helped to achieve the goals of a capstone. These goals include getting students to grapple with real-world problems, helping them develop capacities to work effectively in groups, augmenting their ability to compute to extend their problem-solving abilities, and helping them to sharpen their abilities to communicate the complexity and power of statistical methods. The course also dovetails with other efforts to involve students in interdisciplinary research projects (Legler, Roback, Ziegler-Graham, Scott, Lane-Getaz & Richey 2010), which tend to focus on larger, more complex datasets in the context of a client discipline.

While no formal assessment of the course was undertaken, student feedback from less formal appraisals was generally positive.
Students found the approach to be challenging, particularly at the beginning of the semester when they were confronted with simultaneously learning R/RStudio, LaTeX/Sweave, empirical problem solving techniques as well as oral and written presentation skills. The particular technical challenges of learning new packages and systems quickly receded, and the primary challenge related to answering difficult questions and learning new material, concepts and statistical methods.

A limitation of problem or case-based courses is that they typically cover fewer topics in more depth. That was true for this course, which had more constrained coverage goals than the traditional math stat class (though most of the typical key concepts were covered). In addition, students would be expected to have variable mastery of particular topics that were included, since they engaged in the problems that their group was assigned at an intense level, but had more passive involvement in the problems that other groups presented. The combination of written and oral presentation of solutions from other groups was designed to minimize these disparities. Ideally students would emerge from the course with useful capacities (such as ability to compute with data, simulate to approximate answers, and communicate orally and through their writing) that would allow them to fill any gaps in their knowledge and succeed in a graduate level course.

Classes that include group work as a component of assessments often have group dynamic issues, and this course was no exception. In general there was a positive sense of community and engagement which flowed from the group-based workload. Knowing that the groups would be reshuffled twice helped as well. Focusing much of the work in groups allowed students to tackle far more challenging questions than they could solve individually and also modeled a common post-college work environment.
Several students have provided anecdotal reports of the value of learning tools for statistical computing and reproducible analysis.

There are other challenges to use of this method for teaching the mathematical statistics courses. The enrollments were 12 and 20 students in Spring 2007 and Spring 2011, respectively. While scaling to course sizes of 30–40 students would be straightforward, larger class sizes would require different systems and structures. These might include multiple sections taught with some common mini-lectures, doubling up on problems or student support for computing. The time commitment was comparable to that of a standard course: formal lectures were relatively short (generally at the start of each new topic), but coaching and preparation were extensive.

5.2 Use of technology

Empirical (simulation-based) estimation complements analytic solutions, and can often allow approximate solution of extremely challenging problems. Besides providing a useful check on analytic answers, these simulations can help with insights into how to solve a problem. R and RStudio serve as a flexible and powerful environment for such exploration.

A number of technologies were prominently featured in the course. These included extensive use of LaTeX and R. Reproducible analysis (the Sweave system (Leisch 2002) as implemented within RStudio) greatly facilitated integration of commands, output and graphics, and made it easier for students to undertake analyses outside the course. This scaffolding also helped to move students from a “point and click” approach to statistical analysis towards a more flexible scripting interface. Further discussion of how to integrate reproducible analysis and effective mechanisms to build students’ ability to “compute with data” are important issues but lie somewhat outside the scope of this paper.
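As a small illustration of how simulation can serve as a check on an analytic answer, consider the expected maximum of two independent Uniform(0, 1) random variables, which is 2/3 analytically. This example is not drawn from the course materials; the problem, seed and variable names are assumptions chosen purely for illustration.

```r
# Illustrative sketch (not from the course): check E[max(U1, U2)] = 2/3 by simulation.
set.seed(42)                       # arbitrary seed, for reproducibility only
numsim <- 100000                   # number of simulated pairs
u1 <- runif(numsim)
u2 <- runif(numsim)

empirical <- mean(pmax(u1, u2))    # simulation-based estimate of the expectation
analytic  <- 2/3                   # value obtained by integrating the density 2x on (0, 1)

c(empirical = empirical, analytic = analytic)
```

When the two numbers disagree by more than simulation error, either the derivation or the code deserves a second look, which is the kind of cross-check described above.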
Other courses may find the use of R and RStudio for simulation and approximation of analytic solutions to be helpful, without the Moore-method approach. The new text by Pruim (2011) features such a presentation.

5.3 Closing thoughts

Cobb (2011) argues that the profession needs two types of statisticians: those with the capacity to appropriately analyze and interpret data, as well as those with interest in devising novel solutions to methodological challenges. Teaching mathematical statistics in this manner has the potential to foster engagement by presenting students with extended glimpses of the excitement of developing statistical procedures to solve challenging problems (Nolan & Temple Lang 2010). This approach could also serve as a model for other intermediate and advanced undergraduate statistics classes. This method may also be relevant for the teaching of similar quantitative courses in other disciplines.

Acknowledgements

This work was supported by NSF grant 0920350 (Phase II: Building a Community around Modeling, Statistics, Computation, and Calculus). Thanks to Sarah Anoke, George Cobb, David Cohen, Daniel Kaplan, David Palmer and Randall Pruim for many useful discussions about pedagogy as well as helpful comments on an earlier draft. I am also indebted to the Editor, Associate Editor and anonymous reviewers for many suggestions which led to improvements in the manuscript.

References

Barrows, H. & Tamblyn, R. (1980). Problem based learning: an approach to medical education, Springer-Verlag, New York.

Brown, E. N. & Kass, R. E. (2009). What is statistics?, The American Statistician 63(2): 105–110.

Buttrey, S. E., Nolan, D. & Temple Lang, D. (2001). Computing in the mathematical statistics course, ASA Proceedings of the Joint Statistical Meetings.

Cobb, G. (2011). Teaching statistics: some important tensions, Chilean Journal of Statistics 2(1): 31–62.

Cobb, G. W. (1992). Teaching statistics, In Lynn A. Steen (ed), Heeding the call for change: suggestions for curricular action (MAA Notes No. 22) pp. 3–43.

Cohen, D. W. (1992). A modified Moore method for teaching undergraduate mathematics, American Mathematical Monthly 89(7): 473–474, 487–490.

DelMas, R., Garfield, J., Ooms, A. & Chance, B. (2006). Assessing students’ conceptual understanding after a first course in statistics, Proceedings of the Annual Meetings of the American Educational Research Association.

Evans, M. J. & Rosenthal, J. S. (2004). Probability and Statistics: the Science of Uncertainty, W H Freeman and Company, New York.

Froelich, A. (2008). Using R in probability and mathematical statistics courses, ASA Proceedings of the Joint Statistical Meetings.

GAISE College Group (2005). Guidelines for assessment and instruction in statistics education, http://www.amstat.org/education/gaise, accessed August 18, 2013, Technical report, American Statistical Association.

Gelman, A., Carlin, J. B., Stern, H. S. & Rubin, D. B. (2004). Bayesian data analysis (second edition), Chapman and Hall.

Gentleman, R. & Temple Lang, D. (2007). Statistical analyses and reproducible research, Journal of Computational and Graphical Statistics 16(1): 1–23.

Gould, R. (2010). Statistics and the modern student, International Statistical Review 78(2): 297–315.

Halmos, P. (1985). I Want to Be a Mathematician: An Automathography, Springer.

Horton, N. J., Brown, E. R. & Qian, L. (2004). Use of R as a toolbox for mathematical statistics exploration, The American Statistician 58(4): 343–357.

Ihaka, R. & Gentleman, R. (1996). R: A language for data analysis and graphics, Journal of Computational and Graphical Statistics 5(3): 299–314.

Jones, F. B. (1977). The Moore method, American Mathematical Monthly 84: 273–278.

Kaplan, D. (2003). Introduction to Scientific Computation and Programming, CL-Engineering.

Lamport, L. (2011). LaTeX: a document preparation system, http://www.latex-project.org, accessed August 18, 2013, Technical report, LaTeX Project.

Lavine, M. (2013). Introduction to Statistical Thought, https://www.math.umass.edu/~lavine/Book/book.html, accessed August 18, 2013, Creative Commons.

Lazar, N. A., Reeves, J. & Franklin, C. (2011). A capstone course for undergraduate statistics majors, The American Statistician 65(3): 183–189.

Legler, J., Roback, P., Ziegler-Graham, K., Scott, J., Lane-Getaz, S. & Richey, M. (2010). A model for an interdisciplinary undergraduate research program, The American Statistician 64: 184–189.

Leisch, F. (2002). Sweave, part I: Mixing R and LaTeX, R News 2(3): 28–31.

McLoughlin, P. (2008). A modified Moore approach to teaching probability and mathematical statistics: An inquiry based learning technique, ASA Proceedings of the Joint Statistical Meetings.

Moore, D. S., Cobb, G. W., Garfield, J. & Meeker, W. Q. (1995). Statistics education fin de siècle, The American Statistician 49: 250–260.

Nolan, D. A. (2003). Case studies in the mathematical statistics course, Science and statistics: A festschrift for Terry Speed (IMS Press, Fountain Hills, AZ) pp. 165–176.

Nolan, D. & Speed, T. (eds) (2000). Stat Labs: Mathematical Statistics Through Applications, Springer-Verlag, New York.

Nolan, D. & Temple Lang, D. (2003). Case studies and computing: broadening the scope of statistical education, Proceedings of the 2003 ISI Meeting.

Nolan, D. & Temple Lang, D. (2010). Computing in the statistics curriculum, The American Statistician 64(2): 97–107.

Pruim, R. (2011). Foundations and Applications of Statistics: An Introduction using R, American Mathematical Society.

R Core Team (2013). R: A Language and Environment for Statistical Computing, R Foundation for Statistical Computing, Vienna, Austria. URL: http://www.R-project.org/

Rice, J. A. (1995). Mathematical statistics and data analysis, Duxbury.

Rossman, A. & Chance, B. (2003). Notes from the 2003 JSM session Is the Math Stat Course Obsolete? www.amstat.org/sections/educ/MathStatObsolete.pdf, accessed August 18, 2013, Technical report, American Statistical Association.

Smith, J. C., Yoo, S. & Nichols, S. R. (2009). Evaluation and assessment: Effectiveness of the method, The Moore Method: A Pathway to Learner-Centered Instruction, pp. 139–149.

Wild, C. J., Pfannkuch, M., Regan, M. & Horton, N. J. (2010). Towards more accessible conceptions of statistical inference (with discussion), Journal of the Royal Statistical Society, Series A (Applied Statistics) 174: 247–295.

Workgroup on Undergraduate Statistics (2000). Guidelines for undergraduate statistics programs, http://www.amstat.org/education/curriculumguidelines.cfm, accessed August 18, 2013, Technical report, American Statistical Association.

Xie, Y. (2012). knitr: A general-purpose package for dynamic report generation in R. R package version 0.8. URL: http://CRAN.R-project.org/package=knitr, accessed August 18, 2013

Online Appendix: Additional Example Problems

The following material is proposed as an online appendix.

5.4 Estimating σ using IQR

Assume that we observe n iid observations from a normal distribution.

Questions:
i. Use the IQR of the list to estimate σ.
ii. Use simulation to assess the variability of this estimator for samples of n = 100 and 400.
iii. How does the variability of this estimator compare to ŝ (usual estimator)?
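The divisor that converts an IQR into an estimate of σ follows from the quartiles of the standard normal distribution, and it can be checked numerically with qnorm(). The sketch below is illustrative only: the variable names, seed and sample size are assumptions, not part of the original problem set. The computed constant is approximately 1.349, in line with the value 1.34898 used in the code of Figure 6.

```r
# IQR of a standard normal distribution, in standard-deviation units.
iqr_constant <- qnorm(0.75) - qnorm(0.25)

# Illustrative check on one simulated sample (seed and sizes are arbitrary choices).
set.seed(2013)
sigma <- pi
x <- rnorm(400, mean = 42, sd = sigma)

sigma_tilde <- IQR(x) / iqr_constant   # IQR-based estimate of sigma
sigma_hat   <- sd(x)                   # usual estimate of sigma

c(constant = iqr_constant, iqr_based = sigma_tilde, usual = sigma_hat)
```

A single sample only shows that both estimates land near the true value; comparing their variability requires repeating the draw many times, as the simulation study does.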
    numsim = 1000; mu = 42; n1 = 100; n2 = 400

    # Draw numsim samples of size n from N(mu, sigma) and record both estimators
    runsim = function(numsim, n, mu, sigma) {
      res1 = numeric(numsim); res2 = res1
      for (i in 1:numsim) {
        mynorms = rnorm(n, mu, sigma)
        vals = quantile(mynorms)                 # 0%, 25%, 50%, 75%, 100%
        res1[i] = (vals[4] - vals[2])/1.34898    # IQR-based estimator
        res2[i] = sd(mynorms)                    # usual estimator
      }
      return(data.frame(IQR=res1, S=res2))
    }

    res100 = runsim(numsim, n1, mu, pi)
    res400 = runsim(numsim, n2, mu, pi)
    boxplot(res100$IQR, res100$S, res400$IQR, res400$S,
      names=c("n=100 (IQR)", "n=100 (S)", "n=400 (IQR)", "n=400 (S)"),
      ylab="distribution of sigma-hat")
    text(3.5, 4.0, "True sigma is 3.14159")
    abline(h=pi); abline(v=2.5)

Figure 6: R code to carry out simulation study (estimation of σ)

[Figure 7 image: side-by-side boxplots for n=100 (IQR), n=100 (S), n=400 (IQR) and n=400 (S); vertical axis "distribution of sigma-hat"; annotation "True sigma is 3.14159".]

Figure 7: Distribution of sample estimates by estimator and sample size

5.4.1 Solution

i. We know that for a standard normal random variable $P(Z > 0.6745) = 0.25$. So we would expect that the IQR (interquartile range) would extend to $2 \times 0.6745 = 1.35$ standard units. We use this expectation to determine the estimator: $\tilde{s} = \mathrm{IQR}/1.35$.

ii. We carried out a simple simulation study with a fixed mean and standard deviation (set to $\pi$). For each of a thousand simulated samples, both $\tilde{s}$ and $\hat{s}$ (MLE) were calculated. The results are displayed in Figure 7. We note that both estimators are less variable when $n = 400$ than for $n = 100$ and conclude that the variability of the estimators goes down as a function of $\sqrt{n}$.

iii. The IQR for $\tilde{s}$ is 0.50 for n=100 and 0.25 for n=400, while the IQR for $\hat{s}$ is 0.30 for n=100 and 0.16 for n=400. We conclude that the MLE is more efficient than our ad-hoc estimator.

5.4.2 Commentary

This exercise was included with a problem set mid-way through the class as the nature and properties of estimators were explored. This problem introduced the idea of a simulation study to investigate the behavior of a new estimator.
While the analytic solution was straightforward, it required the students to think about estimation in a different way, and tap properties of the normal distribution. The empirical solution provided a glimpse into the additional variability of the IQR estimator compared to the standard estimator of standard deviation. A full analytic solution for this problem was beyond the scope of the course, but can be undertaken for specific values of n.

5.5 Assessing robustness of chi-square statistic to small cell counts

Perform a simulation study on the sensitivity of the $\chi^2$ test for the uniform distribution to expected cell counts below 5. Simulate the distribution of the test statistic for 16 and 64 observations from a uniform distribution using 8 equal-length bins (from Nolan & Speed (2000)).

5.5.1 Solution

We know that the chi-square test is recommended only in situations where the expected cell count is 5 or more in each cell. In this simulation study, we generate repeated samples from the null distribution and compare these to the large-sample distribution of the chi-square ($\chi^2$) statistic (see Figure 8). We know that in this setting, the appropriate degrees of freedom are equal to the number of bins minus 1. The main work is done using the simchisq() function, which generates data from a continuous uniform variable, then constructs the observed and expected cell counts and the chi-square statistic. This is repeated for the two scenarios and displayed in Figure 9.
We see that the observed distribution under the null is somewhat jumpy (due to the discreteness of the possible values) when the expected cell counts are low (left figure), and that the observed curve is quite similar to the chi-square distribution when the expected cell count is 8 (right figure).

    # Chi-square statistic for n uniform draws sorted into equal-length bins
    simchisq = function(n, bins) {
      vals = cut(runif(n, 0, bins), breaks=0:bins)
      obs = c(table(vals))
      expected = rep(n/bins, bins)
      return(sum(((obs - expected)^2)/expected))
    }

    library(mosaic); par(mfrow=c(1, 2))
    bins = 8

    n = 16   # Expected count per cell equal to 2
    res = do(10000) * simchisq(n, bins)
    stat = res[[1]]   # use the first column rather than relying on its name
    plot(density(stat), main="", lwd=2,
      xlab=paste("N=", n, ", ", bins, " bins", sep=""), xlim=c(0, 20))
    curve(dchisq(x, bins-1), 0, max(stat), add=TRUE, lwd=2, lty=2)

    n = 64   # Expected count per cell equal to 8
    res = do(10000) * simchisq(n, bins)
    stat = res[[1]]
    plot(density(stat), main="", lwd=2,
      xlab=paste("N=", n, ", ", bins, " bins", sep=""), xlim=c(0, 20))
    curve(dchisq(x, bins-1), 0, max(stat), add=TRUE, lwd=2, lty=2)

Figure 8: R code to carry out simulation study (chi-square problem)

5.5.2 Commentary

This problem was intended to provide more practice in the construction of simulation studies as well as introduce new idioms related to looping and writing of functions. It also serves to highlight the importance of assumptions and the idea of sampling under the null distribution (as a precursor to resampling based inference). This was included with a group of problems mid-way through the class as the nature and properties of tests of hypotheses along with sampling distributions under the null were explored.

[Figure 9 image: two density plots, "N=16, 8 bins" (left) and "N=64, 8 bins" (right); horizontal axis from 0 to 20, vertical axis "Density".]

Figure 9: Observed and expected distribution for chi-square statistic
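One way to attach a number to the visual comparison in Figure 9 is to estimate how often the simulated statistic exceeds the nominal 5% critical value of the chi-square distribution with 7 degrees of freedom. The sketch below is an illustrative extension written in base R, not part of the original solution; the function names, seed and number of simulations are assumptions.

```r
# Chi-square statistic for n uniform draws sorted into equal-length bins
# (same construction as in Figure 8, written without the mosaic package).
sim_stat <- function(n, bins) {
  obs <- c(table(cut(runif(n, 0, bins), breaks = 0:bins)))
  expected <- rep(n / bins, bins)
  sum((obs - expected)^2 / expected)
}

set.seed(1)                               # arbitrary seed, for reproducibility only
bins <- 8
crit <- qchisq(0.95, df = bins - 1)       # nominal 5% critical value

# Proportion of simulated null statistics that exceed the critical value
reject_rate <- function(n, numsim = 5000) {
  stats <- replicate(numsim, sim_stat(n, bins))
  mean(stats > crit)
}

rates <- c(n16 = reject_rate(16), n64 = reject_rate(64))
rates
```

If the large-sample approximation were exact, both rates would be close to 0.05; the gap between each rate and 0.05 gives a rough sense of how much the small expected counts matter in this setting.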
