Faster Uncertainty Quantification for Inverse Problems with Conditional Normalizing Flows

💡 Research Summary

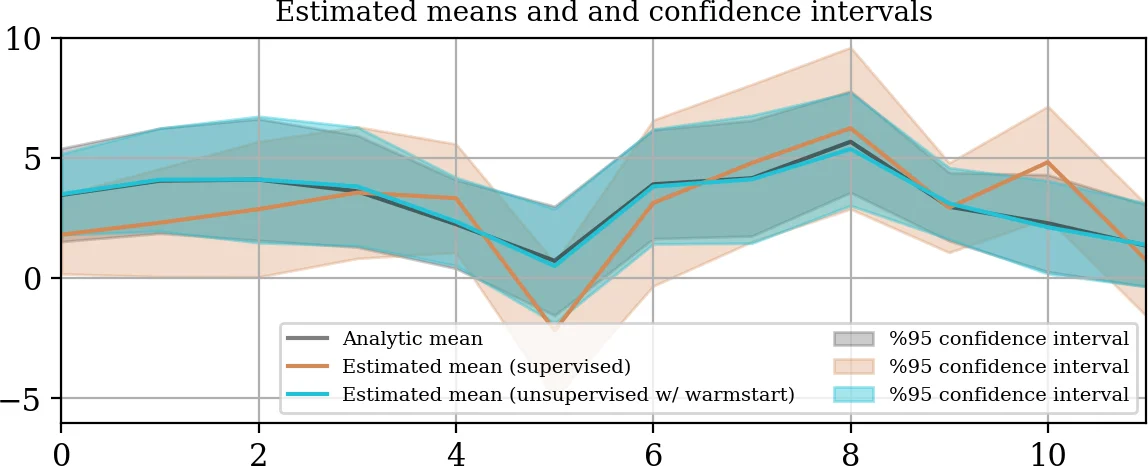

The paper addresses efficient uncertainty quantification for large‑scale inverse problems in a setting where only a limited set of paired data (observations y and corresponding solutions x) is available for supervised training, while new observations y₀ come from a slightly different distribution (a different forward operator, noise level, or prior). The authors propose a two‑step framework that uses conditional normalizing flows (CNFs) and transfer learning to bridge the supervised and unsupervised regimes.

Step 1 – Supervised learning of a conditional flow.

Given a dataset of joint samples \((x_i, y_i) \sim p_{X,Y}\), a conditional invertible map \(T: (x,y) \mapsto (z_x, z_y)\) is trained by minimizing the KL‑divergence between the push‑forward density \(T_\# p_{X,Y}\) and a standard normal distribution in latent space. The loss

\
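As an illustration of the Step 1 objective: by the change‑of‑variables formula, minimizing the KL‑divergence between \(T_\# p_{X,Y}\) and a standard normal is equivalent, up to an additive constant, to minimizing the negative log‑likelihood \(\mathbb{E}\big[\tfrac12\|T(x,y)\|^2 - \log|\det J_T(x,y)|\big]\). The sketch below evaluates that quantity for a toy scalar affine flow. The function names, the affine parameterization, and the parameters (`a`, `b`, `log_s`, `mu_y`, `log_sigma_y`) are assumptions made for illustration; they are not the paper's architecture or notation.

```python
import math

def conditional_affine_flow(x, y, a, b, log_s, mu_y, log_sigma_y):
    """Toy scalar conditional flow T(x, y) -> (z_x, z_y) plus log|det J_T|.

    Hypothetical parameterization for illustration only.
    """
    z_y = (y - mu_y) * math.exp(-log_sigma_y)     # latent code of the observation
    z_x = (x - (a * y + b)) * math.exp(-log_s)    # latent code of x, conditioned on y
    # The Jacobian is triangular, so log|det| is the sum of the log-diagonal entries.
    log_det = -log_s - log_sigma_y
    return z_x, z_y, log_det

def nll_loss(pairs, **params):
    """Monte Carlo estimate of E[0.5 * ||T(x, y)||^2 - log|det J_T(x, y)|]."""
    total = 0.0
    for x, y in pairs:
        z_x, z_y, log_det = conditional_affine_flow(x, y, **params)
        total += 0.5 * (z_x ** 2 + z_y ** 2) - log_det
    return total / len(pairs)

def sample_posterior(y0, z_x, a, b, log_s, **_unused):
    """Invert the x-branch of the toy flow: map a latent z_x to x given y0."""
    return a * y0 + b + math.exp(log_s) * z_x

if __name__ == "__main__":
    params = dict(a=0.5, b=0.0, log_s=0.0, mu_y=2.0, log_sigma_y=0.0)
    pairs = [(1.0, 2.0), (0.0, 0.0), (2.0, 3.0)]
    print("loss:", nll_loss(pairs, **params))
    # Drawing z_x from a standard normal would give approximate posterior samples.
    print("x at z_x = 0:", sample_posterior(2.0, 0.0, **params))
```

In this toy setting, once the parameters are fitted, posterior samples of x for a fixed observation are obtained by pushing standard normal draws of `z_x` through `sample_posterior`. This is the property that makes a trained conditional flow useful for uncertainty quantification.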
