Structure-Invariant Testing for Machine Translation

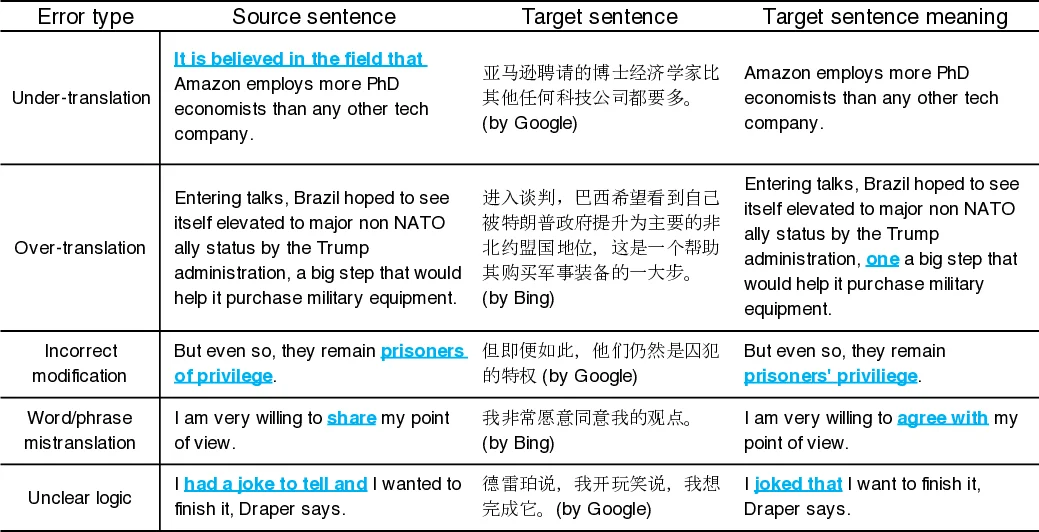

In recent years, machine translation software has increasingly been integrated into our daily lives. People routinely use machine translation for various applications, such as describing symptoms to a foreign doctor and reading political news in a foreign language. However, the complexity and intractability of neural machine translation (NMT) models that power modern machine translation make the robustness of these systems difficult to even assess, much less guarantee. Machine translation systems can return inferior results that lead to misunderstanding, medical misdiagnoses, threats to personal safety, or political conflicts. Despite its apparent importance, validating the robustness of machine translation systems is very difficult and has, therefore, been much under-explored. To tackle this challenge, we introduce structure-invariant testing (SIT), a novel metamorphic testing approach for validating machine translation software. Our key insight is that the translation results of “similar” source sentences should typically exhibit similar sentence structures. Specifically, SIT (1) generates similar source sentences by substituting one word in a given sentence with semantically similar, syntactically equivalent words; (2) represents sentence structure by syntax parse trees (obtained via constituency or dependency parsing); (3) reports sentence pairs whose structures differ quantitatively by more than some threshold. To evaluate SIT, we use it to test Google Translate and Bing Microsoft Translator with 200 source sentences as input, which led to 64 and 70 buggy issues with 69.5% and 70% top-1 accuracy, respectively. The translation errors are diverse, including under-translation, over-translation, incorrect modification, word/phrase mistranslation, and unclear logic.

💡 Research Summary

The paper introduces Structure‑Invariant Testing (SIT), a novel metamorphic testing technique designed to assess the robustness of modern neural machine translation (NMT) services without requiring reference translations. The core premise is that sentences which are semantically similar and share the same part‑of‑speech (POS) structure should produce translations with comparable syntactic structures. To operationalize this idea, the authors first generate “similar” source sentences by replacing a single token (restricted to nouns and adjectives) with context‑aware alternatives. They achieve this using a BERT‑based masked language model (MLM), which predicts the top‑k most plausible replacements given the surrounding words, thereby ensuring grammaticality and naturalness.

Each original sentence and its generated variants are fed into the translation system under test (Google Translate and Bing Microsoft Translator in the experiments). The resulting target sentences are parsed with both constituency and dependency parsers, yielding tree representations. The authors then compute a quantitative distance between the original translation’s tree and each variant’s tree, using metrics such as tree‑edit distance. If the distance exceeds a pre‑defined threshold, the pair is flagged as a potential error, indicating that a minor lexical change caused an unexpectedly large structural shift in the translation.

The experimental protocol involved crawling 200 real‑world English sentences from the web, generating on average five similar sentences per source, and applying SIT to the two commercial translators. The method uncovered 64 structural‑invariance violations in Google Translate and 70 in Bing, with top‑1 translation accuracy of roughly 70 % for both services. The identified bugs fall into five categories: under‑translation (key content omitted), over‑translation (extraneous material added), incorrect modification (misapplied adjectives or adverbs), word/phrase mistranslation, and unclear logical flow. Notably, none of these issues were captured by traditional quality metrics such as BLEU or ROUGE, highlighting SIT’s ability to surface errors that directly affect user comprehension.

Key contributions include: (1) a fully automated, oracle‑free testing framework that leverages syntactic invariance; (2) a practical implementation that combines MLM‑based lexical substitution with robust parsing techniques; (3) empirical evidence that a modest set of 200 source sentences suffices to reveal dozens of substantive translation defects in state‑of‑the‑art commercial systems; and (4) a taxonomy of error types that are invisible to n‑gram‑based evaluation.

The authors acknowledge limitations: the approach depends on the quality of the parsers, and structural divergence does not always imply a semantic error. Moreover, the current token‑replacement strategy focuses on nouns and adjectives, potentially missing errors triggered by other parts of speech. Future work is outlined to address these gaps, including extending the method to multilingual parsing, exploring richer tree similarity measures (e.g., graph neural network embeddings), integrating automatic error correction, and scaling the pipeline for continuous integration in large‑scale translation services. In sum, SIT offers a promising direction for systematic, scalable validation of machine translation systems, and its underlying metamorphic principles could be adapted to other AI‑driven software domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment