A Survey of Numerical Methods Utilizing Mixed Precision Arithmetic

💡 Research Summary

This technical report, produced by the Exascale Computing Project’s Multiprecision Focus Team, surveys state‑of‑the‑art mixed‑precision techniques across dense and sparse linear algebra, data and communication compression, preconditioning, and software ecosystem integration. The motivation is the rapid adoption of low‑precision arithmetic units, such as half‑precision (FP16) and bfloat16 tensor cores, in modern CPUs and GPUs, driven by machine‑learning workloads. These units deliver 2–4× higher throughput than single precision and up to an order of magnitude more than double precision, while 16‑bit storage halves memory traffic relative to single precision.

The report begins with an overview of low‑precision BLAS, focusing on NVIDIA’s tensor‑core‑accelerated half‑precision GEMM (HGEMM). Benchmarks show that HGEMM with FP32 output on tensor cores reaches ~100 TFLOP/s on a V100 GPU, offering both higher performance and better accuracy than pure FP16 output. Batched HGEMM implementations (e.g., in MAGMA) overcome the fixed‑size restrictions of tensor cores and outperform cuBLAS for small‑to‑moderate matrix sizes.
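
The accuracy gap between FP16 and FP32 accumulation can be illustrated with a small software emulation. The sketch below is not tensor-core code and does not model the hardware exactly: it assumes that rounding through Python's `struct` formats `e` (binary16) and `f` (binary32) is an adequate stand-in, and the helper names `fp16`, `fp32`, and `dot` are hypothetical.

```python
import struct

def fp16(x: float) -> float:
    """Round a Python float (FP64) to the nearest IEEE binary16 value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def fp32(x: float) -> float:
    """Round a Python float (FP64) to the nearest IEEE binary32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

def dot(a, b, rnd):
    """Dot product in which every product and partial sum is rounded by `rnd`."""
    acc = 0.0
    for x, y in zip(a, b):
        acc = rnd(acc + rnd(x * y))
    return acc

n = 4096
a = [fp16(0.1)] * n   # inputs are FP16 in both experiments
b = [fp16(0.1)] * n
exact = sum(x * y for x, y in zip(a, b))   # FP64 reference value

err_fp16_acc = abs(dot(a, b, fp16) - exact) / exact
err_fp32_acc = abs(dot(a, b, fp32) - exact) / exact
print(f"relative error, FP16 accumulation: {err_fp16_acc:.2e}")
print(f"relative error, FP32 accumulation: {err_fp32_acc:.2e}")
```

With FP16 accumulation the running sum stops growing once each addend falls below half the spacing between neighbouring FP16 values, which is why a wider accumulator matters even when the inputs are FP16.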

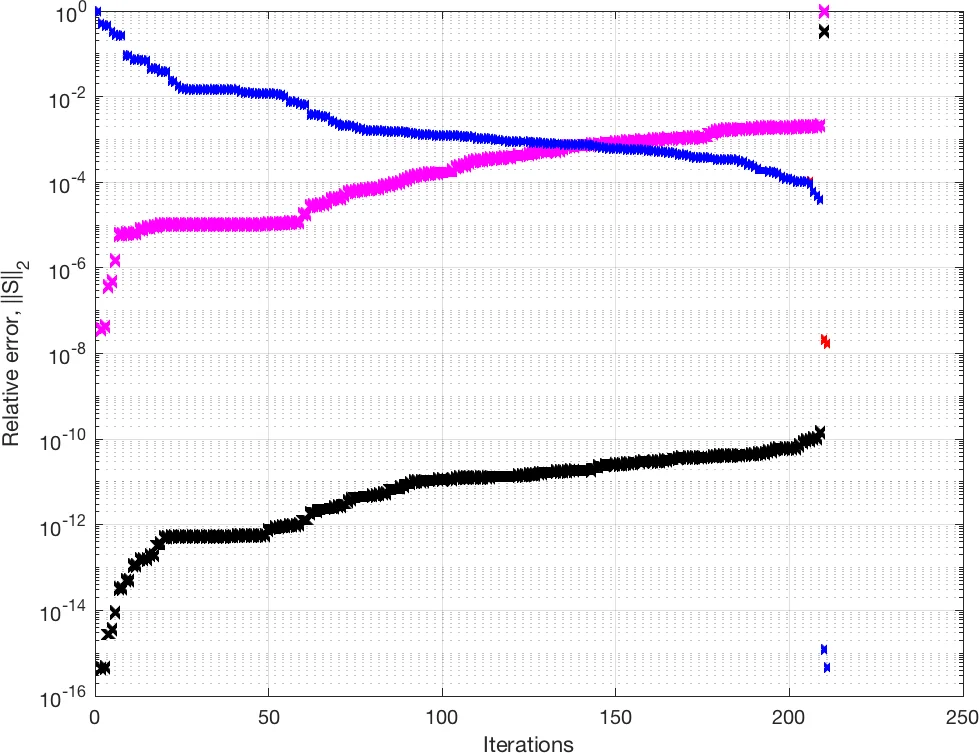

Mixed‑precision iterative refinement is presented as a generic strategy: a single‑precision (or half‑precision) factorization and solve provide an inexpensive initial solution, which is then refined by computing residuals in double precision and reusing the low‑precision factors for each correction until full double‑precision accuracy is reached. Classical iterative refinement and the more robust GMRES‑IR are described, together with scaling and shifting techniques that improve convergence for ill‑conditioned systems.
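
A minimal sketch of classical iterative refinement follows, assuming FP32 can be emulated by rounding every intermediate result through `struct`. The function names (`lu_factor_fp32`, `lu_solve_fp32`, `refine`) and the small test matrix are illustrative choices, not taken from the report. The factorization is done once in emulated FP32; only the residual and the solution update use FP64.

```python
import struct

def fp32(x: float) -> float:
    """Round to the nearest IEEE binary32 value (software emulation)."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

def lu_factor_fp32(A):
    """LU with partial pivoting; every stored result is rounded to FP32."""
    n = len(A)
    LU = [[fp32(v) for v in row] for row in A]
    piv = list(range(n))
    for k in range(n):
        p = max(range(k, n), key=lambda i: abs(LU[i][k]))
        LU[k], LU[p] = LU[p], LU[k]
        piv[k], piv[p] = piv[p], piv[k]
        for i in range(k + 1, n):
            LU[i][k] = fp32(LU[i][k] / LU[k][k])
            for j in range(k + 1, n):
                LU[i][j] = fp32(LU[i][j] - fp32(LU[i][k] * LU[k][j]))
    return LU, piv

def lu_solve_fp32(LU, piv, b):
    """Solve with the FP32 factors: permute, forward-substitute, back-substitute."""
    n = len(LU)
    y = [fp32(b[p]) for p in piv]
    for i in range(n):                      # unit lower triangle
        for j in range(i):
            y[i] = fp32(y[i] - fp32(LU[i][j] * y[j]))
    for i in reversed(range(n)):            # upper triangle
        for j in range(i + 1, n):
            y[i] = fp32(y[i] - fp32(LU[i][j] * y[j]))
        y[i] = fp32(y[i] / LU[i][i])
    return y

def refine(A, b, iters=5):
    """Low-precision solve followed by FP64 residual corrections."""
    n = len(A)
    LU, piv = lu_factor_fp32(A)             # factor once, in low precision
    x = lu_solve_fp32(LU, piv, b)
    history = []
    for _ in range(iters):
        # Residual in FP64 (plain Python floats)
        r = [b[i] - sum(A[i][j] * x[j] for j in range(n)) for i in range(n)]
        history.append(max(abs(v) for v in r))
        d = lu_solve_fp32(LU, piv, r)       # correction reuses the FP32 factors
        x = [x[i] + d[i] for i in range(n)] # update in FP64
    return x, history

# Well-conditioned test problem (an assumption made for the demo)
n = 6
A = [[1.0 / (i + j + 1) + (2.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
x_true = [float(i + 1) for i in range(n)]
b = [sum(A[i][j] * x_true[j] for j in range(n)) for i in range(n)]

x, history = refine(A, b)
for k, res in enumerate(history):
    print(f"iteration {k}: max residual = {res:.2e}")
```

The first residual reflects the accuracy of the FP32 solve alone; later entries show how quickly the FP64 corrections recover double-precision accuracy on a well-conditioned system. Ill-conditioned problems are where the scaling, shifting, and GMRES-based variants become relevant.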

The survey continues with mixed‑precision factorizations. Dense LU, Cholesky, and QR algorithms exploit FP16 matrix‑panel updates while retaining double‑precision accuracy through high‑precision updates of the trailing submatrix. A quantized integer LU method demonstrates that 8‑bit integer arithmetic, combined with appropriate scaling, can dramatically reduce memory bandwidth while delivering acceptable accuracy for certain applications.

Section 3 addresses data and communication compression. Mixed‑precision MPI reduces network traffic by transmitting operands in low precision and reconstructing them at the receiver. Approximate FFTs with tunable accuracy‑for‑speed trade‑offs and dynamic splitting strategies further lower communication costs in spectral methods.
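
The idea of sending operands in low precision can be sketched without any MPI library. The example below only packs a buffer as FP16 and unpacks it again, which is the step a sender and receiver would each perform around the actual transfer. The function names `compress_fp16` and `decompress_fp16` are hypothetical, and the data are assumed to lie well inside the FP16 range.

```python
import math
import struct

def compress_fp16(values):
    """Sender side: pack FP64 values as IEEE binary16, 2 bytes per entry."""
    return struct.pack(f"<{len(values)}e", *values)

def decompress_fp16(payload):
    """Receiver side: widen the FP16 payload back to Python floats (FP64)."""
    return list(struct.unpack(f"<{len(payload) // 2}e", payload))

values = [math.sin(0.01 * i) for i in range(1000)]   # sample data in [-1, 1]

full_payload = struct.pack(f"<{len(values)}d", *values)   # FP64 message
small_payload = compress_fp16(values)                      # FP16 message
restored = decompress_fp16(small_payload)

max_err = max(abs(v - r) for v, r in zip(values, restored))
print(f"FP64 message: {len(full_payload)} bytes")
print(f"FP16 message: {len(small_payload)} bytes")
print(f"max absolute error after round trip: {max_err:.2e}")
```

The message shrinks by a factor of four relative to FP64, at the cost of a rounding error bounded by the FP16 unit roundoff times the magnitude of the data. Values outside the FP16 range would need scaling first, which this sketch omits.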

Sparse linear algebra receives extensive treatment. Mixed‑precision sparse LU and QR factorizations, as well as direct solvers, are shown to achieve substantial speedups by performing the bulk of matrix‑vector products in low precision. Krylov subspace methods, including Lanczos‑CG, Arnoldi‑GMRES, and their mixed‑precision variants, benefit from low‑precision matrix‑vector kernels and high‑precision residual corrections. GMRES‑IR combined with low‑precision preconditioners can be 2–3× faster than traditional double‑precision GMRES while preserving convergence.

Preconditioner design (Section 6) emphasizes decoupling arithmetic precision from memory precision. Multigrid smoothers can run in FP16, while coarse‑grid corrections use FP32 or FP64, yielding up to 75% memory savings without sacrificing convergence.
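
Decoupling memory precision from arithmetic precision can be sketched with a Jacobi iteration, used here as a simple stand-in for a multigrid smoother. The inverse diagonal is stored as FP16 bytes and widened to FP64 only when it is applied, so all arithmetic stays in FP64. The tridiagonal test matrix and the names `store_fp16`, `load_as_fp64`, and `matvec` are assumptions made for the illustration.

```python
import struct

def store_fp16(values):
    """Memory precision: keep the data as IEEE binary16, 2 bytes per entry."""
    return struct.pack(f"<{len(values)}e", *values)

def load_as_fp64(buf):
    """Arithmetic precision: widen to Python floats (FP64) before computing."""
    return struct.unpack(f"<{len(buf) // 2}e", buf)

n = 50
diag = [4.0 + 0.1 * i for i in range(n)]   # diagonally dominant tridiagonal matrix

def matvec(x):
    """y = A x for A = tridiag(-1, diag, -1), computed in FP64."""
    y = []
    for i in range(n):
        s = diag[i] * x[i]
        if i > 0:
            s -= x[i - 1]
        if i < n - 1:
            s -= x[i + 1]
        y.append(s)
    return y

inv_diag_fp16 = store_fp16([1.0 / d for d in diag])   # preconditioner storage
savings = 1.0 - len(inv_diag_fp16) / (8 * n)          # versus FP64 storage

x_true = [1.0] * n
b = matvec(x_true)
x = [0.0] * n
for _ in range(80):
    Ax = matvec(x)
    m = load_as_fp64(inv_diag_fp16)                    # widened on the fly
    x = [x[i] + m[i] * (b[i] - Ax[i]) for i in range(n)]

err = max(abs(x[i] - x_true[i]) for i in range(n))
print(f"storage saved versus FP64: {savings:.0%}")
print(f"max error after 80 sweeps: {err:.2e}")
```

Because the preconditioner only needs to approximate the inverse diagonal, the FP16 rounding changes the contraction rate slightly but not the limit of the iteration. Two bytes instead of eight per entry is also where a figure of 75% comes from when FP16 storage replaces FP64.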

The report also surveys the integration of mixed‑precision capabilities into the xSDK ecosystem. Libraries such as Ginkgo, hypre, Kokkos Kernels, MAGMA, PETSc, PLASMA, SLATE, STRUMPACK, SuperLU, and Trilinos now expose APIs for FP16/bfloat16 kernels, automatic precision selection, and mixed‑precision MPI.

Finally, the authors discuss IEEE‑754 format emulators and rounding‑error analysis, providing a theoretical foundation for predicting error growth when switching precisions. Such emulators enable systematic testing of precision‑mixing strategies across architectures.
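
A very reduced form of such an emulator can be written in a few lines. The sketch below rounds a value to `t` significant bits with round-to-nearest-even and compares the observed error of recursive summation against the standard first-order bound. It is not the emulator discussed in the report: the function `round_to_bits` is hypothetical, and exponent range, overflow, and subnormals are deliberately not modelled.

```python
import math
import struct
from fractions import Fraction

def round_to_bits(x: float, t: int) -> float:
    """Round x to t significant bits (nearest, ties to even); exponent range unlimited."""
    if x == 0.0 or not math.isfinite(x):
        return x
    m, e = math.frexp(x)                 # x = m * 2**e with 0.5 <= |m| < 1
    return math.ldexp(round(m * (1 << t)), e - t)

# Significand widths, including the implicit leading bit
SIGNIFICAND_BITS = {"bfloat16": 8, "fp16": 11, "fp32": 24, "fp64": 53}

def rounded_sum(values, t):
    """Recursive summation; each partial sum is formed in FP64, then rounded to t bits."""
    acc = 0.0
    for v in values:
        acc = round_to_bits(acc + v, t)
    return acc

# Cross-check the emulation against the hardware FP32 format via struct
fp32_reference = struct.unpack("<f", struct.pack("<f", 0.1))[0]

n = 100
results = {}
for name, t in SIGNIFICAND_BITS.items():
    u = 2.0 ** -t                                   # unit roundoff
    x = round_to_bits(0.1, t)                       # input representable in the format
    exact = n * Fraction(x)                         # exact rational reference
    err = float(abs(Fraction(rounded_sum([x] * n, t)) - exact))
    gamma = (n - 1) * u / (1 - (n - 1) * u)         # gamma_{n-1} from the standard analysis
    bound = gamma * float(exact)
    results[name] = (err, bound)
    print(f"{name:9s} u = {u:.1e}  error = {err:.2e}  bound = {bound:.2e}")
```

The bound used here is the classical worst-case estimate for recursive summation, so the observed errors are expected to sit below it, often by a wide margin.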

Overall, the document argues that mixed‑precision algorithms are essential for achieving exascale performance: they alleviate the growing memory‑bandwidth bottleneck, exploit the massive throughput of tensor cores, and retain double‑precision accuracy where needed. Future work includes developing automated precision‑selection frameworks, extending portability across emerging hardware (e.g., ARM‑based GPUs, future tensor‑core designs), and conducting large‑scale application studies to validate the reported speedups in real scientific workloads.
