Automatic phantom test pattern classification through transfer learning with deep neural networks

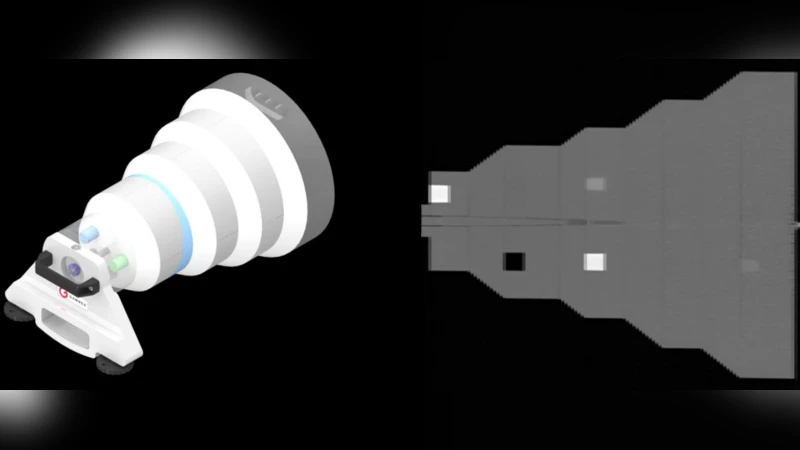

Imaging phantoms are test patterns used to measure image quality in computed tomography (CT) systems. A new phantom platform (Mercury Phantom, Gammex) provides test patterns for estimating the task transfer function (TTF) or noise power spectrum (NPS) and simulates different patient sizes. Determining which image slices are suitable for analysis currently requires manual annotation of these patterns by an expert, as subtle defects may make an image unsuitable for measurement. We propose a method of automatically classifying these test patterns in a series of phantom images using deep learning techniques. By adapting a convolutional neural network based on the VGG19 architecture with weights trained on ImageNet, we use transfer learning to produce a classifier for this domain. The classifier is trained and evaluated with over 3,500 phantom images acquired at a university medical center. Input channels for color images are successfully adapted to convey contextual information for phantom images. A series of ablation studies is employed to verify design aspects of the classifier and evaluate its performance under varying training conditions. Our solution makes extensive use of image augmentation to produce a classifier that classifies typical phantom images with 98% accuracy, while maintaining as much as 86% accuracy when the phantom is improperly imaged.

💡 Research Summary

The paper addresses a practical bottleneck in computed tomography (CT) quality assurance: the manual identification of usable test‑pattern slices within phantom images. Conventional workflows require an experienced radiologist or physicist to examine each slice, because subtle defects, positioning errors, or excessive noise can render a slice unsuitable for downstream quantitative analyses such as task transfer function (TTF) or noise power spectrum (NPS) estimation. The authors propose a fully automated classification pipeline that uses deep convolutional neural networks (CNNs) and transfer learning to distinguish between four categories of phantom slices: (1) normal, (2) defect‑containing, (3) improperly acquired (e.g., mis‑centering, high noise), and (4) other anomalous cases.

Dataset and labeling

A total of 3,527 phantom slices were collected from routine CT examinations performed at a single university medical center. Each slice was independently reviewed by two senior imaging physicists and assigned to one of the four classes. Disagreements were resolved by a third expert, ensuring a high‑quality ground‑truth set. The distribution was roughly balanced, with a slight predominance of normal slices (≈55 %). All images were originally single‑channel (grayscale) DICOMs with a matrix size of 512 × 512 pixels.
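The two-reviewer protocol with a third-expert tiebreak can be sketched in a few lines. This is only an illustration: the class names, the `adjudicate` function, and the rule that the third expert's label is taken as final are assumptions, since the summary does not describe how disagreements were recorded or resolved in detail.

```python
from typing import Optional

# Hypothetical identifiers for the four categories described in the summary.
CLASSES = ("normal", "defect", "improperly_acquired", "other")


def adjudicate(label_a: str, label_b: str, tiebreak: Optional[str] = None) -> str:
    """Return the consensus label for one slice.

    If the two reviewers agree, their label is used. Otherwise the third
    expert's label (tiebreak) is taken as final, which is an assumption.
    """
    for label in (label_a, label_b, tiebreak):
        if label is not None and label not in CLASSES:
            raise ValueError(f"unknown label: {label!r}")
    if label_a == label_b:
        return label_a
    if tiebreak is None:
        raise ValueError("reviewers disagree and no third opinion was given")
    return tiebreak
```

For example, `adjudicate("normal", "defect", "defect")` returns `"defect"`, while two matching labels never consult the third opinion.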

Model architecture and input engineering

The backbone of the classifier is the VGG‑19 network, originally trained on ImageNet. To adapt a model that expects three colour channels to a medical imaging domain, the authors devised a custom three‑channel encoding:

- Red channel – raw phantom slice (linear‑scaled).

- Green channel – histogram‑equalized version, emphasizing low‑contrast structures.

- Blue channel – Sobel‑filtered edge map, highlighting high‑frequency features such as cracks or sharp edges.

This encoding preserves the spatial resolution of the original slice while providing complementary contextual cues that the pretrained filters can exploit without extensive retraining.
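The three-channel encoding described above can be sketched in dependency-free Python. The function names, the 256-bin histogram, the replicated border in the Sobel filter, and the rescaling of every channel to [0, 1] are assumptions made for illustration; the summary does not specify these details, and a real pipeline would more likely use an array library.

```python
from typing import List, Tuple

Image = List[List[float]]


def linear_scale(img: Image) -> Image:
    """Rescale pixel values linearly to [0, 1]."""
    flat = [v for row in img for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid division by zero on constant images
    return [[(v - lo) / span for v in row] for row in img]


def equalize(img: Image, bins: int = 256) -> Image:
    """Histogram equalization: map each pixel to the empirical CDF of its bin."""
    scaled = linear_scale(img)
    idx = [[min(int(v * bins), bins - 1) for v in row] for row in scaled]
    hist = [0] * bins
    for row in idx:
        for i in row:
            hist[i] += 1
    total = sum(hist)
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running / total)
    return [[cdf[i] for i in row] for row in idx]


def sobel(img: Image) -> Image:
    """Sobel gradient magnitude, rescaled to [0, 1], with replicated borders."""
    h, w = len(img), len(img[0])

    def px(r: int, c: int) -> float:
        return img[min(max(r, 0), h - 1)][min(max(c, 0), w - 1)]

    out = []
    for r in range(h):
        row = []
        for c in range(w):
            gx = (px(r - 1, c + 1) + 2 * px(r, c + 1) + px(r + 1, c + 1)
                  - px(r - 1, c - 1) - 2 * px(r, c - 1) - px(r + 1, c - 1))
            gy = (px(r + 1, c - 1) + 2 * px(r + 1, c) + px(r + 1, c + 1)
                  - px(r - 1, c - 1) - 2 * px(r - 1, c) - px(r - 1, c + 1))
            row.append((gx * gx + gy * gy) ** 0.5)
        out.append(row)
    return linear_scale(out)


def encode_three_channel(img: Image) -> List[List[Tuple[float, float, float]]]:
    """Stack raw, equalized, and edge maps as (R, G, B) for every pixel."""
    red, green, blue = linear_scale(img), equalize(img), sobel(img)
    return [[(red[r][c], green[r][c], blue[r][c]) for c in range(len(img[0]))]
            for r in range(len(img))]
```

Calling `encode_three_channel` on a 512 × 512 slice would return a 512 × 512 grid of RGB triples, so the spatial resolution is unchanged and only the channel dimension is added.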

Training protocol and augmentation

The dataset was split 80 %/10 %/10 % for training, validation, and testing. Because the absolute number of images is modest for deep learning, extensive data augmentation was employed: random rotations (±15°), scaling (0.9–1.1×), horizontal/vertical flips, brightness and contrast jitter, Gaussian noise injection (σ = 0–5 HU), and random cropping to 448 × 448 pixels followed by resizing to 224 × 224 (the VGG input size). The optimizer was Adam with an initial learning rate of 1 × 10⁻⁴, decayed by a factor of 0.5 every 10 epochs. Batch normalization layers were retained from the ImageNet‑pretrained weights, and a dropout of 0.5 was added after the fully‑connected layers to mitigate overfitting.
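As a rough sketch of the protocol above, the snippet below computes the step-decayed learning rate and draws one set of augmentation parameters within the stated ranges. The names `step_decay_lr`, `AugmentParams`, and `sample_augmentation` are hypothetical, and uniform sampling, a flip probability of 0.5, and 0-indexed epochs are assumptions. Brightness and contrast jitter are omitted because the summary gives no ranges for them.

```python
import random
from dataclasses import dataclass


def step_decay_lr(epoch: int, base_lr: float = 1e-4,
                  factor: float = 0.5, step: int = 10) -> float:
    """Learning rate at a 0-indexed epoch: multiplied by `factor` every `step` epochs."""
    return base_lr * factor ** (epoch // step)


@dataclass
class AugmentParams:
    rotation_deg: float     # random rotation in [-15, 15] degrees
    scale: float            # isotropic scaling in [0.9, 1.1]
    flip_horizontal: bool
    flip_vertical: bool
    noise_sigma_hu: float   # Gaussian noise standard deviation in [0, 5] HU
    crop_top: int           # top-left corner of the 448 x 448 crop
    crop_left: int


def sample_augmentation(rng: random.Random, image_size: int = 512,
                        crop_size: int = 448) -> AugmentParams:
    """Draw one set of augmentation parameters (uniform sampling is assumed)."""
    max_offset = image_size - crop_size  # 64 pixels of slack for the crop
    return AugmentParams(
        rotation_deg=rng.uniform(-15.0, 15.0),
        scale=rng.uniform(0.9, 1.1),
        flip_horizontal=rng.random() < 0.5,
        flip_vertical=rng.random() < 0.5,
        noise_sigma_hu=rng.uniform(0.0, 5.0),
        crop_top=rng.randint(0, max_offset),
        crop_left=rng.randint(0, max_offset),
    )
```

Under these assumptions the rate is 1 × 10⁻⁴ for epochs 0 to 9, 5 × 10⁻⁵ for epochs 10 to 19, and so on. The sampled crop would then be resized from 448 × 448 to 224 × 224 before being passed to the network.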

Performance metrics

On the held‑out test set, the model achieved an overall accuracy of 98.2 %, with class‑wise precision/recall values exceeding 95 % for normal and defect categories. The “improperly acquired” class, which is the most clinically relevant failure mode, was identified with 86 % accuracy, demonstrating robustness to real‑world acquisition variability. The receiver operating characteristic (ROC) curves for each class yielded area‑under‑curve (AUC) scores above 0.99, indicating near‑perfect discriminability.
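Overall accuracy and class-wise precision and recall all follow from a confusion matrix, as the minimal sketch below shows. The function name `per_class_metrics` and the row/column convention are assumptions, and any matrix passed to it here would be toy data, not results from the paper. Note that a per-class "accuracy" figure is often what this sketch calls recall, though the summary does not say which definition was used.

```python
from typing import Dict, List, Sequence


def per_class_metrics(confusion: List[List[int]],
                      class_names: Sequence[str]) -> Dict[str, object]:
    """Compute overall accuracy and per-class precision/recall.

    confusion[i][j] is the number of slices whose true class is i and
    whose predicted class is j (an assumed convention).
    """
    n = len(class_names)
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[i][i] for i in range(n))
    metrics: Dict[str, object] = {"accuracy": correct / total}
    for i, name in enumerate(class_names):
        true_pos = confusion[i][i]
        predicted = sum(row[i] for row in confusion)  # column sum
        actual = sum(confusion[i])                    # row sum
        metrics[name] = {
            "precision": true_pos / predicted if predicted else 0.0,
            "recall": true_pos / actual if actual else 0.0,
        }
    return metrics
```

With a toy two-class matrix `[[8, 2], [1, 9]]`, overall accuracy is 17/20, the first class has recall 8/10, and its precision is 8/9.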

Ablation studies

Four systematic ablations were performed to quantify the contribution of each design choice:

- Random initialization vs. ImageNet pre‑training – removing the pretrained weights reduced accuracy by ~5.8 %.

- Three‑channel encoding vs. single‑channel grayscale – collapsing to a single channel lowered accuracy by ~3.2 %.

- Data augmentation vs. none – disabling augmentation caused a 4.5–6.7 % drop, confirming its critical role in generalization.

- VGG‑19 vs. VGG‑16 – the deeper VGG‑19 outperformed VGG‑16 by ~1.5 % accuracy, likely due to its larger receptive field.

These experiments collectively validate that transfer learning, multi‑channel contextual encoding, and aggressive augmentation are synergistic rather than interchangeable.

Model interpretability

Grad‑CAM visualizations were generated for correctly and incorrectly classified examples. In normal slices, the network’s attention focused on the central high‑density region of the phantom, whereas in defect slices it highlighted the localized low‑contrast anomalies. For improperly acquired slices, the activation maps emphasized peripheral regions where truncation or excessive noise was present. This alignment with human expert reasoning enhances trust in the system for clinical deployment.
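For readers unfamiliar with Grad‑CAM, the core computation is small: each feature map of a chosen convolutional layer is weighted by the spatial mean of the class-score gradient with respect to that map, the weighted maps are summed, and negative values are clipped. The sketch below assumes the activations and gradients have already been extracted from the network as nested lists of shape channels × height × width. The function name and the normalization to [0, 1] are illustrative choices, not details taken from the paper.

```python
from typing import List

FeatureMaps = List[List[List[float]]]  # [channel][row][col]


def grad_cam(activations: FeatureMaps, gradients: FeatureMaps) -> List[List[float]]:
    """Return a Grad-CAM heat map normalized to [0, 1].

    activations: feature maps from one convolutional layer.
    gradients:   gradients of the target class score w.r.t. those maps.
    """
    k = len(activations)
    h, w = len(activations[0]), len(activations[0][0])
    # Channel weights: global average of each gradient map.
    weights = [sum(sum(row) for row in g) / (h * w) for g in gradients]
    # Weighted sum over channels followed by ReLU.
    cam = [[max(0.0, sum(weights[c] * activations[c][r][col] for c in range(k)))
            for col in range(w)]
           for r in range(h)]
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[v / peak for v in row] for row in cam]
    return cam
```

The resulting low-resolution map is typically upsampled to the input size and overlaid on the slice, which is how attention on peripheral or central regions of the phantom becomes visible.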

Practical integration and limitations

The authors outline a straightforward integration pathway: the classifier can be embedded into the CT scanner’s reconstruction pipeline or PACS, automatically flagging unusable slices and prompting re‑scanning before the patient leaves the suite. This could reduce repeat scans, improve workflow efficiency, and standardize quality assurance across sites. However, the study’s external validity is limited by its single‑institution data source and the exclusive use of a single phantom model (Mercury Phantom, Gammex). Future work should evaluate cross‑vendor generalization, incorporate additional phantom designs, and extend the approach to three‑dimensional volumetric analysis rather than slice‑wise classification.

Conclusion

By combining ImageNet‑pretrained VGG‑19, a novel three‑channel input scheme, and comprehensive data augmentation, the authors deliver a high‑performing, robust classifier for CT phantom test‑pattern identification. The system achieves 98 % overall accuracy and maintains respectable performance (86 %) under suboptimal acquisition conditions, effectively automating a traditionally manual, time‑consuming step in CT quality control. The work demonstrates the feasibility of transfer learning for niche medical imaging tasks and sets the stage for broader adoption of AI‑driven quality assurance in radiology.
