Deep Geometric Texture Synthesis

💡 Research Summary

The paper introduces a novel generative framework for learning and transferring geometric textures on triangle meshes directly from a single reference model. Unlike prior approaches that rely on surface parameterization, normal‑only displacement maps, or genus‑restricted templates, the proposed method operates on the intrinsic structure of meshes and is oblivious to genus. The core idea is to treat each triangular face together with its three one‑ring neighboring faces as a fixed‑size receptive field. For every edge of a face, a rotation‑ and translation‑invariant 4‑dimensional feature is extracted: the edge length plus the three coordinates of the opposite vertex, expressed in a local coordinate system defined by the face normal, the edge direction, and their cross product. These features are first processed by a 1×1 convolution to obtain an order‑invariant face embedding, after which a symmetric face convolution aggregates information from the three neighboring faces using permutation‑invariant operations (e.g., averaging).
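The per‑edge feature and the order‑invariant embedding described above can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' implementation, and it rests on several assumptions that the summary does not state: the "opposite vertex" is taken to be the vertex across the edge in the adjacent face, the local frame is centered at the edge midpoint, and the embedding uses a ReLU followed by mean pooling. The function names (`edge_feature`, `face_features`, `face_embedding`, `random_rigid_motion`) are hypothetical.

```python
import numpy as np

def edge_feature(a, b, c, d):
    """4-D feature for edge (a, b) of face (a, b, c).

    d is the vertex across the edge in the adjacent face (assumption).
    Returns [edge length, d in the local frame (3 coordinates)].
    """
    edge = b - a
    length = np.linalg.norm(edge)
    x_axis = edge / length                    # edge direction
    normal = np.cross(b - a, c - a)
    z_axis = normal / np.linalg.norm(normal)  # face normal
    y_axis = np.cross(z_axis, x_axis)         # completes the frame
    origin = 0.5 * (a + b)                    # assumption: edge midpoint
    rel = d - origin
    return np.array([length, rel @ x_axis, rel @ y_axis, rel @ z_axis])

def face_features(tri, opp):
    """Stack the three edge features of one face into a (3, 4) array.

    tri: (3, 3) face vertices; opp[i] is the vertex opposite edge
    (tri[i], tri[(i + 1) % 3]) in the neighboring face.
    """
    return np.stack([
        edge_feature(tri[i], tri[(i + 1) % 3], tri[(i + 2) % 3], opp[i])
        for i in range(3)
    ])

def face_embedding(edge_feats, weight, bias):
    """Shared linear map per edge (a 1x1 convolution), then mean pooling.

    The ReLU and the mean are assumptions; any symmetric pooling would
    make the result independent of the edge order.
    """
    per_edge = np.maximum(edge_feats @ weight + bias, 0.0)
    return per_edge.mean(axis=0)

def random_rigid_motion(rng):
    """Random proper rotation (det = +1) and translation, for testing."""
    q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
    if np.linalg.det(q) < 0:
        q[:, 0] *= -1.0
    return q, rng.normal(size=3)
```

Because every coordinate is measured relative to a frame built from the face itself, applying the same rotation and translation to all vertices leaves the features unchanged, and the pooling step removes the dependence on which edge is listed first.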

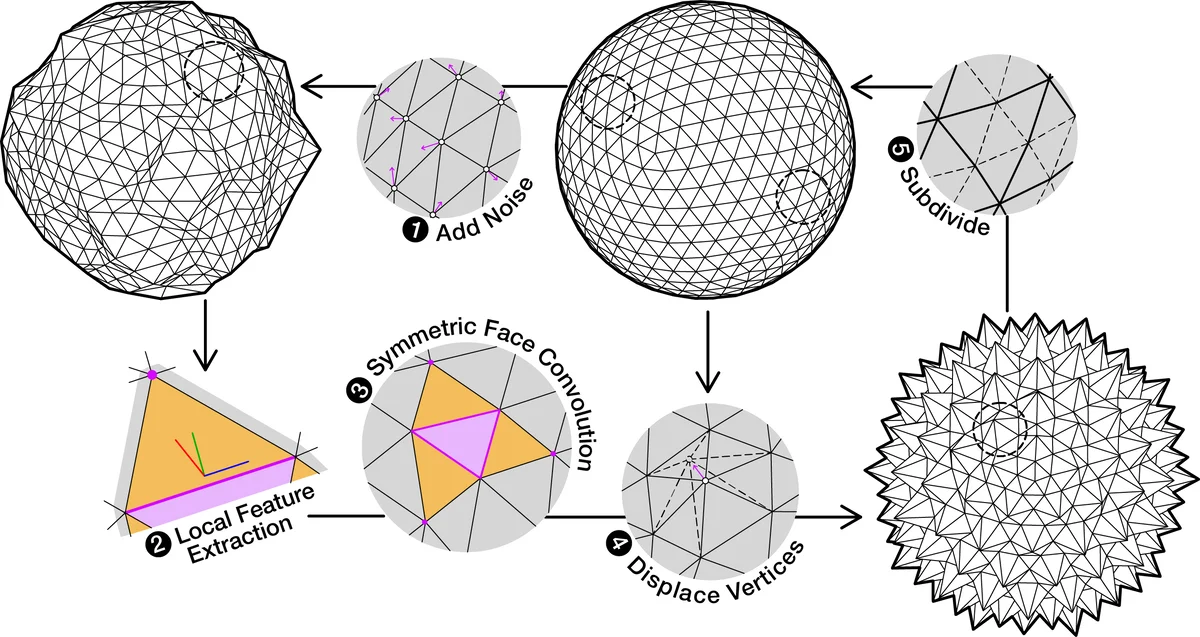

Training proceeds hierarchically across multiple resolutions. A low‑resolution template (e.g., an icosahedron) is repeatedly subdivided and optimized toward the reference mesh, so that each level contributes one scale of a multi‑scale training set. At each scale, Gaussian noise is added to the vertex positions and the noisy mesh is fed into the generator. The generator’s final layer predicts a displacement vector for each vertex of a face; the per‑vertex displacement is obtained by averaging the contributions of all incident faces. The displaced mesh is then subdivided and passed to the next, finer scale. A discriminator at each scale, built from the same face‑convolution layers, learns to distinguish real patches (taken from the multi‑scale reference) from synthesized ones, thereby enforcing that local statistics match the reference distribution.
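The step that turns per‑face predictions into per‑vertex displacements can be illustrated with a short NumPy sketch. It assumes the generator output is stored as an array of shape `(F, 3, 3)`, holding one 3‑D displacement for each of the three corners of each face; that layout and the function name `average_face_displacements` are illustrative choices, not details taken from the paper.

```python
import numpy as np

def average_face_displacements(num_vertices, faces, face_disp):
    """Average the displacements that incident faces predict per vertex.

    faces:     (F, 3) integer vertex indices of each triangle.
    face_disp: (F, 3, 3) displacement predicted by face f for its
               corner k, stored at face_disp[f, k] (assumed layout).
    Returns a (num_vertices, 3) array of per-vertex displacements.
    """
    total = np.zeros((num_vertices, 3))
    count = np.zeros(num_vertices)
    flat_idx = faces.reshape(-1)
    # np.add.at accumulates correctly when an index appears many times.
    np.add.at(total, flat_idx, face_disp.reshape(-1, 3))
    np.add.at(count, flat_idx, 1.0)
    # Vertices used by no face keep a zero displacement.
    count = np.maximum(count, 1.0)
    return total / count[:, None]

# Two triangles sharing the edge (1, 2).
faces = np.array([[0, 1, 2], [1, 3, 2]])
face_disp = np.stack([np.full((3, 3), 1.0), np.full((3, 3), 3.0)])
vertex_disp = average_face_displacements(4, faces, face_disp)
```

In this toy case the two shared vertices receive the mean of both faces' predictions, while vertices 0 and 3 each take the prediction of their single incident face.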

Because the network predicts full 3‑D vertex displacements, it can move vertices not only along the normal but also tangentially, enabling synthesis of complex geometric textures that normal‑only displacement maps cannot express. The hierarchical GAN architecture allows the model to first capture coarse shape variations and then progressively add fine‑grained details, similar to multi‑scale texture synthesis in images.

Experiments demonstrate successful transfer of a variety of geometric textures (spikes, cubic stylizations, wave‑like deformations) from a single source mesh to target meshes with different triangulations and genera (including genus 1 to genus 4). The synthesized surfaces visually match the local statistics of the reference, while requiring no UV mapping or explicit surface parameterization. Moreover, by sampling different latent codes, the model can generate diverse variations of the learned texture, confirming its probabilistic nature.

The paper’s contributions are threefold: (1) a rotation‑ and translation‑invariant face feature representation coupled with symmetric face convolutions, (2) a hierarchical GAN that learns multi‑scale geometric texture distributions from a single mesh, and (3) a genus‑oblivious texture transfer pipeline that synthesizes new geometry rather than copying patches. Limitations include the reliance on local patches, which may lead to global consistency issues for large‑scale deformations, and the use of a single reference, which limits style diversity. Suggested future work includes integrating global context, training on multiple exemplars, and extending the framework to modify mesh topology (e.g., creating holes) in addition to displacing vertices. This research opens new avenues for automatic, high‑fidelity mesh detailing in graphics, game asset creation, and scientific visualization.
