Did You Remember to Test Your Tokens?

Authentication is a critical security feature for confirming the identity of a system’s users, typically implemented with help from frameworks like Spring Security. It is a complex feature which should be robustly tested at all stages of development. Unit testing is an effective technique for fine-grained verification of feature behaviors, yet it is not widely used to test authentication. Part of the problem is that resources to help developers unit test security features are limited. Most security testing guides recommend test cases from a “black box” or penetration testing perspective. These resources are not easily applicable to developers who are writing new unit tests or who want a security-focused perspective on coverage. In this paper, we address these issues by applying a grounded theory-based approach to identify common (unit) test cases for token authentication through analysis of 481 JUnit tests exercising Spring Security-based authentication implementations from 53 open source Java projects. The outcome of this study is a developer-friendly unit testing guide organized as a catalog of 53 test cases for token authentication, representing unique combinations of 17 scenarios, 40 conditions, and 30 expected outcomes learned from the data set in our analysis. We supplement the test guide with common test smells to avoid. To verify the accuracy and usefulness of our testing guide, we sought feedback from selected developers, some of whom authored unit tests in our dataset.

💡 Research Summary

The paper addresses the gap between the critical importance of authentication security and the relative neglect of unit testing for authentication mechanisms, especially token‑based authentication implemented with Spring Security. While most existing security testing guidance focuses on black‑box techniques such as penetration testing, developers lack concrete, white‑box unit‑test‑oriented test cases. To fill this void, the authors conduct an empirical study using classic grounded theory to inductively discover common unit‑test scenarios for token authentication.

Data collection starts with mining GitHub for Java projects that use Spring Security and JUnit. An automated pipeline (GHTorrent, reaper, custom scripts, and JavaParser) filters 100,000 random Java repositories down to 53 projects that contain 481 unique JUnit test methods exercising authentication-related classes. Forks, duplicate JHipster-generated test suites, and irrelevant session-related code are removed to ensure a clean corpus.

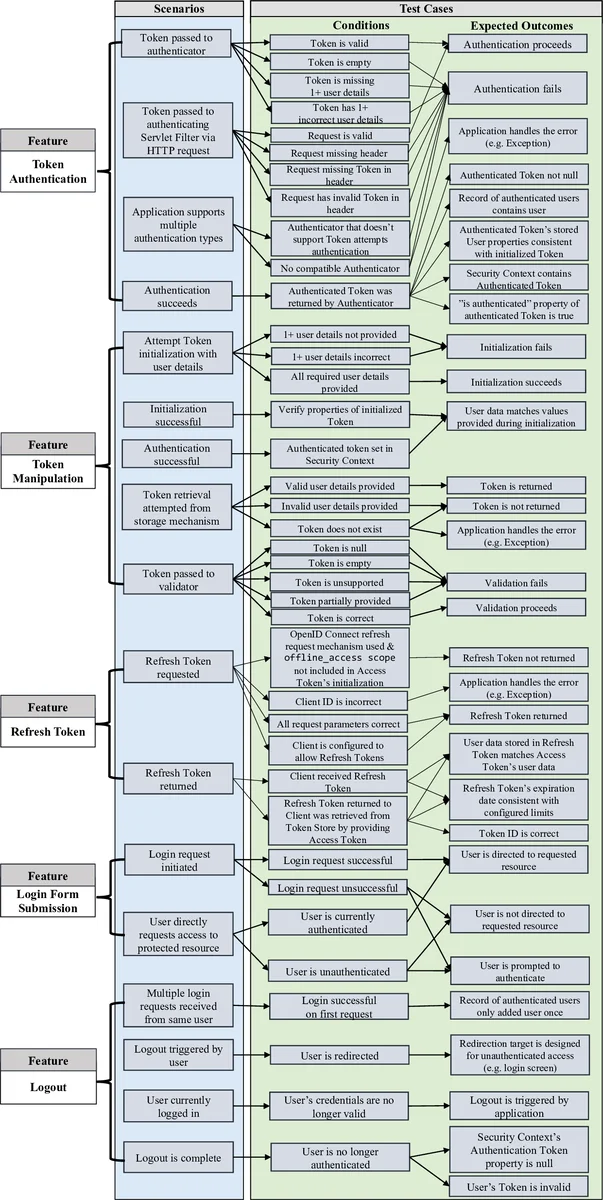

Two researchers independently perform open coding on each test method, extracting the “Given‑When‑Then” components (context, action, condition, expected outcome) and recording them as Gherkin‑style memos. When a memo already captures a test, only a code label is added to avoid duplication. This process yields a taxonomy of 17 distinct scenarios (e.g., valid token, expired token, malformed token, missing claims), 40 conditions (e.g., null parameters, wrong signature, altered header, secret‑key leakage), and 30 expected outcomes (e.g., authentication success, failure, exception types, security‑context state changes).
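To make the memo format concrete, the following is a minimal sketch of how one coded test could be recorded as a Gherkin-style scenario. The class `GherkinMemo`, its fields, and the example wording are hypothetical illustrations; they are not taken from the paper's catalog or tooling.

```java
import java.util.List;

/** Hypothetical container for one open-coding memo; not the authors' tooling. */
final class GherkinMemo {
    final String scenario;
    final List<String> given; // context and conditions
    final String when;        // action under test
    final List<String> then;  // expected outcomes

    GherkinMemo(String scenario, List<String> given, String when, List<String> then) {
        this.scenario = scenario;
        this.given = given;
        this.when = when;
        this.then = then;
    }

    /** Renders the memo as a Gherkin-style scenario, using "And" for extra steps. */
    String render() {
        StringBuilder sb = new StringBuilder("Scenario: ").append(scenario).append('\n');
        appendSteps(sb, "Given", given);
        sb.append("  When ").append(when).append('\n');
        appendSteps(sb, "Then", then);
        return sb.toString();
    }

    private static void appendSteps(StringBuilder sb, String keyword, List<String> steps) {
        for (int i = 0; i < steps.size(); i++) {
            sb.append("  ").append(i == 0 ? keyword : "And").append(' ')
              .append(steps.get(i)).append('\n');
        }
    }

    public static void main(String[] args) {
        // Illustrative wording only; the catalog's actual phrasing may differ.
        GherkinMemo memo = new GherkinMemo(
            "Token validation",
            List.of("a token signed with the server key", "the token's expiry time has passed"),
            "the token is validated",
            List.of("validation fails", "no authentication is stored in the security context"));
        System.out.print(memo.render());
    }
}
```

Keeping the condition ("expiry time has passed") separate from the scenario and the outcome mirrors the three-part taxonomy described above, so the same scenario can be paired with other conditions without rewriting the memo.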

By systematically combining these elements, the authors derive 53 unique test cases that represent realistic, developer-focused unit-test patterns for token authentication. Each case is presented as a flow diagram and a Gherkin scenario, making it immediately reusable. The paper also catalogs common “test smells” observed in the corpus (such as hard-coded secret keys, excessive mocking of security contexts, duplicated setup code, missing assertions on authentication state, and ignoring exception hierarchies) and provides recommendations for avoiding them.
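As a rough illustration of what such test cases look like in practice, the sketch below exercises four scenario and condition pairs against a toy HMAC-signed token. Everything here is an assumption made for the example: `TokenAuthSketch`, its `Outcome` enum, and the token layout are hypothetical stand-ins for the code under test, not Spring Security's API and not a test from the paper's catalog. It uses only the JDK so it runs standalone; in a real project each check would be a JUnit test method against the actual authentication implementation.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.MessageDigest;
import java.security.SecureRandom;
import java.time.Instant;
import java.util.Base64;
import javax.crypto.Mac;
import javax.crypto.spec.SecretKeySpec;

/** Hypothetical stand-in for the code under test; not Spring Security's API. */
class TokenAuthSketch {
    enum Outcome { AUTHENTICATED, EXPIRED, MALFORMED, BAD_SIGNATURE }

    private final byte[] key;

    TokenAuthSketch(byte[] key) { this.key = key.clone(); }

    /** Issues "base64url(subject:expiryEpochSeconds).base64url(hmac)". */
    String issue(String subject, Instant expiresAt) {
        String claims = subject + ":" + expiresAt.getEpochSecond();
        String payload = encode(claims.getBytes(StandardCharsets.UTF_8));
        return payload + "." + encode(sign(payload));
    }

    Outcome validate(String token, Instant now) {
        if (token == null) return Outcome.MALFORMED;
        String[] parts = token.split("\\.");
        if (parts.length != 2) return Outcome.MALFORMED;
        String claims;
        byte[] presented;
        try {
            claims = new String(Base64.getUrlDecoder().decode(parts[0]), StandardCharsets.UTF_8);
            presented = Base64.getUrlDecoder().decode(parts[1]);
        } catch (IllegalArgumentException e) {
            return Outcome.MALFORMED;
        }
        // Verify the signature (constant-time compare) before reading the expiry claim.
        if (!MessageDigest.isEqual(sign(parts[0]), presented)) return Outcome.BAD_SIGNATURE;
        int sep = claims.lastIndexOf(':');
        if (sep < 0) return Outcome.MALFORMED;
        long expiry;
        try {
            expiry = Long.parseLong(claims.substring(sep + 1));
        } catch (NumberFormatException e) {
            return Outcome.MALFORMED;
        }
        return now.getEpochSecond() < expiry ? Outcome.AUTHENTICATED : Outcome.EXPIRED;
    }

    private byte[] sign(String payload) {
        try {
            Mac mac = Mac.getInstance("HmacSHA256");
            mac.init(new SecretKeySpec(key, "HmacSHA256"));
            return mac.doFinal(payload.getBytes(StandardCharsets.UTF_8));
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    private static String encode(byte[] bytes) {
        return Base64.getUrlEncoder().withoutPadding().encodeToString(bytes);
    }

    private static byte[] randomKey() {
        byte[] k = new byte[32];
        new SecureRandom().nextBytes(k);
        return k;
    }

    private static void check(boolean condition, String name) {
        if (!condition) throw new AssertionError("failed: " + name);
    }

    public static void main(String[] args) {
        // Given: a key generated per run, rather than a secret hard-coded in the test source.
        TokenAuthSketch auth = new TokenAuthSketch(randomKey());
        Instant now = Instant.parse("2020-01-01T00:00:00Z"); // fixed clock keeps the test deterministic

        // Given a valid token, When it is validated, Then authentication succeeds.
        check(auth.validate(auth.issue("alice", now.plusSeconds(60)), now)
                == Outcome.AUTHENTICATED, "valid token");
        // Given an expired token, When it is validated, Then it is rejected as expired.
        check(auth.validate(auth.issue("alice", now.minusSeconds(1)), now)
                == Outcome.EXPIRED, "expired token");
        // Given a malformed token, When it is validated, Then it is rejected as malformed.
        check(auth.validate("not-a-token", now) == Outcome.MALFORMED, "malformed token");
        // Given a token signed with a different key, Then the signature check fails.
        TokenAuthSketch other = new TokenAuthSketch(randomKey());
        check(auth.validate(other.issue("alice", now.plusSeconds(60)), now)
                == Outcome.BAD_SIGNATURE, "wrong signature");
        System.out.println("all scenarios passed");
    }
}
```

Each check asserts a specific outcome rather than merely that no exception was thrown, and the clock is passed in explicitly so the expiry scenario does not depend on wall-clock time. Both choices are aimed at the kinds of smells listed above.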

To assess practical relevance, the authors interview two developers who authored tests in the dataset and two additional practitioners. All participants confirm that the catalog is comprehensive, easy to adopt, and helps bring security considerations into early development stages. The feedback highlights that the guide encourages scenario‑driven test design, reduces reliance on ad‑hoc black‑box testing, and improves regression safety for authentication code.

Limitations include the exclusive focus on token authentication (excluding session management, OAuth2 flows, OpenID Connect, etc.) and the restriction to Java/Spring Security projects. The authors suggest future work to extend the methodology to other languages, frameworks, and broader authentication/authorization mechanisms, as well as to integrate the catalog with automated test‑generation tools.

In summary, the study delivers the first empirically grounded, developer‑friendly unit‑testing guide for token authentication. By providing a concrete set of 53 test cases, a taxonomy of scenarios, conditions, and outcomes, and a checklist of test smells, it equips developers and security engineers with actionable artifacts to embed security testing early in the software lifecycle, thereby strengthening the overall robustness of authentication implementations.
