A Multi-Turn Emotionally Engaging Dialog Model

Open-domain dialog systems (also known as chatbots) have drawn increasing attention in natural language processing. Some recent work incorporates affect information into sequence-to-sequence neural dialog models to make responses emotionally richer, while other work uses hand-crafted rules to determine the desired emotional response. However, these approaches do not explicitly learn the subtle emotional interactions captured in human dialogs. In this paper, we propose a multi-turn dialog system that learns to generate emotionally appropriate responses, a skill that so far only humans have mastered. Compared with two baseline models, offline experiments show that our method achieves the best perplexity scores. Further human evaluations confirm that our chatbot can keep track of the conversation context and generate more emotionally appropriate responses while performing equally well on grammar.

💡 Research Summary

The paper introduces MEED (Multi‑turn Emotionally Engaging Dialog model), a neural architecture designed to generate open‑domain responses that are both contextually coherent and emotionally appropriate across multiple conversational turns. The authors begin by motivating the need for affect‑aware chatbots, citing prior work that either requires an explicit emotion label as input or relies on handcrafted rules to steer the system toward a target affect. Both approaches are impractical for real‑world dialogue, where emotions emerge organically and evolve throughout the exchange.

In the related‑work section, the authors review three families of prior research: (1) standard sequence‑to‑sequence (seq2seq) and hierarchical models such as HRED and HRAN that capture semantic context but ignore affect; (2) affect‑augmented language models (e.g., Affect‑LM) that embed emotion at the word level using resources like LIWC or VAD; and (3) emotion‑conditioned dialog generators (e.g., the Emotional Chatting Machine, ECM) that require a predefined emotion category for each response. They argue that none of these methods jointly models the dynamic flow of emotions in multi‑turn interactions without external supervision.

MEED’s architecture consists of three main components: a hierarchical attention encoder, an emotion encoder, and a decoder that fuses both sources of information. The hierarchical encoder mirrors HRAN: a bidirectional GRU processes each utterance at the word level, producing hidden states h_{jk} for word k of utterance j. At each decoding step t, a Bahdanau‑style word‑level attention computes a weighted sum r_{tj} of these states for each utterance j. An utterance‑level unidirectional GRU then processes the sequence of r_{tj} from the most recent to the earliest turn, and a second level of attention over its hidden states yields the final semantic context vector c_t for the current decoding step.

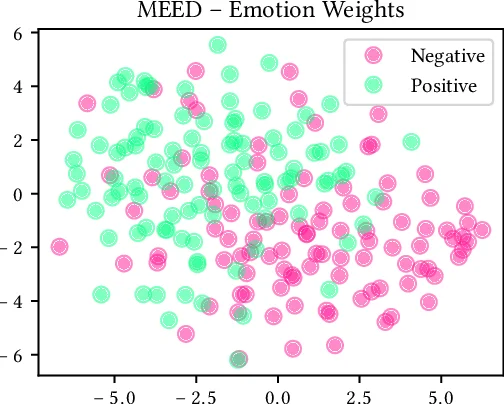
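The two‑level attention described above can be illustrated with a small pure‑Python sketch. It is not the authors' implementation: the GRU cells have no bias terms, weights are random, and we assume the attention query at both levels is only the previous decoder state s_{t-1} (the exact query used in HRAN/MEED may include more). The class and method names (GRUCell, AdditiveAttention, HierarchicalEncoder, word_states, context) are hypothetical.

```python
import math
import random

def matvec(W, x):
    """Matrix-vector product with plain Python lists."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def add(*vecs):
    """Element-wise sum of equal-length vectors."""
    return [sum(vals) for vals in zip(*vecs)]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [v / total for v in exps]

def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

class GRUCell:
    """Bias-free GRU cell: update gate z, reset gate r, candidate state n."""
    def __init__(self, in_dim, hid_dim, rng):
        self.W = {g: rand_matrix(hid_dim, in_dim, rng) for g in "zrn"}
        self.U = {g: rand_matrix(hid_dim, hid_dim, rng) for g in "zrn"}

    def __call__(self, x, h):
        z = [sigmoid(v) for v in add(matvec(self.W["z"], x), matvec(self.U["z"], h))]
        r = [sigmoid(v) for v in add(matvec(self.W["r"], x), matvec(self.U["r"], h))]
        rh = [ri * hi for ri, hi in zip(r, h)]
        n = [math.tanh(v) for v in add(matvec(self.W["n"], x), matvec(self.U["n"], rh))]
        return [(1 - zi) * ni + zi * hi for zi, ni, hi in zip(z, n, h)]

class AdditiveAttention:
    """Bahdanau-style attention: score_k = v . tanh(Wq q + Wk key_k)."""
    def __init__(self, query_dim, key_dim, att_dim, rng):
        self.Wq = rand_matrix(att_dim, query_dim, rng)
        self.Wk = rand_matrix(att_dim, key_dim, rng)
        self.v = [rng.uniform(-0.1, 0.1) for _ in range(att_dim)]

    def __call__(self, query, keys):
        q = matvec(self.Wq, query)
        scores = [sum(vi * math.tanh(a) for vi, a in zip(self.v, add(q, matvec(self.Wk, k))))
                  for k in keys]
        weights = softmax(scores)
        # Weighted sum of the keys (they double as values here).
        return [sum(w * k[i] for w, k in zip(weights, keys)) for i in range(len(keys[0]))]

class HierarchicalEncoder:
    """Word-level BiGRU + attention, then utterance-level GRU + attention."""
    def __init__(self, emb_dim, hid_dim, dec_dim, rng):
        self.fwd = GRUCell(emb_dim, hid_dim, rng)
        self.bwd = GRUCell(emb_dim, hid_dim, rng)
        self.word_att = AdditiveAttention(dec_dim, 2 * hid_dim, hid_dim, rng)
        self.utt_gru = GRUCell(2 * hid_dim, hid_dim, rng)
        self.utt_att = AdditiveAttention(dec_dim, hid_dim, hid_dim, rng)
        self.hid_dim = hid_dim

    def word_states(self, words):
        """h_{jk}: concatenated forward and backward GRU states for each word."""
        h, fwd = [0.0] * self.hid_dim, []
        for w in words:
            h = self.fwd(w, h)
            fwd.append(h)
        h, bwd = [0.0] * self.hid_dim, []
        for w in reversed(words):
            h = self.bwd(w, h)
            bwd.append(h)
        bwd.reverse()
        return [f + b for f, b in zip(fwd, bwd)]  # list concatenation

    def context(self, utterances, s_prev):
        """Semantic context c_t for one decoding step, given decoder state s_{t-1}."""
        r = [self.word_att(s_prev, self.word_states(u)) for u in utterances]  # r_{tj}
        h, utt_states = [0.0] * self.hid_dim, []
        for r_j in reversed(r):  # most recent turn first
            h = self.utt_gru(r_j, h)
            utt_states.append(h)
        return self.utt_att(s_prev, utt_states)

rng = random.Random(0)
encoder = HierarchicalEncoder(emb_dim=4, hid_dim=3, dec_dim=5, rng=rng)
# Three utterances of 3, 2 and 4 random "word embeddings" each.
dialog = [[[rng.uniform(-1, 1) for _ in range(4)] for _ in range(n)] for n in (3, 2, 4)]
c_t = encoder.context(dialog, s_prev=[0.0] * 5)
print(len(c_t))  # 3
```

Running the utterance‑level GRU over reversed(r) reproduces the "most recent to earliest" ordering; in a real system the decoder state passed as s_prev would change at every step, so c_t is recomputed per token.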

The emotion encoder operates independently of the semantic encoder. Each utterance x_j is passed through the LIWC2015 tool, which flags the presence of five emotion categories (positive, negative, anxious, angry, sad) plus a neutral flag, producing a binary 6‑dimensional vector 𝟙(x_j). This vector is projected into a continuous space via a dense layer with sigmoid activation, yielding a_j. A unidirectional GRU consumes the sequence of a_j in chronological order, and its final hidden state is taken as the emotion context vector e. This design lets the model learn how emotions evolve across turns directly from data, without hand‑crafted response rules or annotated emotion labels; the only affective prior it relies on is the LIWC lexicon.
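The emotion pipeline (lexicon flags, dense sigmoid projection, chronological GRU) can be sketched in a few lines. Because the LIWC2015 dictionary is licensed, the sketch substitutes a hypothetical five‑word TOY_LEXICON, and it assumes the neutral flag fires only when no other category does; both are illustrative assumptions, as are the names emotion_flags and EmotionEncoder.

```python
import math
import random

CATEGORIES = ["positive", "negative", "anxious", "angry", "sad", "neutral"]

# Hypothetical toy stand-in for the LIWC2015 dictionary (the real lexicon is licensed
# and far larger). Each word maps to the set of categories it triggers.
TOY_LEXICON = {
    "great": {"positive"},
    "love": {"positive"},
    "worried": {"negative", "anxious"},
    "furious": {"negative", "angry"},
    "crying": {"negative", "sad"},
}

def emotion_flags(utterance):
    """Binary 6-dim vector 1(x_j); 'neutral' is set only when no other flag fires (assumption)."""
    found = set()
    for token in utterance.lower().split():
        found |= TOY_LEXICON.get(token.strip(".,!?"), set())
    if not found:
        found = {"neutral"}
    return [1.0 if c in found else 0.0 for c in CATEGORIES]

def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def add(*vecs):
    return [sum(vals) for vals in zip(*vecs)]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

class GRUCell:
    """Bias-free GRU cell: update gate z, reset gate r, candidate state n."""
    def __init__(self, in_dim, hid_dim, rng):
        self.W = {g: rand_matrix(hid_dim, in_dim, rng) for g in "zrn"}
        self.U = {g: rand_matrix(hid_dim, hid_dim, rng) for g in "zrn"}

    def __call__(self, x, h):
        z = [sigmoid(v) for v in add(matvec(self.W["z"], x), matvec(self.U["z"], h))]
        r = [sigmoid(v) for v in add(matvec(self.W["r"], x), matvec(self.U["r"], h))]
        rh = [ri * hi for ri, hi in zip(r, h)]
        n = [math.tanh(v) for v in add(matvec(self.W["n"], x), matvec(self.U["n"], rh))]
        return [(1 - zi) * ni + zi * hi for zi, ni, hi in zip(z, n, h)]

class EmotionEncoder:
    """Dense sigmoid projection a_j of each flag vector, then a chronological GRU."""
    def __init__(self, emo_dim, hid_dim, rng):
        self.W_a = rand_matrix(emo_dim, len(CATEGORIES), rng)
        self.b_a = [0.0] * emo_dim
        self.gru = GRUCell(emo_dim, hid_dim, rng)
        self.hid_dim = hid_dim

    def __call__(self, utterances):
        e = [0.0] * self.hid_dim
        for x_j in utterances:  # earliest turn first
            a_j = [sigmoid(v) for v in add(matvec(self.W_a, emotion_flags(x_j)), self.b_a)]
            e = self.gru(a_j, e)
        return e  # final hidden state = emotion context vector e

dialog = ["I am so worried about the exam.", "Don't be, you will do great!", "Thanks, I love that."]
print(emotion_flags(dialog[0]))  # [0.0, 1.0, 1.0, 0.0, 0.0, 0.0]
e = EmotionEncoder(emo_dim=4, hid_dim=3, rng=random.Random(1))(dialog)
print(len(e))  # 3
```

Note that the binary vector can flag several categories at once (here negative and anxious), but it carries no information about intensity.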

During decoding, the probability of the next token y_t is conditioned on both c_t and e, i.e., p(y_t | y_{<t}, c_t, e). The decoder is a GRU‑based language model that concatenates the previous decoder hidden state, the semantic context, and the emotion context before computing the output distribution. Training uses the standard cross‑entropy loss on a large corpus of movie subtitles, chosen for its high‑quality, professionally written dialogues that are less likely to contain toxic or overly informal language. No additional affective loss terms are introduced; the model learns to align affect implicitly through the joint optimization of semantic and emotional signals.
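The conditioning p(y_t | y_{<t}, c_t, e) and the plain cross‑entropy objective can be illustrated with a single decoder step under teacher forcing. This is a hedged pure‑Python sketch, not the authors' code: we assume the GRU input is the concatenation [emb(y_{t-1}); c_t; e] with s_{t-1} as its recurrent state, and that a bias‑free linear layer plus softmax reads the new state s_t; the paper's exact wiring may differ. EmotionalDecoder, step and cross_entropy are hypothetical names, and c_t is held fixed across steps only to keep the example short.

```python
import math
import random

def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def add(*vecs):
    return [sum(vals) for vals in zip(*vecs)]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [v / total for v in exps]

def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

class GRUCell:
    """Bias-free GRU cell: update gate z, reset gate r, candidate state n."""
    def __init__(self, in_dim, hid_dim, rng):
        self.W = {g: rand_matrix(hid_dim, in_dim, rng) for g in "zrn"}
        self.U = {g: rand_matrix(hid_dim, hid_dim, rng) for g in "zrn"}

    def __call__(self, x, h):
        z = [sigmoid(v) for v in add(matvec(self.W["z"], x), matvec(self.U["z"], h))]
        r = [sigmoid(v) for v in add(matvec(self.W["r"], x), matvec(self.U["r"], h))]
        rh = [ri * hi for ri, hi in zip(r, h)]
        n = [math.tanh(v) for v in add(matvec(self.W["n"], x), matvec(self.U["n"], rh))]
        return [(1 - zi) * ni + zi * hi for zi, ni, hi in zip(z, n, h)]

class EmotionalDecoder:
    """One GRU decoding step conditioned on semantic context c_t and emotion context e."""
    def __init__(self, vocab_size, emb_dim, ctx_dim, emo_dim, hid_dim, rng):
        self.emb = rand_matrix(vocab_size, emb_dim, rng)  # token embeddings
        self.gru = GRUCell(emb_dim + ctx_dim + emo_dim, hid_dim, rng)
        self.W_o = rand_matrix(vocab_size, hid_dim, rng)  # output projection

    def step(self, y_prev, s_prev, c_t, e):
        x = self.emb[y_prev] + c_t + e  # list concatenation [emb(y_{t-1}); c_t; e]
        s_t = self.gru(x, s_prev)
        return s_t, softmax(matvec(self.W_o, s_t))  # p(y_t | y_<t, c_t, e)

def cross_entropy(probs, target):
    """Negative log-probability of the reference token."""
    return -math.log(probs[target])

rng = random.Random(2)
decoder = EmotionalDecoder(vocab_size=6, emb_dim=3, ctx_dim=3, emo_dim=2, hid_dim=4, rng=rng)
c_t, e = [0.1, -0.2, 0.3], [0.5, 0.5]  # held fixed here; MEED recomputes c_t every step
target = [0, 3, 1, 5]  # token ids; id 0 plays a hypothetical <bos> role
s, loss = [0.0] * 4, 0.0
for y_prev, y_t in zip(target[:-1], target[1:]):  # teacher forcing
    s, probs = decoder.step(y_prev, s, c_t, e)
    loss += cross_entropy(probs, y_t)
avg_loss = loss / (len(target) - 1)
print(avg_loss)
```

With small random weights the output distribution stays close to uniform, so the average loss lands near log 6 ≈ 1.79 for this six‑token vocabulary; training would push it down. No affective loss term appears, matching the description above.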

Experimental evaluation compares MEED against two baselines: (a) a vanilla seq2seq model trained on the same data, and (b) HRAN, which captures hierarchical context but lacks affect modeling. Perplexity results show MEED achieving the lowest score (≈28.4), indicating better predictive power. Human evaluation involves 200 multi‑turn dialogue snippets rated on three dimensions: emotional appropriateness, grammatical fluency, and contextual coherence. MEED consistently outperforms both baselines on emotional appropriateness (average 4.3/5 vs. 3.5 for baselines) while matching or slightly exceeding them on fluency and coherence. The authors also conduct an ablation study removing the emotion encoder, which leads to a noticeable drop in emotional appropriateness, confirming the encoder’s contribution.
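Perplexity is the exponential of the average per‑token negative log‑likelihood that a model assigns to held‑out reference responses, so lower is better. A score of about 28.4 means the model is, on average, as uncertain as a uniform choice among roughly 28 tokens. The sketch below assumes the common convention of pooling all reference tokens into one average; the paper's exact averaging is not stated here, and the function name is ours.

```python
import math

def perplexity(token_probs):
    """exp of the mean negative log-likelihood of the reference tokens."""
    nll = [-math.log(p) for p in token_probs]
    return math.exp(sum(nll) / len(nll))

# Probabilities a model assigned to each reference token of a held-out response.
print(perplexity([0.5, 0.25, 0.125]))  # ≈ 4.0, since the mean NLL is 2 ln 2
# A model uniformly unsure among 28.4 choices at every token scores ≈ 28.4.
print(perplexity([1 / 28.4] * 10))
```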

The paper acknowledges several limitations. LIWC’s categorical scheme is coarse: it only records whether each category is present, so it cannot express emotional intensity or finer‑grained nuances (e.g., how strongly sadness and anger mix in a single utterance). Encoding the entire emotion history into a single vector e may cause information loss in very long conversations. Moreover, the model relies on the quality of the LIWC lexicon, which may not cover slang or emerging affective expressions common in social media. For future work, the authors suggest exploring continuous affect spaces such as VAD (valence, arousal, dominance), incorporating emotion‑specific attention mechanisms, and testing the model on more diverse datasets (e.g., Reddit, Twitter) with safety filters to avoid toxic outputs.

In conclusion, MEED represents a significant step toward data‑driven, affect‑aware dialog generation. By jointly learning hierarchical semantic context and a learned emotion flow without requiring external emotion labels, it demonstrates that neural chatbots can produce responses that are not only fluent but also emotionally resonant, moving closer to the natural dynamics of human‑human conversation.
