Feature Graph Learning for 3D Point Cloud Denoising

Identifying an appropriate underlying graph kernel that reflects pairwise similarities is critical in many recent graph spectral signal restoration schemes, including image denoising, dequantization, and contrast enhancement. Existing graph learning algorithms compute the most likely entries of a properly defined graph Laplacian matrix $\mathbf{L}$, but require a large number of signal observations $\mathbf{z}$’s for a stable estimate. In this work, we assume instead the availability of a relevant feature vector $\mathbf{f}_i$ per node $i$, from which we compute an optimal feature graph via optimization of a feature metric. Specifically, we alternately optimize the diagonal and off-diagonal entries of a Mahalanobis distance matrix $\mathbf{M}$ by minimizing the graph Laplacian regularizer (GLR) $\mathbf{z}^{\top} \mathbf{L} \mathbf{z}$, where the edge weight is $w_{i,j} = \exp\{-(\mathbf{f}_i - \mathbf{f}_j)^{\top} \mathbf{M} (\mathbf{f}_i - \mathbf{f}_j)\}$, given a single observation $\mathbf{z}$. We optimize diagonal entries via proximal gradient (PG), where we constrain $\mathbf{M}$ to be positive definite (PD) via linear inequalities derived from the Gershgorin circle theorem. To optimize off-diagonal entries, we design a block descent algorithm that iteratively optimizes one row and column of $\mathbf{M}$. To keep $\mathbf{M}$ PD, we constrain the Schur complement of sub-matrix $\mathbf{M}_{2,2}$ of $\mathbf{M}$ to be PD when optimizing via PG. Our algorithm avoids full eigen-decomposition of $\mathbf{M}$, thus ensuring fast computation even when the feature vector $\mathbf{f}_i$ has high dimension. To validate its usefulness, we apply our feature graph learning algorithm to the problem of 3D point cloud denoising, resulting in state-of-the-art performance compared to competing schemes in extensive experiments.

💡 Research Summary

The paper tackles the problem of learning an appropriate graph structure when only a single observation of a graph signal is available, a situation common in many image and 3‑D data restoration tasks. Traditional statistical graph‑learning methods require many independent signal samples to estimate a precision (inverse covariance) matrix, while existing spectral‑based approaches still rely on multiple observations to enforce low‑frequency smoothness. To overcome this limitation, the authors assume that each node i is equipped with a feature vector f_i ∈ ℝ^K (e.g., color intensities, 3‑D coordinates, surface normals). They define edge weights as an exponential of a Mahalanobis distance: w_{ij}=exp{−(f_i−f_j)^T M (f_i−f_j)}, where M ∈ ℝ^{K×K} is a positive‑definite metric matrix to be learned.
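To make the weight definition concrete, here is a minimal NumPy sketch that builds the dense weight matrix W(M) from a feature matrix F (one feature vector f_i per row) and a metric M. It assumes a small N, since it forms all N×N pairwise differences explicitly; the function name `feature_edge_weights` is hypothetical and not taken from the paper.

```python
import numpy as np

def feature_edge_weights(F: np.ndarray, M: np.ndarray) -> np.ndarray:
    """Dense weights w_ij = exp(-(f_i - f_j)^T M (f_i - f_j)), no self-loops.

    F: (N, K) array, one feature vector f_i per row.
    M: (K, K) symmetric positive-definite metric matrix.
    """
    diff = F[:, None, :] - F[None, :, :]                 # (N, N, K) pairwise f_i - f_j
    dist = np.einsum("ijk,kl,ijl->ij", diff, M, diff)    # Mahalanobis distances
    W = np.exp(-dist)
    np.fill_diagonal(W, 0.0)                             # drop self-loops
    return W

rng = np.random.default_rng(0)
F = rng.normal(size=(5, 3))            # 5 nodes, 3-dimensional features
M = np.diag([1.0, 0.5, 2.0])           # an example PD metric
W = feature_edge_weights(F, M)
```

Because M is PD, every distance is nonnegative, so each weight lies in [0, 1] and shrinks as features move apart along the directions M emphasizes.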

The learning objective is to minimize the Graph Laplacian Regularizer (GLR) z^T L(M) z for the single observed signal z ∈ ℝ^N, where L(M) = D(M) − W(M) is the combinatorial Laplacian built from the feature‑based weight matrix W(M) and its diagonal degree matrix D(M). Because K is typically far smaller than N, the number of unknowns (the K(K+1)/2 distinct entries of the symmetric matrix M) is modest, enabling stable estimation from a single signal.
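The GLR has two equivalent forms: the quadratic form z^T L z with L = D − W, and the edge sum Σ_{i<j} w_ij (z_i − z_j)^2. The second shows why minimizing it favors small weights between nodes whose signal values differ. The sketch below checks this numerically; the helper names are hypothetical, and W is an arbitrary symmetric nonnegative matrix rather than one learned from features.

```python
import numpy as np

def combinatorial_laplacian(W):
    """L = D - W, with D the diagonal degree matrix."""
    return np.diag(W.sum(axis=1)) - W

def glr(z, W):
    """Graph Laplacian regularizer as the quadratic form z^T L z."""
    return float(z @ combinatorial_laplacian(W) @ z)

def glr_edgewise(z, W):
    """Same quantity as sum over pairs i<j of w_ij (z_i - z_j)^2."""
    diff2 = (z[:, None] - z[None, :]) ** 2
    return float(0.5 * np.sum(W * diff2))   # full double sum counts each pair twice

rng = np.random.default_rng(1)
W = np.triu(rng.random((6, 6)), 1)
W = W + W.T                                  # symmetric, nonnegative, zero diagonal
z = rng.normal(size=6)
print(glr(z, W), glr_edgewise(z, W))         # the two forms agree up to rounding
```

A constant signal gives a GLR of zero for any W, which is why the regularizer is read as a smoothness prior.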

Optimization proceeds by alternating updates of the diagonal and off‑diagonal entries of M. For the diagonal part, the authors derive linear inequality constraints from the Gershgorin Circle Theorem that guarantee M remains positive‑definite (PD). They then apply a Proximal Gradient (PG) descent, projecting each gradient step onto the feasible set defined by those inequalities.
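The diagonal update can be sketched as follows. For a symmetric M, every eigenvalue lies in a Gershgorin disc centered at some m_kk with radius r_k = Σ_{l≠k}|m_kl|. Enforcing m_kk ≥ r_k + ε for every k therefore gives λ_min(M) ≥ ε > 0. These bounds depend only on the off‑diagonal entries, which are held fixed during this step, so they act as fixed linear constraints. Several pieces are assumptions of this sketch rather than details from the summary. The trace budget tr(M) ≤ C is added here because, without it, scaling M up drives every weight, and hence the GLR, toward zero. The step size, ε, and the bisection‑based projection are illustrative choices. All function names are hypothetical.

```python
import numpy as np

def gershgorin_lower_bounds(M, eps=1e-3):
    """Per-row lower bounds m_kk >= sum_{l != k} |m_kl| + eps (Gershgorin)."""
    return np.abs(M).sum(axis=1) - np.abs(np.diag(M)) + eps

def project_diag(v, lb, C, iters=100):
    """Euclidean projection of v onto {x : x >= lb, sum(x) <= C}; needs sum(lb) <= C."""
    x = np.maximum(v, lb)
    if x.sum() <= C:
        return x
    # Trace budget is active: find tau > 0 with sum(max(v - tau, lb)) == C
    # by bisection (the sum is non-increasing in tau).
    lo, hi = 0.0, float(np.max(v - lb))
    for _ in range(iters):
        tau = 0.5 * (lo + hi)
        if np.maximum(v - tau, lb).sum() > C:
            lo = tau
        else:
            hi = tau
    return np.maximum(v - hi, lb)          # hi always keeps the trace within budget

def glr_and_diag_grad(F, z, M):
    """GLR = sum_{i<j} w_ij (z_i - z_j)^2 and its gradient w.r.t. diag(M)."""
    diff = F[:, None, :] - F[None, :, :]                 # (N, N, K)
    W = np.exp(-np.einsum("ijk,kl,ijl->ij", diff, M, diff))
    np.fill_diagonal(W, 0.0)
    dz2 = (z[:, None] - z[None, :]) ** 2
    value = 0.5 * np.sum(W * dz2)                        # each pair counted twice
    # d w_ij / d m_kk = -(f_ik - f_jk)^2 * w_ij
    grad = -0.5 * np.einsum("ij,ijk->k", W * dz2, diff ** 2)
    return value, grad

def pg_diag_step(F, z, M, C, step=0.1, eps=1e-3):
    """One projected-gradient step on diag(M); off-diagonal entries stay fixed."""
    value, grad = glr_and_diag_grad(F, z, M)
    lb = gershgorin_lower_bounds(M, eps)   # depends only on the fixed off-diagonals
    M_new = M.copy()
    np.fill_diagonal(M_new, project_diag(np.diag(M) - step * grad, lb, C))
    return M_new, value

rng = np.random.default_rng(2)
F = rng.normal(size=(20, 3))
z = F[:, 0] + 0.1 * rng.normal(size=20)   # signal that varies mostly with feature 0
M = np.eye(3) + 0.1 * (np.ones((3, 3)) - np.eye(3))
for _ in range(50):
    M, val = pg_diag_step(F, z, M, C=6.0)
```

Because the projection is onto a box intersected with a half‑space, it never needs an eigen‑decomposition. Positive definiteness follows from the Gershgorin bounds alone.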

For the off‑diagonal entries, a block‑descent scheme is introduced: one row and the corresponding column of M are optimized jointly while keeping the rest fixed. To preserve PD, the Schur complement of the sub‑matrix M_{2,2} is constrained to be PD, using the Haynsworth inertia additivity theorem. The PD constraint is relaxed via a vector‑norm bound, which avoids explicit matrix inverses and eigen‑decompositions, dramatically reducing computational cost.
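The Schur‑complement condition can be illustrated by partitioning M around the row/column k being updated into a scalar m11, a vector m12, and the remaining sub‑matrix M22. Then M is PD if and only if M22 is PD and m11 − m12^T M22^{-1} m12 > 0. The inverse‑free test below is one simple sufficient condition, constructed only for illustration and not necessarily the vector‑norm bound the paper uses. It combines the Rayleigh‑quotient bound m12^T M22^{-1} m12 ≤ ‖m12‖² / λ_min(M22) with a Gershgorin lower bound g on λ_min(M22), so ‖m12‖² < m11·g with g > 0 guarantees PD. Helper names are hypothetical.

```python
import numpy as np

def split_row(M, k):
    """Partition M around row/column k into scalar m11, vector m12, sub-matrix M22."""
    idx = [i for i in range(M.shape[0]) if i != k]
    return M[k, k], M[idx, k], M[np.ix_(idx, idx)]

def schur_pd_exact(M, k):
    """Exact test: M is PD iff M22 is PD and m11 - m12^T M22^{-1} m12 > 0."""
    m11, m12, M22 = split_row(M, k)
    if np.linalg.eigvalsh(M22).min() <= 0:
        return False
    return m11 - m12 @ np.linalg.solve(M22, m12) > 0

def schur_pd_sufficient(M, k):
    """Inverse- and eigen-free sufficient test (illustrative bound, not the paper's)."""
    m11, m12, M22 = split_row(M, k)
    radii = np.abs(M22).sum(axis=1) - np.abs(np.diag(M22))
    g = np.min(np.diag(M22) - radii)   # Gershgorin lower bound on lambda_min(M22)
    return g > 0 and m12 @ m12 < m11 * g

M1 = np.array([[2.0, 0.5, 0.0], [0.5, 2.0, 0.5], [0.0, 0.5, 2.0]])
print(schur_pd_exact(M1, 0), schur_pd_sufficient(M1, 0))
```

The sufficient test is conservative: it may reject some PD matrices, but whenever it accepts, M is guaranteed PD. That is the property a constraint in a block update needs.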

The resulting algorithm learns M efficiently even when the feature dimension K is large, because it never requires a full eigen‑decomposition of M.

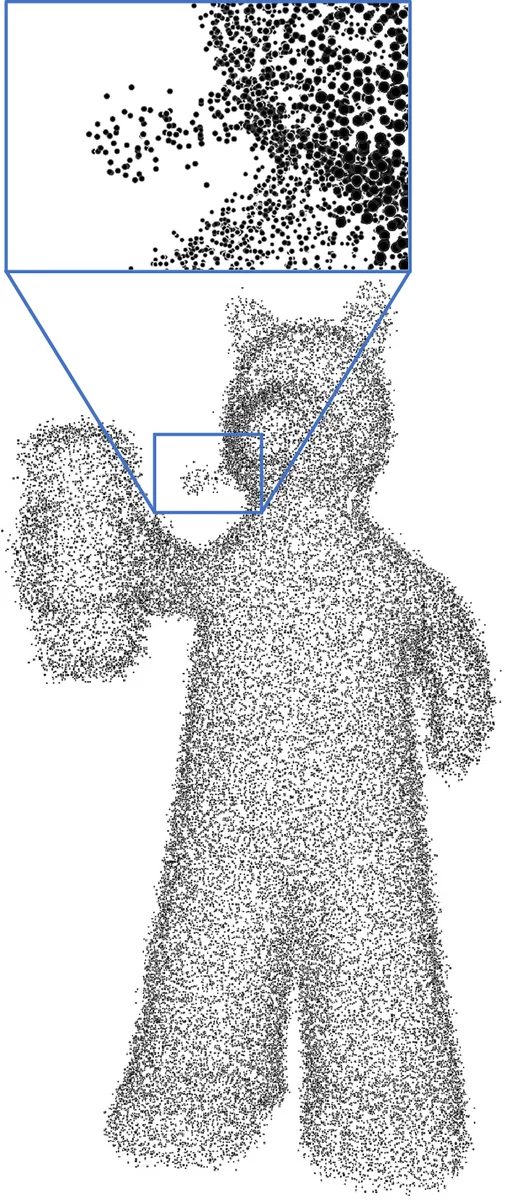

The learned feature‑based graph is then applied to 3‑D point‑cloud denoising. The authors first segment the cloud into locally self‑similar patches, using both point coordinates and surface normals as features. An initial k‑nearest‑neighbor graph is constructed for each patch, after which the proposed M‑learning procedure refines the edge weights. Assuming an intrinsic Gaussian Markov Random Field (IGMRF) prior on the point cloud, they formulate a MAP estimation problem where the GLR serves as the prior term. The point positions and the underlying graph are alternately updated until convergence.
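Below is a heavily simplified sketch of the alternating denoising loop. It assumes i.i.d. Gaussian noise, so the MAP step with a GLR prior, min_X ‖Y − X‖_F² + μ·tr(X^T L X), reduces to the linear system (I + μL)X = Y for the three coordinate columns. It departs from the described method in several ways: it does no patch segmentation, uses only the current coordinates as features (no normals), and keeps M fixed instead of relearning it each round. The values of μ and k and the function names are hypothetical.

```python
import numpy as np

def knn_feature_graph(F, M, k=6):
    """Symmetric kNN graph with weights exp(-(f_i - f_j)^T M (f_i - f_j))."""
    N = F.shape[0]
    diff = F[:, None, :] - F[None, :, :]
    dist = np.einsum("ijk,kl,ijl->ij", diff, M, diff)
    np.fill_diagonal(dist, np.inf)                 # never pick a point as its own neighbor
    nbrs = np.argsort(dist, axis=1)[:, :k]         # k nearest under the metric M
    rows = np.repeat(np.arange(N), k)
    cols = nbrs.ravel()
    W = np.zeros((N, N))
    W[rows, cols] = np.exp(-dist[rows, cols])
    return np.maximum(W, W.T)                      # symmetrize the kNN relation

def glr_denoise(Y, M, mu=0.5, k=6, iters=3):
    """Alternate graph construction and the MAP step (I + mu L) X = Y."""
    X = Y.copy()
    for _ in range(iters):
        W = knn_feature_graph(X, M, k)             # graph from the current estimate
        L = np.diag(W.sum(axis=1)) - W
        X = np.linalg.solve(np.eye(Y.shape[0]) + mu * L, Y)
    return X

rng = np.random.default_rng(4)
clean = np.c_[rng.uniform(-1, 1, size=(200, 2)), np.zeros(200)]  # points on plane z = 0
Y = clean + 0.05 * rng.normal(size=clean.shape)
X = glr_denoise(Y, M=np.eye(3) * 50.0)
print(np.std(Y[:, 2]), np.std(X[:, 2]))   # spread off the plane should shrink
```

Since L is symmetric PSD with the constant vector in its null space, (I + μL)^{-1} preserves each coordinate's mean and can only shrink deviations from it. The MAP step is therefore a graph low‑pass filter.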

Extensive experiments on synthetic and real point‑cloud datasets (including ModelNet and Stanford Bunny) demonstrate that the proposed method outperforms state‑of‑the‑art techniques from the moving least squares (MLS), locally optimal projection (LOP), non‑local, and previous graph‑based families. Quantitative metrics such as PSNR and Chamfer distance, together with visual inspection, show superior preservation of sharp features and high‑frequency details, especially under strong noise. Moreover, the computational time is significantly lower than that of competing high‑dimensional graph‑learning approaches because full eigen‑decompositions are avoided.

In summary, the paper makes three key contributions: (1) a novel feature‑graph learning framework that works with a single signal observation by minimizing the GLR with respect to a Mahalanobis metric; (2) an efficient alternating optimization, with proximal gradient for the diagonal entries and block descent for the off‑diagonal entries, that guarantees positive‑definiteness via Gershgorin‑derived linear constraints and Schur‑complement constraints while sidestepping costly matrix inverses; (3) a successful application of the learned graph to 3‑D point‑cloud denoising, achieving state‑of‑the‑art performance. Future directions include extending the method to directed graphs, multi‑feature fusion, and other 3‑D processing tasks such as reconstruction and compression.
