Functional differentiations in evolutionary reservoir computing networks

We propose an extended reservoir computer that exhibits functional differentiation of neurons. The reservoir computer is designed so that its internal reservoir can change under evolutionary dynamics, and we call it an evolutionary reservoir computer. For neuronal units to develop specificity to input information, the internal dynamics should be controlled so that contracting dynamics follow expanding dynamics. Expanding dynamics magnify differences in the input information, while contracting dynamics group the input information into clusters, thereby producing multiple attractors. The simultaneous appearance of both dynamics indicates the existence of chaos. In contrast, the sequential appearance of these dynamics over finite time intervals may induce functional differentiation. In this paper, we show how specialized neuronal units emerge in the evolutionary reservoir computer.

💡 Research Summary

The paper introduces an “Evolutionary Reservoir Computer” (ERC), an extension of conventional reservoir computing (RC) that incorporates evolutionary dynamics to adapt the internal recurrent network structure. Traditional RCs rely on a fixed, randomly connected reservoir whose internal weights remain unchanged during training; only the read‑out weights are learned, typically via linear regression. While this architecture offers fast training, it often fails on tasks that require complex temporal processing or the separation of multiple sensory modalities.
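As a point of reference for the conventional setup described above, the following is a minimal sketch of read-out training when the reservoir is fixed. It assumes ridge regression as the linear regression variant; the function names, the `ridge` parameter, and the appended bias column are illustrative choices, not details taken from the paper.

```python
import numpy as np

def train_readout(states, targets, ridge=1e-6):
    """Fit linear read-out weights by ridge regression (assumed variant).

    states:  (T, N) reservoir states collected over T time steps
    targets: (T, M) desired read-out values
    Returns a (N + 1, M) weight matrix; the last row is the bias.
    """
    X = np.hstack([states, np.ones((states.shape[0], 1))])  # append bias column
    A = X.T @ X + ridge * np.eye(X.shape[1])                # regularized Gram matrix
    return np.linalg.solve(A, X.T @ targets)

def predict(states, w_out):
    """Apply trained read-out weights to reservoir states."""
    X = np.hstack([states, np.ones((states.shape[0], 1))])
    return X @ w_out
```

Only `w_out` is learned here; the reservoir that produced `states` is left untouched, which is the restriction the ERC relaxes.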

To overcome these limitations, the authors embed a genetic algorithm (GA) into the reservoir design. The GA evolves both the recurrent weight matrix and each neuron’s decay constant (α_i) across generations. The evolutionary operators are mutation (random rewiring of 4 % of connections, addition of Gaussian noise to a subset of weights, and perturbation of α_i) and crossover (half‑and‑half merging of two parent networks). Fitness is based on the summed mean‑square error of the spatial and temporal read‑out units over a test interval, so that lower error means higher fitness and selection favors networks that accurately reproduce the target outputs for both modalities.
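The operators described above might be sketched as follows. Only the 4 % rewiring fraction, the Gaussian weight noise, the α_i perturbation, the half-and-half crossover, and the summed mean-square error come from the summary; the noise scales, the fraction of weights perturbed, the clipping range for α_i, and the choice to split parents by neuron index are assumptions made for illustration.

```python
import numpy as np

def mutate(w, alpha, rng, rewire_frac=0.04, noise_frac=0.1,
           noise_std=0.05, alpha_std=0.02):
    """Return mutated copies of the weight matrix and decay constants."""
    w, alpha = w.copy(), alpha.copy()
    # 1) Rewire a fraction of existing connections to currently empty slots.
    nz = np.argwhere(w != 0)
    n_rewire = int(round(rewire_frac * len(nz)))
    if n_rewire > 0:
        for idx in rng.choice(len(nz), size=n_rewire, replace=False):
            i, j = nz[idx]
            zeros = np.argwhere(w == 0)
            if len(zeros) == 0:
                break
            p, q = zeros[rng.integers(len(zeros))]
            w[p, q], w[i, j] = w[i, j], 0.0
    # 2) Add Gaussian noise to a random subset of the non-zero weights.
    nz = np.argwhere(w != 0)
    pick = rng.random(len(nz)) < noise_frac
    rows, cols = nz[pick].T
    w[rows, cols] += rng.normal(0.0, noise_std, size=int(pick.sum()))
    # 3) Perturb the decay constants (clipping range is an assumption).
    alpha = np.clip(alpha + rng.normal(0.0, alpha_std, size=alpha.shape),
                    0.01, 1.0)
    return w, alpha

def crossover(w_a, alpha_a, w_b, alpha_b):
    """Half-and-half merge: first half of the neurons from A, rest from B."""
    half = w_a.shape[0] // 2
    w = np.vstack([w_a[:half], w_b[half:]])
    alpha = np.concatenate([alpha_a[:half], alpha_b[half:]])
    return w, alpha

def summed_mse(spatial_out, spatial_target, temporal_out, temporal_target):
    """Summed mean-square error of both read-out groups (lower is better)."""
    return float(np.mean((spatial_out - spatial_target) ** 2)
                 + np.mean((temporal_out - temporal_target) ** 2))
```

In a full GA loop, each candidate would be simulated, its read-out trained, and `summed_mse` evaluated on a test interval before selection; that loop is omitted here because the summary does not specify the selection scheme or population size.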

The ERC model consists of N neurons updated by a discrete‑time equation:

x_i(t+1) = (1‑α_i)x_i(t) + α_i tanh(∑_j w_ij x_j(t) + w_i0 + ∑_k w_in,ik I_k(t)) + ξ_i(t).
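A minimal sketch of one update step of this equation, written in vector form. The argument names (`w_bias` for w_i0, `w_in` for the input weights) and the optional noise arguments are naming assumptions for illustration; the arithmetic follows the equation term by term.

```python
import numpy as np

def step(x, inp, w, w_bias, w_in, alpha, rng=None, noise_std=0.0):
    """One leaky-tanh update of all N neurons.

    x:      (N,) current states x_i(t)
    inp:    (K,) external inputs I_k(t)
    w:      (N, N) recurrent weights w_ij
    w_bias: (N,) bias terms w_i0
    w_in:   (N, K) input weights
    alpha:  (N,) per-neuron decay constants
    """
    pre = w @ x + w_bias + w_in @ inp
    x_new = (1.0 - alpha) * x + alpha * np.tanh(pre)
    if rng is not None and noise_std > 0.0:
        # xi_i(t): additive noise; its distribution is assumed Gaussian here
        x_new = x_new + rng.normal(0.0, noise_std, size=x.shape)
    return x_new
```

With α_i close to 0 a neuron changes slowly and retains its past state, while α_i = 1 removes the leak term entirely, which is why evolving α_i gives the GA control over each neuron's time scale.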

Only 10 % of possible recurrent connections are non‑zero, yielding a sparse reservoir. Importantly, the internal network is split into an “input layer” (receiving external signals but not directly feeding the read‑out) and an “output layer” (connected to the read‑out units). This two‑stage architecture enables a sequential expansion‑contraction dynamical regime: an initial expanding phase amplifies differences among input patterns, while a subsequent contracting phase clusters similar inputs, forming multiple attractors. When these phases occur sequentially rather than simultaneously, the system can develop functional specialization of individual neurons.
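The initial connectivity described above could be set up roughly as follows. The 10 % density and the input/output split come from the summary; the equal split of neurons between the two layers, the standard normal weight distribution, and the function name are assumptions for illustration.

```python
import numpy as np

def build_reservoir(n, n_inputs, rng, density=0.10, n_input_layer=None):
    """Create a sparse recurrent matrix with an input/output layer split.

    Returns (w, w_in, readout_idx):
      w           (n, n) recurrent weights with `density` of entries non-zero
      w_in        (n, n_inputs) input weights, non-zero only for input-layer rows
      readout_idx indices of output-layer neurons visible to the read-out
    """
    if n_input_layer is None:
        n_input_layer = n // 2          # assumed: split the neurons evenly
    n_conn = int(round(density * n * n))
    flat = rng.choice(n * n, size=n_conn, replace=False)
    w = np.zeros(n * n)
    w[flat] = rng.normal(0.0, 1.0, size=n_conn)
    w = w.reshape(n, n)
    w_in = np.zeros((n, n_inputs))
    w_in[:n_input_layer] = rng.normal(0.0, 1.0, size=(n_input_layer, n_inputs))
    readout_idx = np.arange(n_input_layer, n)
    return w, w_in, readout_idx
```

Because the read-out sees only `readout_idx`, input information has to pass through recurrent connections before it can be decoded, which leaves room for an expanding stage followed by a contracting one.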

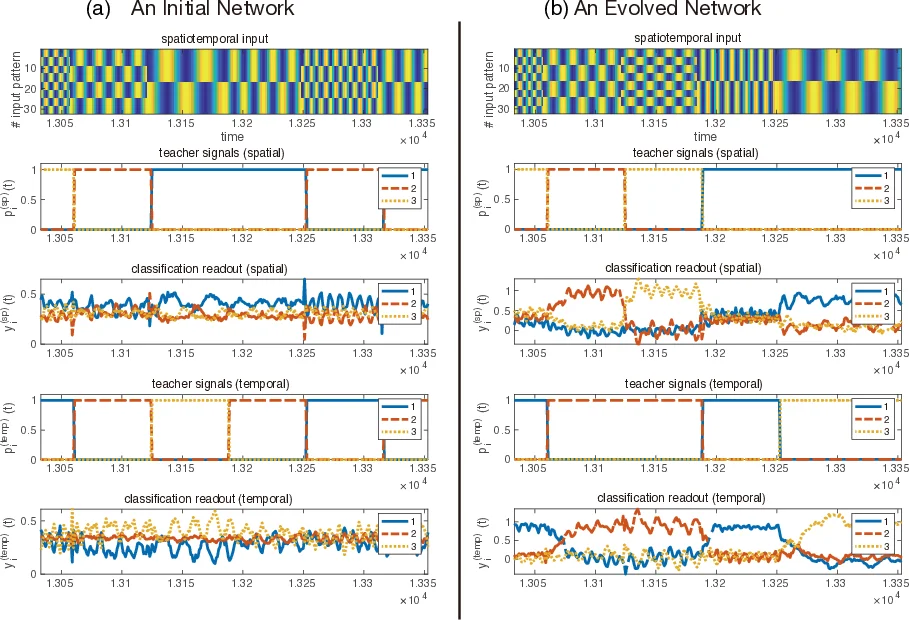

Two benchmark tasks are employed. The “separation task” presents a product of a spatial pattern (a static square‑wave vector) and a temporal pattern (a cosine wave) as input. The network must simultaneously decode the spatial component and the temporal component, each via a dedicated set of read‑out units. A four‑step delay is imposed so that the target output corresponds to the input presented four steps earlier, demanding short‑term memory and nonlinear transformation. The “combination task” requires detection of specific spatial‑temporal pairings; each read‑out unit is assigned a subset of pattern combinations to fire for, testing the network’s ability to process conjunctive information rather than separate streams.
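To make the separation-task input concrete, here is a small sketch that builds a spatial square-wave vector, a cosine time series, their product, and a four-step alignment between inputs and targets. The period parameters, the ±1 square-wave levels, and the helper names are assumptions; the summary does not give the exact pattern definitions or how targets are encoded.

```python
import numpy as np

def separation_input(n_channels, n_steps, spatial_period, temporal_period):
    """Return (inputs, spatial, temporal) for one spatial/temporal pairing.

    inputs[t, k] = temporal[t] * spatial[k]
    spatial_period must be an even integer >= 2.
    """
    k = np.arange(n_channels)
    spatial = np.where((k // (spatial_period // 2)) % 2 == 0, 1.0, -1.0)
    t = np.arange(n_steps)
    temporal = np.cos(2.0 * np.pi * t / temporal_period)
    inputs = temporal[:, None] * spatial[None, :]
    return inputs, spatial, temporal

def align_with_delay(series, targets, delay=4):
    """Pair each row of `series` with the target from `delay` steps earlier."""
    return series[delay:], targets[:-delay]
```

For example, after collecting reservoir states over time, `align_with_delay(states, temporal, delay=4)` yields training pairs in which the read-out at step t must reproduce the temporal component from step t − 4.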

Experimental results show that random reservoirs, even after optimal read‑out training, achieve poor accuracy on both tasks. In contrast, after a modest number of GA generations (typically <50), ERCs reach >90 % classification accuracy on the separation task and substantially higher performance on the combination task compared with the baseline. Analysis of the evolved topologies reveals a transition from purely random connectivity to structures containing feed‑forward pathways complemented by feedback loops, reminiscent of cortical microcircuit motifs.

Crucially, the authors demonstrate functional differentiation: certain neurons become highly selective to specific spatial patterns, others to particular temporal frequencies. Mutual information analysis between input patterns and individual neuron activations confirms that information flow is partitioned across the reservoir, mirroring the brain’s functional parcellation. Parameter sweeps indicate that the magnitude of the decay constants and the degree of sparsity modulate the balance between expanding and contracting dynamics, thereby controlling the extent of specialization.
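One simple way to estimate the mutual information between an input-pattern label and a single neuron's activation is a histogram (plug-in) estimator, sketched below. The summary does not say which estimator the authors used, so the binning scheme and the `n_bins` parameter are assumptions.

```python
import numpy as np

def mutual_information(labels, activations, n_bins=8):
    """Plug-in estimate of I(label; activation) in bits.

    labels:      (T,) discrete pattern label per time step
    activations: (T,) one neuron's activation per time step
    """
    labels = np.asarray(labels)
    activations = np.asarray(activations, dtype=float)
    edges = np.histogram_bin_edges(activations, bins=n_bins)
    binned = np.digitize(activations, edges[1:-1])   # bin indices 0 .. n_bins-1
    classes, lab_idx = np.unique(labels, return_inverse=True)
    joint = np.zeros((len(classes), n_bins))
    np.add.at(joint, (lab_idx, binned), 1.0)          # joint counts
    joint /= joint.sum()
    p_l = joint.sum(axis=1, keepdims=True)
    p_a = joint.sum(axis=0, keepdims=True)
    nz = joint > 0
    return float(np.sum(joint[nz] * np.log2(joint[nz] / (p_l @ p_a)[nz])))
```

A neuron specialized for spatial patterns would show high mutual information with the spatial label and low mutual information with the temporal label, and the reverse for a temporally selective neuron. Plug-in estimates are biased upward for small samples, so comparisons across neurons are more reliable than absolute values.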

The study proposes that the interplay between chaotic expansion and near‑stable contraction provides a mechanistic basis for the emergence of specialized processing units in adaptive neural systems. By coupling evolutionary optimization with reservoir computing, the authors present a framework where the reservoir is no longer a static “black box” but an adaptable substrate capable of self‑organizing functional modules. The work opens avenues for applying ERCs to more complex multimodal perception, continual learning, and embodied robotics, where the ability to evolve internal dynamics in response to environmental constraints could yield more robust and flexible artificial intelligence.
