Coupling-based Invertible Neural Networks Are Universal Diffeomorphism Approximators

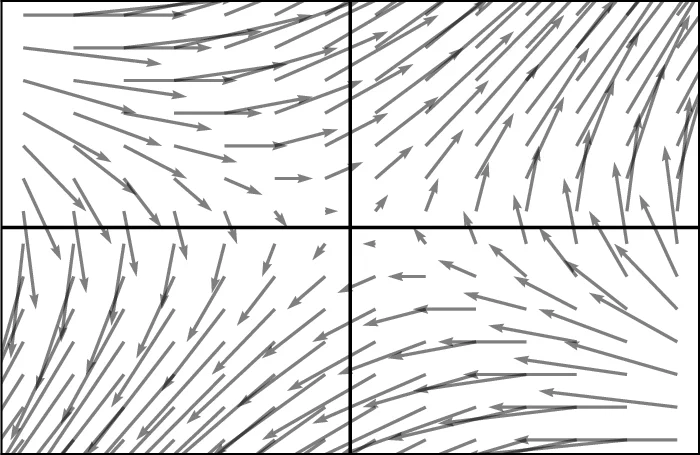

Invertible neural networks based on coupling flows (CF-INNs) have various machine learning applications such as image synthesis and representation learning. However, their desirable characteristics, such as analytic invertibility, come at the cost of restricting the functional forms. This raises a question about their representation power: are CF-INNs universal approximators for invertible functions? Without universality, there could be a well-behaved invertible transformation that the CF-INN can never approximate, which would render the model class unreliable. We answer this question by establishing a convenient criterion: a CF-INN is universal if its layers contain affine coupling and invertible linear functions as special cases. As a corollary, we affirmatively resolve a previously unsolved problem: whether normalizing flow models based on affine coupling can be universal distributional approximators. In the course of proving the universality, we prove a general theorem showing the equivalence of universality for certain diffeomorphism classes, a theoretical insight that is of interest in its own right.

💡 Research Summary

This paper addresses a fundamental question about the expressive power of coupling‑flow based invertible neural networks (CF‑INNs): can they universally approximate arbitrary invertible functions? While CF‑INNs enjoy analytic invertibility and tractable Jacobians, their layer designs impose structural constraints that could, in principle, limit their ability to represent certain diffeomorphisms. The authors answer this by establishing a simple yet powerful criterion: a CF‑INN is universal if its constituent layers can realize affine coupling transformations and invertible linear maps as special cases.
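To make the two building blocks named in the criterion concrete, here is a minimal sketch of an affine coupling layer followed by an invertible linear map, here a coordinate permutation, which is only one simple special case. This is an illustration, not the authors' code: the conditioner functions `s` and `t` are hypothetical stand-ins for what would normally be neural networks, and the function names, the dimension, and the split point `d` are all assumptions.

```python
import math

def affine_coupling_forward(x, d, s, t):
    """Map (x_a, x_b) -> (x_a, x_b * exp(s(x_a)) + t(x_a)) with x_a = x[:d]."""
    x_a, x_b = x[:d], x[d:]
    scale, shift = s(x_a), t(x_a)
    y_b = [xb * math.exp(sc) + sh for xb, sc, sh in zip(x_b, scale, shift)]
    # The Jacobian is triangular, so log|det J| is the sum of the log-scales.
    return x_a + y_b, sum(scale)

def affine_coupling_inverse(y, d, s, t):
    """Analytic inverse: x_a passes through unchanged, so s and t can be recomputed."""
    y_a, y_b = y[:d], y[d:]
    scale, shift = s(y_a), t(y_a)
    x_b = [(yb - sh) * math.exp(-sc) for yb, sc, sh in zip(y_b, scale, shift)]
    return y_a + x_b

def permute(x, perm):
    """A permutation: an invertible linear map with |det| = 1."""
    return [x[i] for i in perm]

def permute_inverse(y, perm):
    x = [0.0] * len(y)
    for j, i in enumerate(perm):
        x[i] = y[j]
    return x

# Hypothetical conditioners (assumption: fixed functions instead of trained networks).
def s(x_a):
    return [math.tanh(x_a[0]), math.tanh(x_a[1])]

def t(x_a):
    return [x_a[0] * x_a[1], x_a[0] - x_a[1]]

D_SPLIT = 2
PERM = [2, 3, 0, 1]  # swap the two halves so the second layer transforms the other block

def flow_forward(x):
    y, ld1 = affine_coupling_forward(x, D_SPLIT, s, t)
    y = permute(y, PERM)  # contributes 0 to the log-determinant
    y, ld2 = affine_coupling_forward(y, D_SPLIT, s, t)
    return y, ld1 + ld2

def flow_inverse(y):
    x = affine_coupling_inverse(y, D_SPLIT, s, t)
    x = permute_inverse(x, PERM)
    return affine_coupling_inverse(x, D_SPLIT, s, t)
```

Calling `flow_inverse(flow_forward(x)[0])` should recover `x` up to floating-point error. Each coupling layer leaves its first `d` coordinates untouched, which is the structural restriction whose effect on expressive power the paper analyzes; the permutation between layers lets the second layer act on the coordinates the first one left alone.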

The work begins by formalizing three notions of universality. Lᵖ‑universality requires that, for any target function f, any compact set K, and any ε > 0, there exists a model g whose Lᵖ error on K is below ε. Sup‑universality strengthens this to a uniform (supremum‑norm) bound on K. Distributional universality demands that a model can push forward a known reference distribution (e.g., uniform on
