A Characteristic Function Approach to Deep Implicit Generative Modeling


Authors: Abdul Fatir Ansari, Jonathan Scarlett, Harold Soh

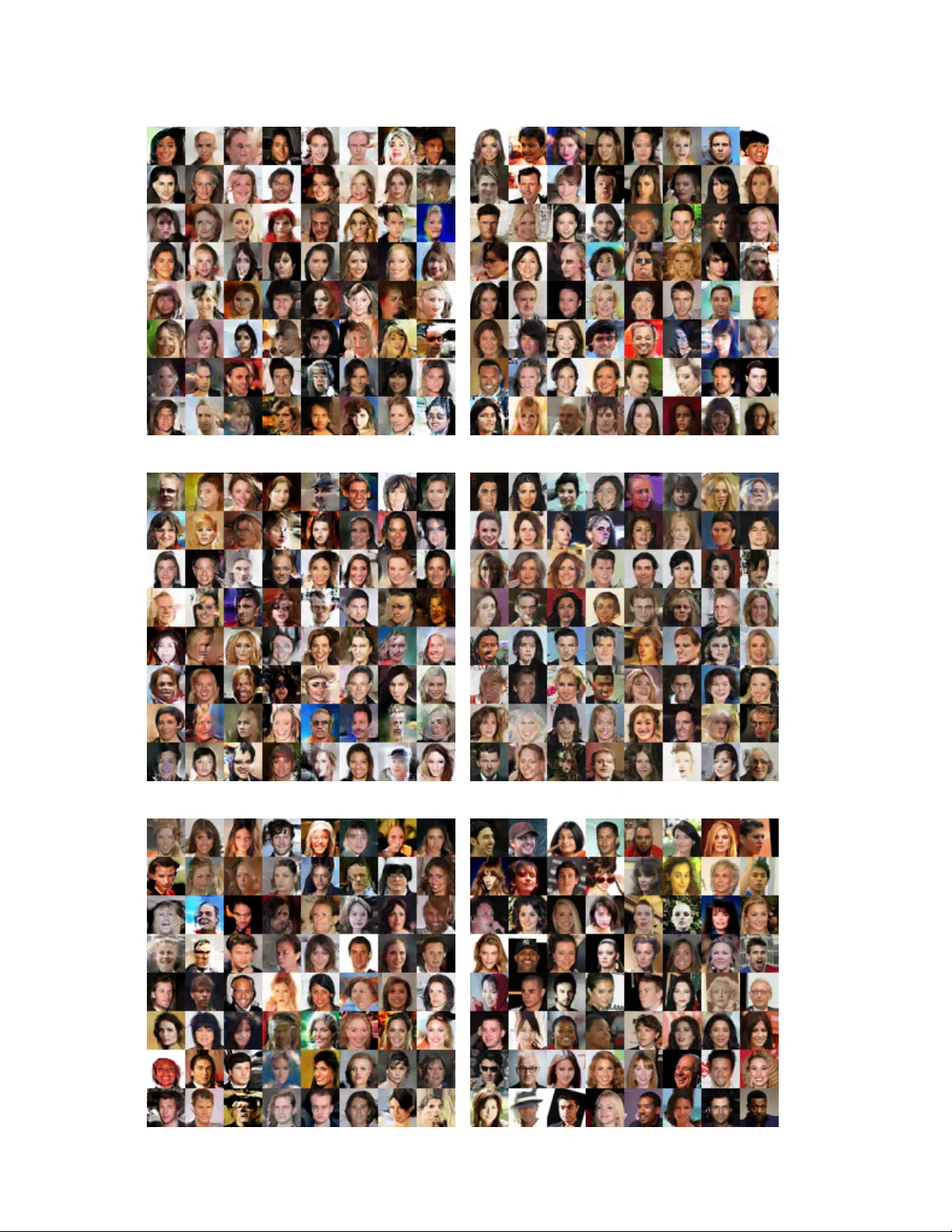

Abdul Fatir Ansari†, Jonathan Scarlett†‡, and Harold Soh†
† Department of Computer Science, ‡ Department of Mathematics, National University of Singapore
{afatir, scarlett, harold}@comp.nus.edu.sg

Abstract

Implicit Generative Models (IGMs) such as GANs have emerged as effective data-driven models for generating samples, particularly images. In this paper, we formulate the problem of learning an IGM as minimizing the expected distance between characteristic functions. Specifically, we minimize the distance between characteristic functions of the real and generated data distributions under a suitably-chosen weighting distribution. This distance metric, which we term the characteristic function distance (CFD), can be (approximately) computed with linear time-complexity in the number of samples, in contrast with the quadratic-time Maximum Mean Discrepancy (MMD). By replacing the discrepancy measure in the critic of a GAN with the CFD, we obtain a model that is simple to implement and stable to train. The proposed metric enjoys desirable theoretical properties including continuity and differentiability with respect to generator parameters, and continuity in the weak topology. We further propose a variation of the CFD in which the weighting distribution parameters are also optimized during training; this obviates the need for manual tuning, and leads to an improvement in test power relative to CFD. We demonstrate experimentally that our proposed method outperforms WGAN and MMD-GAN variants on a variety of unsupervised image generation benchmarks.

1. Introduction

Implicit Generative Models (IGMs), such as Generative Adversarial Networks (GANs) [14], seek to learn a model $\mathbb{Q}_\theta$ of an underlying data distribution $\mathbb{P}$ using samples from $\mathbb{P}$.
Unlike prescribed probabilistic models, IGMs do not require a likelihood function, and thus are appealing when the data likelihood is unknown or intractable. Empirically, GANs have excelled at numerous tasks, from unsupervised image generation [20] to policy learning [19].

The original GAN suffers from optimization instability and mode collapse, and often requires various ad-hoc tricks to stabilize training [34]. Subsequent research has revealed that the generator-discriminator setup in the GAN minimizes the Jensen-Shannon divergence between the real and generated data distributions; this divergence possesses discontinuities that result in uninformative gradients as $\mathbb{Q}_\theta$ approaches $\mathbb{P}$, which hampers training. Various works have since established desirable properties for a divergence that can ease GAN training, and proposed alternative training schemes [2, 37, 3], primarily using distances belonging to the Integral Probability Metric (IPM) family [32]. One popular IPM is the kernel-based metric Maximum Mean Discrepancy (MMD), and a significant portion of recent work has focussed on deriving better MMD-GAN variants [24, 5, 1, 25].

In this paper, we undertake a different, more elementary approach, and formulate the problem of learning an IGM as minimizing the expected distance between characteristic functions of real and generated data distributions. Characteristic functions are widespread in probability theory and have been used for two-sample testing [17, 12, 8], yet, surprisingly, they have not yet been investigated for GAN training. We find that this approach leads to a simple and computationally efficient loss: the characteristic function distance (CFD). Computing the CFD requires linear time in the number of samples (unlike the quadratic-time MMD), and our experimental results indicate that CFD minimization results in effective training.
This work provides both theoretical and empirical support for using the CFD to train IGMs. We first establish that the CFD is continuous and differentiable almost everywhere with respect to the parameters of the generator, and that it satisfies continuity in the weak topology, key properties that make it a suitable GAN metric [3, 24]. We provide novel direct proofs that supplement the existing theory on GAN training metrics. Algorithmically, our key idea is simple: train GANs using empirical estimates of the CFD under optimized weighting distributions. We report on systematic experiments using synthetic distributions and four benchmark image datasets (MNIST, CIFAR10, STL10, CelebA). Our experiments demonstrate that the CFD-based approach outperforms WGAN and MMD-GAN variants on quantitative evaluation metrics. From a practical perspective, we find the CFD-based GANs are simple to implement and stable to train.

In summary, the key contributions of this work are:

• a novel approach to train implicit generative models using a loss derived from characteristic functions;
• theoretical results showing that the proposed loss metric is continuous and differentiable in the parameters of the generator, and satisfies continuity in the weak topology;
• experimental results showing that our approach leads to effective generative models that compare favorably against state-of-the-art WGAN and MMD-GAN variants on a variety of synthetic and real-world datasets.

2. Probability Distances and GANs

We begin by providing a brief review of the Generative Adversarial Network (GAN) framework and recent distance-based methods for training GANs. A GAN is a generative model that implicitly seeks to learn the data distribution $\mathbb{P}_X$ given samples $\{x_i\}_{i=1}^n$ from $\mathbb{P}_X$. The GAN consists of a generator network $g_\theta$ and a critic network $f_\phi$ (also called the discriminator).
The generator $g_\theta: \mathcal{Z} \to \mathcal{X}$ transforms a latent vector $z \in \mathcal{Z}$ sampled from a simple distribution (e.g., Gaussian) to a vector $\hat{x}$ in the data space. The original GAN [14] was defined via an adversarial two-player game between the critic and the generator; the critic attempts to distinguish the true data samples from ones obtained from the generator, and the generator attempts to make its samples indistinguishable from the true data.

In more recent work, this two-player game is cast as minimizing a divergence between the real data distribution and the generated distribution. The critic $f_\phi$ evaluates some probability divergence between the true and generated samples, and is optimized to maximize this divergence. In the original GAN, the associated (implicit) distance measure is the Jensen-Shannon divergence, but alternative divergences have since been introduced, e.g., the 1-Wasserstein distance [3, 16], Cramér distance [4], maximum mean discrepancy (MMD) [24, 5, 1], and Sobolev IPM [31]. Many distances proposed in the literature can be reduced to the Integral Probability Metric (IPM) framework with different restrictions on the function class.

3. Characteristic Function Distance

In this work, we propose to train GANs using a distance metric based on characteristic functions (CFs). Letting $\mathbb{P}$ be the probability measure associated with a random vector $X$ taking values in $\mathbb{R}^d$, the characteristic function $\phi_{\mathbb{P}}: \mathbb{R}^d \to \mathbb{C}$ of $X$ is given by

$$\phi_{\mathbb{P}}(t) = \mathbb{E}_{x \sim \mathbb{P}}\left[e^{i\langle t, x\rangle}\right] = \int_{\mathbb{R}^d} e^{i\langle t, x\rangle}\, d\mathbb{P}(x), \tag{1}$$

where $t \in \mathbb{R}^d$ is the input argument and $i = \sqrt{-1}$. Characteristic functions are widespread in probability theory, and are often used as an alternative to probability density functions. The characteristic function of a random variable completely determines its distribution, i.e., for two distributions $\mathbb{P}$ and $\mathbb{Q}$, $\mathbb{P} = \mathbb{Q}$ if and only if $\phi_{\mathbb{P}} = \phi_{\mathbb{Q}}$.
Unlike the density function, the characteristic function always exists, and is uniformly continuous and bounded: $|\phi_{\mathbb{P}}(t)| \le 1$.

The squared Characteristic Function Distance (CFD) [8, 18] between two distributions $\mathbb{P}$ and $\mathbb{Q}$ is given by the weighted integrated squared error between their characteristic functions:

$$\mathrm{CFD}^2_\omega(\mathbb{P}, \mathbb{Q}) = \int_{\mathbb{R}^d} \left|\phi_{\mathbb{P}}(t) - \phi_{\mathbb{Q}}(t)\right|^2 \omega(t; \eta)\, dt, \tag{2}$$

where $\omega(t; \eta)$ is a weighting function, which we henceforth assume to be parametrized by $\eta$ and chosen such that the integral in Eq. (2) converges. When $\omega(t; \eta)$ is the probability density function of a distribution on $\mathbb{R}^d$, the integral in Eq. (2) can be written as an expectation:

$$\mathrm{CFD}^2_\omega(\mathbb{P}, \mathbb{Q}) = \mathbb{E}_{t \sim \omega(t; \eta)}\left[\left|\phi_{\mathbb{P}}(t) - \phi_{\mathbb{Q}}(t)\right|^2\right]. \tag{3}$$

By analogy to Fourier analysis in signal processing, Eq. (3) can be interpreted as the expected discrepancy between the Fourier transforms of two signals at frequencies sampled from $\omega(t; \eta)$. If $\mathrm{supp}(\omega) = \mathbb{R}^d$, it can be shown using the uniqueness theorem of characteristic functions that $\mathrm{CFD}_\omega(\mathbb{P}, \mathbb{Q}) = 0 \iff \mathbb{P} = \mathbb{Q}$ [38].

In practice, the CFD can be approximated using empirical characteristic functions and finite samples from the weighting distribution $\omega(t; \eta)$. To elaborate, the characteristic function of a degenerate distribution $\delta_a$ for $a \in \mathbb{R}^d$ is given by $e^{i\langle t, a\rangle}$, where $t \in \mathbb{R}^d$. Given observations $X := \{x_1, \ldots, x_n\}$ from a probability distribution $\mathbb{P}$, the empirical distribution is a mixture of degenerate distributions with equal weights, and the corresponding empirical characteristic function $\hat{\phi}_{\mathbb{P}}$ is the equally weighted average of the characteristic functions of these degenerate distributions:

$$\hat{\phi}_{\mathbb{P}}(t) = \frac{1}{n}\sum_{j=1}^{n} e^{i\langle t, x_j\rangle}. \tag{4}$$

Let $X := \{x_1, \ldots, x_n\}$ and $Y := \{y_1, \ldots, y_m\}$ with $x_i, y_j \in \mathbb{R}^d$ be samples from the distributions $\mathbb{P}$ and $\mathbb{Q}$ respectively, and let $t_1, \ldots, t_k$ be samples from $\omega(t; \eta)$.
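Eq. (4) is cheap to evaluate: for $k$ frequencies and $n$ samples in $\mathbb{R}^d$ it costs $O(nkd)$ operations. The following is a minimal sketch, assuming NumPy, with samples and frequencies stored as rows of arrays and a hypothetical helper name `empirical_cf`; it compares the ECF of standard-normal samples with the analytical CF $e^{-\|t\|^2/2}$.

```python
import numpy as np


def empirical_cf(x, t):
    """Empirical characteristic function, Eq. (4).

    x: (n, d) array whose rows are samples x_1, ..., x_n.
    t: (k, d) array whose rows are frequencies t_1, ..., t_k.
    Returns a complex (k,) array with entries (1/n) * sum_j exp(i <t_i, x_j>).
    """
    x = np.asarray(x, dtype=float)
    t = np.asarray(t, dtype=float)
    if x.ndim != 2 or t.ndim != 2 or x.shape[1] != t.shape[1]:
        raise ValueError("expected x of shape (n, d) and t of shape (k, d)")
    phases = t @ x.T  # (k, n) matrix of inner products <t_i, x_j>
    return np.exp(1j * phases).mean(axis=1)


# Standard-normal samples: the analytical CF is exp(-||t||^2 / 2).
rng = np.random.default_rng(0)
x = rng.standard_normal((20000, 1))
t = np.array([[0.0], [0.5], [1.0], [2.0]])
phi_hat = empirical_cf(x, t)
phi_true = np.exp(-0.5 * np.sum(t**2, axis=1))
print(np.abs(phi_hat - phi_true))  # Monte Carlo errors, shrinking roughly like 1/sqrt(n)
```

At $t = 0$ the ECF equals 1 exactly, and $|\hat{\phi}_{\mathbb{P}}(t)| \le 1$ holds for every $t$, mirroring the bound on $\phi_{\mathbb{P}}$.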
We define the empirical characteristic function distance (ECFD) between $\mathbb{P}$ and $\mathbb{Q}$ as

$$\mathrm{ECFD}^2_\omega(\mathbb{P}, \mathbb{Q}) = \frac{1}{k}\sum_{i=1}^{k}\left|\hat{\phi}_{\mathbb{P}}(t_i) - \hat{\phi}_{\mathbb{Q}}(t_i)\right|^2, \tag{5}$$

where $\hat{\phi}_{\mathbb{P}}$ and $\hat{\phi}_{\mathbb{Q}}$ are the empirical CFs, computed using $X$ and $Y$ respectively.

Figure 1: (left) Variation of test power with the number of dimensions for ECFD-based tests (ECFD, ECFD-Smooth, OECFD, OECFD-Smooth) when $\mathbb{P} \neq \mathbb{Q}$; (right) Change in the scale of the weighting distribution upon optimization, for a dimension with $\mu^{\mathbb{P}}_j \neq \mu^{\mathbb{Q}}_j$ and one with $\mu^{\mathbb{P}}_i = \mu^{\mathbb{Q}}_i$.

A quantity related to the CFD (Eq. 2) has been studied in [33] and [18], in which the discrepancy between the analytical and empirical characteristic functions of stable distributions is minimized for parameter estimation. The CFD is well-suited to this application because stable distributions generally do not admit closed-form density functions, making maximum likelihood estimation difficult. Parameter fitting has also been explored for other models such as mixtures of Gaussians, stable ARMA processes, and affine jump diffusion models [39]. More recently, [8] proposed fast ($O(n)$ in the number of samples $n$) two-sample tests based on ECFD, as well as a smoothed version of ECFD in which the characteristic function is convolved with an analytic kernel. The authors empirically show that ECFD and its smoothed variant have a better test-power/run-time trade-off compared to quadratic-time tests, and better test power than the sub-quadratic-time variants of MMD.

3.1.
Optimized ECFD for Two-Sample Testing

The choice of $\omega(t; \eta)$ is important for the success of ECFD in distinguishing two different distributions; choosing an appropriate distribution and/or set of parameters $\eta$ allows better coverage of the frequencies at which the differences in $\mathbb{P}$ and $\mathbb{Q}$ lie. For instance, if the differences are concentrated at frequencies far away from the origin and $\omega(t; \eta)$ is Gaussian, the test power can be improved by suitably enlarging the variance of each coordinate of $\omega(t; \eta)$.

To increase the power of ECFD, we propose to optimize the parameters $\eta$ (e.g., the variance associated with a normal distribution) of the weighting distribution $\omega(t; \eta)$ to maximize the power of the test. However, care should be taken when specifying how rich the class of functions $\omega(\cdot; \eta)$ is: the choice of which parameters to optimize and the associated constraints is important. Excessive optimization may cause the test to fixate on differences that are merely due to fluctuations in the sampling. As an extreme example, we found that optimizing the $t$'s directly (instead of optimizing the weighting distribution) severely degrades the test's ability to correctly accept the null hypothesis $\mathbb{P} = \mathbb{Q}$.

To validate our approach, we conducted a basic experiment using high-dimensional Gaussians, similar to [8]. Specifically, we used two multivariate Gaussians $\mathbb{P}$ and $\mathbb{Q}$ that have the same mean in all dimensions except one. As the dimensionality increases, it becomes increasingly difficult to distinguish between samples from the two distributions. In our tests, the weighting distribution $\omega(t; \eta)$ was chosen to be a Gaussian distribution $\mathcal{N}(\mathbf{0}, \mathrm{diag}(\sigma^2))$, 10000 samples each were taken from $\mathbb{P}$ and $\mathbb{Q}$, and the number of frequencies ($k$) was set to 3. We optimized the parameter vector $\eta = \{\sigma\}$ to maximize the ECFD using the Adam optimizer for 100 iterations with a batch size of 1000. Fig.
1a shows the variation of the test power (i.e., the fraction of times the null hypothesis $\mathbb{P} = \mathbb{Q}$ is rejected) with the number of dimensions. OECFD refers to the optimized ECFD, and the "Smooth" suffix indicates the smoothed ECFD variant proposed by [8]. We see that optimization of $\eta$ increases the power of ECFD and ECFD-Smooth, particularly at higher dimensionalities. There do not appear to be significant differences between the optimized smoothed and non-smoothed ECFD variants. Moreover, the optimization improved the ability of the test to correctly distinguish the two different distributions, but did not hamper its ability to correctly accept the null hypothesis when the distributions are the same (see Appendix C).

To investigate how $\sigma$ is adapted, we visualize two dimensions $\{i, j\}$ from the dataset where $\mu^{\mathbb{P}}_i = \mu^{\mathbb{Q}}_i$ and $\mu^{\mathbb{P}}_j \neq \mu^{\mathbb{Q}}_j$. Fig. 1b shows the absolute difference between the ECFs of $\mathbb{P}$ and $\mathbb{Q}$ along each of these two dimensions, together with the corresponding marginal of the weighting distribution. The solid blue line shows the optimized distribution (for OECFD), while the dashed orange line shows the initial distribution (i.e., $\sigma = 1$ for ECFD and ECFD-Smooth). In the dimension where the distributions are the same, $\sigma$ deviates only slightly from its initial value. However, in the dimension where the distributions differ, the increase in variance is more pronounced, compensating for the spread of the difference between the ECFs away from the origin.

4. Implicit Generative Modeling using CFD

In this section, we turn our attention to applying the (optimized) CFD for learning IGMs, specifically GANs. As in the standard GAN, our model consists of a generator $g_\theta: \mathcal{Z} \to \mathcal{X}$ and a critic $f_\phi: \mathcal{X} \to \mathbb{R}^m$, with parameter vectors $\theta$ and $\phi$, and data/latent spaces $\mathcal{X} \subseteq \mathbb{R}^d$ and $\mathcal{Z} \subseteq \mathbb{R}^p$. Below, we write $\Theta$, $\Phi$, $\Pi$ for the spaces in which the parameters $\theta$, $\phi$, $\eta$ lie.
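Both the optimized two-sample test of Section 3.1 and the CF-GAN objective that follows evaluate Eq. (5) with frequencies drawn from a scaled weighting distribution. The sketch below is a minimal NumPy illustration, not the authors' code: it assumes frequencies are written as $t = \sigma \odot \varepsilon$ with $\varepsilon$ drawn from a standard Gaussian or a Student's t, and the function names and the optional division by $\|\sigma\|$ are illustrative choices. Its cost is $O((n + m)kd)$, i.e., linear in the number of samples.

```python
import numpy as np


def sample_frequencies(k, sigma, rng, weighting="gaussian", df=2.0):
    """Draw k frequencies t = sigma * eps, scaling each dimension by sigma.

    Writing t as a scaled standard draw is one way (an assumption here) to make
    sigma trainable once the computation is ported to an autodiff framework.
    """
    sigma = np.asarray(sigma, dtype=float)
    if weighting == "gaussian":
        eps = rng.standard_normal((k, sigma.size))
    elif weighting == "student_t":
        eps = rng.standard_t(df, size=(k, sigma.size))
    else:
        raise ValueError(f"unknown weighting: {weighting}")
    return eps * sigma


def ecfd2(x, y, t, sigma=None):
    """Squared ECFD, Eq. (5): mean over i of |ECF_x(t_i) - ECF_y(t_i)|^2.

    If sigma is given, the result is divided by ||sigma||, the normalization
    described for the case where the scale is optimized.
    """
    phi_x = np.exp(1j * (t @ x.T)).mean(axis=1)  # (k,) ECF of x at each t_i
    phi_y = np.exp(1j * (t @ y.T)).mean(axis=1)  # (k,) ECF of y at each t_i
    value = np.mean(np.abs(phi_x - phi_y) ** 2)
    if sigma is not None:
        value = value / np.linalg.norm(sigma)
    return value


# Two 2-D Gaussians that differ in the mean of one coordinate only.
rng = np.random.default_rng(0)
n, k = 5000, 64
x = rng.standard_normal((n, 2))
y = rng.standard_normal((n, 2))
y_shifted = y + np.array([1.0, 0.0])
sigma = np.ones(2)
t = sample_frequencies(k, sigma, rng)

same = ecfd2(x, y, t)          # near zero: only sampling noise
diff = ecfd2(x, y_shifted, t)  # clearly positive
print(same, diff, ecfd2(x, y_shifted, t, sigma=sigma))
```

Passing `weighting="student_t"` gives a heavier-tailed weighting comparable to the $\mathcal{T}$ distribution (2 degrees of freedom) used in the experiments. In an actual CF-GAN, `x` and `y` would be critic features $f_\phi(\cdot)$ of real and generated batches, and $\sigma$ would be updated by gradient ascent, which NumPy alone does not provide.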
The generator minimizes the empirical CFD between the real and generated data. Instead of minimizing the distance between characteristic functions of raw high-dimensional data, we use a critic neural network $f_\phi$ that is trained to maximize the CFD between real and generated data distributions in a learned lower-dimensional space. This results in the following minimax objective for the IGM:

$$\inf_{\theta \in \Theta} \sup_{\psi \in \Psi} \mathrm{CFD}^2_\omega\left(\mathbb{P}_{f_\phi(X)}, \mathbb{P}_{f_\phi(g_\theta(Z))}\right), \tag{6}$$

where $\psi = \{\phi, \eta\}$ (with corresponding parameter space $\Psi$), and $\eta$ is the parameter vector of the weighting distribution $\omega$. The optimization over $\eta$ is omitted if we choose not to optimize the weighting distribution. In our experiments, we set $\eta = \{\sigma\}$, with $\sigma$ indicating the scale of each dimension of $\omega$. Since evaluating the CFD requires knowledge of the data distribution, in practice we optimize the empirical estimate $\mathrm{ECFD}^2_\omega$ instead of $\mathrm{CFD}^2_\omega$. We henceforth refer to this model as the Characteristic Function Generative Adversarial Network (CF-GAN).

4.1. CFD Properties: Continuity, Differentiability, and Weak Topology

Similar to the recently proposed Wasserstein [3] and MMD [24] GANs, the CFD exhibits desirable mathematical properties. Specifically, the CFD is continuous and differentiable almost everywhere in the parameters of the generator (Thm. 1). Moreover, as it is continuous in the weak topology (Thm. 2), it can provide a signal to the generator $g_\theta$ that is more informative for training than other "distances" that lack this property (e.g., the Jensen-Shannon divergence). In the following, we provide proofs for the above claims under assumptions similar to [3].

The following theorem formally states the result of continuity and differentiability in $\theta$ almost everywhere, which is desirable for permitting training via gradient descent.

Theorem 1.
Assume that (i) $f_\phi \circ g_\theta$ is locally Lipschitz with respect to $(\theta, z)$ with constants $L(\theta, z)$ not depending on $\phi$ and satisfying $\mathbb{E}_z[L(\theta, z)] < \infty$; (ii) $\sup_{\eta \in \Pi} \mathbb{E}_{\omega(t; \eta)}[\|t\|] < \infty$. Then, the function $\sup_{\psi \in \Psi} \mathrm{CFD}^2_\omega\left(\mathbb{P}_{f_\phi(X)}, \mathbb{P}_{f_\phi(g_\theta(Z))}\right)$ is continuous in $\theta \in \Theta$ everywhere, and differentiable in $\theta \in \Theta$ almost everywhere.

The following theorem establishes continuity in the weak topology, and concerns general convergent distributions as opposed to only those corresponding to $g_\theta(z)$. In this result, we let $\mathbb{P}^{(\phi)}$ be the distribution of $f_\phi(x)$ when $x \sim \mathbb{P}$, and similarly for $\mathbb{P}^{(\phi)}_n$.

Theorem 2. Assume that (i) $f_\phi$ is $L_f$-Lipschitz for some $L_f$ not depending on $\phi$; (ii) $\sup_{\eta \in \Pi} \mathbb{E}_{\omega(t)}[\|t\|] < \infty$. Then, the function $\sup_{\psi \in \Psi} \mathrm{CFD}^2_\omega(\mathbb{P}^{(\phi)}_n, \mathbb{P}^{(\phi)})$ is continuous in the weak topology, i.e., if $\mathbb{P}_n \xrightarrow{D} \mathbb{P}$, then $\sup_{\psi \in \Psi} \mathrm{CFD}^2_\omega(\mathbb{P}^{(\phi)}_n, \mathbb{P}^{(\phi)}) \to 0$, where $\xrightarrow{D}$ denotes convergence in distribution.

The proofs are given in the appendix. In brief, we bound the difference between characteristic functions using geometric arguments; we interpret $e^{ia}$ as a vector on the unit circle, and note that $|e^{ia} - e^{ib}| \le |a - b|$. We then upper-bound the difference of function values in terms of $\mathbb{E}_{\omega(t)}[\|t\|]$ (assumed to be finite) and averages of Lipschitz functions of $x, x'$ under the distributions considered. The Lipschitz properties ensure that the function difference vanishes when one distribution converges to the other.

Various generators satisfy the locally Lipschitz assumption, e.g., when $g_\theta$ is a feed-forward network with ReLU activations. To ensure that $f_\phi$ is Lipschitz, common methods employed in prior work include weight clipping [3] and gradient penalty [16]. In addition, many common distributions satisfy $\mathbb{E}_{\omega(t)}[\|t\|] < \infty$, e.g., Gaussian, Student-t, and Laplace with fixed $\sigma$.
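The geometric bound in the proof sketch can be chained into an explicit inequality that shows where the moment condition on $\omega$ enters. The derivation below is an illustrative sketch for intuition, not a reproduction of the appendix proofs; $\pi$ (notation introduced here) denotes any coupling of $\mathbb{P}$ and $\mathbb{Q}$, i.e., any joint distribution of $(x, y)$ with marginals $\mathbb{P}$ and $\mathbb{Q}$.

$$
\begin{aligned}
\left|\phi_{\mathbb{P}}(t) - \phi_{\mathbb{Q}}(t)\right|
  &= \left|\mathbb{E}_{(x, y) \sim \pi}\left[e^{i\langle t, x\rangle} - e^{i\langle t, y\rangle}\right]\right|
   \le \mathbb{E}_{\pi}\left|\langle t, x - y\rangle\right|
   \le \|t\|\, \mathbb{E}_{\pi}\|x - y\|, \\
\mathrm{CFD}^2_\omega(\mathbb{P}, \mathbb{Q})
  &= \mathbb{E}_{t \sim \omega}\left[\left|\phi_{\mathbb{P}}(t) - \phi_{\mathbb{Q}}(t)\right|^2\right]
   \le 2\, \mathbb{E}_{t \sim \omega}\left[\left|\phi_{\mathbb{P}}(t) - \phi_{\mathbb{Q}}(t)\right|\right]
   \le 2\, \mathbb{E}_{\omega}[\|t\|]\; \mathbb{E}_{\pi}\|x - y\|.
\end{aligned}
$$

The first line uses $|e^{ia} - e^{ib}| \le |a - b|$ followed by the Cauchy-Schwarz inequality; the second uses $|\phi_{\mathbb{P}} - \phi_{\mathbb{Q}}| \le 2$. If both distributions are pushed through an $L_f$-Lipschitz critic, then $\mathbb{E}_\pi\|f_\phi(x) - f_\phi(y)\| \le L_f\, \mathbb{E}_\pi\|x - y\|$, which is how the Lipschitz assumptions enter. For a Gaussian weighting $\mathcal{N}(\mathbf{0}, \mathrm{diag}(\sigma^2))$, Jensen's inequality gives $\mathbb{E}_\omega[\|t\|] \le \sqrt{\mathbb{E}_\omega\|t\|^2} = \|\sigma\|$, so this bound grows with the scale of the weighting distribution.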
When $\sigma$ is unbounded and optimized, we normalize the CFD by $\|\sigma\|$, which prevents $\sigma$ from going to infinity.

An example demonstrating the necessity of Lipschitz assumptions in continuity results (albeit for a different metric) can be found in Example 1 of [1]. In the appendix, we discuss conditions under which Theorem 2 can be strengthened to an "if and only if" statement.

4.2. Relation to MMD and Prior Work

The CFD is related to the maximum mean discrepancy (MMD) [15]. Given samples from two distributions $\mathbb{P}$ and $\mathbb{Q}$, the squared MMD is given by

$$\mathrm{MMD}^2_\kappa(\mathbb{P}, \mathbb{Q}) = \mathbb{E}[\kappa(x, x')] + \mathbb{E}[\kappa(y, y')] - 2\,\mathbb{E}[\kappa(x, y)], \tag{7}$$

where $x, x' \sim \mathbb{P}$ and $y, y' \sim \mathbb{Q}$ are independent samples, and $\kappa$ is a kernel. When the weighting distribution of the CFD is equal to the inverse Fourier transform of the kernel in MMD (i.e., $\omega(t) = \mathcal{F}^{-1}\{\kappa\}$), the CFD and squared MMD are equivalent: $\mathrm{CFD}^2_\omega(\mathbb{P}, \mathbb{Q}) = \mathrm{MMD}^2_\kappa(\mathbb{P}, \mathbb{Q})$. Indeed, kernels with $\mathrm{supp}(\mathcal{F}^{-1}(\kappa)) = \mathbb{R}^d$ are called characteristic kernels [38], and when $\mathrm{supp}(\omega) = \mathbb{R}^d$, $\mathrm{MMD}_\kappa(\mathbb{P}, \mathbb{Q}) = 0$ if and only if $\mathbb{P} = \mathbb{Q}$. Although the two are formally equivalent under the above conditions, we find experimentally that optimizing empirical estimates of MMD and CFD results in different convergence profiles and model performance across a range of datasets. Also, unlike MMD, whose standard estimator takes quadratic time in the number of samples, the CFD takes $O(nk)$ time and is therefore computationally attractive when $k \ll n$.

Learning a generative model by minimizing the MMD between real and generated samples was proposed independently by [26] and [11]. The Generative Moment Matching Network (GMMN) [26] uses an autoencoder to first transform the data into a latent space, and then trains a generative network to produce latent vectors that match the true latent distribution.
The MMD-GAN [24] performs a similar input transformation using a network $f_\phi$ that is adversarially trained to maximize the MMD between the true distribution $\mathbb{P}_X$ and the generator distribution $\mathbb{Q}_\theta$; this results in a GAN-like min-max criterion. More recently, [5] and [1] have proposed different theoretically motivated regularizers on the gradient of the MMD-GAN critic that improve training. In our experiments, we compare against the MMD-GAN both with and without gradient regularization.

Very recent work [25] (IKL-GAN) has evaluated kernels parameterized in Fourier space, which are then used to compute MMD in MMD-GAN. In contrast to IKL-GAN, we derive the CF-GAN via characteristic functions rather than via MMD, and our method obviates the need for kernel evaluation. We also provide novel direct proofs for the theoretical properties of the optimized CFD that are not based on its equivalence to MMD. The IKL-GAN utilizes a neural network to sample random frequencies, whereas we use a simpler fixed distribution with a learned scale, reducing the number of hyperparameters to tune. Our method yields state-of-the-art performance, which suggests that the more complex setup in IKL-GAN may not be required for effective GAN training.

In parallel, significant work has gone into improving GAN training via architectural and optimization enhancements [30, 7, 20]; these research directions are orthogonal to our work and can be incorporated in our proposed model.

5. Experiments

In this section, we present empirical results comparing different variants of our proposed model, CF-GAN. We prefix O to the model name when the $\sigma$ parameters were optimized along with the critic, and omit it when $\sigma$ was kept fixed. Similarly, we suffix GP to the model name when a gradient penalty [16] was used to enforce the Lipschitzness of $f_\phi$. In the absence of gradient penalty, we clipped the weights of $f_\phi$ to $[-0.01, 0.01]$.
When the parameters $\sigma$ were optimized, we scaled the ECFD by $\|\sigma\|$ to prevent $\sigma$ from going to infinity, thereby ensuring $\mathbb{E}_{\omega(t)}[\|t\|] < \infty$.

We compare our proposed model against two variants of MMD-GAN: (i) MMD-GAN [24], which uses MMD with a mixture of RBF kernels as the distance metric; (ii) MMD-GAN-GP-L2 [5], which introduces an additive gradient penalty based on MMD's IPM witness function and an L2 penalty on discriminator activations, and uses a mixture of RQ kernels. We also compare against WGAN [3] and WGAN-GP [16] due to their close relation to MMD-GAN [24, 5]. Our code is available online at https://github.com/crslab/OCFGAN.

5.1. Synthetic Data

We first tested the methods on two synthetic 1D distributions: a simple unimodal distribution ($\mathcal{D}_1$) and a more complex bimodal distribution ($\mathcal{D}_2$). The distributions were constructed by transforming $z \sim \mathcal{N}(0, 1)$ using a function $h: \mathbb{R} \to \mathbb{R}$. For the unimodal dataset, we used the scale-shift function form used by [40], where $h(z) = \mu + \sigma z$. For the bimodal dataset, we used the function form used by planar flow [35], where $h(z) = \alpha z + \beta \tanh(\gamma \alpha z)$. We trained the various GAN models to approximate the distribution of the transformed samples. Once trained, we compared the transformation function $\hat{h}$ learned by the GAN against the true function $h$, using the mean absolute error (MAE), $\mathbb{E}_z[|h(z) - \hat{h}(z)|]$, to evaluate the models. Further details on the experimental setup are in Appendix B.1.

Figs. 2a and 2b show the variation of the MAE with training iterations. For both datasets, the models with gradient penalty converge to better minima. In $\mathcal{D}_1$, MMD-GAN-GP and OCF-GAN-GP converge to the same value of MAE, but MMD-GAN-GP converges faster. During our experiments, we observed that the scale of the weighting distribution (which is initialized to 1) falls rapidly before the MAE begins to decrease.
For the experiments with the scale fixed at 0.1 (CF-GAN-GP$_{\sigma=0.1}$) and 1 (CF-GAN-GP$_{\sigma=1}$), both models converge to the same MAE, but CF-GAN-GP$_{\sigma=1}$ takes much longer to converge than CF-GAN-GP$_{\sigma=0.1}$. This indicates that the optimization of the scale parameter leads to faster convergence. For the more complex dataset $\mathcal{D}_2$, MMD-GAN-GP takes significantly longer to converge compared to WGAN-GP and OCF-GAN-GP. OCF-GAN-GP converges fastest and to a better minimum, followed by WGAN-GP.

Figure 2: Variation of MAE for synthetic datasets $\mathcal{D}_1$ (left) and $\mathcal{D}_2$ (right) with generator iterations. The plots are averaged over 10 random runs. (Curves: WGAN, WGAN-GP, MMD-GAN, and MMD-GAN-GP in both panels; CF-GAN-GP($\mathcal{T}$) with $\sigma = 0.1$ and $\sigma = 1$ and OCF-GAN-GP($\mathcal{T}$) for $\mathcal{D}_1$; OCF-GAN($\mathcal{N}$) and OCF-GAN-GP($\mathcal{N}$) for $\mathcal{D}_2$.)

5.2. Image Generation

A recent large-scale analysis of GANs [29] showed that different models achieve similar best performance when given ample computational budget, and advocates comparing score distributions under practical settings. As such, we compare scores attained by the models from different initializations under fixed computational budgets. We used four datasets: 1) MNIST [23]: 60K grayscale images of handwritten digits; 2) CIFAR10 [22]: 50K RGB images; 3) CelebA [27]: ≈200K RGB images of celebrity faces; and 4) STL10 [9]: 100K RGB images. For all datasets, we center-cropped and scaled the images to 32 × 32.

Network and Hyperparameter Details. Given our computational budget and experiment setup, we used a DCGAN-like generator $g_\theta$ and critic $f_\phi$ architecture for all models (similar to [24]).
For MMD-GAN, we used a mixture of five RBF kernels (5-RBF) with different scales [24]. MMD-GAN-GP-L2 used a mixture of rational quadratic kernels (5-RQ). The kernel parameters and the trade-off parameters for gradient and L2 penalties were set according to [5]. We tested CF-GAN variants with two weighting distributions: Gaussian ($\mathcal{N}$) and Student's t ($\mathcal{T}$) (with 2 degrees of freedom). For CF-GAN, we tested 3 scale parameters in the set $\{0.2, 0.5, 1\}$, and we report the best results. The number of frequencies ($k$) for computing ECFD was set to 8. Please see Appendix B.2 for implementation details.

Evaluation Metrics. We compare the different models using three evaluation metrics: Fréchet Inception Distance (FID) [37], Kernel Inception Distance (KID) [5], and Precision-Recall (PR) for generative models [36]. Details on these metrics and the evaluation procedure can be found in Appendix B.2. In brief, the FID computes the Fréchet distance between two multivariate Gaussians and the KID computes the MMD (with a polynomial kernel of degree 3) between the real and generated data distributions. Both FID and KID give single-value scores, and PR gives a two-dimensional score which disentangles the quality of generated samples from the coverage of the data distribution. PR is defined by a pair $F_8$ (recall) and $F_{1/8}$ (precision), which represent the coverage and sample quality, respectively [36].

Results. In the following, we summarize our main findings, and relegate the details to the Appendix. Table 1 shows the FID and KID values achieved by different models for the CIFAR10, STL10, and CelebA datasets. In short, our model outperforms both variants of WGAN and MMD-GAN by a significant margin. OCF-GAN, using just one weighting function, outperforms both MMD-GANs that use a mixture of 5 different kernels.
We observe that the optimization of the scale parameter improves the performance of the models for both weighting distributions, and the introduction of gradient penalty as a means to ensure Lipschitzness of $f_\phi$ results in a significant improvement in the score values for all models. This is in line with the results of [16] and [5]. Overall, amongst the CF-GAN variants, OCF-GAN-GP with Gaussian weighting performs the best for all datasets.

The two-dimensional precision-recall scores in Fig. 3 provide further insight into the performance of different models. Across all the datasets, the addition of gradient penalty (OCF-GAN-GP) rather than weight clipping (OCF-GAN) leads to a larger improvement in recall than in precision. This result supports recent arguments that weight clipping forces the critic to learn simpler functions, while gradient penalty is more flexible [16]. The improvement in recall with the introduction of gradient penalty is more noticeable for the CIFAR10 and STL10 datasets than for CelebA. This result is intuitive; CelebA is a more uniform and simpler dataset compared to CIFAR10/STL10, which contain more diverse classes of images, and thus likely have modes that are more complex and far apart.

Figure 3: Precision-Recall scores ($F_8$ recall vs. $F_{1/8}$ precision; higher is better) for CIFAR10 (left), STL10 (center), and CelebA (right) datasets, comparing WGAN, WGAN-GP, MMD-GAN, MMD-GAN-GP-L2, OCF-GAN-$\mathcal{T}$, and OCF-GAN-GP-$\mathcal{N}$.
Results on the MNIST dataset, where all models achieve good score values, are available in Appendix C, which also includes further experiments using the smoothed version of ECFD and the optimized smoothed version (no improvement over the unsmoothed versions on the image datasets).

Qualitative Results. In addition to the quantitative metrics presented above, we also performed a qualitative analysis of the generated samples. Fig. 4 shows image samples generated by OCF-GAN-GP for different datasets. We also tested our method with a deep ResNet model on a 128 × 128 scaled version of the CelebA dataset. Samples generated by this model (Fig. 5) show that OCF-GAN-GP scales to larger images and networks, and is able to generate visually appealing images comparable to state-of-the-art methods using similarly sized networks. Additional qualitative comparisons can be found in Appendix C.

Impact of Weighting Distribution. The choice of weighting distribution did not lead to drastic changes in model performance. The $\mathcal{T}$ distribution performs best when weight clipping is used, while $\mathcal{N}$ performs best in the case of gradient penalty. This suggests that the proper choice of distribution depends on both the dataset and the Lipschitz regularization used, but the overall framework is robust to reasonable choices.

We also conducted preliminary experiments using a uniform ($\mathcal{U}$) weighting distribution. Even though the condition $\mathrm{supp}(\mathcal{U}) = \mathbb{R}^m$ does not hold for the uniform distribution, we found that this does not adversely affect the performance (see Appendix C). The uniform weighting distribution corresponds to the sinc kernel in MMD, which is known to be a non-characteristic kernel [38]. Our results suggest that such kernels could remain effective when used in MMD-GAN, but we did not verify this experimentally.
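The correspondence between uniform weighting and the sinc kernel can be checked numerically in one dimension. For $t \sim \mathcal{U}[-a, a]$, $\mathbb{E}_t[\cos(t(x - y))] = \sin(a(x - y))/(a(x - y))$, and expanding $|\hat{\phi}_{\mathbb{P}}(t) - \hat{\phi}_{\mathbb{Q}}(t)|^2$ shows that the expectation over $t$ of the summand in Eq. (5) equals the biased (V-statistic) estimate of Eq. (7) with this kernel. The NumPy sketch below compares a Monte Carlo ECFD using many frequencies against the closed-form sinc-kernel MMD; the helper names are hypothetical, and the sample sizes and width $a = 2$ are arbitrary choices. The two values should agree up to Monte Carlo error.

```python
import numpy as np


def ecfd2_1d(x, y, t):
    """One-dimensional Eq. (5): mean over frequencies of |ECF_x(t) - ECF_y(t)|^2."""
    phi_x = np.exp(1j * np.outer(t, x)).mean(axis=1)
    phi_y = np.exp(1j * np.outer(t, y)).mean(axis=1)
    return np.mean(np.abs(phi_x - phi_y) ** 2)


def sinc_kernel(a, b, width):
    """E_{t ~ U[-width, width]}[cos(t (a - b))] = sin(width (a - b)) / (width (a - b))."""
    diff = np.subtract.outer(a, b)
    return np.sinc(width * diff / np.pi)  # np.sinc(u) = sin(pi u) / (pi u); equals 1 at u = 0


def mmd2_biased(x, y, kernel):
    """Biased (V-statistic) estimate of Eq. (7), i.e., MMD^2 between the empirical distributions."""
    return kernel(x, x).mean() + kernel(y, y).mean() - 2.0 * kernel(x, y).mean()


rng = np.random.default_rng(0)
x = rng.normal(0.0, 1.0, size=100)
y = rng.normal(0.5, 1.0, size=100)
width = 2.0
t = rng.uniform(-width, width, size=10000)  # frequencies drawn from U[-width, width]

ecfd_val = ecfd2_1d(x, y, t)
mmd_val = mmd2_biased(x, y, lambda a, b: sinc_kernel(a, b, width))
print(ecfd_val, mmd_val)  # close, differing only by Monte Carlo error over t
```

Because $\mathcal{U}[-a, a]$ puts no mass outside $[-a, a]$, two distributions whose characteristic functions agree on that interval have zero CFD under this weighting, which is the sense in which the sinc kernel is not characteristic.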
Figure 4: Image samples for the different datasets (top to bottom: CIFAR10, STL10, and MNIST) generated by OCF-GAN-GP (random samples without selection).

Impact of Number of Random Frequencies
We conducted an experiment to study the impact of the number of random frequencies ($k$) sampled from the weighting distribution to compute the ECFD. We ran our best-performing model (OCF-GAN-GP) on the MNIST dataset with different values of $k$ from the set $\{1, 4, 8, 16, 32, 64\}$. The FID and KID scores for this experiment are shown in Table 2. As expected, the scores generally improve as $k$ increases. However, even for the lowest possible number of frequencies ($k = 1$), the performance does not degrade too severely.

Table 1: FID and KID (×10³) scores (lower is better) for CIFAR10, STL10, and CelebA datasets averaged over 5 random runs (standard deviation in parentheses).

Model | Kernel/Weight | CIFAR10 FID | CIFAR10 KID | STL10 FID | STL10 KID | CelebA FID | CelebA KID
WGAN | - | 44.11 (1.16) | 25 (1) | 38.61 (0.43) | 23 (1) | 17.85 (0.69) | 12 (1)
WGAN-GP | - | 35.91 (0.30) | 19 (1) | 27.85 (0.81) | 15 (1) | 10.03 (0.37) | 6 (1)
MMD-GAN | 5-RBF | 41.28 (0.54) | 23 (1) | 35.76 (0.54) | 21 (1) | 18.48 (1.60) | 12 (1)
MMD-GAN-GP-L2 | 5-RQ | 38.88 (1.35) | 21 (1) | 31.67 (0.94) | 17 (1) | 13.22 (1.30) | 8 (1)
CF-GAN | N (σ=0.5) | 39.81 (0.93) | 23 (1) | 33.54 (1.11) | 19 (1) | 13.71 (0.50) | 9 (1)
CF-GAN | T (σ=1) | 41.41 (0.64) | 22 (1) | 35.64 (0.44) | 20 (1) | 16.92 (1.29) | 11 (1)
OCF-GAN | N | 38.47 (1.00) | 20 (1) | 32.51 (0.87) | 19 (1) | 14.91 (0.83) | 9 (1)
OCF-GAN | T | 37.96 (0.74) | 20 (1) | 31.03 (0.82) | 17 (1) | 13.73 (0.56) | 8 (1)
OCF-GAN-GP | N | 33.08 (0.26) | 17 (1) | 26.16 (0.64) | 14 (1) | 9.39 (0.25) | 5 (1)
OCF-GAN-GP | T | 34.33 (0.77) | 18 (1) | 26.86 (0.38) | 15 (1) | 9.61 (0.39) | 6 (1)

Table 2: FID and KID scores on the MNIST dataset with varying numbers of frequencies used in OCF-GAN-GP.

# of freqs (k) | FID | KID (×10³)
1 | 0.44 (0.03) | 5 (1)
4 | 0.39 (0.05) | 4 (1)
8 | 0.36 (0.03) | 4 (1)
16 | 0.35 (0.02) | 3 (1)
32 | 0.35 (0.03) | 3 (1)
64 | 0.36 (0.07) | 4 (1)

Figure 5: Image samples for the 128 × 128 CelebA dataset generated by OCF-GAN-GP with a ResNet generator (random samples without selection).

6. Discussion and Conclusion

In this paper, we proposed a novel weighted distance between characteristic functions for training IGMs, and showed that the proposed metric has attractive theoretical properties. We observed experimentally that the proposed model outperforms MMD-GAN and WGAN variants on four benchmark image datasets. Our results indicate that characteristic functions provide an effective alternative means for training IGMs.

This work opens additional avenues for future research. For example, the empirical CFD used for training may result in high-variance gradient estimates (particularly with a small number of sampled frequencies), yet the CFD-trained models attain high performance scores with better convergence in our tests. The reason for this should be explored more thoroughly. Although we used the gradient penalty proposed by WGAN-GP, there is no reason to constrain the gradient norm to exactly 1. We believe that an exploration of the geometry of the proposed loss could lead to an improved gradient regularizer for the proposed method.

Apart from generative modeling, two-sample tests such as MMD have been used for problems such as domain adaptation [28] and domain separation [6], among others. The optimized CFD loss function proposed in this work could serve as an alternative loss for these problems.

Acknowledgements
This research is supported by the National Research Foundation Singapore under its AI Singapore Programme (Award Number: AISG-RP-2019-011) to H. Soh. J. Scarlett is supported by the Singapore National Research Foundation (NRF) under grant number R-252-000-A74-281.

References

[1] Michael Arbel, Dougal Sutherland, Mikołaj Bińkowski, and Arthur Gretton. On gradient regularizers for MMD GANs. In NeurIPS, 2018.
[2] Martín Arjovsky and Léon Bottou. Towards principled methods for training generative adversarial networks. arXiv:1701.04862, 2017.
[3] Martín Arjovsky, Soumith Chintala, and Léon Bottou. Wasserstein generative adversarial networks. In ICML, 2017.
[4] Marc G. Bellemare, Ivo Danihelka, Will Dabney, Shakir Mohamed, Balaji Lakshminarayanan, Stephan Hoyer, and Rémi Munos. The Cramer distance as a solution to biased Wasserstein gradients. 2017.
[5] Mikolaj Binkowski, Dougal J. Sutherland, Michael Arbel, and Arthur Gretton. Demystifying MMD GANs. In ICLR, 2018.
[6] Konstantinos Bousmalis, George Trigeorgis, Nathan Silberman, Dilip Krishnan, and Dumitru Erhan. Domain separation networks. In NIPS, 2016.
[7] Andrew Brock, Jeff Donahue, and Karen Simonyan. Large scale GAN training for high fidelity natural image synthesis. arXiv:1809.11096, 2018.
[8] Kacper P Chwialkowski, Aaditya Ramdas, Dino Sejdinovic, and Arthur Gretton. Fast two-sample testing with analytic representations of probability measures. In NIPS, 2015.
[9] Adam Coates, Andrew Ng, and Honglak Lee. An analysis of single-layer networks in unsupervised feature learning. In AISTATS, 2011.
[10] Harald Cramér and Herman Wold. Some theorems on distribution functions. Journal of the London Mathematical Society, 1(4):290–294, 1936.
[11] Gintare Karolina Dziugaite, Daniel M. Roy, and Zoubin Ghahramani. Training generative neural networks via maximum mean discrepancy optimization. In UAI, 2015.
[12] TW Epps and Kenneth J Singleton. An omnibus test for the two-sample problem using the empirical characteristic function. Journal of Statistical Computation and Simulation, 26(3-4):177–203, 1986.
[13] Herbert Federer. Geometric measure theory. Springer, 2014.
[14] Ian J. Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron C. Courville, and Yoshua Bengio. Generative adversarial nets. In NIPS, 2014.
[15] Arthur Gretton, Karsten M Borgwardt, Malte J Rasch, Bernhard Schölkopf, and Alexander Smola. A kernel two-sample test. Journal of Machine Learning Research, 13(Mar):723–773, 2012.
[16] Ishaan Gulrajani, Faruk Ahmed, Martín Arjovsky, Vincent Dumoulin, and Aaron C. Courville. Improved training of Wasserstein GANs. In NIPS, 2017.
[17] CE Heathcote. A test of goodness of fit for symmetric random variables. Australian Journal of Statistics, 14(2):172–181, 1972.
[18] CR Heathcote. The integrated squared error estimation of parameters. Biometrika, 64(2):255–264, 1977.
[19] Jonathan Ho and Stefano Ermon. Generative adversarial imitation learning. In NIPS, 2016.
[20] Tero Karras, Samuli Laine, and Timo Aila. A style-based generator architecture for generative adversarial networks. In CVPR, 2019.
[21] Achim Klenke. Probability theory: a comprehensive course. Springer Science & Business Media, 2013.
[22] Alex Krizhevsky. Learning multiple layers of features from tiny images. 2009.
[23] Yann LeCun, Léon Bottou, and Patrick Haffner. Gradient-based learning applied to document recognition. 2001.
[24] Chun-Liang Li, Wei-Cheng Chang, Yu Cheng, Yiming Yang, and Barnabás Póczos. MMD GAN: Towards deeper understanding of moment matching network. In NIPS, 2017.
[25] Chun-Liang Li, Wei-Cheng Chang, Youssef Mroueh, Yiming Yang, and Barnabás Póczos. Implicit kernel learning. In AISTATS, 2019.
[26] Yujia Li, Kevin Swersky, and Richard S. Zemel. Generative moment matching networks. In ICML, 2015.
[27] Ziwei Liu, Ping Luo, Xiaogang Wang, and Xiaoou Tang. Deep learning face attributes in the wild. In ICCV, 2015.
[28] Mingsheng Long, Yue Cao, Jianmin Wang, and Michael I. Jordan. Learning transferable features with deep adaptation networks. In ICML, 2015.
[29] Mario Lucic, Karol Kurach, Marcin Michalski, Sylvain Gelly, and Olivier Bousquet. Are GANs created equal? A large-scale study. In NeurIPS, 2018.
[30] Takeru Miyato, Toshiki Kataoka, Masanori Koyama, and Yuichi Yoshida. Spectral normalization for generative adversarial networks. 2018.
[31] Youssef Mroueh, Chun-Liang Li, Tom Sercu, Anant Raj, and Yu Cheng. Sobolev GAN. 2017.
[32] Alfred Müller. Integral probability metrics and their generating classes of functions. Advances in Applied Probability, 29(2):429–443, 1997.
[33] Albert S Paulson, Edward W Holcomb, and Robert A Leitch. The estimation of the parameters of the stable laws. Biometrika, 62(1):163–170, 1975.
[34] Alec Radford, Luke Metz, and Soumith Chintala. Unsupervised representation learning with deep convolutional generative adversarial networks. 2015.
[35] Danilo Rezende and Shakir Mohamed. Variational inference with normalizing flows. In ICML, 2015.
[36] Mehdi SM Sajjadi, Olivier Bachem, Mario Lucic, Olivier Bousquet, and Sylvain Gelly. Assessing generative models via precision and recall. In NeurIPS, pages 5228–5237, 2018.
[37] Tim Salimans, Ian Goodfellow, Wojciech Zaremba, Vicki Cheung, Alec Radford, and Xi Chen. Improved techniques for training GANs. In NIPS, 2016.
[38] Bharath K Sriperumbudur, Arthur Gretton, Kenji Fukumizu, Bernhard Schölkopf, and Gert RG Lanckriet. Hilbert space embeddings and metrics on probability measures. Journal of Machine Learning Research, 11(Apr):1517–1561, 2010.
[39] Jun Yu. Empirical characteristic function estimation and its applications. Econometric Reviews, 2004.
[40] Manzil Zaheer, Chun-Liang Li, Barnabás Póczos, and Ruslan Salakhutdinov. GAN connoisseur: Can GANs learn simple 1D parametric distributions? 2018.

A. Proofs

A.1. Proof of Theorem 1

Let $P_X$ be the data distribution, and let $P_{g_\theta(Z)}$ be the distribution of $g_\theta(z)$ when $z \sim P_Z$, with $P_Z$ being the latent distribution. Recall that the characteristic function of a distribution $Q$ is given by

$$\varphi_Q(t) = \mathbb{E}_{x\sim Q}\left[e^{i\langle t, x\rangle}\right]. \tag{8}$$

The quantity $\mathrm{CFD}^2_\omega(P_{f_\phi(X)}, P_{f_\phi(g_\theta(Z))})$ can then be written as

$$\mathrm{CFD}^2_\omega\left(P_{f_\phi(X)}, P_{f_\phi(g_\theta(Z))}\right) = \mathbb{E}_{t\sim\omega(t;\eta)}\left[\left|\varphi_X(t) - \varphi_\theta(t)\right|^2\right], \tag{9}$$

where we denote the characteristic functions of $P_{f_\phi(X)}$ and $P_{f_\phi(g_\theta(Z))}$ by $\varphi_X$ and $\varphi_\theta$ respectively, with an implicit dependence on $\phi$. For notational simplicity, we henceforth denote $\mathrm{CFD}^2_\omega(P_{f_\phi(X)}, P_{f_\phi(g_\theta(Z))})$ by $D_\psi(P_X, P_\theta)$. Since the difference of two functions' maximal values is always upper bounded by the maximal gap between the two functions, we have

$$\left|\sup_{\psi\in\Psi} D_\psi(P_X, P_\theta) - \sup_{\psi\in\Psi} D_\psi(P_X, P_{\theta'})\right| \le \sup_{\psi\in\Psi}\left|D_\psi(P_X, P_\theta) - D_\psi(P_X, P_{\theta'})\right| \tag{10}$$

$$\le \left|D_{\psi^*}(P_X, P_\theta) - D_{\psi^*}(P_X, P_{\theta'})\right| + \epsilon, \tag{11}$$

where $\psi^* = \{\phi^*, \eta^*\}$ denotes any parameters that are within $\epsilon$ of the supremum on the right-hand side of (10), and where $\epsilon > 0$ may be arbitrarily small. Such a $\psi^*$ always exists by the definition of the supremum. Subsequently, we define $h_\theta = f_{\phi^*} \circ g_\theta$ for compactness. Let $\omega^*$ denote the distribution $\omega(t)$ associated with $\eta^*$. We further upper bound the right-hand side of (11) as follows:

$$\left|D_{\psi^*}(P_X, P_\theta) - D_{\psi^*}(P_X, P_{\theta'})\right| = \left|\mathbb{E}_{\omega^*(t)}\left[|\varphi_X(t) - \varphi_\theta(t)|^2\right] - \mathbb{E}_{\omega^*(t)}\left[|\varphi_X(t) - \varphi_{\theta'}(t)|^2\right]\right| \tag{12}$$

$$\overset{(a)}{\le} \mathbb{E}_{\omega^*(t)}\left[\left|\,|\varphi_X(t) - \varphi_\theta(t)|^2 - |\varphi_X(t) - \varphi_{\theta'}(t)|^2\right|\right], \tag{13}$$

where (a) uses the linearity of expectation and Jensen's inequality. Since any characteristic function satisfies $|\varphi_P(t)| \le 1$, the value of $|\varphi_X(t) - \varphi_\theta(t)|$ for any $\theta$ is upper bounded by 2.
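The rest of this proof and the proof of Theorem 2 rely on three elementary facts: $u \mapsto u^2$ is 4-Lipschitz on $[0, 2]$ (Eq. (14)), the chord length $|e^{ia} - e^{ib}| = 2|\sin\frac{a-b}{2}|$ never exceeds $|a - b|$ (Eq. (18)), and $u^2 \le 2|u|$ on $[-2, 2]$ (Eq. (27)). The Python snippet below is a hedged numerical spot-check of these facts on random inputs; the sampling ranges, trial count, and tolerance are arbitrary choices of ours, and the check illustrates, but does not replace, the analytic argument.

```python
import cmath
import math
import random


def chord_length(a, b):
    """|e^{ia} - e^{ib}|: length of the chord between two points on the unit circle."""
    return abs(cmath.exp(1j * a) - cmath.exp(1j * b))


def check_appendix_inequalities(trials=10000, seed=0, tol=1e-9):
    """Spot-check, on random inputs, the elementary bounds used in Appendix A."""
    rng = random.Random(seed)
    for _ in range(trials):
        a, b = rng.uniform(-20.0, 20.0), rng.uniform(-20.0, 20.0)
        c = chord_length(a, b)
        # Chord identity |e^{ia} - e^{ib}| = 2|sin((a - b)/2)| ...
        if not math.isclose(c, 2.0 * abs(math.sin((a - b) / 2.0)), abs_tol=tol):
            return False
        # ... and its bound by |a - b|, used for Eq. (18).
        if c > abs(a - b) + tol:
            return False
        # u -> u^2 is 4-Lipschitz on [0, 2], used for Eq. (14).
        u, v = rng.uniform(0.0, 2.0), rng.uniform(0.0, 2.0)
        if abs(u * u - v * v) > 4.0 * abs(u - v) + tol:
            return False
        # u^2 <= 2|u| for u in [-2, 2], used for Eq. (27).
        w = rng.uniform(-2.0, 2.0)
        if w * w > 2.0 * abs(w) + tol:
            return False
    return True


if __name__ == "__main__":
    print(check_appendix_inequalities())  # True
```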
Since the function $f(u) = u^2$ is (locally) 4-Lipschitz over the restricted domain $[0, 2]$, we have

$$\left|\,|\varphi_X(t) - \varphi_\theta(t)|^2 - |\varphi_X(t) - \varphi_{\theta'}(t)|^2\right| \le 4\left|\,|\varphi_X(t) - \varphi_\theta(t)| - |\varphi_X(t) - \varphi_{\theta'}(t)|\right| \tag{14}$$

$$\overset{(b)}{\le} 4\left|\varphi_\theta(t) - \varphi_{\theta'}(t)\right| \tag{15}$$

$$= 4\left|\mathbb{E}_z\left[e^{i\langle t, h_\theta(z)\rangle}\right] - \mathbb{E}_z\left[e^{i\langle t, h_{\theta'}(z)\rangle}\right]\right| \tag{16}$$

$$\overset{(c)}{\le} 4\,\mathbb{E}_z\left[\left|e^{i\langle t, h_\theta(z)\rangle} - e^{i\langle t, h_{\theta'}(z)\rangle}\right|\right], \tag{17}$$

where (b) uses the (reverse) triangle inequality, and (c) uses Jensen's inequality. In Eq. (17), let $|e^{i\langle t, h_\theta(z)\rangle} - e^{i\langle t, h_{\theta'}(z)\rangle}| =: |e^{ia} - e^{ib}|$, which can be interpreted as the length of the chord that subtends an angle of $|a - b|$ at the center of a unit circle centered at the origin. The length of this chord is $2\left|\sin\frac{a - b}{2}\right|$, and since $2\left|\sin\frac{a - b}{2}\right| \le |a - b|$, we have

$$\left|e^{i\langle t, h_\theta(z)\rangle} - e^{i\langle t, h_{\theta'}(z)\rangle}\right| \le \left|\langle t, h_\theta(z)\rangle - \langle t, h_{\theta'}(z)\rangle\right| \tag{18}$$

$$\overset{(d)}{\le} \|t\| \cdot \|h_\theta(z) - h_{\theta'}(z)\|, \tag{19}$$

where (d) uses the Cauchy-Schwarz inequality. Furthermore, using the assumption $\sup_{\eta\in\Pi}\mathbb{E}_{\omega(t)}[\|t\|] < \infty$, we get

$$\mathbb{E}_{\omega^*(t)}\left[\mathbb{E}_z\left[\left|e^{i\langle t, h_\theta(z)\rangle} - e^{i\langle t, h_{\theta'}(z)\rangle}\right|\right]\right] \le \mathbb{E}_{\omega^*(t)}[\|t\|]\;\mathbb{E}_z\left[\|h_\theta(z) - h_{\theta'}(z)\|\right], \tag{20}$$

with the first term being finite. By assumption, $h$ is locally Lipschitz, i.e., for any pair $(\theta, z)$, there exist a constant $L(\theta, z)$ and an open set $U_{\theta,z}$ such that for all $(\theta', z') \in U_{\theta,z}$ we have $\|h_\theta(z) - h_{\theta'}(z')\| \le L(\theta, z)\|\theta - \theta'\|$. Setting $z' = z$ and taking the expectation, we obtain

$$\mathbb{E}_{\omega^*(t)}[\|t\|]\;\mathbb{E}_z\left[\|h_\theta(z) - h_{\theta'}(z)\|\right] \le \mathbb{E}_{\omega^*(t)}[\|t\|]\;\mathbb{E}_z[L(\theta, z)]\;\|\theta - \theta'\| \tag{21}$$

for all $\theta'$ sufficiently close to $\theta$. Recall also that $\mathbb{E}_z[L(\theta, z)] < \infty$ by assumption. Combining Eqs. (13), (17), and (21), we get

$$\left|D_{\psi^*}(P_X, P_\theta) - D_{\psi^*}(P_X, P_{\theta'})\right| \le 4\,\mathbb{E}_{\omega^*(t)}[\|t\|]\;\mathbb{E}_z[L(\theta, z)]\;\|\theta - \theta'\|, \tag{22}$$

and combining with (11) gives

$$\left|\sup_{\psi\in\Psi} D_\psi(P_X, P_\theta) - \sup_{\psi\in\Psi} D_\psi(P_X, P_{\theta'})\right| \le 4\,\mathbb{E}_{\omega^*(t)}[\|t\|]\;\mathbb{E}_z[L(\theta, z)]\;\|\theta - \theta'\| + \epsilon \tag{23}$$

$$\le 4\left(\sup_{\eta\in\Pi}\mathbb{E}_{\omega(t)}[\|t\|]\right)\mathbb{E}_z[L(\theta, z)]\;\|\theta - \theta'\| + \epsilon. \tag{24}$$

Taking the limit $\epsilon \to 0$ on both sides gives

$$\left|\sup_{\psi\in\Psi} D_\psi(P_X, P_\theta) - \sup_{\psi\in\Psi} D_\psi(P_X, P_{\theta'})\right| \le 4\left(\sup_{\eta\in\Pi}\mathbb{E}_{\omega(t)}[\|t\|]\right)\mathbb{E}_z[L(\theta, z)]\;\|\theta - \theta'\|, \tag{25}$$

which proves that $\sup_{\psi\in\Psi} D_\psi(P_X, P_\theta)$ is locally Lipschitz, and therefore continuous. In addition, Rademacher's theorem [13] states that any locally Lipschitz function is differentiable almost everywhere, which establishes the differentiability claim.

A.2. Proof of Theorem 2

Let $x_n \sim P_n$ and $x \sim P$. To study the behavior of $\sup_{\psi\in\Psi}\mathrm{CFD}^2_\omega(P^{(\phi)}_n, P^{(\phi)})$, we first consider

$$\mathrm{CFD}^2_\omega(P^{(\phi)}_n, P^{(\phi)}) = \mathbb{E}_{\omega(t)}\left[\left|\mathbb{E}_{x_n}\left[e^{i\langle t, f_\phi(x_n)\rangle}\right] - \mathbb{E}_x\left[e^{i\langle t, f_\phi(x)\rangle}\right]\right|^2\right]. \tag{26}$$

Since $\left|\mathbb{E}_{x_n}[e^{i\langle t, f_\phi(x_n)\rangle}] - \mathbb{E}_x[e^{i\langle t, f_\phi(x)\rangle}]\right| \in [0, 2]$, using the fact that $u^2 \le 2|u|$ for $u \in [-2, 2]$, we have

$$\mathbb{E}_{\omega(t)}\left[\left|\mathbb{E}_{x_n}\left[e^{i\langle t, f_\phi(x_n)\rangle}\right] - \mathbb{E}_x\left[e^{i\langle t, f_\phi(x)\rangle}\right]\right|^2\right] \le 2\,\mathbb{E}_{\omega(t)}\left[\left|\mathbb{E}_{x_n, x}\left[e^{i\langle t, f_\phi(x_n)\rangle} - e^{i\langle t, f_\phi(x)\rangle}\right]\right|\right] \tag{27}$$

$$\overset{(a)}{\le} 2\,\mathbb{E}_{\omega(t)}\left[\mathbb{E}_{x_n, x}\left[\left|e^{i\langle t, f_\phi(x_n)\rangle} - e^{i\langle t, f_\phi(x)\rangle}\right|\right]\right] \tag{28}$$

$$\overset{(b)}{\le} 2\,\mathbb{E}_{\omega(t)}\left[\mathbb{E}_{x_n, x}\left[\min\left\{2, \left|\langle t, f_\phi(x_n)\rangle - \langle t, f_\phi(x)\rangle\right|\right\}\right]\right] \tag{29}$$

$$\overset{(c)}{\le} 2\,\mathbb{E}_{\omega(t)}\left[\mathbb{E}_{x_n, x}\left[\min\left\{2, \|t\| \cdot \|f_\phi(x_n) - f_\phi(x)\|\right\}\right]\right], \tag{30}$$

where (a) uses Jensen's inequality, (b) uses the geometric properties stated following Eq. (17) and the fact that $|e^{ia} - e^{ib}| \le 2$, and (c) uses the Cauchy-Schwarz inequality. For brevity, let $T_{\max} = \sup_{\eta\in\Pi}\mathbb{E}_{\omega(t)}[\|t\|]$, which is finite by assumption. Interchanging the order of the expectations in Eq. (30) and applying Jensen's inequality (to $\mathbb{E}_{\omega(t)}$ alone) and the concavity of $f(u) = \min\{2, u\}$, we can continue the preceding upper bound as follows:

$$\mathbb{E}_{\omega(t)}\left[\left|\mathbb{E}_{x_n}\left[e^{i\langle t, f_\phi(x_n)\rangle}\right] - \mathbb{E}_x\left[e^{i\langle t, f_\phi(x)\rangle}\right]\right|^2\right] \le 2\,\mathbb{E}_{x_n, x}\left[\min\left\{2, T_{\max}\|f_\phi(x_n) - f_\phi(x)\|\right\}\right] \tag{31}$$

$$\overset{(d)}{\le} 2\,\mathbb{E}_{x_n, x}\left[\min\left\{2, T_{\max} L_f \|x_n - x\|\right\}\right], \tag{32}$$

where (d) defines $L_f$ to be the Lipschitz constant of $f_\phi$, which is independent of $\phi$ by assumption. Observe that $g(u) = \min\{2, T_{\max} L_f |u|\}$ is a bounded Lipschitz function of $u$. By the Portmanteau theorem ([21], Thm. 13.16), convergence in distribution $P_n \xrightarrow{D} P$ implies that $\mathbb{E}[g(\|x_n - x\|)] \to 0$ for any such $g$, and hence (32) yields $\sup_{\psi\in\Psi}\mathrm{CFD}^2_\omega(P^{(\phi)}_n, P^{(\phi)}) \to 0$ (upon taking $\sup_{\psi\in\Psi}$ on both sides), as required.

A.3. Discussion on an “only if” Counterpart to Theorem 2

Theorem 2 shows that, under some technical assumptions, the function $\sup_{\psi\in\Psi}\mathrm{CFD}^2_\omega(P^{(\phi)}_n, P^{(\phi)})$ satisfies continuity in the weak topology, i.e.,

$$P_n \xrightarrow{D} P \implies \sup_{\psi\in\Psi}\mathrm{CFD}^2_\omega(P^{(\phi)}_n, P^{(\phi)}) \to 0,$$

where $P_n \xrightarrow{D} P$ denotes convergence in distribution. Here we discuss whether the converse is true: does $\sup_{\psi\in\Psi}\mathrm{CFD}^2_\omega(P^{(\phi)}_n, P^{(\phi)}) \to 0$ imply that $P_n \xrightarrow{D} P$? In general, the answer is negative. For example:

• If $\Phi$ only contains a $\phi$ for which $f_\phi(x) = 0$, then $P^{(\phi)}$ is always the distribution corresponding to deterministically equaling zero, so any two distributions give zero CFD.
• If $\omega(t)$ has bounded support, then two distributions $P_1, P_2$ whose characteristic functions only differ for $t$ values outside that support may still give $\mathbb{E}_{\omega(t)}|\varphi_{P_1}(t) - \varphi_{P_2}(t)|^2 = 0$.

In the following, however, we argue that the answer is positive when $\{f_\phi\}_{\phi\in\Phi}$ is “sufficiently rich” and $\{\omega\}_{\eta\in\Pi}$ is “sufficiently well-behaved”.
Rather than seeking the most general assumptions that formalize these requirements, we focus on a simple special case that still captures the key insights, assuming the following:

• There exists $L > 0$ such that $\{f_\phi\}_{\phi\in\Phi}$ includes all linear functions that are $L$-Lipschitz;
• There exists $\eta \in \Pi$ such that $\omega(t)$ has support $\mathbb{R}^m$, where $m$ is the output dimension of $f_\phi$.

To give examples of these, note that neural networks with ReLU activations can implement arbitrary linear functions (with the Lipschitz condition amounting to bounding the weights), and that the second assumption is satisfied by any Gaussian $\omega(t)$ with a fixed positive-definite covariance matrix.

In the following, let $x_n \sim P_n$ and $x \sim P$. We will prove the contrapositive statement:

$$P_n \overset{D}{\not\to} P \implies \sup_{\psi\in\Psi}\mathrm{CFD}^2_\omega(P^{(\phi)}_n, P^{(\phi)}) \not\to 0.$$

By the Cramér-Wold theorem [10], $P_n \overset{D}{\not\to} P$ implies that we can find constants $c_1, \ldots, c_d$ such that

$$\sum_{i=1}^d c_i x_n^{(i)} \overset{D}{\not\to} \sum_{i=1}^d c_i x^{(i)}, \tag{33}$$

where $x^{(i)}$ and $x_n^{(i)}$ denote the $i$-th entries of $x$ and $x_n$, with $d$ being their dimension.

Figure 6: The PDFs of $\mathcal{D}_1$ (a) and $\mathcal{D}_2$ (b), estimated using Kernel Density Estimation (KDE) (in blue), along with the true distribution $p(z)$ of $z \sim \mathcal{N}(0, 1)$ (in red).

Recall that we assume $\{f_\phi\}_{\phi\in\Phi}$ includes all linear functions from $\mathbb{R}^d$ to $\mathbb{R}^m$ with Lipschitz constant at most $L > 0$. Hence, we can select $\phi \in \Phi$ such that every entry of $f_\phi(x)$ equals $\frac{1}{Z}\sum_{i=1}^d c_i x^{(i)}$, where $Z$ is sufficiently large so that the Lipschitz constant of this $f_\phi$ is at most $L$.
However, for this $\phi$, (33) implies that $f_\phi(x_n) \overset{D}{\not\to} f_\phi(x)$, which in turn implies that $|\varphi_{P^{(\phi)}_n}(t) - \varphi_{P^{(\phi)}}(t)|$ is bounded away from zero for all $t$ in some set $T$ of positive Lebesgue measure. Choosing $\omega(t)$ to have support $\mathbb{R}^m$ in accordance with the second technical assumption above, it follows that $\mathbb{E}_{\omega(t)}|\varphi_{P^{(\phi)}_n}(t) - \varphi_{P^{(\phi)}}(t)|^2 \not\to 0$, and hence $\sup_{\psi\in\Psi}\mathrm{CFD}^2_\omega(P^{(\phi)}_n, P^{(\phi)}) \not\to 0$.

B. Implementation Details

B.1. Synthetic Data Experiments

The synthetic data was generated by first sampling $z \sim \mathcal{N}(0, 1)$ and then applying a function $h$ to the samples. We constructed distributions of two types: a scale-shift unimodal distribution $\mathcal{D}_1$ and a “scale-split-shift” bimodal distribution $\mathcal{D}_2$. The function $h$ for each of the two distributions is defined as follows:

• $\mathcal{D}_1$: $h(z) = \mu + \sigma z$; we set $\mu = -10$ and $\sigma = \frac{1}{5}$. This shifts the mean of the distribution to $-10$, resulting in the $\mathcal{N}(-10, (\frac{1}{5})^2)$ distribution. Fig. 6a shows the PDF (and histogram) of the original distribution $p(z)$ and the distribution of $h(z)$, which is approximated using Kernel Density Estimation (KDE).
• $\mathcal{D}_2$: $h(z) = \alpha z + \beta\tanh(\gamma\alpha z)$; we set $\alpha = \frac{1}{5}$, $\beta = 10$, $\gamma = 100$. This splits the distribution into two modes and shifts the two modes to $-10$ and $+10$. Fig. 6b shows the PDF (and histogram) of the original distribution $p(z)$ and the distribution of $h(z)$, which is approximated using KDE.

For each of the two cases described above, there are two transformation functions that lead to the same distribution. In each case, the second transformation function is given by:

• $\mathcal{D}_1$: $g(z) = \mu - \sigma z$
• $\mathcal{D}_2$: $g(z) = -\alpha z + \beta\tanh(-\gamma\alpha z)$

As there are two possible correct transformation functions ($h$ and $g$) that the GANs can learn, we computed the Mean Absolute Error (MAE) as follows:

$$\mathrm{MAE} = \min\left\{\mathbb{E}_z\left[|h(z) - \hat{h}(z)|\right], \mathbb{E}_z\left[|g(z) - \hat{h}(z)|\right]\right\}, \tag{34}$$

where $\hat{h}$ is the transformation learned by the generator.
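A minimal sketch of the synthetic setup and of the sign-ambiguous MAE in Eq. (34), assuming a plain Monte Carlo estimate over 5000 draws of $z \sim \mathcal{N}(0, 1)$. The function names (`h_d1`, `g_d1`, `h_d2`, `g_d2`, `mae`) are hypothetical; in an actual evaluation `h_hat` would be the trained generator, whereas here closed-form maps stand in for it.

```python
import math
import random

# Parameters from Appendix B.1.
MU, SIGMA = -10.0, 1.0 / 5.0                  # D1: scale-shift
ALPHA, BETA, GAMMA = 1.0 / 5.0, 10.0, 100.0   # D2: scale-split-shift


def h_d1(z):
    return MU + SIGMA * z


def g_d1(z):
    # Mirrored map; same distribution because z ~ N(0, 1) is symmetric.
    return MU - SIGMA * z


def h_d2(z):
    return ALPHA * z + BETA * math.tanh(GAMMA * ALPHA * z)


def g_d2(z):
    # Equals h_d2(-z), hence also pushes N(0, 1) to D2.
    return -ALPHA * z + BETA * math.tanh(-GAMMA * ALPHA * z)


def mae(h, g, h_hat, n=5000, seed=0):
    """Monte Carlo estimate of Eq. (34): min(E|h(z) - h_hat(z)|, E|g(z) - h_hat(z)|)."""
    rng = random.Random(seed)
    zs = [rng.gauss(0.0, 1.0) for _ in range(n)]
    err_h = sum(abs(h(z) - h_hat(z)) for z in zs) / n
    err_g = sum(abs(g(z) - h_hat(z)) for z in zs) / n
    return min(err_h, err_g)


if __name__ == "__main__":
    # A generator that learned the mirrored map g is still scored as perfect.
    print(mae(h_d1, g_d1, g_d1))  # 0.0
    # A constant generator at the mean of D1 has MAE close to SIGMA * sqrt(2 / pi).
    print(mae(h_d1, g_d1, lambda z: MU))
```

Taking the minimum over both maps is what keeps a correctly learned but sign-flipped generator from being penalized.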
We estimated the expectations in Eq. (34) using 5000 samples. For the generator and critic network architectures, we followed [40]. Specifically, the generator is a multi-layer perceptron (MLP) with 3 hidden layers of sizes 7, 13, and 7, and the exponential linear unit (ELU) non-linearity between the layers. The critic network is also an MLP with 3 hidden layers of sizes 11, 29, and 11, and the ELU non-linearity between the layers. The inputs and outputs of both networks are one-dimensional. We used the RMSProp optimizer with a learning rate of 0.001 for all models. The batch size was set to 50, and 5 critic updates were performed per generator iteration. We trained the models for 10000 and 20000 generator iterations for $\mathcal{D}_1$ and $\mathcal{D}_2$, respectively. For all the models that rely on weight clipping, clipping in the range $[-0.01, 0.01]$ resulted in poor performance for $\mathcal{D}_2$, so we modified the range to $[-0.1, 0.1]$. We used a mixture of 5 RBF kernels for MMD-GAN [24], and a mixture of 5 RQ kernels and gradient penalty (as defined in [5]) for MMD-GAN-GP. For the CF-GAN variants, we used a single weighting distribution (Student-t and Gaussian for $\mathcal{D}_1$ and $\mathcal{D}_2$, respectively). The gradient penalty trade-off parameter ($\lambda_{GP}$) for WGAN-GP was set to 1 for $\mathcal{D}_1$, as the value of 10 led to erratic performance.

B.2. Image Generation

CF-GAN
Following [24], a decoder was also connected to the critic in CF-GAN to reconstruct the input to the critic. This encourages the critic to learn a representation that has high mutual information with the input. The auto-encoding objective is optimized along with the critic, and the final objective is given by

$$\inf_\theta \sup_\psi\; \mathrm{CFD}^2_\omega\left(P_{f_\phi(X)}, P_{f_\phi(g_\theta(Z))}\right) - \lambda_1\,\mathbb{E}_{u\in\mathcal{X}\cup g_\theta(Z)}\,\mathcal{D}\left(u, f^d_\phi(f_\phi(u))\right), \tag{35}$$

where $f^d_\phi$ is the decoder network, $\lambda_1$ is the regularization parameter, and $\mathcal{D}$ is the discrepancy between the two data points (e.g., squared error, cross-entropy, etc.).
Although the decoder is interesting from an auto-encoding perspective of the representation learned by $f_\phi$, we found that removing the decoder did not impact the performance of the model; this can be seen from the results of OCF-GAN-GP, which does not use a decoder network. We also reduced the feasible set [24] of $f_\phi$, which amounts to an additive penalty of $\lambda_2 \min\left(\mathbb{E}[f_\phi(x)] - \mathbb{E}[f_\phi(g_\theta(z))], 0\right)$. We observed in our experiments that this improved training stability, especially for the models that use weight clipping to enforce the Lipschitz condition. For more details, we refer the reader to [24].

Network and Hyperparameter Details
We used DCGAN-like generator $g_\theta$ and critic $f_\phi$ architectures, the same as [24], for all models. Specifically, both $g_\theta$ and $f_\phi$ are fully convolutional networks with the following structures:

• $g_\theta$: upconv(256) → bn → relu → upconv(128) → bn → relu → upconv(64) → bn → relu → upconv($c$) → tanh;
• $f_\phi$: conv(64) → leaky-relu(0.2) → conv(128) → bn → leaky-relu(0.2) → conv(256) → bn → leaky-relu(0.2) → conv($m$),

where conv, upconv, bn, relu, leaky-relu, and tanh refer to convolution, up-convolution, batch-normalization, ReLU, LeakyReLU, and Tanh layers, respectively. The decoder $f^d_\phi$ (whenever used) is also a DCGAN-like decoder. The generator takes a $k$-dimensional Gaussian latent vector as the input and outputs a $32 \times 32$ image with $c$ channels. The value of $k$ was set differently depending on the dataset: MNIST (10), CIFAR10 (32), STL10 (32), and CelebA (64). The output dimensionality of the critic network ($m$) was set to 10 (MNIST) and 32 (CIFAR10, STL10, CelebA) for the MMD-GAN and CF-GAN models, and to 1 for WGAN and WGAN-GP. The batch normalization layers in the critic were omitted for WGAN-GP and OCF-GAN-GP (as suggested by [16]). The RMSProp optimizer was used with a learning rate of $5 \times 10^{-5}$.
All models were optimized with a batch size of 64 for 125000 generator iterations (50000 for MNIST) with 5 critic updates per generator iteration. We tested CF-GAN variants with two weighting distributions: Gaussian ($\mathcal{N}$) and Student-t ($\mathcal{T}$) (with 2 degrees of freedom). We also conducted preliminary experiments using Laplace ($\mathcal{L}$) and uniform ($\mathcal{U}$) weighting distributions (see Table 3). For CF-GAN, we tested 3 scale parameters for $\mathcal{N}$ and $\mathcal{T}$ from the set $\{0.2, 0.5, 1\}$, and we report the best results. The trade-off parameters for the auto-encoder penalty ($\lambda_1$) and the feasible-set penalty ($\lambda_2$) were set to 8 and 16, respectively, as in [24]. For OCF-GAN-GP, the trade-off parameter for the gradient penalty was set to 10, the same as for WGAN-GP. The number of random frequencies $k$ used for computing the ECFD was set to 8 for all CF-GAN models.

For MMD-GAN, we used a mixture of five RBF kernels $k_\sigma(x, x') = \exp\left(-\frac{\|x - x'\|^2}{2\sigma^2}\right)$ with different scales $\sigma \in \Sigma = \{1, 2, 4, 8, 16\}$, as in [24]. For MMD-GAN-GP-L2, we used a mixture of rational quadratic kernels $k_\alpha(x, x') = \left(1 + \frac{\|x - x'\|^2}{2\alpha}\right)^{-\alpha}$ with $\alpha \in A = \{0.2, 0.5, 1, 2, 5\}$; the trade-off parameters of the gradient and L2 penalties were set according to [5].

Evaluation Metrics
We compared the different models using three evaluation metrics: Fréchet Inception Distance (FID) [37], Kernel Inception Distance (KID) [5], and Precision-Recall (PR) for Generative models [36]. All evaluation metrics use features extracted from the pool3 layer (2048-dimensional) of an Inception network pre-trained on ImageNet, except for MNIST, for which we used a LeNet5 as the feature extractor. FID fits Gaussian distributions to the Inception features of the real and generated images and then computes the Fréchet distance between the two Gaussians. KID, on the other hand, computes the MMD between the Inception features of the two distributions using a polynomial kernel of degree 3.
This is equivalent to comparing the first three moments of the two distributions. Let $\{x^r_i\}_{i=1}^n$ be samples from the data distribution $P_r$ and $\{x^g_i\}_{i=1}^m$ be samples from the GAN generator distribution $Q_\theta$. Let $\{z^r_i\}_{i=1}^n$ and $\{z^g_i\}_{i=1}^m$ be the feature vectors extracted from the Inception network for $\{x^r_i\}_{i=1}^n$ and $\{x^g_i\}_{i=1}^m$, respectively. The FID and KID are then given by

$$\mathrm{FID}(P_r, Q_\theta) = \|\mu_r - \mu_g\|^2 + \mathrm{Tr}\left(\Sigma_r + \Sigma_g - 2(\Sigma_r\Sigma_g)^{1/2}\right), \tag{36}$$

$$\mathrm{KID}(P_r, Q_\theta) = \frac{1}{n(n-1)}\sum_{i=1}^n\sum_{\substack{j=1\\ j\ne i}}^n \kappa(z^r_i, z^r_j) + \frac{1}{m(m-1)}\sum_{i=1}^m\sum_{\substack{j=1\\ j\ne i}}^m \kappa(z^g_i, z^g_j) - \frac{2}{mn}\sum_{i=1}^n\sum_{j=1}^m \kappa(z^r_i, z^g_j), \tag{37}$$

where $(\mu_r, \Sigma_r)$ and $(\mu_g, \Sigma_g)$ are the sample mean and covariance matrix of the Inception features of the real and generated data, and $\kappa$ is a polynomial kernel of degree 3, i.e.,

$$\kappa(x, y) = \left(\frac{1}{d}\langle x, y\rangle + 1\right)^3, \tag{38}$$

where $d$ is the dimensionality of the feature vectors. Both FID and KID give single-value scores, whereas PR gives a two-dimensional score that disentangles the quality of the generated samples from the coverage of the data distribution. For more details about PR, we refer the reader to [36]. In brief, PR is defined by a pair $F_8$ (recall) and $F_{1/8}$ (precision), which represent the coverage and sample quality, respectively [36].

We used 50000 random samples from each GAN to compute the FID and KID scores (10000 for PR). For MNIST and CIFAR10, we compared against the standard test sets, while for CelebA and STL10, we compared against 50000 random images sampled from the dataset. Following [5], we computed FID using 10 bootstrap resamplings and KID by sampling 1000 elements (without replacement) 100 times.

C. Additional Results

Fig. 7 shows the probability of accepting the null hypothesis $P = Q$ when it is indeed correct, for different two-sample tests based on ECFs.
As mentioned in the main text, optimizing the parameters of the weighting distribution does not hamper the ability of the test to correctly recognize the cases where $P = Q$. Table 3 shows the FID and KID scores of various models on the CIFAR10, STL10, and CelebA datasets, including results for the smoothed version of ECFD and for the Laplace ($\mathcal{L}$) and uniform ($\mathcal{U}$) weighting distributions. The FID and KID scores for the MNIST dataset are shown in Table 4.

Figures 8, 9, and 10 show random images generated by different GAN models for the CIFAR10, CelebA, and STL10 datasets, respectively. The images generated by models that do not use a gradient penalty (WGAN and MMD-GAN) are less sharp and have more artifacts than those of their GP counterparts. Fig. 11 shows random images generated by OCF-GAN-GP($\mathcal{N}$) trained on the MNIST dataset with different numbers of random frequencies ($k$). It is interesting to note that the change in sample quality is imperceptible even when $k = 1$. Figure 12 shows additional samples from OCF-GAN-GP with a ResNet generator trained on CelebA $128 \times 128$.

Figure 7: Probability of correctly accepting the null hypothesis P = Q for various numbers of dimensions and different variants of ECFD. The plot shows 1 − P(Type I error) against the number of dimensions (0 to 1000) for ECFD, ECFD-Smooth, OECFD, and OECFD-Smooth.

Table 3: FID and KID (×10³) scores (lower is better) for CIFAR10, STL10, and CelebA datasets. Results are averaged over 5 random runs wherever the standard deviation is indicated in parentheses.

Model | Kernel/Weight | CIFAR10 FID | CIFAR10 KID | STL10 FID | STL10 KID | CelebA FID | CelebA KID
WGAN | - | 44.11 (1.16) | 25 (1) | 38.61 (0.43) | 23 (1) | 17.85 (0.69) | 12 (1)
WGAN-GP | - | 35.91 (0.30) | 19 (1) | 27.85 (0.81) | 15 (1) | 10.03 (0.37) | 6 (1)
MMD-GAN | 5-RBF | 41.28 (0.54) | 23 (1) | 35.76 (0.54) | 21 (1) | 18.48 (1.60) | 12 (1)
MMD-GAN-GP-L2 | 5-RQ | 38.88 (1.35) | 21 (1) | 31.67 (0.94) | 17 (1) | 13.22 (1.30) | 8 (1)
CF-GAN | N (σ=0.5) | 39.81 (0.93) | 23 (1) | 33.54 (1.11) | 19 (1) | 13.71 (0.50) | 9 (1)
CF-GAN | T (σ=1) | 41.41 (0.64) | 22 (1) | 35.64 (0.44) | 20 (1) | 16.92 (1.29) | 11 (1)
OCF-GAN | N (σ̂) | 38.47 (1.00) | 20 (1) | 32.51 (0.87) | 19 (1) | 14.91 (0.83) | 9 (1)
OCF-GAN | T (σ̂) | 37.96 (0.74) | 20 (1) | 31.03 (0.82) | 17 (1) | 13.73 (0.56) | 8 (1)
OCF-GAN | L (σ̂) | 36.90 | 20 | 32.09 | 18 | 14.96 | 10
OCF-GAN | U (σ̂) | 37.79 | 21 | 31.80 | 18 | 14.94 | 10
CF-GAN-Smooth | N (σ=0.5) | 41.17 | 24 | 32.98 | 19 | 13.42 | 9
OCF-GAN-Smooth | N (σ) | 38.97 | 21 | 32.60 | 18 | 14.97 | 9
OCF-GAN-GP | N (σ̂) | 33.08 (0.26) | 17 (1) | 26.16 (0.64) | 14 (1) | 9.39 (0.25) | 5 (1)
OCF-GAN-GP | T (σ̂) | 34.33 (0.77) | 18 (1) | 26.86 (0.38) | 15 (1) | 9.61 (0.39) | 6 (1)
OCF-GAN-GP | L (σ̂) | 36.06 | 19 | 29.31 | 16 | 11.65 | 7
OCF-GAN-GP | U (σ̂) | 35.14 | 18 | 27.62 | 15 | 10.29 | 6

Table 4: FID and KID scores (lower is better) achieved by the various models on the MNIST dataset. Results are averaged over 5 random runs, and the standard deviation is indicated in parentheses.

Model | Kernel/Weight | FID | KID (×10³)
WGAN | - | 1.69 (0.09) | 20 (2)
WGAN-GP | - | 0.26 (0.02) | 2 (1)
MMD-GAN | 5-RBF | 0.68 (0.18) | 10 (5)
MMD-GAN-GP-L2 | 5-RQ | 0.51 (0.04) | 6 (2)
CF-GAN | N (σ=1) | 0.98 (0.33) | 16 (10)
CF-GAN | T (σ=0.5) | 0.85 (0.19) | 12 (4)
OCF-GAN | N (σ̂) | 0.60 (0.12) | 7 (3)
OCF-GAN | T (σ̂) | 0.78 (0.11) | 9 (1)
OCF-GAN-GP | N (σ̂) | 0.35 (0.02) | 3 (1)
OCF-GAN-GP | T (σ̂) | 0.48 (0.06) | 6 (1)

Figure 8: Image samples from the different models for the CIFAR10 dataset: (a) WGAN, (b) WGAN-GP, (c) MMD-GAN, (d) MMD-GAN-GP, (e) OCF-GAN-GP, (f) CIFAR10 test set.

Figure 9: Image samples from the different models for the CelebA dataset: (a) WGAN, (b) WGAN-GP, (c) MMD-GAN, (d) MMD-GAN-GP, (e) OCF-GAN-GP, (f) CelebA real samples.

Figure 10: Image samples from the different models for the STL10 dataset: (a) WGAN, (b) WGAN-GP, (c) MMD-GAN, (d) MMD-GAN-GP, (e) OCF-GAN-GP, (f) STL10 test set.

Figure 11: Image samples from OCF-GAN-GP for the MNIST dataset trained using different numbers of random frequencies (k): (a) k = 1, (b) k = 4, (c) k = 8, (d) k = 16, (e) k = 32, (f) k = 64.
Figure 12: Image samples for the 128 × 128 CelebA dataset generated by OCF-GAN-GP with a ResNet generator.
