Robust Spammer Detection by Nash Reinforcement Learning

Online reviews provide product evaluations for customers to make decisions. Unfortunately, the evaluations can be manipulated using fake reviews (“spams”) by professional spammers, who have learned increasingly insidious and powerful spamming strategies by adapting to the deployed detectors. Spamming strategies are hard to capture, as they can be varying quickly along time, different across spammers and target products, and more critically, remained unknown in most cases. Furthermore, most existing detectors focus on detection accuracy, which is not well-aligned with the goal of maintaining the trustworthiness of product evaluations. To address the challenges, we formulate a minimax game where the spammers and spam detectors compete with each other on their practical goals that are not solely based on detection accuracy. Nash equilibria of the game lead to stable detectors that are agnostic to any mixed detection strategies. However, the game has no closed-form solution and is not differentiable to admit the typical gradient-based algorithms. We turn the game into two dependent Markov Decision Processes (MDPs) to allow efficient stochastic optimization based on multi-armed bandit and policy gradient. We experiment on three large review datasets using various state-of-the-art spamming and detection strategies and show that the optimization algorithm can reliably find an equilibrial detector that can robustly and effectively prevent spammers with any mixed spamming strategies from attaining their practical goal. Our code is available at https://github.com/YingtongDou/Nash-Detect.

💡 Research Summary

**

The paper tackles the problem of online review spam detection from a business‑impact perspective rather than the conventional focus on classification accuracy. The authors argue that existing detectors, which are optimized for metrics such as AUC, recall, or precision, can be easily fooled by a small number of high‑impact “elite” spam reviews that manipulate product reputation and revenue, while still achieving high accuracy scores. To bridge this gap, they formulate a zero‑sum minimax game between a professional spamming entity and a detection system. The spammers aim to maximize a practical objective—revenue manipulation measured through a linear model that distinguishes the influence of elite versus regular reviews—while the detector seeks to minimize the same objective.

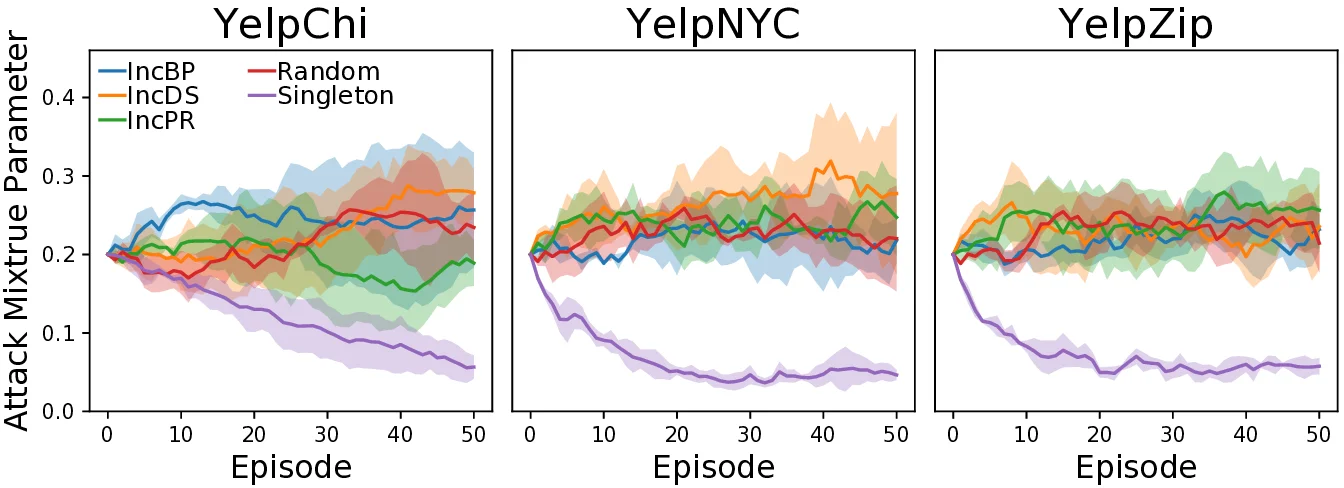

Because the game is non‑differentiable and lacks a closed‑form solution, the authors convert it into two interdependent Markov Decision Processes (MDPs). The spamming side selects among K predefined base attack strategies (e.g., camouflage, Sybil, rating skew) according to a mixed‑strategy probability vector p, while the detection side combines L base detectors (e.g., Fraudar, SpEagle, GANG) using a weight vector q. Each episode consists of an inference phase, where a concrete attack is sampled from p and the detector evaluates reviews using q, followed by a learning phase that back‑propagates the observed revenue impact (the practical loss) to update p and q. The updates employ a multi‑armed bandit scheme for probability adjustment and a policy‑gradient method for continuous refinement. Repeating this fictitious‑play process drives the two policies toward a Nash equilibrium, at which neither player can improve its objective unilaterally.

Experiments are conducted on three large‑scale datasets (Yelp, Amazon‑Chi, and Fraud‑Eagle) covering diverse product categories and review behaviors. The authors construct strong mixed attacks by combining the base strategies and evaluate a suite of state‑of‑the‑art detectors. Metrics include traditional recall/AUC as well as the “practical effect,” i.e., the estimated revenue change caused by the remaining spam after the detector’s top‑k human screening. Results show that Nash‑Detect consistently achieves lower revenue impact than all baselines, even when the spammers adapt their mixed strategy to the current detector. Moreover, the learned detector weights q automatically discover robust ensembles of base detectors, and the training process remains stable across different hyper‑parameter settings.

The paper’s contributions are threefold: (1) introducing a revenue‑centric loss that aligns detection with real business goals; (2) framing spam detection as a minimax game and solving it via a novel reinforcement‑learning pipeline that yields a Nash equilibrium; (3) demonstrating empirically that the resulting detector is attack‑agnostic and resilient to evolving, mixed spamming strategies. Limitations include reliance on platform‑specific revenue coefficients (β₀, β₁), a finite set of base attack strategies, and potential scalability challenges for policy‑gradient updates on massive review graphs. Future work may extend the strategy pool, adapt the revenue model to other platforms, and explore distributed online learning for real‑time defense. Overall, the study provides a compelling shift from accuracy‑driven spam detection toward economically meaningful, robust protection of online review ecosystems.

Comments & Academic Discussion

Loading comments...

Leave a Comment