Robust Learning Through Cross-Task Consistency

💡 Research Summary

The paper introduces Cross‑Task Consistency (X‑TC), a general framework for improving visual perception models that predict multiple outputs (e.g., depth, surface normals, object masks) from a single image. The authors argue that predictions for different tasks are not statistically independent because they all describe the same underlying scene; therefore, they should satisfy consistency constraints.

The central technical contribution is the notion of inference‑path invariance. Consider a graph whose nodes are prediction domains and whose edges are neural networks that map between domains. For any two paths that share the same start and end nodes, the outputs should be identical. The smallest such structure is a triangle consisting of three edges: X→Y₁, X→Y₂, and Y₁→Y₂. The authors define a triangle loss that combines the standard supervised losses for X→Y₁ and X→Y₂ with a consistency term enforcing that Y₁→Y₂∘(X→Y₁) matches X→Y₂.

Directly optimizing the triangle loss would require joint training of the two X‑to‑Y networks, which is computationally heavy. By applying the triangle inequality, the loss is upper‑bounded and split into two separable objectives, each involving only one of the X‑to‑Y networks. This makes the optimization tractable while preserving the same minimizer.

A further challenge is that the cross‑task mapping Y₁→Y₂ may be imperfect, noisy, or even ill‑posed. To address this, the authors replace the ground‑truth target y₂ in the consistency term with the perceptual proxy f_{Y₁→Y₂}(y₁). This yields a perceptual loss formulation: |f_{X→Y₁}(x)−y₁| + |f_{Y₁→Y₂}∘f_{X→Y₁}(x) − f_{Y₁→Y₂}(y₁)|. The second term measures the discrepancy between the predicted Y₁ and its ground‑truth counterpart after both are projected into the Y₂ space, effectively making the loss robust to imperfections in the Y₁→Y₂ mapper.

The framework naturally extends to multiple domains. For an input X and a set of tasks {Y₁,…,Yₙ}, the model learns a separate X→Yᵢ network for each task and simultaneously enforces perceptual consistency between every pair (Yᵢ, Yⱼ). This creates a global agreement across the entire task graph, rather than only short cycles used in prior work.

During training, the authors also compute a Consistency Energy, defined as the sum of all consistency losses across sampled paths. Empirically, this scalar correlates strongly (r≈0.67) with the supervised error, enabling it to serve as an unsupervised confidence metric. In out‑of‑distribution (OOD) detection experiments, Consistency Energy achieves ROC‑AUC≈0.95, demonstrating its practical utility for safety‑critical applications.

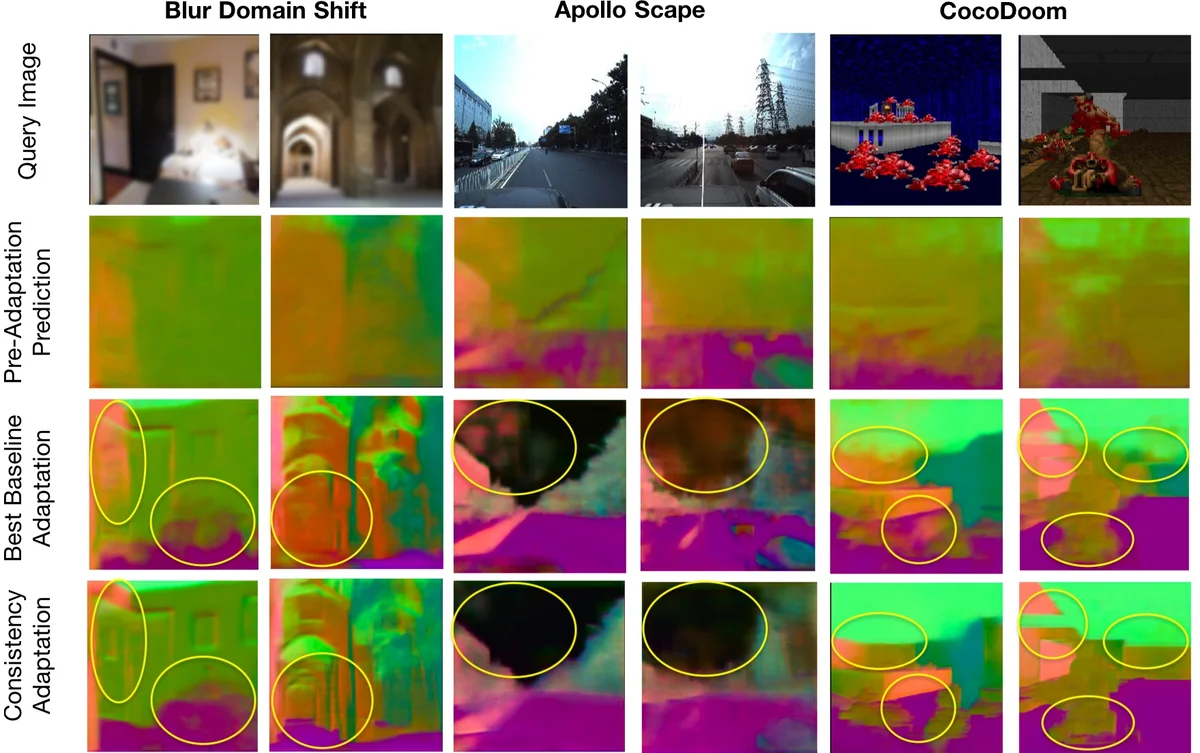

The method is evaluated on several diverse datasets: Taskonomy (indoor scenes with many tasks), Replica (synthetic indoor), COCO‑Doom (synthetic outdoor), and ApolloScape (real autonomous‑driving data). Baselines include conventional multi‑task learning (shared encoder, independent heads), cycle‑consistency approaches that only enforce two‑task loops, and analytical consistency where explicit geometric formulas are hard‑coded. Across almost all tasks, X‑TC improves quantitative metrics by 2–5 percentage points and yields qualitatively sharper, less noisy predictions (e.g., cleaner normal maps, more accurate depth edges). Notably, the gains are larger under domain shift, confirming the claim that consistency regularization reduces reliance on superficial texture cues.

The paper also discusses limitations. The approach requires either pre‑trained or jointly learned cross‑task mappers f_{Yᵢ→Yⱼ}; if these are highly inaccurate, the consistency term may provide weak or misleading gradients, though the perceptual loss formulation mitigates this effect. Moreover, the number of possible paths grows combinatorially with the number of tasks, so practical implementations must sample a subset of paths or limit graph connectivity. Finally, the current experiments focus on 2‑D image‑based tasks; extending X‑TC to 3‑D point clouds, video streams, or multimodal sensor fusion remains an open direction.

In summary, the authors present a data‑driven, scalable, and theoretically grounded method to embed cross‑task consistency into deep visual models. By reformulating consistency as a separable perceptual loss and introducing Consistency Energy as an unsupervised quality indicator, they achieve more accurate, robust, and trustworthy multi‑task perception, advancing the state of the art beyond traditional multi‑task and cycle‑consistency baselines.

Comments & Academic Discussion

Loading comments...

Leave a Comment