A Multi-step and Resilient Predictive Q-learning Algorithm for IoT with Human Operators in the Loop: A Case Study in Water Supply Networks

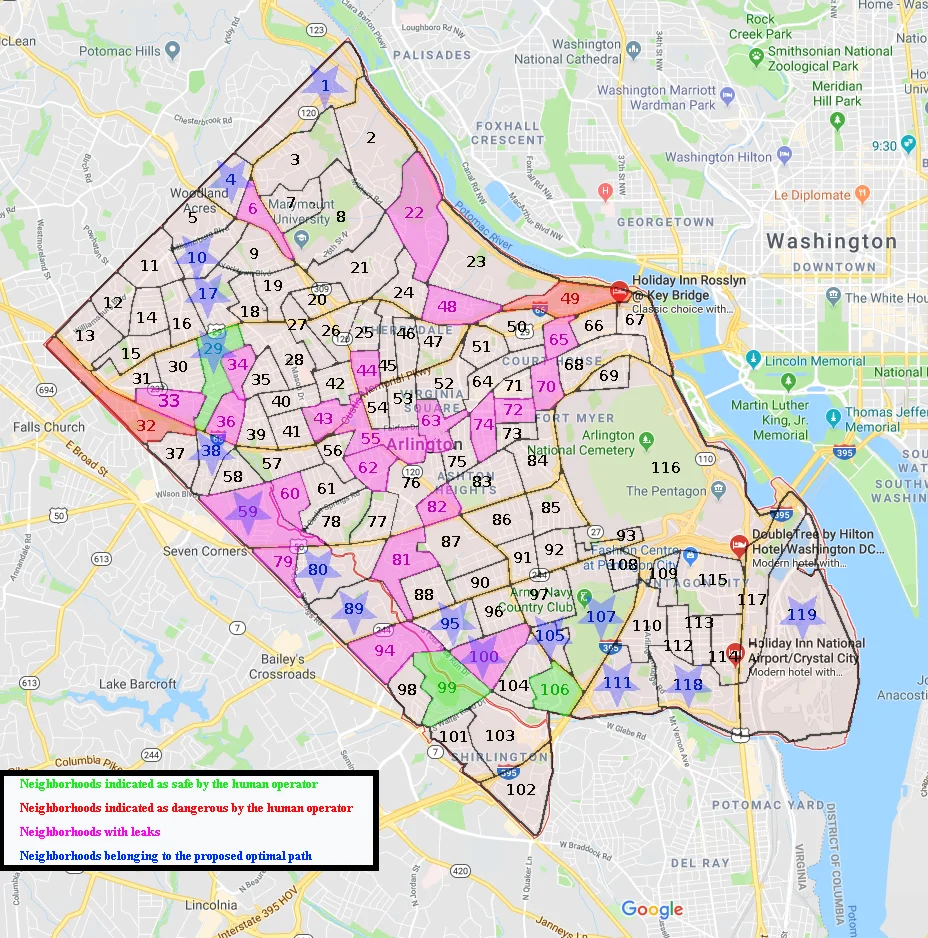

We consider the problem of recommending resilient and predictive actions for an IoT network in the presence of faulty components, while accounting for human operators who manipulate the information the agent sees about the environment for containment purposes. The IoT network is formulated as a directed graph with a known topology whose objective is to maintain a constant and resilient flow between a source and a destination node. The optimal route through this network is evaluated via a predictive and resilient Q-learning algorithm that takes into account historical data about irregular operation due to faults, as well as feedback from the human operators, who are assumed to have extra information about the status of the network concerning locations likely to be targeted by attacks. To showcase our method, we utilize anonymized data from Arlington County, Virginia, to compute predictive and resilient scheduling policies for a smart water supply system, while avoiding (i) all locations that human operators indicate are under attack and (ii) as many neighborhoods with detected leaks or other faults as possible. This method combines the adaptability of the human with the computational capability of the machine to implement optimal containment and recovery actions in water distribution.

💡 Research Summary

The paper addresses the challenge of maintaining a resilient and continuous water flow in a smart‑city water distribution network under fault and attack conditions, by integrating human operator expertise with an IoT‑driven reinforcement learning framework. The network is modeled as a directed graph where nodes represent neighborhoods and edges represent pipelines. Each edge carries a time‑varying cost matrix that reflects transmission cost, leak‑induced penalties, and potential attack risk.
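As a rough illustration of this graph model, the sketch below builds one cost matrix per time step from a transmission cost, a leak penalty, and a set of operator-flagged nodes. The function name `build_cost_matrix`, the additive penalty, the `leak_penalty` value, and the choice to mark attacked nodes with an infinite cost are all assumptions made for the example; the paper's actual cost structure may differ.

```python
import math

def build_cost_matrix(n, edges, leak_nodes, attacked_nodes, leak_penalty=5.0):
    """Return an n x n cost matrix for one time step (hypothetical layout).

    edges maps (u, v) -> transmission cost of the pipeline u -> v.
    math.inf marks a missing pipeline or one touching an attacked node.
    """
    cost = [[math.inf] * n for _ in range(n)]
    for (u, v), transmission in edges.items():
        if u in attacked_nodes or v in attacked_nodes:
            continue  # assumption: operator-flagged nodes are never used
        # assumption: a leak at the receiving neighborhood adds a fixed penalty
        penalty = leak_penalty if v in leak_nodes else 0.0
        cost[u][v] = transmission + penalty
    return cost

# A time-varying cost is then a list of matrices, one per time step.
edges = {(0, 1): 1.0, (1, 2): 2.0, (0, 2): 4.0}
costs_over_time = [
    build_cost_matrix(3, edges, leak_nodes=set(), attacked_nodes=set()),
    build_cost_matrix(3, edges, leak_nodes={2}, attacked_nodes={1}),
]
```

Using an infinite cost for attacked nodes mirrors the abstract's requirement that all operator-flagged locations be avoided, while leaks only raise the cost so that leaky neighborhoods are avoided when possible.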

A novel “predictive‑and‑resilient” Q‑learning algorithm is proposed. It extends classic Q‑learning by incorporating a sliding time window M and historical fault data into the Q‑value update, allowing the agent to anticipate future congestion and failures. The Q‑function is updated as

Qₖᵖ(s,a) ← Qₖᵖ(s,a) + α
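Because the update rule is cut off here, the following is only a minimal sketch of the general idea, not the paper's algorithm. It assumes the classic tabular update Q(s,a) ← Q(s,a) + α[r + γ·max Q(s′,a′) − Q(s,a)], with the reward taken as the negative edge cost averaged over the last M time steps as a stand-in for the windowed historical term. All names (`windowed_cost`, `q_learning_route`, `blocked`, `dead_end_penalty`) and hyperparameter values are hypothetical.

```python
import random

def windowed_cost(history, u, v, M):
    """Mean cost of edge (u, v) over the last M recorded time steps."""
    recent = history[-M:]
    return sum(step[(u, v)] for step in recent) / len(recent)

def q_learning_route(neighbors, history, source, dest, blocked, M=3,
                     alpha=0.5, gamma=0.9, epsilon=0.2, episodes=500,
                     dead_end_penalty=1000.0, seed=0):
    rng = random.Random(seed)
    # Operator feedback: nodes in `blocked` are removed from every action set.
    actions = {u: [v for v in vs if v not in blocked]
               for u, vs in neighbors.items()}
    Q = {(u, v): 0.0 for u, vs in actions.items() for v in vs}

    for _ in range(episodes):
        s = source
        for _ in range(4 * len(neighbors)):  # cap on steps per episode
            if s == dest or not actions.get(s):
                break
            if rng.random() < epsilon:       # explore
                a = rng.choice(actions[s])
            else:                            # exploit
                a = max(actions[s], key=lambda v: Q[(s, v)])
            r = -windowed_cost(history, s, a, M)
            if a == dest:
                future = 0.0
            else:
                # a node with no allowed successor is heavily penalized
                future = max((Q[(a, v)] for v in actions.get(a, [])),
                             default=-dead_end_penalty)
            Q[(s, a)] += alpha * (r + gamma * future - Q[(s, a)])
            s = a

    # Read out the greedy route from the learned Q-table.
    route, s = [source], source
    while s != dest and actions.get(s) and len(route) <= len(neighbors):
        s = max(actions[s], key=lambda v: Q[(s, v)])
        route.append(s)
    return route

neighbors = {0: [1, 2], 1: [3], 2: [3], 3: []}
step_costs = {(0, 1): 1.0, (1, 3): 1.0, (0, 2): 2.0, (2, 3): 2.0}
history = [step_costs, step_costs, step_costs]
cheap_route = q_learning_route(neighbors, history, 0, 3, blocked=set())
safe_route = q_learning_route(neighbors, history, 0, 3, blocked={1})
```

In this toy network the agent prefers the cheaper path through node 1 until an operator flags that node, after which it falls back to the costlier path through node 2.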
