High-level Modeling of Manufacturing Faults in Deep Neural Network Accelerators

The advent of data-driven real-time applications requires the implementation of Deep Neural Networks (DNNs) on Machine Learning accelerators. Google’s Tensor Processing Unit (TPU) is one such neural network accelerator that uses systolic array-based matrix multiplication hardware at its computational core. Manufacturing faults at any state element of the matrix multiplication unit can cause unexpected errors in these inference networks. In this paper, we propose a formal model of permanent faults and their propagation in a TPU using the Discrete-Time Markov Chain (DTMC) formalism. The proposed model is analyzed using the probabilistic model checking technique to reason about the likelihood of faulty outputs. The obtained quantitative results show that the classification accuracy is sensitive to the type of permanent faults as well as their location, bit position and the number of layers in the neural network. The conclusions from our theoretical model have been validated using experiments on a digit recognition-based DNN.

💡 Research Summary

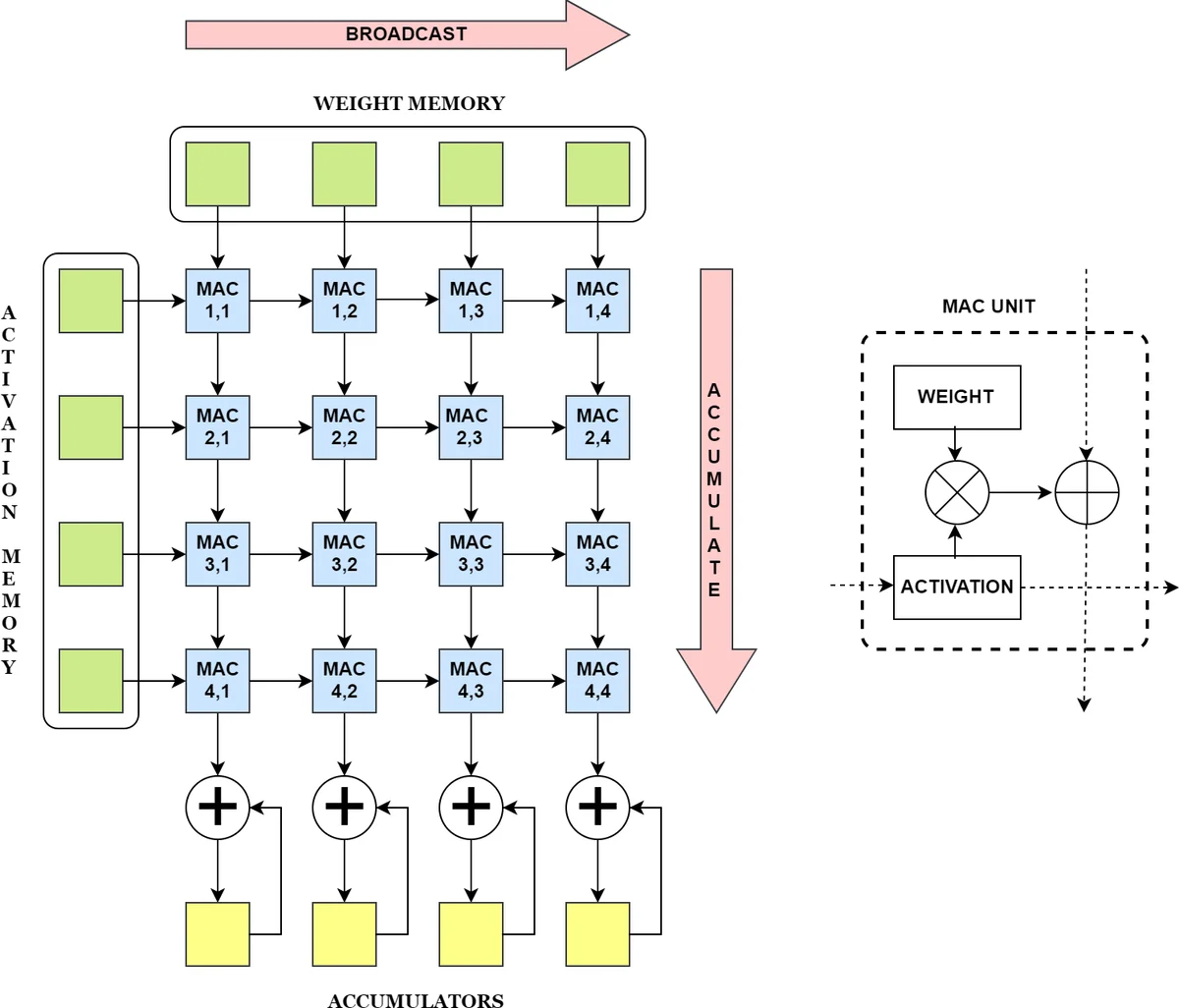

The paper addresses the reliability challenge of deep‑neural‑network (DNN) accelerators by presenting a formal, probabilistic analysis of permanent manufacturing faults in Google’s Tensor Processing Unit (TPU). The authors focus on the systolic‑array matrix‑multiplication core, where each MAC (multiply‑accumulate) unit consists of a weight register, a multiplier, and an accumulator. Permanent faults are modeled as “stuck‑at‑0” or “stuck‑at‑1” bit errors that can appear in any of these three sub‑components.
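The stuck‑at fault model can be stated directly at the bit level. The sketch below assumes an unsigned 8‑bit register (the summary does not give the TPU's exact number formats), and `apply_stuck_at` and `is_masked` are hypothetical helper names. It shows both the forced value and the masking case, in which the stored bit already equals the stuck value and the fault has no visible effect.

```python
def apply_stuck_at(value: int, bit: int, stuck: int, width: int = 8) -> int:
    """Return `value` with bit `bit` forced to `stuck` (0 or 1).

    `value` is treated as an unsigned `width`-bit register; this width and
    encoding are assumptions made for illustration.
    """
    if not 0 <= bit < width:
        raise ValueError("bit position outside the register")
    if stuck not in (0, 1):
        raise ValueError("stuck must be 0 or 1")
    mask = 1 << bit
    faulty = (value | mask) if stuck else (value & ~mask)
    return faulty & ((1 << width) - 1)


def is_masked(value: int, bit: int, stuck: int) -> bool:
    """A stuck-at fault is masked when the stored bit already equals the stuck value."""
    return ((value >> bit) & 1) == stuck


if __name__ == "__main__":
    print(apply_stuck_at(0b0000_0101, 1, 1))  # stuck-at-1 on bit 1: 5 -> 7
    print(apply_stuck_at(5, 0, 0))            # stuck-at-0 on bit 0: 5 -> 4
    print(is_masked(5, 2, 1))                 # bit 2 of 5 is already 1: True
```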

A mathematical fault‑propagation model is derived for three fault cases: (1) a stuck‑at fault in the weight register, (2) a stuck‑at fault in the accumulator, and (3) a stuck‑at fault in the multiplier. For each case the authors define logical conditions (C0‑C7) that capture whether the faulty MAC lies inside the effective computation area, whether the fault is masked by the original bit pattern, and whether a “leak” effect occurs (i.e., a stuck‑at‑1 forces the MAC to emit a constant value even when idle, propagating downstream). The resulting error contributions are expressed as integer offsets Fw(l), Fa(l), and Fm(l) that are added to the accumulated sums at each layer l.
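To make the fault cases concrete, the following brute‑force simulation of a single weight‑stationary systolic column computes the offset that a stuck‑at fault adds to the column's output. It is not the authors' closed‑form Fw(l)/Fa(l) derivation: the column layout, the 8‑bit weight and 32‑bit accumulator widths, unsigned arithmetic, and the names `column_output` and `error_offset` are all assumptions, and multiplier faults are omitted. Idle rows (zero input and zero weight) stand in for MACs outside the effective computation area, which lets the sketch reproduce a leak.

```python
def force_bit(value: int, bit: int, stuck: int, width: int) -> int:
    """Force one bit of an unsigned `width`-bit value to 0 or 1."""
    mask = 1 << bit
    value = (value | mask) if stuck else (value & ~mask)
    return value & ((1 << width) - 1)


def column_output(x, w_col, fault=None, acc_width=32):
    """Partial sum leaving the bottom of one weight-stationary column.

    `fault` is None or a hypothetical tuple (kind, row, bit, stuck), where
    kind is "weight" (8-bit weight register) or "acc" (accumulator output).
    Rows with x == 0 and w == 0 model idle MACs outside the effective area.
    """
    psum = 0
    for row, (xi, wi) in enumerate(zip(x, w_col)):
        if fault and fault[0] == "weight" and fault[1] == row:
            wi = force_bit(wi, fault[2], fault[3], 8)
        psum = (psum + xi * wi) & ((1 << acc_width) - 1)
        if fault and fault[0] == "acc" and fault[1] == row:
            psum = force_bit(psum, fault[2], fault[3], acc_width)
    return psum


def error_offset(x, w_col, fault):
    """Integer offset (faulty minus fault-free), similar in spirit to Fw/Fa."""
    return column_output(x, w_col, fault) - column_output(x, w_col)


if __name__ == "__main__":
    x, w = [1, 2, 3, 0], [4, 5, 6, 0]  # row 3 is idle
    print(error_offset(x, w, ("weight", 1, 1, 1)))  # weight 5 -> 7
    print(error_offset(x, w, ("acc", 3, 0, 1)))     # leak from idle row 3
```

With x = [1, 2, 3, 0] and w = [4, 5, 6, 0], forcing bit 1 of the row‑1 weight to 1 turns 5 into 7 and shifts the output by 2 × 2 = 4. An accumulator stuck‑at‑1 on bit 0 of the idle row 3 still adds 1, so the idle MAC leaks a constant into the result.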

To evaluate the impact of these faults on DNN inference, the authors construct a probabilistic model using discrete‑time Markov chains (DTMCs). Input selection for each neuron is modeled by an Input‑Selection DTMC (IS‑DTMC) whose states correspond to possible input bit patterns; transition probabilities encode the assumed input distribution (uniform in the experiments). For a network with N input neurons, N parallel IS‑DTMCs are synchronized, producing a joint probability distribution over the entire input vector X(1).
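The effect of synchronizing independent IS‑DTMCs can be sketched by enumerating their joint choices. This is a minimal illustration, assuming each chain makes a single uniform choice over all `bits`‑bit patterns from its initial state; `is_dtmc` and `joint_input_distribution` are hypothetical names, and exact `Fraction` arithmetic is used so the probabilities sum to exactly 1.

```python
from fractions import Fraction
from itertools import product


def is_dtmc(bits):
    """Hypothetical one-step IS-DTMC: from its initial state, move to each
    `bits`-bit input pattern with equal probability (uniform inputs)."""
    n = 1 << bits
    return {value: Fraction(1, n) for value in range(n)}


def joint_input_distribution(num_neurons, bits):
    """Synchronize one IS-DTMC per input neuron.

    The chains choose independently, so the probability of a joint input
    vector is the product of the per-neuron transition probabilities.
    """
    chains = [is_dtmc(bits) for _ in range(num_neurons)]
    joint = {}
    for choice in product(*(chain.items() for chain in chains)):
        vector = tuple(value for value, _ in choice)
        prob = Fraction(1)
        for _, p in choice:
            prob *= p
        joint[vector] = prob
    return joint


if __name__ == "__main__":
    dist = joint_input_distribution(num_neurons=2, bits=2)
    print(len(dist), sum(dist.values()))  # 16 vectors, total probability 1
```

The joint distribution has (2^bits)^N entries, so the enumerated state space grows exponentially with both the input width and the number of input neurons.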

The execution of the TPU is captured by a deterministic probabilistic automaton called TPU‑FA (Fault Analysis). This automaton proceeds through five states: initialization, systolic‑array computation, activation & quantization, “no error”, and “error”. In the computation state the model evaluates the fault‑specific error formulas (5)‑(7) for each layer, updates the outputs of both a fault‑free reference TPU (NFTPU) and a faulty TPU (FTPU), and finally compares the two results. The property of interest—probability that the outputs differ—is expressed in PCTL as P =?
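Assuming, as the description suggests, that TPU‑FA behaves deterministically once the inputs have been chosen, the probability of reaching the “error” state reduces to the total probability of the input vectors for which the faulty and fault‑free outputs differ. The sketch below computes that quantity by exhaustive enumeration rather than with a probabilistic model checker such as PRISM or Storm. The layer model, the saturating stand‑in for activation and quantization, the reuse of one faulty array by every layer, and all function names are assumptions for illustration only.

```python
from fractions import Fraction
from itertools import product


def force_bit(value, bit, stuck, width=32):
    """Force one bit of an unsigned `width`-bit accumulator value to 0 or 1."""
    mask = 1 << bit
    value = (value | mask) if stuck else (value & ~mask)
    return value & ((1 << width) - 1)


def run_layer(x, W, fault=None):
    """One layer on a weight-stationary array; output j is the sum down column j.

    `fault` is None or a hypothetical (row, col, bit, stuck) accumulator fault.
    Activation + quantization is sketched as saturation to 8 bits.
    """
    out = []
    for j in range(len(W[0])):
        psum = 0
        for i, xi in enumerate(x):
            psum += xi * W[i][j]
            if fault is not None and fault[:2] == (i, j):
                psum = force_bit(psum, fault[2], fault[3])
        out.append(min(psum, 255))
    return out


def p_error(layers, fault, num_inputs, bits):
    """Probability that fault-free (NFTPU) and faulty (FTPU) outputs differ.

    Inputs are uniform over all `bits`-bit patterns, as in the IS-DTMCs.
    The same physical array, and hence the same fault, serves every layer.
    """
    n = 1 << bits
    p_each = Fraction(1, n ** num_inputs)
    total = Fraction(0)
    for x in product(range(n), repeat=num_inputs):
        ref, bad = list(x), list(x)
        for W in layers:
            ref = run_layer(ref, W)
            bad = run_layer(bad, W, fault)
        if ref != bad:  # the "error" outcome for this input vector
            total += p_each
    return total


if __name__ == "__main__":
    ones = [[1, 1], [1, 1]]
    fault = (0, 0, 0, 1)  # accumulator of MAC (0, 0), bit 0 stuck-at-1
    print(p_error([ones], fault, 2, 1))        # 1/2
    print(p_error([ones, ones], fault, 2, 1))  # 3/4
```

In this toy two‑input network with all‑ones weights, the accumulator fault corrupts the output with probability 1/2 for one layer and 3/4 for two layers. This is a small illustration of how the number of layers can matter, not a reproduction of the paper's results.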
