The Multi-Agent Programming Contest: A résumé

💡 Research Summary

The paper provides a comprehensive retrospective of the Multi‑Agent Programming Contest (MAPC), an annual competition organized by Clausthal University of Technology, covering the editions from 2005 through 2019. The contest's primary purpose is to investigate the practical benefits of decentralized, autonomous, and cooperative agents by offering a complex, competitive scenario that serves as a benchmark for agent‑based systems. The authors first outline the original motivation, which was to compare computational‑logic systems used for knowledge representation, and then describe how the contest evolved into a broader platform for evaluating agent programming languages (such as Jason, 2APL, and Jadex) alongside conventional programming frameworks.

A key design decision of MAPC is the deliberate absence of restrictions on the software stack. Teams may use any language or platform, which enables a fair comparison of the expressive power, built‑in communication primitives, and coordination mechanisms that agent‑oriented languages provide versus the raw performance and engineering maturity of classic languages. The contest explicitly avoids measuring algorithmic optimality or real‑time performance; instead, it focuses on how well a system can model and execute the required constructs for the scenario. To reinforce this, each simulation step is allocated a generous four‑second timeout, accounting for internet latency while keeping the emphasis on strategic reasoning rather than low‑level speed.
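A minimal sketch of how a per‑step deadline like this could be enforced is shown below. It is not MASSim code: the function name `collect_actions`, the `"no_action"` fallback, and the idea of modelling agents as plain Python callables are all assumptions made for illustration. Only the four‑second budget comes from the text above.

```python
import time
import concurrent.futures as cf

STEP_TIMEOUT_S = 4.0  # the four-second per-step budget described above


def collect_actions(agents, percepts, timeout_s=STEP_TIMEOUT_S, default="no_action"):
    """Ask every agent for an action; agents that miss the deadline get `default`.

    `agents` maps an agent name to a callable taking the percepts
    (hypothetical interface, chosen only for this sketch).
    """
    actions = {}
    pool = cf.ThreadPoolExecutor(max_workers=max(1, len(agents)))
    try:
        # Start all agents at once so they share one deadline for the step.
        futures = {name: pool.submit(decide, percepts)
                   for name, decide in agents.items()}
        deadline = time.monotonic() + timeout_s
        for name, future in futures.items():
            remaining = max(0.0, deadline - time.monotonic())
            try:
                actions[name] = future.result(timeout=remaining)
            except cf.TimeoutError:
                # Too slow: the step goes ahead without this agent's choice.
                actions[name] = default
    finally:
        # Do not block the step on stragglers.
        pool.shutdown(wait=False)
    return actions
```

Because the deadline is shared across the whole step rather than applied per agent, one slow agent costs only itself a turn. That matches the stated intent of the timeout: a latency allowance, not a measure of speed.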

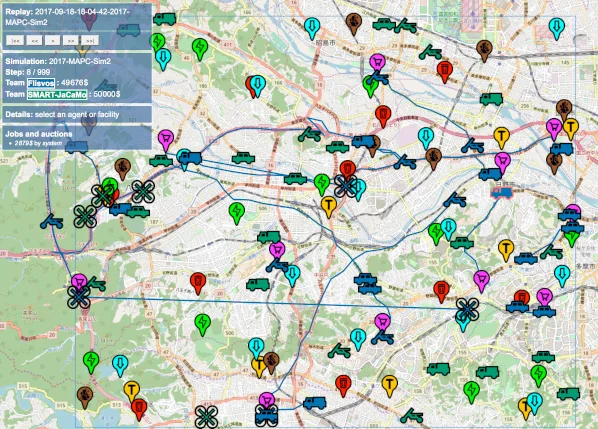

The simulation infrastructure, MASSim (Multi‑Agent Simulation), is a Java‑based server that exchanges JSON (formerly XML) messages with participant agents. In each step the server broadcasts the current world state as “percepts”; agents compute a response action and send it back. All actions are applied simultaneously, and the world advances to the next step. This cycle repeats for a fixed number of steps, after which scenario‑specific scoring determines the winner. In 2017 MASSim was completely rewritten: a single web‑based monitor replaced the previous Java RMI monitor, the plug‑in architecture was dropped in favor of yearly scenario packages, and support for more than two concurrent teams was added, laying groundwork for future extensions.
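The step cycle described above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the MASSim protocol: the JSON field names (`type`, `step`, `percepts`, `action`, `direction`), the helper names, and the two sample agents are all hypothetical. The sketch shows only the shape of the loop: percepts go out, every reply is collected, and only then are the actions applied together.

```python
import json

# Direction offsets on a grid (y grows southwards); illustrative only.
MOVES = {"n": (0, -1), "s": (0, 1), "e": (1, 0), "w": (-1, 0)}


def make_percept_message(step, world, agent):
    """Server side: serialize what one agent perceives (hypothetical schema)."""
    others = {name: pos for name, pos in world.items() if name != agent}
    return json.dumps({
        "type": "request-action",
        "step": step,
        "percepts": {"position": world[agent], "others": others},
    })


def east_walker(message):
    """Toy agent: parse the percepts and always answer with a move east."""
    request = json.loads(message)
    return json.dumps({"type": "action", "step": request["step"],
                       "action": "move", "direction": "e"})


def idler(message):
    """Toy agent that chooses to do nothing."""
    request = json.loads(message)
    return json.dumps({"type": "action", "step": request["step"],
                       "action": "skip"})


def run_simulation(world, agents, steps):
    """Run the perceive-act cycle for a fixed number of steps."""
    for step in range(steps):
        # 1. Send percepts to every agent and collect all replies first,
        #    so every agent decides on the same world state.
        replies = {
            name: json.loads(agent(make_percept_message(step, world, name)))
            for name, agent in agents.items()
        }
        # 2. Apply all actions against one snapshot ("simultaneously").
        snapshot = dict(world)
        for name, reply in replies.items():
            if reply.get("action") == "move":
                dx, dy = MOVES[reply["direction"]]
                x, y = snapshot[name]
                world[name] = (x + dx, y + dy)
    return world
```

For example, `run_simulation({"a": (0, 0), "b": (5, 5)}, {"a": east_walker, "b": idler}, steps=3)` moves agent `a` three cells east and leaves `b` in place. Scenario‑specific scoring, which the text says follows the final step, is left out of the sketch.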

The paper chronicles the evolution of the contest scenarios, illustrating how increasing complexity forced participants to exploit genuine multi‑agent features. Early editions (2005‑2007) featured simple grid worlds such as “Gold Miners,” where agents could act largely independently. The 2008‑2010 “Cows and Cowboys” scenario introduced flocking behavior for cows and required coordinated positioning of agents to herd animals into corrals, thereby demanding communication and teamwork. From 2011 to 2014 the “Agents on Mars” scenario moved to a graph‑based map with specialized agent roles and zone control, raising the bar for planning and cooperation. The “Agents in the City” scenario (2016‑2018) then introduced real city maps and resource management. The most recent scenario covered, “Agents Assemble” (2019), returns to a grid world in which agents must jointly assemble and deliver block structures, creating a testbed for autonomy, negotiation, and intention progression. Each transition reflects a deliberate effort by the organizers to embed constraints that favor decentralized approaches rather than allowing participants to rely on a single clever algorithm.

Analysis of the 2019 results shows that agent‑oriented languages often reduce development effort for coordination and communication because these capabilities are built in. However, when it comes to raw execution speed, scalability, and fine‑grained system engineering, classic languages still hold an advantage. The authors also note a recurring “path of least resistance” behavior: teams tend to search for simple heuristics that bypass the intended cooperative challenges, prompting the organizers to adjust scenario difficulty and introduce additional constraints (e.g., stronger flocking rules for cows).

Beyond research, MAPC serves an educational mission. Each year the organizers release an off‑the‑shelf package, complete with documentation and sample code, which can be directly used in university courses on multi‑agent systems. Students gain hands‑on experience with networked agents, perception‑action loops, and the practical trade‑offs between declarative agent models and imperative programming. The open‑source nature of MASSim further enables instructors and researchers to extend or modify the platform for custom experiments.

In conclusion, the MAPC provides a rare, longitudinal empirical evaluation of agent programming paradigms. It demonstrates that while agent languages excel at expressing autonomy, communication, and cooperative behavior, they still lag behind mature general‑purpose languages in performance engineering. The authors recommend that future contests incorporate real‑time constraints, larger numbers of concurrent teams, and multi‑objective optimization, both to push the boundaries of current agent platforms and to keep clarifying when and how agent‑oriented features truly pay off.
