Differentiated Backprojection Domain Deep Learning for Conebeam Artifact Removal

Conebeam CT using a circular trajectory is widely used in various applications because of its relatively simple geometry. For conebeam geometry, the Feldkamp, Davis and Kress (FDK) algorithm is regarded as the standard reconstruction method, but it suffers from so-called conebeam artifacts as the cone angle increases. Various model-based iterative reconstruction methods have been developed to reduce conebeam artifacts, but these algorithms usually require multiple applications of computationally expensive forward and backprojections. In this paper, we develop a novel deep learning approach for accurate conebeam artifact removal. In particular, our deep network, designed on the differentiated backprojection domain, performs a data-driven inversion of an ill-posed deconvolution problem associated with the Hilbert transform. The reconstruction results along the coronal and sagittal directions are then combined using a spectral blending technique to minimize spectral leakage. Experimental results show that our method outperforms existing iterative methods despite significantly reduced runtime complexity.

💡 Research Summary

This paper addresses the persistent cone‑beam artifacts that arise in cone‑beam computed tomography (CBCT) when a circular source trajectory is used. While the Feldkamp‑Davis‑Kress (FDK) algorithm is the standard reconstruction method, its performance degrades as the cone angle increases, leading to missing frequency components and streak artifacts. Traditional model‑based iterative reconstruction (MBIR) techniques mitigate these artifacts by imposing regularization (e.g., total variation), but they require repeated forward and back‑projection operations, resulting in prohibitive computational cost.
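To make the cost of MBIR concrete, here is a minimal toy sketch, not any specific published MBIR method: a random matrix `A` stands in for the cone-beam forward projector, `A.T` for the backprojector, and a smoothed 1-D total variation with parameter `eps` replaces exact TV. The names `mbir_tv`, `smoothed_tv_grad`, `lam`, and `eps` are hypothetical. Each gradient-descent iteration applies one forward projection and one backprojection, which is what makes full 3-D MBIR expensive.

```python
import numpy as np

def smoothed_tv_grad(x, eps):
    """Gradient of the smoothed 1-D TV: sum_i sqrt((x[i+1] - x[i])^2 + eps^2)."""
    d = np.diff(x)
    w = d / np.sqrt(d ** 2 + eps ** 2)
    g = np.zeros_like(x)
    g[:-1] -= w   # each difference d_i depends on x[i] with coefficient -1
    g[1:] += w    # ... and on x[i+1] with coefficient +1
    return g

def mbir_tv(A, b, lam=0.05, eps=0.1, n_iter=500):
    """Toy MBIR (hypothetical): gradient descent on 0.5*||A x - b||^2 + lam * TV_eps(x).

    A stands in for the forward projector and A.T for the backprojector;
    every iteration needs one application of each.
    """
    # Step 1/L, where L upper-bounds the Lipschitz constant of the full gradient
    # (||A||^2 for the data term, 4*lam/eps for the smoothed TV term).
    step = 1.0 / (np.linalg.norm(A, 2) ** 2 + 4.0 * lam / eps)
    x = np.zeros(A.shape[1])
    for _ in range(n_iter):
        residual = A @ x - b       # forward projection
        grad = A.T @ residual      # backprojection
        x = x - step * (grad + lam * smoothed_tv_grad(x, eps))
    return x

# Usage: recover a piecewise-constant 1-D "object" from random linear measurements.
rng = np.random.default_rng(0)
x_true = np.repeat([0.0, 1.0, 0.3, 0.8], 16)        # 64 samples, 4 flat segments
A = rng.standard_normal((128, 64)) / np.sqrt(128)  # stand-in "projector"
b = A @ x_true
x_rec = mbir_tv(A, b)
```

Even in this tiny example the loop runs hundreds of forward/backprojection pairs; in real CBCT each of those is a full 3-D projector call.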

The authors propose a fundamentally different approach that operates in the differentiated backprojection (DBP) domain. DBP transforms the 3‑D reconstruction problem into a set of independent 2‑D problems on planes of interest (coronal and sagittal). Mathematically, DBP data can be expressed as a filtered version of the object’s Fourier spectrum, where the filter is a spatially varying Hilbert‑transform‑like term σ(x,ω,λ±). This formulation reveals that the reconstruction problem is essentially a deconvolution involving a Hilbert transform, but the deconvolution kernel is spatially varying and the problem is ill‑posed because each DBP measurement contains contributions from two deformed image copies.
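The Hilbert-transform deconvolution can be illustrated in its simplest setting. The sketch below uses a hypothetical helper `hilbert_fft` on a 1-D periodic signal rather than on DBP data: it applies the Hilbert transform as the Fourier multiplier -i·sign(ω) and inverts it with H⁻¹ = -H, which holds for signals with no DC or Nyquist component on the full domain. This easy inversion is exactly what is lost in the DBP setting, where the kernel σ(x,ω,λ±) varies spatially and each measurement mixes two deformed image copies.

```python
import numpy as np

def hilbert_fft(f):
    """Discrete periodic Hilbert transform: multiply the spectrum by -i*sign(omega)."""
    n = f.shape[-1]
    omega = np.fft.fftfreq(n)
    multiplier = -1j * np.sign(omega)
    if n % 2 == 0:
        multiplier[n // 2] = 0.0  # Nyquist bin has no symmetric partner; drop it to keep output real
    return np.real(np.fft.ifft(np.fft.fft(f) * multiplier))

n = 256
t = np.arange(n)
f = np.sin(2 * np.pi * 5 * t / n) + 0.5 * np.cos(2 * np.pi * 12 * t / n)  # zero-mean test signal
g = hilbert_fft(f)        # "measurement": Hilbert-filtered signal (sin -> -cos, cos -> sin)
f_rec = -hilbert_fft(g)   # on the full periodic domain, H^{-1} = -H
```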

To solve this deconvolution efficiently, the paper introduces an encoder‑decoder convolutional neural network (E‑D CNN) that learns a mapping TΘ from DBP inputs g(t,z) to the desired images f(t,z). The network uses ReLU activations, which partition the input space into a large number of non‑overlapping regions; within each region the mapping is linear, so the network approximates the highly nonlinear inverse operator with a piecewise‑linear function. This piecewise‑linear structure also acts as an implicit regularizer, improving robustness to noise and undersampling. Because inference is a single feed‑forward pass through a network whose parameters Θ are far fewer than the entries of the full inverse operator, with no repeated forward and back‑projections, it is orders of magnitude faster than iterative MBIR.
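The piecewise-linear property can be checked directly on a toy network. The sketch below uses a small, randomly initialized two-layer ReLU perceptron with hypothetical weights `W1`, `b1`, `W2`, `b2`, not the paper's E‑D CNN: fixing the on/off pattern of the hidden units at an input `x` yields an explicit affine map `A @ y + c` that reproduces the network output for every input `y` in the same activation region.

```python
import numpy as np

rng = np.random.default_rng(0)
W1, b1 = rng.standard_normal((16, 8)), rng.standard_normal(16)  # hypothetical hidden layer
W2, b2 = rng.standard_normal((4, 16)), rng.standard_normal(4)   # hypothetical output layer

def relu_net(x):
    """Two-layer ReLU network: W2 @ relu(W1 @ x + b1) + b2."""
    return W2 @ np.maximum(W1 @ x + b1, 0.0) + b2

def local_affine_map(x):
    """Return (A, c) with relu_net(y) == A @ y + c for all y sharing x's activation pattern."""
    active = (W1 @ x + b1 > 0).astype(float)  # 1 for hidden units that are "on" at x
    D = np.diag(active)                       # ReLU acts as this diagonal 0/1 matrix in the region
    A = W2 @ D @ W1
    c = W2 @ D @ b1 + b2
    return A, c

x = rng.standard_normal(8)
A, c = local_affine_map(x)
x_near = x + 1e-6 * rng.standard_normal(8)  # tiny perturbation: stays in the same linear region
```

Different activation patterns give different matrices `D`, and hence different linear pieces; the number of such regions grows quickly with width and depth.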

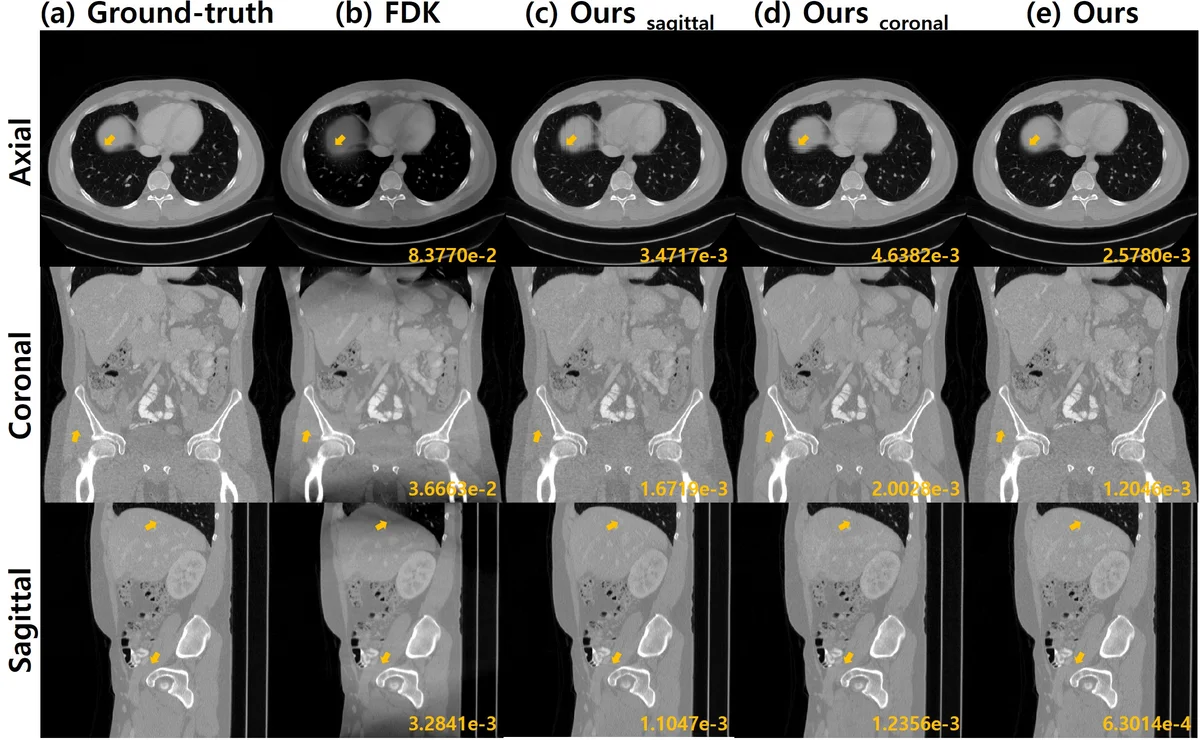

A single directional reconstruction, however, suffers from direction‑specific missing frequency bands. The authors therefore reconstruct both coronal and sagittal DBP volumes independently, transform each to the 2‑D Fourier domain, and apply a “bow‑type” spectral weighting mask w to suppress the respective missing bands. The final volume is obtained by weighted combination f_com = F⁻¹{ w·F{f_cor} + (1‑w)·F{f_sag} }, effectively filling in the missing spectral components of one direction with the complementary information from the other. This spectral blending dramatically reduces streak artifacts and improves overall image fidelity.
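The blending formula translates almost directly into NumPy. The exact shape of the paper's bow-type mask is not given here, so `bowtie_mask` is a hypothetical binary bow-tie that assigns to the coronal result the frequencies where |k₁| ≥ |k₀| and to the sagittal result the rest; which wedge goes to which reconstruction, and on which slice orientation the 2-D FFT is taken, are assumptions. Random arrays stand in for one slice of each reconstruction.

```python
import numpy as np

def bowtie_mask(shape):
    """Hypothetical binary bow-tie weight w on an FFT grid: 1 where |k1| >= |k0|, else 0."""
    k0 = np.fft.fftfreq(shape[0])[:, None]  # frequencies along axis 0
    k1 = np.fft.fftfreq(shape[1])[None, :]  # frequencies along axis 1
    return (np.abs(k1) >= np.abs(k0)).astype(float)

def spectral_blend(f_cor, f_sag, w):
    """f_com = F^-1{ w F{f_cor} + (1 - w) F{f_sag} } for one 2-D slice."""
    spec = w * np.fft.fft2(f_cor) + (1.0 - w) * np.fft.fft2(f_sag)
    return np.real(np.fft.ifft2(spec))  # imaginary part is round-off only (w is symmetric)

rng = np.random.default_rng(0)
f_cor = rng.standard_normal((64, 64))  # stand-in coronal-direction reconstruction (one slice)
f_sag = rng.standard_normal((64, 64))  # stand-in sagittal-direction reconstruction (one slice)
w = bowtie_mask(f_cor.shape)
f_com = spectral_blend(f_cor, f_sag, w)
```

Because the mask is symmetric under ω → -ω, the blended spectrum stays Hermitian and the inverse FFT is real up to round-off. A binary mask has sharp edges that can cause ringing; a smoothly tapered w is a natural variant, though whether the paper uses one is not stated here.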

Training data are generated from noiseless simulated CBCT projections of known 3‑D phantoms. Despite being trained only on clean simulations, the network generalizes well to noisy real measurements and to cone angles that were not present during training, demonstrating that the learned filters capture intrinsic geometric properties of the DBP operator rather than overfitting to specific noise patterns. Quantitative evaluations show superior peak‑signal‑to‑noise ratio (PSNR) and structural similarity (SSIM) compared with state‑of‑the‑art MBIR methods, while achieving more than a tenfold reduction in reconstruction time.
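For reference, PSNR is 10·log10(MAX² / MSE), where MAX is the dynamic range of the image. The helper below is a minimal NumPy sketch with a hypothetical name `psnr`; scikit-image provides `skimage.metrics.peak_signal_noise_ratio` and `skimage.metrics.structural_similarity` for PSNR and SSIM. The choice of `data_range` (for example, a fixed intensity window) changes the reported values, so it has to match between the methods being compared.

```python
import numpy as np

def psnr(reference, estimate, data_range):
    """Peak signal-to-noise ratio in dB: 10 * log10(data_range^2 / MSE)."""
    ref = np.asarray(reference, dtype=float)
    est = np.asarray(estimate, dtype=float)
    mse = np.mean((ref - est) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(data_range ** 2 / mse)

ref = np.zeros((64, 64))
est = ref + 0.1                     # uniform error of 0.1 -> MSE = 0.01
value = psnr(ref, est, data_range=1.0)  # ≈ 20.0 dB
```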

In summary, the paper presents a novel DBP‑domain deep learning framework that (1) reformulates the CBCT reconstruction as a Hilbert‑transform deconvolution, (2) solves this ill‑posed problem with a piecewise‑linear encoder‑decoder CNN, and (3) restores missing spectral information through coronal‑sagittal spectral blending. The approach offers a compelling combination of high image quality, strong generalization across acquisition settings, and computational efficiency, opening avenues for real‑time or near‑real‑time cone‑beam CT applications such as interventional imaging, dental CT, and intra‑operative guidance.
