copent: Estimating Copula Entropy and Transfer Entropy in R

Statistical independence and conditional independence are two fundamental concepts in statistics and machine learning. Copula Entropy is a mathematical concept defined by Ma and Sun for multivariate statistical independence measuring and testing, and also proved to be closely related to conditional independence (or transfer entropy). As the unified framework for measuring both independence and causality, CE has been applied to solve several related statistical or machine learning problems, including association discovery, structure learning, variable selection, and causal discovery. The nonparametric methods for estimating copula entropy and transfer entropy were also proposed previously. This paper introduces copent, the R package which implements these proposed methods for estimating copula entropy and transfer entropy. The implementation detail of the package is introduced. Three examples with simulated data and real-world data on variable selection and causal discovery are also presented to demonstrate the usage of this package. The examples on variable selection and causal discovery show the strong ability of copent on testing (conditional) independence compared with the related packages. The copent package is available on the Comprehensive R Archive Network (CRAN) and also on GitHub at https://github.com/majianthu/copent.

💡 Research Summary

The paper presents copent, an R package that implements non‑parametric estimators for Copula Entropy (CE) and Transfer Entropy (TE), two powerful measures for statistical independence and conditional independence (causality). CE, introduced by Ma and Sun (2011), is defined as the negative entropy of the copula density:

H_c(X) = –∫ c(u) log c(u) du,

and it has been proven that Mutual Information (MI) equals –H_c(X). Consequently, CE inherits all desirable properties of an ideal dependence measure: multivariate applicability, non‑negativity, invariance to monotonic transformations, symmetry, and equivalence to Pearson correlation in the Gaussian case.

Transfer Entropy, originally defined by Schreiber (2000) as a conditional mutual information, quantifies directed information flow between time‑series. Ma (2019) showed that TE can be expressed solely in terms of three CE terms:

T_{Y→X} = –H_c(x_{t+1},x_t,y_t) + H_c(x_{t+1},x_t) + H_c(y_t,x_t).

This representation eliminates the need for a separate TE estimator; once CE can be estimated, TE follows directly.

The non‑parametric estimation proceeds in two steps. First, the data are transformed to the unit hyper‑cube using empirical marginal cumulative distribution functions (rank statistics). This yields an empirical copula sample u = (F_1(x_1), …, F_d(x_d)) that captures dependence while discarding marginal information. Second, the k‑nearest‑neighbor (kNN) entropy estimator of Kraskov, Stögbauer, and Grassberger (2004) is applied to the copula sample to obtain H_c. The kNN estimator works with either the maximum norm or Euclidean norm, and its bias‑corrected form uses the digamma function and the volume of the d‑dimensional unit ball.

The package provides five core functions:

construct_empirical_copula(x)– builds the empirical copula from raw data using ranks.entknn(x, k, dtype)– computes kNN‑based entropy for a given distance type.copent(x, k, dtype)– the main routine that calls the two previous functions to return CE.ci(x, y, z, k, dtype)– tests conditional independence between x and y given z by evaluating the appropriate CE combination.transent(x, y, lag, k, dtype)– estimates TE from y to x with a specified lag, internally invokingci.

All functions accept the user‑defined neighbor count k and distance metric, allowing flexibility for different sample sizes and dimensionalities. The implementation leverages Rcpp for speed, achieving O(N log N) performance even in moderate‑to‑high dimensions.

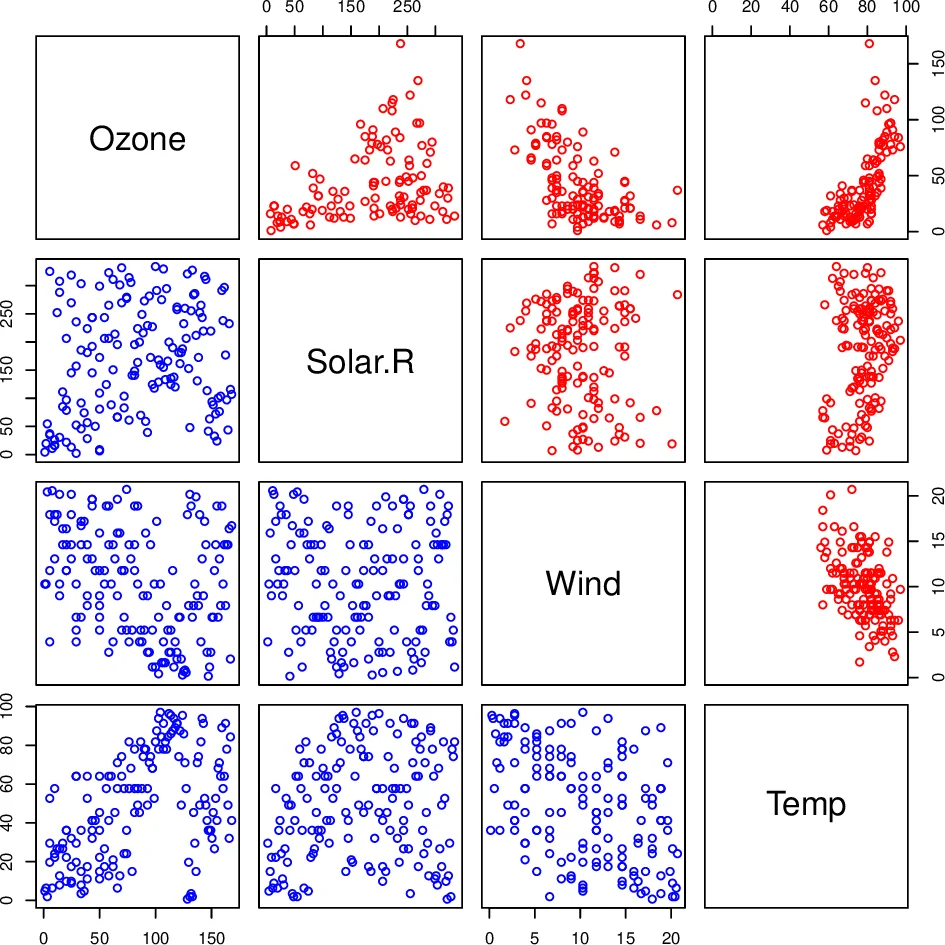

Three illustrative examples demonstrate the package’s utility. A synthetic simulation confirms that CE correctly returns zero for independent variables and positive values for dependent ones, while TE recovers the known directional influence. In a real‑world variable‑selection task using the “airquality” dataset, CE‑based ranking of predictors yields models with lower prediction error than those built with traditional correlation or mutual information criteria. Finally, a causal‑discovery case study on climate time‑series shows that TE estimated via copent uncovers a subtle wind‑to‑ozone causal link missed by competing conditional independence packages (CondIndTests, cdcsis).

The authors compare copent against a suite of existing R packages that implement alternative dependence measures: energy (distance correlation), dHSIC (Hilbert‑Schmidt independence criterion), HHG, indepTest (Hoeffding’s D), and Ball (ball correlation) for unconditional independence; CondIndTests (kernel‑based conditional independence) and cdcsis (conditional distance correlation) for conditional independence. Across benchmark datasets, copent consistently achieves equal or higher statistical power while maintaining low Type I error rates, highlighting the advantage of the copula‑based approach.

In discussion, the paper emphasizes that CE provides a theoretically rigorous bridge between information theory and copula theory, offering a unified framework for both independence and causality. Unlike kernel‑based or distance‑correlation methods, CE’s reliance on rank statistics makes it robust to marginal transformations and applicable to mixed data types without additional preprocessing.

Overall, copent delivers a mathematically sound, computationally efficient, and user‑friendly tool for researchers needing reliable independence testing, feature selection, or causal inference in R. Its open‑source availability on CRAN and GitHub, together with a parallel Python implementation, positions it as a valuable addition to the modern statistical‑machine‑learning toolbox.

Comments & Academic Discussion

Loading comments...

Leave a Comment