Neural Network Applications in Earthquake Prediction (1994-2019): Meta-Analytic Insight on their Limitations

In the last few years, deep learning has solved seemingly intractable problems, raising hopes of finding approximate solutions to problems that are now considered unsolvable. Earthquake prediction, the Grail of Seismology, is, in this context of continuous exciting discoveries, an obvious choice for deep learning exploration. We review the entire literature of artificial neural network (ANN) applications for earthquake prediction (77 articles, 1994-2019) and find two emerging trends: an increasing interest in this domain, and a complexification of ANN models over time, towards deep learning. Despite apparently positive results in this corpus, we demonstrate that simpler models seem to offer similar predictive power, if not better. Due to the structured, tabulated nature of earthquake catalogues, and the limited number of features considered so far, simpler and more transparent machine learning models seem preferable at the present stage of research. Those baseline models follow physical first principles and are consistent with the known empirical laws of Statistical Seismology, which have minimal ability to predict large earthquakes.

💡 Research Summary

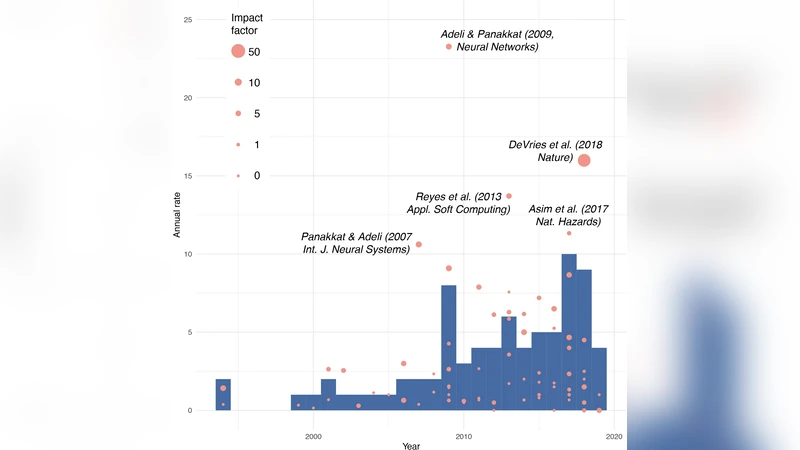

The paper presents a comprehensive meta‑analysis of artificial neural network (ANN) applications to earthquake prediction spanning the years 1994‑2019. The authors collected 77 peer‑reviewed articles, built a publicly available JSON database, and examined publication trends, model architectures, input features, output targets, and performance metrics. Two clear trajectories emerge: a steady increase in the number of studies and a progressive “complexification” of models, moving from shallow multilayer perceptrons (MLPs) and radial‑basis‑function networks toward deep feed‑forward networks (DNNs), long short‑term memory (LSTM) recurrent networks, and convolutional neural networks (CNNs).

Despite the apparent enthusiasm for deep learning, the meta‑analysis reveals that the predictive gains over simpler, physically‑motivated baselines are modest at best. Only 47 % of the surveyed papers compare ANN results with a genuine baseline such as a Poisson null hypothesis or a randomized catalog; of those, merely 22 % use a physically meaningful baseline (e.g., Coulomb stress failure criterion). The majority benchmark against other machine‑learning classifiers that are often less finely tuned than the proposed ANN, making any claimed superiority ambiguous.
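To make the idea of a Poisson null hypothesis concrete, here is a minimal sketch of the reference probability such a baseline provides. It assumes a homogeneous (time-independent) Poisson process whose rate is estimated from the catalogue itself. The function name and the example counts are hypothetical and are not taken from the paper.

```python
import math

def prob_at_least_one(n_events, catalog_years, window_days):
    """Probability of >= 1 target event in a window under a homogeneous Poisson model.

    n_events      : number of target events (e.g. above a magnitude threshold)
                    observed in the catalogue
    catalog_years : catalogue duration in years
    window_days   : length of the forecast window in days
    """
    rate_per_day = n_events / (catalog_years * 365.25)
    return 1.0 - math.exp(-rate_per_day * window_days)

# Hypothetical example: 20 target events in a 50-year catalogue, 30-day window.
p_null = prob_at_least_one(n_events=20, catalog_years=50.0, window_days=30.0)
print(f"Poisson reference probability: {p_null:.4f}")
```

A forecast only demonstrates skill if it does measurably better than this kind of reference, which is why the absence of such a comparison makes reported scores hard to interpret.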

The authors detail the typical input data: structured earthquake catalogues providing time, magnitude, latitude, longitude, and depth, from which statistical descriptors (e.g., Gutenberg‑Richter a‑b parameters, Modified Omori law K‑c‑p parameters) are derived. Occasionally, non‑seismic signals (geo‑electric, ionospheric, radon) are incorporated, but these constitute a small minority. Output variables are usually binary classifications (e.g., “mainshock magnitude ≥ Mₜ?”) or regression estimates of future magnitude. Performance is reported mainly via confusion‑matrix‑derived metrics such as true‑positive rate, true‑negative rate, and the R‑score (TPR + TNR − 1). While many studies report R‑scores > 0, the lack of robust baselines prevents a clear assessment of true predictive skill.
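The R‑score quoted above follows directly from the confusion matrix. The sketch below computes it using the definition given in the text (TPR + TNR − 1). The counts are made up for illustration and do not come from any of the surveyed studies.

```python
def r_score(tp, fn, fp, tn):
    """Return (TPR, TNR, R) from confusion-matrix counts, with R = TPR + TNR - 1."""
    tpr = tp / (tp + fn)  # true-positive rate (fraction of events that were predicted)
    tnr = tn / (tn + fp)  # true-negative rate (fraction of quiet windows left unalarmed)
    return tpr, tnr, tpr + tnr - 1.0

# Hypothetical classifier: 50 event windows, 950 quiet windows.
tpr, tnr, r = r_score(tp=30, fn=20, fp=100, tn=850)

# A trivial "always raise an alarm" predictor catches every event
# but has no true negatives, so its R-score is zero.
_, _, r_always = r_score(tp=50, fn=0, fp=950, tn=0)

print(f"TPR={tpr:.3f} TNR={tnr:.3f} R={r:.3f}; always-alarm R={r_always:.3f}")
```

Because trivial strategies such as always or never issuing an alarm score R = 0, a positive R‑score alone shows only that a model beats those strategies, not that it beats a seismologically informed baseline.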

Two high‑profile deep‑learning case studies are scrutinized. DeVries et al. (2018) employed a six‑layer DNN with roughly 13,000 trainable parameters to predict aftershock spatial patterns using 12 stress‑derived features from global rupture models. Their model achieved an AUC of 0.85, yet a single neuron (a linear classifier) trained on the same data achieves comparable performance, which points to over‑parameterization and raises over‑fitting concerns. Huang et al. (2018) transformed Taiwanese seismicity maps into 256 × 256 pixel images (≈ 65,000 features) and trained a CNN to forecast whether a magnitude ≥ 6 event would occur within 30 days. With fewer than 500 training images, the training set was very small relative to the model’s capacity, raising doubts about generalization.
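The scale mismatch in both case studies can be checked with simple arithmetic. The sketch below counts the weights and biases of a fully connected network. The width of 50 units per hidden layer is an assumption that this summary does not state; it was chosen because it is consistent with the reported parameter count of about 13,000. The helper name is hypothetical.

```python
def dense_param_count(layer_sizes):
    """Number of weights plus biases in a fully connected feed-forward network."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

# 12 input features, six hidden layers (assumed 50 units each), one output unit.
deep_params = dense_param_count([12] + [50] * 6 + [1])

# A single neuron over the same 12 features: 12 weights plus 1 bias.
single_neuron_params = dense_param_count([12, 1])

# Input dimensionality of a 256 x 256 image, to compare with < 500 training images.
pixel_features = 256 * 256

print(deep_params, single_neuron_params, pixel_features)
```

Under these assumptions the deep network carries about a thousand times more free parameters than the single neuron, and the image‑based model sees over a hundred times more input features than it has training examples.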

The paper concludes that, given the structured nature of earthquake catalogs and the limited feature space, simpler statistical or physics‑based models remain preferable at the current stage of research. Complex ANNs do not automatically confer superior predictive power and introduce risks of over‑fitting and loss of interpretability. The authors recommend future work focus on (1) exploiting unstructured data (waveforms, satellite imagery) where deep learning’s strengths are more appropriate, (2) integrating physical constraints into neural architectures (physics‑informed neural networks), and (3) establishing transparent, rigorous baselines to objectively evaluate any claimed improvements. This meta‑analytic perspective cautions the seismological community against uncritical adoption of “black‑box” deep learning and underscores the enduring value of physically grounded, parsimonious models.
