Trialstreamer: Mapping and Browsing Medical Evidence in Real-Time

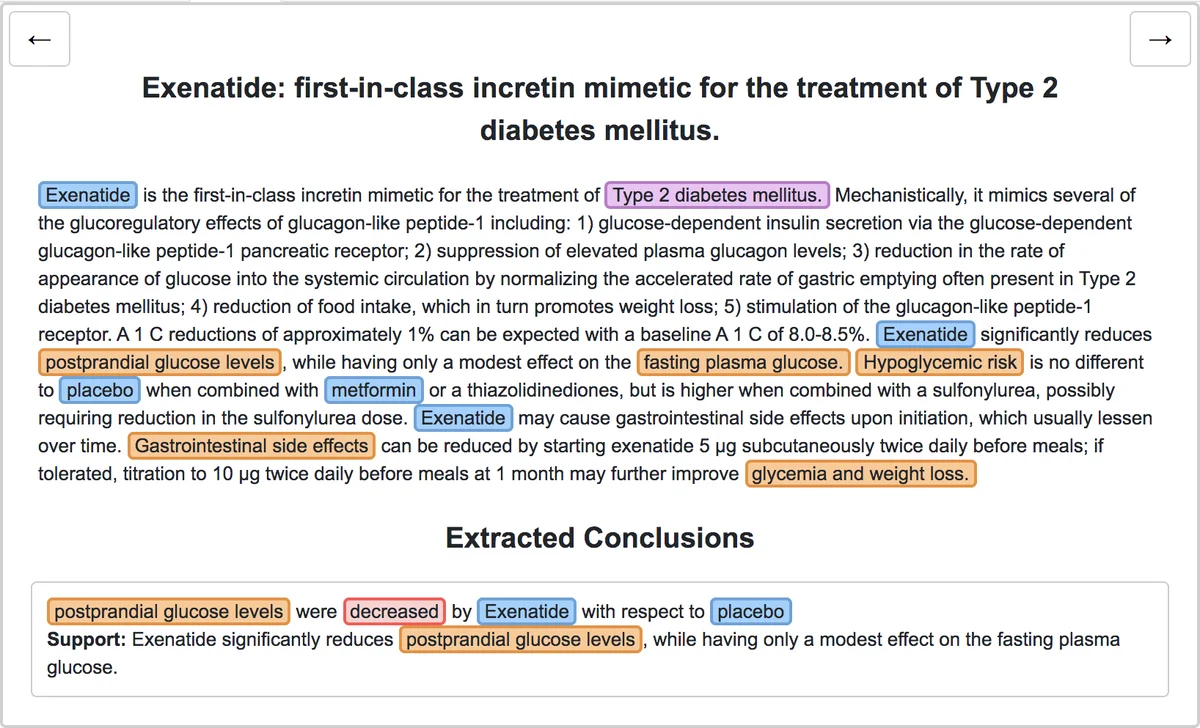

We introduce Trialstreamer, a living database of clinical trial reports. Here we mainly describe the evidence extraction component; this extracts from biomedical abstracts key pieces of information that clinicians need when appraising the literature, and also the relations between these. Specifically, the system extracts descriptions of trial participants, the treatments compared in each arm (the interventions), and which outcomes were measured. The system then attempts to infer which interventions were reported to work best by determining their relationship with identified trial outcome measures. In addition to summarizing individual trials, these extracted data elements allow automatic synthesis of results across many trials on the same topic. We apply the system at scale to all reports of randomized controlled trials indexed in MEDLINE, powering the automatic generation of evidence maps, which provide a global view of the efficacy of different interventions combining data from all relevant clinical trials on a topic. We make all code and models freely available alongside a demonstration of the web interface.

💡 Research Summary

**

The paper presents Trialstreamer, an open‑source, “living” database that continuously ingests randomized controlled trial (RCT) abstracts from MEDLINE and automatically extracts the key elements clinicians need to evaluate evidence. The system focuses on extracting the Population, Intervention, Comparator, and Outcome (PICO) components, identifying the sentences that contain the evidential statements about treatment effects, linking these components into Intervention‑Comparator‑Outcome (ICO) triples, inferring the direction of the reported effect (increase, decrease, or no difference), and normalizing the extracted concepts to MeSH terms for aggregation across studies.

System Architecture

- RCT Identification – Instead of relying on manually applied Publication Type tags, the authors use a previously validated machine‑learning classifier to tag articles as RCTs in real time, ensuring that newly published trials are promptly included.

- Pre‑processing – Abbreviations are expanded with the Ab3P algorithm, which improves downstream PICO tagging accuracy and reduces context length for later models.

- PICO Tagging – Using the EBM‑NLP corpus, a BioBERT‑based BiLSTM‑CRF model jointly predicts spans for Population, Intervention, Comparator, and Outcome. The model achieves high recall (0.87 at the clinical‑entity level) while maintaining reasonable precision, reflecting the design choice to favor recall for downstream filtering.

- Evidence Sentence Classification – Leveraging the Evidence‑Inference corpus, a linear classifier on top of BioBERT identifies sentences that contain the evidential conclusion for a given ICO prompt. The classifier reaches an impressive recall of 0.97 but a modest precision of 0.53, indicating many false positives, often from summary sentences that do not directly correspond to the annotated evidence.

- Relation Extraction & ICO Construction – The authors observe that outcomes are usually explicitly mentioned, whereas interventions and comparators are often implicit. Consequently, they anchor the extraction process on the explicit outcome spans and then predict, for each outcome, whether a candidate treatment is the intervention, comparator, or unrelated. This is done with a linear classifier that ingests the treatment token, the evidence sentence, and a short context (first four sentences). The model yields per‑class F1 scores of 0.89–0.94. After assembling ICO prompts, a final classifier predicts the effect direction; this model relies heavily on the outcome text and evidence sentence, with little contribution from the intervention/comparator tokens, reflecting the regular phrasing of trial conclusions.

- MeSH Normalization – To enable cross‑study aggregation, extracted PICO spans are mapped to MeSH concepts using a dictionary built from UMLS synonyms (following the Metamap Lite approach). When evaluated against the official MeSH annotations for 191 test articles, the system produces on average 14 MeSH terms per article (close to the 14.8 gold standard) but strict exact‑match precision is low (≈0.26), highlighting the difficulty of precise concept mapping in a large ontology.

Evaluation

The authors created a new annotated dataset of 160 abstracts (100 test, 60 development) with exhaustive PICO, evidence, and relation annotations. Macro‑averaged scores for span detection, evidence sentence identification, treatment‑outcome linking, and effect direction prediction are reported, demonstrating that each component performs at a level suitable for large‑scale deployment.

User Interface & Evidence Maps

Trialstreamer’s web interface allows users to query a clinical condition (population) and receive an “evidence map” where interventions are plotted on the vertical axis and outcomes on the horizontal axis. Each cell displays the number of trials, participant counts, and the inferred efficacy direction, with links to the original abstract and the supporting evidence sentence. This visual summary enables clinicians and patients to quickly grasp the landscape of available treatments for a specific outcome.

Strengths

- End‑to‑end pipeline that integrates multiple NLP tasks, each trained on state‑of‑the‑art corpora.

- Use of BioBERT and large‑scale pre‑training on PubMed abstracts, yielding strong performance despite limited labeled data.

- Open‑source release of code, models, and a live demo, facilitating reproducibility and community extension.

- Practical focus on real‑time evidence mapping, addressing a clear need in evidence‑based medicine.

Limitations

- The system operates only on abstracts; full‑text information (tables, detailed statistics, confidence intervals) remains untapped.

- Evidence sentence classifier’s low precision may introduce noisy signals into the ICO construction, potentially biasing the inferred effect direction.

- MeSH normalization suffers from ambiguity and hierarchical granularity, limiting exact cross‑study aggregation.

- Errors in early stages (e.g., RCT classification, abbreviation expansion) can propagate downstream, yet the paper does not quantify cumulative error impact.

Future Directions

The authors suggest extending the pipeline to full‑text articles, incorporating multimodal data (tables, figures), refining evidence sentence detection with context‑aware ranking models, and developing a feedback loop where user corrections improve model performance over time. Additionally, exploring larger transformer architectures (e.g., PubMed‑BERT, Longformer) could enhance handling of longer abstracts and full texts.

In summary, Trialstreamer demonstrates a feasible, scalable approach to turning the ever‑growing body of RCT literature into an accessible, searchable, and visual evidence resource, laying groundwork for more sophisticated, automated systematic review tools.

Comments & Academic Discussion

Loading comments...

Leave a Comment