Holistic Parameteric Reconstruction of Building Models from Point Clouds

Building models are conventionally reconstructed by planar segmentation of building roof points, followed by a topology graph that groups the planes together. Roof edges and vertices are then mathematically represented by intersecting the segmented planes. T…

Authors: Zhixin Li, Wenyuan Zhang, Jie Shan

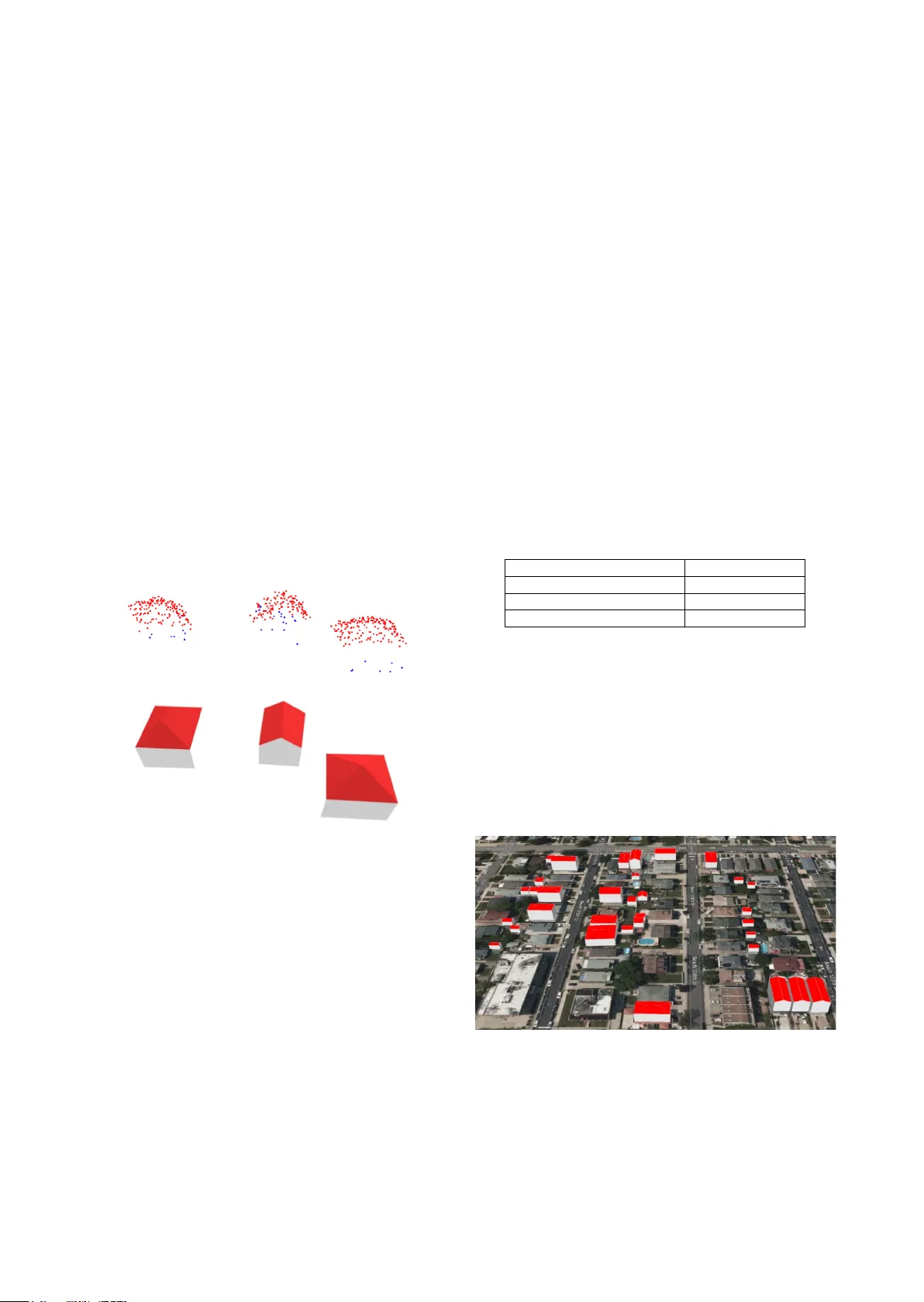

Zhixin Li 1, Wenyuan Zhang 2,1, Jie Shan 1

1 Lyles School of Civil Engineering, Purdue University, Indiana, USA - (li2887, jshan)@purdue.edu
2 National Research Center of Cultural Industries, Central China Normal University, Wuhan, China - zhangwy@mail.ccnu.edu

Commission II, WG II/6

KEY WORDS: Primitives, Building Modelling, Point Cloud, Semantic Segmentation, Deep Neural Network, CityGML

ABSTRACT:

Building models are conventionally reconstructed by planar segmentation of building roof points, followed by a topology graph that groups the planes together. Roof edges and vertices are then mathematically represented by intersecting the segmented planes. Technically, such a solution is based on sequential local fitting, i.e., the entire data of one building do not participate simultaneously in determining the building model. As a consequence, the solution lacks topological integrity and geometric rigor. Fundamentally different from this traditional approach, we propose a holistic parametric reconstruction method, which takes the entire point cloud of one building into consideration simultaneously. In our work, building models are reconstructed from predefined parametric (roof) primitives. We first use a well-designed deep neural network to segment and identify primitives in the given building point clouds. A holistic optimization strategy is then introduced to simultaneously determine the parameters of a segmented primitive. In the last step, the optimal parameters are used to generate a watertight building model in CityGML format. The airborne LiDAR dataset RoofN3D with predefined roof types is used for our test. PointNet++ applied to the entire dataset achieves an accuracy of 83% for primitive classification.
For a subset of 910 buildings in RoofN3D, the holistic approach is then used to determine the parameters of primitives and reconstruct the buildings. The achieved overall quality of reconstruction is 0.08 meters for point-surface-distance, or 0.7 times the RMSE of the input LiDAR points. The study demonstrates the efficiency and capability of the proposed approach and its potential to handle large-scale urban point clouds.

1. INTRODUCTION

Highly accurate and semantically rich three-dimensional (3D) building models have become indispensable for various applications such as urban planning, urban change detection, 3D navigation, emergency response, and heritage conservation (Gao et al., 2018). Over the past few decades, many research efforts have been devoted to 3D building modelling at different levels of detail (LoD) from multi-source remotely sensed data. However, developing a fully automated and efficient building modelling technique with high accuracy and rich semantics remains a challenging task (Jung et al., 2017). Since the emergence of laser scanning and oblique photography technologies, there has been remarkable progress in 3D point cloud generation, processing and applications, which has accelerated research and development in 3D building modelling (Rottensteiner et al., 2014).

Conventionally, building models are reconstructed by separately fitting roof planes in the point clouds and then using a topology graph to group these planes. In this type of approach, roof edges and vertices are mathematically represented by intersecting the segmented planes. Recent research has presented plane-based methods that first segment the point clouds into primitive planes and then intersect them into polygonal meshes in 3D space (Bauchet and Lafarge 2019). Technically, such solutions are based on sequential local fitting, i.e., at any one time only part of the entire data of a building participates in determining the building model.
As a consequence, the solution lacks topological integrity and geometric rigor. Furthermore, fully automating the traditional reconstruction approaches remains challenging due to limited point density, sensor noise, missing data, outliers, and scene complexity (Cao et al., 2017; Macher et al., 2017; Zhu et al., 2017).

More recently, deep learning has gained increasing attention in various applications. For instance, 3D neural networks have been extensively explored for 3D object detection and reconstruction (Zhi et al., 2018), 3D semantic segmentation (Engelmann et al., 2017; Graham et al., 2017; Tchapmi et al., 2017), 3D classification of point clouds (Özdemir et al., 2019; Uy et al., 2019) and 3D object pose estimation (Qi et al., 2019).

Fundamentally different from the traditional approach, we propose a holistic parametric building reconstruction method based on deep neural networks and (3D) primitives. Based on a set of predefined parametric roof primitives in 3D space, we first use a sophisticated deep neural network to segment and identify roof primitives in the building-only point clouds. A holistic optimization strategy is then introduced to simultaneously determine the parameters for a segmented building. Subsequently, a watertight building model that best fits the point cloud can be uniquely reconstructed for 3D modelling and representation. Our contributions can be summarized as follows: 1) we propose an automatic end-to-end building reconstruction pipeline for building point clouds; 2) we present a holistic parametric estimation method; and 3) we introduce an effective and robust solution for semantic building modelling from point clouds.

The rest of the paper is organized as follows. Section 2 describes related work in primitive fitting, point cloud semantic segmentation and reconstruction. Section 3 introduces the methodology for parametric reconstruction (i.e., determining the values of these parameters).
Section 4 tests the proposed algorithm on the RoofN3D dataset (Wichmann et al. 2018) with three predefined primitives. The work exhibits promising results. A quantitative assessment is conducted, followed by a comparative analysis of the results from different roof structures. Section 5 presents the conclusions drawn from the experimental results.

2. RELATED WORKS

2.1 Primitive Fitting

The idea of decomposing a complex model into a set of simple geometric primitives for object recognition originates from the concept of recognition-by-components proposed by Biederman (1987). Recognition of primitive types and primitive fitting are key issues and challenging tasks for point cloud segmentation or shape detection. Schnabel et al. (2007) developed an automatic and efficient random sample consensus (RANSAC) based framework for detecting planes, spheres, cylinders, cones and tori in unorganized point clouds. However, under-segmentation and false detection of primitive types may occur, since a RANSAC-based approach only looks for local cues and its estimation of the primitive parameters is sensitive to noise in the position and normal (vector) of the sample points (Isack and Boykov, 2012). Furthermore, the performance of RANSAC-based methods relies on careful and laborious per-input parameter tuning.

Le and Duan (2017) proposed a primitive-based 3D segmentation framework for mechanical CAD models. A dimension reduction method was employed to transform the detection of 3D primitives into classical 2D problems such as circle and line detection in images. Li and Feng (2019) introduced geometric primitive segmentation with a convolutional neural network (CNN) and proposed a framework for multi-model 3D primitive fitting based on simulated point clouds. It outperformed RANSAC-based methods on noisy range images of cluttered scenes. In contrast to traditional parsing or detecting shapes from point cloud data, Li et al.
(2019) introduced an end-to-end neural network named supervised primitive fitting network (SPFN) to detect multiple primitives of different types with accurate parameters. This approach supports the prediction of planes, spheres, cylinders, and cones at different scales, and does not require any user intervention. Li et al. (2019) presented a primitive-based 3D building modelling approach that synthesizes the training data. PointNet was adopted to classify building primitives, while coherent point drift (CPD) was applied to align the predicted primitives to the target 3D point clouds.

2.2 Semantic Segmentation

Semantic segmentation or classification of point clouds is a well-known problem in computational geometry and computer vision. It has been extensively researched over the past decades. Due to the recent advancements of deep neural networks (DNN) in image semantic segmentation, DNNs have been extensively used or extended for 3D semantic segmentation (Qi et al., 2016). To handle irregular point clouds, 3D DNNs have been further proposed, including PointNet (Qi et al. 2017), PointNet++ (Qi et al. 2017), VoxelNet (Zhou and Tuzel 2018), and VoteNet (Qi et al. 2019). These methods showed remarkable performance in semantic segmentation, classification, and 3D object detection of point clouds. Tchapmi et al. (2017) presented an end-to-end framework for 3D point-level segmentation that combines the advantages of neural networks, trilinear interpolation and fully connected conditional random fields.

2.3 Semantic Modelling

Building models with semantic information, such as CityGML and Industry Foundation Classes (IFC), offer more application potential than traditional geometric models, which has made semantic building modelling a new research topic in recent years. Semantic reconstruction of different building roof types is a crucial task for 3D building modelling.
Most of the previous approaches rely on human intervention beyond the selection of processing parameters, which is tedious, time-consuming, and the most expensive part of the workflow. In order to reduce labour-intensive processes, many efforts have been made in recent years to develop automatic methods. Zheng et al. (2017) presented a hybrid method for automatic reconstruction of complex building roof structures, in which a data-driven approach was used to detect step edges. Roof types were determined by using LiDAR and high-resolution orthophotos. Finally, plane fitting was employed to reconstruct parametric models and generate semantic building models at LoD2. Jayaraj and Ramiya (2018) used commercial software and open source packages to generate 3D building models in CityGML format from airborne LiDAR point clouds. However, most of these approaches rely on multi-source data. Automation and accurate reconstruction still pose great challenges to the existing algorithms.

3. METHODOLOGY

As shown in Figure 1, our workflow consists of the following three conceptual steps. The input LiDAR point clouds are first semantically segmented by PointNet++. Then an optimization method with fixed initial values and a predefined cost function, consisting of the point-surface-distance (PSD) and the 2D Intersection over Union (IoU), is used to determine the parameters for each individual building with a specific primitive type. Finally, semantic building models in CityGML format are reconstructed based on these estimated primitive parameters.

Figure 1. Workflow of parametric reconstruction of building models.

3.1 Learning-based Point Cloud Segmentation

Identifying the roof type of a point cloud is the basis of parametric reconstruction of a building. To recognize the various roof types of point clouds, we use a learning-based method to automatically segment input point clouds into different parts with proper roof type labels.
To achieve this goal, the hierarchical deep network PointNet++ (Qi et al. 2017) is integrated into our pipeline. This high-performance network has four set abstraction (SA) layers for subsampling the input point clouds and two feature propagation (FP) layers for up-sampling intermediate point features. The output of this step is segmented building points with identified roof types.

A library of basic primitives is first defined. At this time, the library consists of several roof types defined in the semantic-rich OGC CityGML standard (Gröger et al. 2012), including flat roof, gable roof, hip roof, half-hip roof, shed roof, mono pitch roof, pyramid roof, and mansard roof. These geometric-topological primitives are represented in a local coordinate system by several parameters, such as width, length, height, etc. Additionally, all primitives are placed as axis-aligned elements. Figure 2 shows the three standard primitives (pyramid, gable and hip) considered in this paper.

Figure 2. Roof primitive samples. From left to right: pyramid, gable, and hip primitives

3.2 Parameter Estimation

After the DNN has labelled the roof type of a point cloud, we estimate the corresponding primitive parameters that best fit the given building point cloud. In this stage, parameters are separated into three groups: global translation parameters ($T_g$), primitive parameters ($\theta_p$) and local rigid transformation parameters ($\theta_l$), where $\theta_p$ and $\theta_l$ are to be optimized. For convenience and efficiency of computation, we first translate the building points from the world coordinate system to a local coordinate system whose origin is at the centroid of the building points; this translation gives the global translation $T_g$. Next, we establish the relation between the roof primitive parameters and its vertices.
This relation can be described as a function $S = F(\theta_p)$, where $S$ represents the roof surfaces, obtained by calculating the vertices of each roof surface and determining their connectivity. Taking the gable primitive as an example, its six (6) vertices can be expressed as $V = \{ v_i = (x_i, y_i, z_i) \mid i = 1, \dots, 6 \}$, as labelled in Figure 3. The two roof planes $S_1$ and $S_2$ are each constructed from four of these vertices, as shown in Figure 3. To start the optimization, $\theta_p$ is initially estimated by using the minimum bounding box of the input point clouds and the identified primitive type.

Figure 3. Coordinates for vertices of a gable roof

Then we use $\theta_l$, which comprises a local rigid transform matrix based on the Euler rotation angle around the Z axis and the local translation parameters, to transform the primitive surfaces into the correct position during optimization. We use only a single rotation angle around the Z axis because most buildings stand "perpendicular" to the ground and therefore have only one rotational degree of freedom. As such, for surface $S_j$, its centroid $\bar{x}_j$ and its centralized vertices $\tilde{V}_j$ can be determined through the roof primitive function $F$ and $\theta_l$. Then the coefficient set $c_j$ of the plane through $S_j$ can be solved through singular value decomposition (SVD) of $\tilde{V}_j$ as follows

$$\tilde{V}_j = U \Sigma W^{T}, \tag{1}$$

$$W = [\, w_1 \;\; w_2 \;\; w_3 \,] \tag{2}$$

$$n_j = W(:, 3) \tag{3}$$

$$d_j = -\, n_j^{T} \bar{x}_j \tag{4}$$

$$c_j = [\, n_j^{T}, \; d_j \,] \tag{5}$$

where $n_j$ is the normal of $S_j$, given by the third column of $W$. Finally, we optimize the primitive parameters, local rotation and translation by minimizing the overall cost function with respect to the PSD and IoU, shown below

$$L = \bar{d} + \lambda \, e_{IoU} \tag{6}$$

where

$$\bar{d} = \frac{1}{N} \sum_{i=1}^{N} \min \{\, D(p_i, S_j) \mid j = 1, 2, \dots, m \,\} \tag{7}$$

$$e_{IoU} = 1 - \frac{A_{\cap}(B_p, B_i)}{A_{\cup}(B_p, B_i)} \tag{8}$$

In Equation 6, $L$ consists of $\bar{d}$ (mean PSD), $e_{IoU}$ (0~1, relative missing area represented by IoU) and $\lambda$ (coefficient to balance $\bar{d}$ and $e_{IoU}$). $\lambda$ is an empirical coefficient based on the raw LiDAR RMSE (~0.1 meter), which brings $\bar{d}$ and $\lambda \, e_{IoU}$ (~0.01) to the same scale. In Equation 7, $D$ is a function that calculates the distance from a point to a plane, $p_i$ is a point in the input points, $m$ is the number of surfaces in the primitive and $N$ is the total number of points.
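The plane fitting by SVD and the mean point-surface-distance described above can be illustrated with a short sketch. This is a minimal example under stated assumptions, not the authors' implementation: the function names `fit_plane` and `mean_psd`, the gable dimensions, and the vertex layout are hypothetical, and the distance is measured to the infinite plane through each roof face rather than to the bounded face.

```python
import numpy as np

def fit_plane(vertices):
    """Return (unit normal n, offset d) of the plane n.x + d = 0 through the vertices."""
    vertices = np.asarray(vertices, dtype=float)
    centroid = vertices.mean(axis=0)
    # Rows of vt are right singular vectors; the last one belongs to the
    # smallest singular value and is the plane normal.
    _, _, vt = np.linalg.svd(vertices - centroid)
    normal = vt[-1]
    return normal, -normal @ centroid

def mean_psd(points, planes):
    """Mean over all points of the distance to the nearest plane."""
    points = np.asarray(points, dtype=float)
    # dist[i, j] = |n_j . p_i + d_j|
    dist = np.stack([np.abs(points @ n + d) for n, d in planes], axis=1)
    return dist.min(axis=1).mean()

# Hypothetical gable roof: 10 m wide, 20 m long, ridge 3 m above the eaves.
roof_1 = [(-5, -10, 0), (-5, 10, 0), (0, 10, 3), (0, -10, 3)]
roof_2 = [(5, -10, 0), (5, 10, 0), (0, 10, 3), (0, -10, 3)]
planes = [fit_plane(roof_1), fit_plane(roof_2)]

# Two points that lie exactly on the two roof faces.
on_roof = [(-2.5, 0.0, 1.5), (2.5, 0.0, 1.5)]
print(mean_psd(on_roof, planes))
```

Because the sign of the singular vector is arbitrary, the sketch takes the absolute value of the signed distance. In an optimization loop, the planes would be refitted from the primitive vertices each time the parameters change.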
In Equation 8, $B_p$ is the projected horizontal boundary of a roof primitive and $B_i$ is the 2D-shape boundary of the input points; the IoU serves as a necessary boundary constraint for primitive parameter optimization. Once the cost function is formed, the L-BFGS-B method (Zhu et al., 1997) is used to determine $\theta_p$ and $\theta_l$. During optimization, $\theta_p$ and $\theta_l$ are updated, and hence Equations 1-8 are recalculated to form $L$. After minimization of the cost function, the roof parameters $\theta_p$ and $\theta_l$, along with the building primitive type and global translation $T_g$, are grouped in a JSON file for the next step.

3.3 Parametric Reconstruction

Once we have the estimated parameters for each building, we move to the last stage of building reconstruction. First of all, we calculate the world coordinates of the roof vertices by using the global translation parameters and the optimal local primitive parameters, as well as the labelled roof types. Taking the reconstruction of a gable roof as an example, the world coordinates of the roof vertices are inferred by rotating and translating the primitive to the correct pose and position. The whole rigid transformation of the vertices is expressed as follows

$$V_w = R \, V_l + T_l + T_g \tag{9}$$

where $V_l$ is the local coordinates of the vertices with respect to the estimated primitive parameters $\theta_p$, $R$ is the rotation matrix with respect to the optimal angle in the local parameters $\theta_l$, $T_l$ represents the optimal values of the local translation, and $T_g$ is the given global translation.

After we get the accurate vertex coordinates of the roof structure, the different semantic surfaces defined in the CityGML LoD2 building model are sequentially reconstructed by using these vertices and the underlying topology. Furthermore, all surfaces are generated with boundary representation (BRep). For instance, each of the two roof surfaces in Figure 3 is represented by a closed ring of five vertex entries, in which the first vertex is repeated at the end, to form an oriented and closed planar surface.
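The rigid transformation of Equation 9 (rotate about the Z axis, then add the local and global translations) can be sketched as follows. This is only an illustration: the function name `local_to_world` and all numeric values are hypothetical, and vertices are stored as rows, so the rotation is applied as a right-multiplication by the transposed matrix.

```python
import numpy as np

def local_to_world(v_local, angle, t_local, t_global):
    """Rotate local vertices about the Z axis, then add local and global translations."""
    c, s = np.cos(angle), np.sin(angle)
    rot_z = np.array([[c, -s, 0.0],
                      [s, c, 0.0],
                      [0.0, 0.0, 1.0]])
    v_local = np.asarray(v_local, dtype=float)
    # Row-vector form of R v: each row v becomes v @ R^T.
    return v_local @ rot_z.T + np.asarray(t_local) + np.asarray(t_global)

# Hypothetical ridge vertex in local coordinates, rotated by 90 degrees.
ridge_world = local_to_world(
    [[1.0, 0.0, 2.0]],
    angle=np.pi / 2,
    t_local=[0.5, 0.0, 0.0],
    t_global=[100.0, 200.0, 10.0],
)
print(ridge_world)
```

Keeping the global translation as a separate term mirrors the text: the optimization works in centroid-centered local coordinates, and the large world coordinates are only added back at reconstruction time.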
The ground elevation with respect to each roof vertex is calculated by combining the digital elevation model (DEM) and its footprint coordinates on the ground. The minimum value of all the calculated ground elevations is taken as the base elevation $z_0$ for a building. Thus, the four vertices of the horizontal ground are $G = \{ (x(v_i), y(v_i), z_0) \mid i = 1, 2, 5, 6 \}$, and the ground planar surface is created and represented by a closed ring of these four vertices. Four façades are subsequently modelled from the roof vertices and ground vertices lying in the same vertical plane, each again represented as a closed ring of vertices. To achieve the desired effect in 3D visualization, all the vertex coordinates of a surface are specified in counter-clockwise order and used to form the corresponding GML geometry element. In this way, all exterior boundaries of a building are generated as piecewise planar surfaces with semantic information, such as RoofSurface, WallSurface, and GroundSurface.

After being hierarchically organized into different tree elements according to the CityGML building schema and encoding standard, these semantic surfaces finally form a watertight LoD2 building model within a building element. To represent the volume of the building geometry, a closed LoD2 solid geometry (lod2Solid) is also created within this building element by referencing the generated boundary surfaces. Buildings with a hip or pyramid roof can be reconstructed in a similar way to a gable roof. The semantic building models with three different roof structures are shown in Figure 4. Figure 4 (a) shows the three input point clouds; red points are roof points, while blue ones are ground or wall points. Figure 4 (b) illustrates the reconstructed CityGML building models.

(a) Segmented point clouds (red for roof, blue for others) (b) Reconstruction results

Figure 4.
Reconstructed CityGML models with different roof primitives (hip, gable, pyramid)

In order to integrate building models with terrain, the Terrain Intersection Curve (TIC) defined in CityGML is also considered during the modelling. Thus, a lod2MultiCurve geometry is generated and applied to each building by connecting all of the calculated ground points with their elevation. In addition to the geometric representation of a building, some significant attributes related to a building are created and appended to the building element as well, such as the bounding box (envelope), the name of the spatial reference system (srsName), and the measured height of the building (measuredHeight). Ultimately, a coherent geometric-semantic CityGML LoD2 building model is produced by writing these elements to a GML file.

4. EXPERIMENTS

4.1 Dataset

Our testing data is the recently released building dataset RoofN3D. It is a 3D building point cloud dataset for training 3D DNNs and for building reconstruction. It covers a large area in New York City (NYC) and includes rich semantic information. Provided by the U.S. Geological Survey (USGS), the point clouds were collected by airborne LiDAR from 08/2013 to 04/2014 and cover an area of 1,009.66 sq. km. The average density of the point clouds is about 4.72 points/m². The spatial reference of the point clouds is NAD83(2011) / UTM zone 18N, with units in meters. The whole dataset consists of 118,074 single buildings and is pre-labelled with three building types, i.e., pyramid, gable, and hip. As shown in our workflow, for the first (DNN) step, we used 80% (94,459) of the buildings as the training dataset and the remaining 20% (23,615) as the testing dataset. For the second (estimation) step, we use a subset of 910 single buildings (including 81 pyramids, 630 gables, and 199 hips) located in a residential area of Queens, NYC, to evaluate the estimator performance.
Additionally, the corresponding DEM with sub-meter resolution is included to reconstruct the bottom surface of each building.

4.2 Results

Table 1 shows the performance of segmentation and primitive type classification on the whole RoofN3D dataset with PointNet++. As shown in Table 1, we achieve an overall semantic segmentation IoU of 47.85% and a roof type classification accuracy of 83.02% on the testing dataset. The classification accuracy demonstrates that most roof structures of the building points can be recognized correctly by the DNN model.

| Metric | Value |
| --- | --- |
| # Testing buildings | 23,615 |
| # Roof types | 3 |
| Semantic segmentation IoU | 47.85% |
| Classification accuracy | 83.02% |

Table 1. Segmentation and classification result of the RoofN3D dataset from PointNet++

A subset of the RoofN3D dataset is then selected to test the quality of building modelling. It consists of 910 buildings with three different roof types. 910 CityGML LoD2 building models are automatically generated by using the proposed parametric reconstruction approach. Part of the reconstructed result is shown in Figure 5. All the roof surfaces are rendered in red. As we can see, most models coincide with the building footprints, which indicates that these models are reconstructed in the right geographic positions and with the right orientation.

Figure 5. A sample area of the reconstructed CityGML building models in NYC

4.3 Quality of the reconstructed models

The quality of the reconstruction result is assessed in two ways. First, the mean and standard deviation of the PSD from the point cloud to the corresponding reconstructed roof are calculated to validate the geometric accuracy of the roof. Second, the 2D IoU between the bottom surface of the reconstructed building model and the corresponding ground truth building footprint is adopted to evaluate the horizontal accuracy.

(a) mean PSD (b) IoU

Figure 6.
Error distribution of the reconstructed 910 buildings

The distributions of the PSD and IoU metrics are shown in Figure 6, while the statistical results for each roof type are summarized in Table 2.

| | Pyramid | Gable | Hip | Overall |
| --- | --- | --- | --- | --- |
| # Buildings | 81 | 630 | 199 | 910 |
| Mean PSD (m) | 0.0630 | 0.0809 | 0.0863 | 0.0805 |
| Std. of PSD (m) | 0.0503 | 0.0692 | 0.0701 | 0.0677 |
| Mean IoU (%) | 90.12 | 88.66 | 95.17 | 90.22 |

Table 2. Quality evaluation of reconstructed models in terms of primitive types

On the one hand, the distance between the reconstructed roof surfaces and their point clouds is smaller than 0.1 m in most cases. The overall PSD is 0.0805 m, which mostly indicates the shape (geometry) accuracy of the reconstructed building models. On the other hand, the 2D IoUs of most reconstructed models are higher than 80%, and the overall IoU is 90.22%, which mostly demonstrates the accuracy of the building boundary. Among the three roof types, the reconstruction of hip roofs achieves the best boundary accuracy, while the pyramid roofs achieve a better shape accuracy than the others. The difficulty of finding optimal parameters in the search space grows exponentially with the number of parameters. With two (2) and one (1) additional parameters to be optimized, respectively, hip and gable roofs achieve a slightly poorer shape accuracy than the pyramid. Furthermore, there are quite a few asymmetric gable buildings in the test dataset, which may cause their slightly lower boundary accuracy compared to the pyramid and hip buildings.

4.4 Stability of the Approach

To test the stability of our optimization approach, we implement a pipeline that generates simulated points with random errors and estimates the corresponding parameters. We start this pipeline with a set of parameters estimated from building roof points in the RoofN3D dataset. We select 20 buildings for each building type and generate 30 trials of independent and identically distributed random points for each building.
| | Pyramid | Gable | Hip | Overall |
| --- | --- | --- | --- | --- |
| # Buildings | 20 | 20 | 20 | 60 |
| Dimension (w, l, h, …) RMSE (m) | 0.120 | 0.217 | 0.462 | 0.266 |
| Dimension RMSE (%) | 4.37 | 5.55 | 5.90 | 5.273 |
| Dimension STD (m) | 0.071 | 0.136 | 0.297 | 0.168 |
| Translation RMSE (m) | 0.020 | 0.046 | 0.030 | 0.032 |
| Translation STD (m) | 0.018 | 0.042 | 0.030 | 0.030 |

Table 3. Performance of the optimization algorithm with simulated points

Based on the RoofN3D metadata, we add random noise with an RMSE of 0.12 meters to the simulated points. The noise is distributed as follows: 90% within 0~1 RMSE, 9% within 1~2 RMSE and 1% within 2~3 RMSE, which is similar to the real data. The optimization algorithm is then used to estimate the parameters from the simulated points. The results are then compared with the "true" parameters. Table 3 summarizes the root mean square error, its percentage relative to the roof dimension, and the standard deviation of the differences between the estimated parameters and the "true" parameters.

4.5 Discussions

A careful check of each building model and its quantitative evaluation indexes reveals several less accurate results with either a higher mean PSD or a lower IoU. For instance, a specific building labelled with a gable roof got a high mean PSD (mean PSD = 0.0687 m) and a low IoU (IoU = 69.73%). The comparison of the reconstructed roof and its reference boundary model is shown in Figure 7. It shows that the distribution of the input point cloud is not symmetric, whereas the predefined gable primitive is a fully symmetric structure. As such, the result of primitive fitting is erroneous in this case.

Figure 7. Primitive-fitted roof surface (left) and reference boundary model from RoofN3D (right).

In contrast, building roofs may be occluded by small surrounding trees or other objects, which leads to an incomplete point cloud. Under such circumstances, the proposed method is still able to reconstruct a complete and symmetric roof surface.
The solution is robust to incomplete point cloud data, a superior property over traditional data-driven approaches (e.g. RANSAC, region growing). In addition, Figure 8 compares the results of RANSAC plane detection and the proposed holistic approach. Figure 8 (left) indicates two undetected planes (red boxes), even though there are substantial sampling points on the hip roof. The primitive-based holistic fitting, however, leads to the correct roof structure.

Figure 8. Comparison between RANSAC plane detection (left), reconstructed roof (middle) and ground truth (right).

Although the RoofN3D dataset provides building boundary models, the ground height of all these models is 0, so they cannot be used as ground truth for building height. Thus, the vertical accuracy with respect to ground elevation is difficult to evaluate by using the RMSE between the reconstructed models and the ground truth. Furthermore, RoofN3D only offers three categories of roof structure. As such, our assessment was conducted only on a limited set of roof structures. However, the proposed parametric reconstruction algorithm is flexible and can be easily extended to model buildings with complex roof structures. This is mostly because of the number of typical primitives defined in the library and the deep learning based semantic segmentation of point clouds. Furthermore, since most complex buildings can be recognized and decomposed into several simple primitives by the DNN model, each segmented building part can be reconstructed separately through this optimization process for primitive fitting. In the end, all building parts can be composed into the complete model with topological and semantic rules.

5. CONCLUSION

This paper presented a novel holistic parametric reconstruction method. All points of a building are taken into account simultaneously to determine the building parameters and its symmetry.
To best fit a point cloud with the corresponding roof primitive, both the roof surfaces and their projected horizontal boundary are considered through a carefully designed objective function. The point-surface-distance helps define the shape of the roof primitives, while the projected horizontal boundary is used to form a necessary boundary condition. Experiments demonstrated that this can overcome certain limitations of previous approaches based on sequential fitting of single or simple planes. The primitive-based reconstruction results have several advantages such as symmetry, regularization, and compact representation. Moreover, the new development is robust to noise, outliers, and missing data. Experimental results with over 900 buildings showed that the proposed method can accurately and effectively generate semantic building models with several different roof structures. The approach is stable for the three tested types of buildings, with a 5.27% dimension difference, and can achieve a 0.08 meter point-to-surface distance, or 0.7 times the RMSE of the input LiDAR points. Furthermore, the entire reconstruction process is fully automatic and is implemented in Python. As such, it can be used to model large-scale scenes with highly dense urban areas.

The generated models are represented according to the CityGML building encoding, which offers the advantages of spatio-semantic coherence, geometrical-topological coherence, as well as level of detail. These semantic models can be applied to a wide range of fields. However, the reconstruction quality is limited by the accuracy of the deep learning based point cloud segmentation, since the rooftop semantic segmentation results are an important input for the subsequent parametric estimation and building reconstruction. Additionally, the reconstruction quality with respect to other roof categories and complicated roof structures needs to be further assessed by exploring more point cloud data with ground truth.
Future work could explore more effective DNN and optimization techniques, and extend this work to the reconstruction of compound buildings.

REFERENCES

Bauchet, J. P., Lafarge, F., 2019. City reconstruction from airborne LiDAR: A computational geometry approach. ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences, 4(4/W8), 19–26. https://doi.org/10.5194/isprs-annals-IV-4-W8-19-2019

Biederman, I., 1987. Recognition-by-components: A theory of human image understanding. Psychological Review, 94(2), 115–147. https://doi.org/10.1037/0033-295X.94.2.115

Cao, R., Zhang, Y., Liu, X., Zhao, Z., 2017. 3D building roof reconstruction from airborne LiDAR point clouds: A framework based on a spatial database. International Journal of Geographical Information Science, 31(7), 1359–1380. https://doi.org/10.1080/13658816.2017.1301456

Engelmann, F., Kontogianni, T., Hermans, A., Leibe, B., 2017. Exploring Spatial Context for 3D Semantic Segmentation of Point Clouds. Proceedings - 2017 IEEE International Conference on Computer Vision Workshops, ICCVW 2017, 2018-Janua, 716–724. https://doi.org/10.1109/ICCVW.2017.90

Gao, X., Shen, S., Zhou, Y., Cui, H., Zhu, L., Hu, Z., 2018. Ancient Chinese architecture 3D preservation by merging ground and aerial point clouds. ISPRS Journal of Photogrammetry and Remote Sensing, 143, 72–84. https://doi.org/10.1016/j.isprsjprs.2018.04.023

Graham, B., Engelcke, M., Maaten, L. V. D., 2017. 3D Semantic Segmentation with Submanifold Sparse Convolutional Networks. CVPR2017, arXiv: 17, 1–11. https://github.com/facebookresearch/

Gröger, G., Kolbe, T. H., Nagel, C., Häfele, K.-H., 2012. Open Geospatial Consortium OGC City Geography Markup Language (CityGML) Encoding Standard, Version 2.0.0. Open Geospatial Consortium. http://www.opengeospatial.org/legal/

Isack, H., Boykov, Y., 2012. Energy-Based Geometric Multi-model Fitting.
International Journal of Computer Vision , 97(2), 123–147. https://doi.org/10.1007/s11263-011-0474-7 Jayara j, P., Ramiy a, A. M. , 2018. 3D CityGML building modelling from lidar point cloud data. International Arch ives of the Photogrammetry, Remote Sensing and Spatial Information Sciences , 42(5), 175 –180. https://doi.org/10.5194/isprs-archives- XLII -5-175-2018 Jung, J., Jw a, Y., Soh n, G. , 2017. Implicit regularization for reconstructing 3D building rooftop models using airborne LiDAR data. Sensors , 17(3). https://doi.org/10.3390/s17030621 Le, T., Du an, Y. , 2017. A prim itive - based 3D seg mentation algorithm for mechanical CAD models. Computer Aided Geometric Desig n , 52 – 53, 231 –246. https://doi.org/10.1016/j.cagd.2017.02.009 Li, D., Feng , C. , 2019. Primitive fitting using deep geometric segmentation. Proceedings of the 36th International Symposium on Automation and Robotics in Construction, ISA RC 2019, 78 0– 787. https://doi.org/10.22260/isarc2019/0105 Li, L., Sung, M., Dubrovina, A., Yi, L ., Guibas, L. J. , 2019. Supervised Fitting of Geometric Primitives to 3 D Point Clouds. 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2647 –2655. https://doi.org/10.1109/CVPR.2019.00276 Li, X., Lin, Y ., Miller , J., Cheon, A ., Dixon, W . , 2019. Primitive - Based 3d Building Modeling, Sensor Simulation, and Estimation. IGARSS 2019 - 2019 IEEE International Geoscience and Remote Sensing Symposium, 5148 –5151. Macher, H., Landes, T., G russenme yer, P. , 2017. From point clouds to building information models: 3D semi - autom atic reconstruction of indoors of existing buildings. App lied Scienc es (Switzerland) , 7(10), 1 – 30. https://doi.org/10.3390/app7101030 Özdemir, E., Remondino, F., G olkar, A. , 2019. A erial Point Cloud Classification with Deep Learning and Machine Le arning Algorithms. International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences , XLII - 4/W18(October), 843 –849. 
https://doi.org/10.5194/isprs- archives - xlii -4- w18 -843-2019 Qi, C. R., L itany, O., H e, K., Guib as, L. J. , 2019. Deep Hough Voting for 3D Object Detection in Point C louds. Qi, C. R., Su, H., M o , K., Guibas, L. J. , 2017. PointNet: Deep learning on point sets for 3D classification and segmentation. 30th IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2017, 77 –85. https://doi.org/10.1109/CVPR.2017.16 Qi, C. R., Su, H., Niessn er, M., Dai, A., Yan, M., Gu ibas, L. J. , 2016. Volumetric and Multi - View CNNs for Object Classification on 3D Data. ArXiv:1604.03265 [Cs] . Qi, C. R., Y i, L., Su , H., Guibas , L. J. , 2017. PointNet++: Deep Hierarchic al Feature Learn ing on P oint Sets in a Metric Spa ce. NeurIPS 2017, Nips. Rottensteiner, F., Sohn, G., Gerke, M ., Wegner, J. D., Breitkopf, U., Jung, J. , 2014. Re sults o f the ISPRS benchmark on urban object detection and 3D building reconstruction. ISPRS Journal of Photogrammetry and Remote Sensing , 93, 256 –271 . https://doi.org/10.1016/j.isprsjprs.2013.10.004 Schnabel, R ., Wahl, R., & Kl ein, R. , 2007. Efficient RANSAC for point - cloud shape detection. Computer Graphics Forum , 26(2), 214 –226. https://doi.org/10.1111/j.1467- 8659.2007.01016.x Tchap mi, L . P., Ch oy, C. B., Armeni, I., Gwak, J ., Savarese , S. , 2017. SEGCloud: Semantic Segmentation of 3D Point Clouds. International Conference on 3D Vision (3DV). https://doi.org/10.1109/3DV.2017.00067 Uy, M. A., Ph am, Q. - H., Hua , B. - S., Nguyen , D. T., Yeung, S. - K. , 2019. Revisiting Point Cloud Classification: A New Benchmark Dataset and Classification Model on Real -W orld Data. ICCV2019. Wichmann, A., Agoub, A., & Kada, M. , 2018. RoofN3D: Deep learning training data for 3D building reconstruction. International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences , 42(2), 1191 –1198. https://doi.org/10.5194/isprs-archives- XLII -2-1191-2018 Zheng, Y., W eng, Q., Zheng, Y . , 2017. 
A Hybrid Approach for Three- Dimensional Building Reconstruction in Indianapolis from LiDAR Data. Remote S ensing , 9(4), 310. https://doi.org/10.3390/rs9040310 Zhi, S., Liu, Y., Li , X., Guo, Y. , 2018. Toward real - time 3D object recognition: A lightweight volumetric CNN framework using multitask learning. Comput ers and Graphics (Pergamon) , 71, 199 –207. https://doi.org/10.1016/j.cag.2017.10.007 Zhou, Y., Tuzel, O. , 2018. VoxelNet: End - to - End Learning for Point Cloud Based 3D Object Detection. 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition , CVPR 2018, 4490 Zhu, C., R. H. Byr d , J. Nocedal. , 1997 . L- BFGS - B: Algorithm 778: L - BFGS - B, FORTRAN routines for large scale bound constrained optimization , ACM Transaction s on Mathematic al Software , 23, 4, pp. 550 - 560. https://doi.org/10.1145/279232.279236 Zhu, Q., Li, Y ., Hu, H., W u, B. , 2017. Robust point cloud classification based on multi - level s emantic relationships for urban scenes . ISPRS Journal of Photogrammetry and Remote Sensing , 129, 86 –102. https://doi.org/10.1016/j.isprsjprs.2017.04.022
