Exponentially improved detection and correction of errors in experimental systems using neural networks

We introduce the use of two machine learning algorithms to create an empirical model of an experimental apparatus, which reduces the number of measurements necessary for generic optimisation tasks exponentially compared to unbiased systematic optimisation. Principal Component Analysis (PCA) can be used to reduce the degrees of freedom in cases for which a rudimentary model describing the data exists. We further demonstrate the use of an Artificial Neural Network (ANN) for tasks where a model is not known. This makes the presented method applicable to a broad range of optimisation tasks covering multiple fields of experimental physics. We demonstrate both algorithms using the example of detecting and compensating stray electric fields in an ion trap, and achieve successful compensation with an exponentially reduced amount of data.

💡 Research Summary

This paper presents a novel machine learning-based methodology to dramatically enhance the efficiency of optimizing experimental systems by detecting and compensating for external disturbances. The core idea is to employ machine learning algorithms to create an empirical model of the experimental apparatus itself, thereby reducing the number of required measurements exponentially compared to traditional unbiased systematic searches.

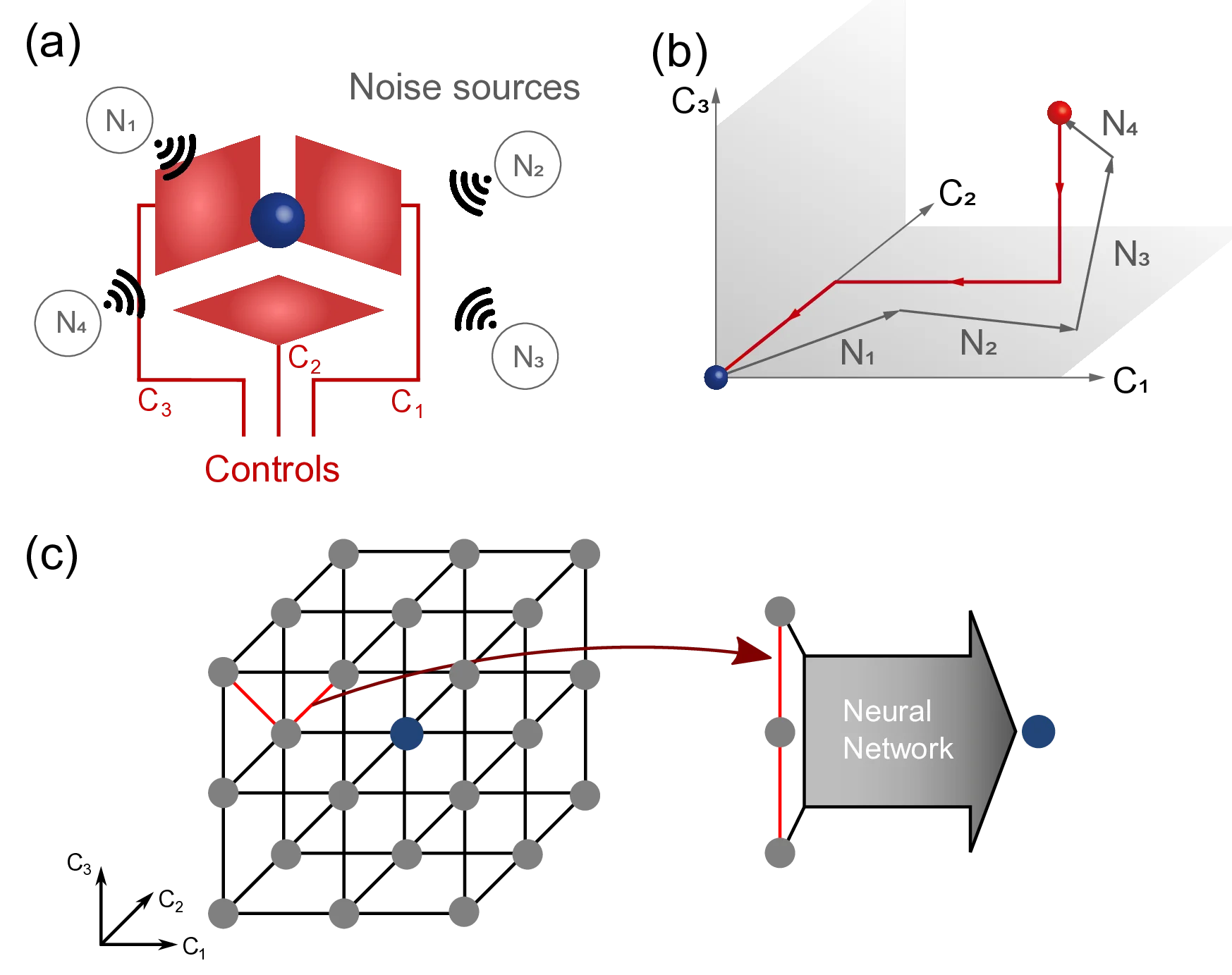

The authors address a common challenge in experimental physics: compensating for external noise sources (e.g., stray electromagnetic fields, thermal drifts) that drive a system away from its optimal operating point. Comprehensive simulation is often impossible, and systematically scanning a high-dimensional parameter space requires a prohibitive number of measurements (scaling as n^k for n sample points along each of k dimensions).

To overcome this, the paper proposes sampling the k-dimensional control parameter space along line segments and feeding this data into machine learning models. These models learn to predict the optimal compensation point that nullifies the external noise. The goal is to reduce the measurement scaling from n^k to a·n·k, where the coefficient ‘a’ represents the improvement factor.
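The difference between the two scalings can be made concrete with a short calculation. The sketch below assumes the reduced scaling is read as the product of a, n, and k; the function names and the values n = 10 and a = 1 are illustrative choices, not figures taken from the paper.

```python
def grid_scan_count(n: int, k: int) -> int:
    """Measurements for a full grid scan: n points along each of k dimensions."""
    return n ** k


def line_scan_count(n: int, k: int, a: float = 1.0) -> float:
    """Measurements for line-segment sampling, assuming a linear a * n * k scaling."""
    return a * n * k


# Illustrative comparison for n = 10 points per dimension (assumed value).
n = 10
for k in range(1, 5):
    print(f"k={k}: grid={grid_scan_count(n, k)}, lines={line_scan_count(n, k)}")
```

The grid count grows exponentially with the number of dimensions k, while the line-segment count grows only linearly, which is the sense in which the reduction is exponential.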

Two distinct machine learning algorithms are demonstrated:

- Principal Component Analysis (PCA): This unsupervised algorithm is used when a rudimentary linear model of the data exists. In the example of compensating stray electric fields in a radiofrequency ion trap, a simple model posits that the points of minimal ion motion for each of three probe laser beams lie on distinct planes in voltage space. PCA is applied to data from these planes. Using just one data point per beam (3 in total), PCA achieved a compensation accuracy of 42 V/m, outperforming the plane-intersection model, which required 24 measurements for a similar accuracy. PCA succeeds by identifying the principal component (capturing 89% of the variance) that reveals hidden linear correlations between parameters.

- Artificial Neural Network (ANN): This supervised learning approach eliminates the need for any prior physical model. A fully-connected ANN was trained on a relatively small dataset from seven full compensation runs (137 data points total). An ANN with 9 input neurons (representing three points in parameter space, one per beam) predicted the compensation point with an accuracy of 34 V/m, rivaling PCA. Even more impressively, an ANN with only 4 input neurons (encoding one data point and the beam identifier) could predict the compensation using just a single measurement, albeit with slightly reduced accuracy. This demonstrates the ANN’s ability to uncover complex, potentially non-linear correlations with minimal input.
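
To illustrate what "identifying the principal component" means in practice, here is a minimal sketch of PCA on synthetic three-dimensional data, using only the Python standard library. It is not the authors' code: the data, the direction (1, 2, -1), the deterministic perturbation, and all function names are assumptions made for illustration. The leading component is found by power iteration on the covariance matrix.

```python
import math


def covariance_matrix(points):
    """Return the sample covariance matrix of a list of equal-length points."""
    n, d = len(points), len(points[0])
    mean = [sum(p[j] for p in points) / n for j in range(d)]
    centered = [[p[j] - mean[j] for j in range(d)] for p in points]
    return [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
            for i in range(d)]


def leading_component(cov, iterations=200):
    """Power iteration: return the leading eigenvector and its eigenvalue."""
    d = len(cov)
    v = [1.0] * d  # arbitrary start vector (assumed not orthogonal to the answer)
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    cov_v = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
    eigenvalue = sum(v[i] * cov_v[i] for i in range(d))
    return v, eigenvalue


# Synthetic data: points along the direction (1, 2, -1) plus a small
# deterministic perturbation. These values are invented for illustration.
points = [(t + 0.1 * math.sin(3 * t),
           2 * t + 0.1 * math.cos(5 * t),
           -t + 0.1 * math.sin(7 * t)) for t in range(-5, 6)]

cov = covariance_matrix(points)
component, eigenvalue = leading_component(cov)
total_variance = sum(cov[i][i] for i in range(len(cov)))
explained_ratio = eigenvalue / total_variance

print("principal direction:", [round(x, 3) for x in component])
print("explained variance ratio:", round(explained_ratio, 4))
```

The explained variance ratio plays the same role as the 89% figure quoted above: it measures how much of the spread in the data lies along a single direction in parameter space.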

The performance of all methods is compared based on the standard deviation (σ) of predicted compensation points. Unbiased systematic search required ~1000 measurements to reach σ ≈ 60 V/m. The plane-intersection model achieved this with 24 measurements. Both PCA and the 9-input ANN reached superior accuracy (σ ≈ 40 V/m) with only 3 measurements. The 4-input ANN further minimized the required input data to just 1 measurement.
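
As a rough illustration of this figure of merit, the sketch below computes the spread of a set of predicted compensation points. The summary does not state how σ is aggregated across the axes, so the per-axis sample standard deviation and its root-sum-of-squares combination are assumptions, and the sample values are invented for demonstration only.

```python
import math
import statistics


def prediction_spread(points):
    """Return per-axis sample standard deviations and their combined magnitude."""
    per_axis = [statistics.stdev(axis) for axis in zip(*points)]
    combined = math.sqrt(sum(s * s for s in per_axis))
    return per_axis, combined


# Hypothetical repeated predictions of a compensation point (made-up numbers).
predictions = [
    (100.0, 250.0, -40.0),
    (110.0, 245.0, -35.0),
    (95.0, 255.0, -45.0),
    (105.0, 250.0, -38.0),
]

per_axis, combined = prediction_spread(predictions)
print("per-axis sigma:", [round(s, 2) for s in per_axis])
print("combined sigma:", round(combined, 2))
```

A smaller spread means that repeated predictions agree more closely, which is how the methods above are ranked.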

In conclusion, the research demonstrates that PCA and ANN can learn the intricate correlations within an experimental system from limited data, enabling fast and accurate real-time compensation. The ANN method is particularly powerful due to its model-free nature. This machine learning framework offers a generic and highly efficient optimization strategy applicable to a wide range of experimental platforms in physics where precision and stability are paramount, from quantum computers and atomic clocks to particle accelerators.
