Relatedness Measures to Aid the Transfer of Building Blocks among Multiple Tasks

💡 Research Summary


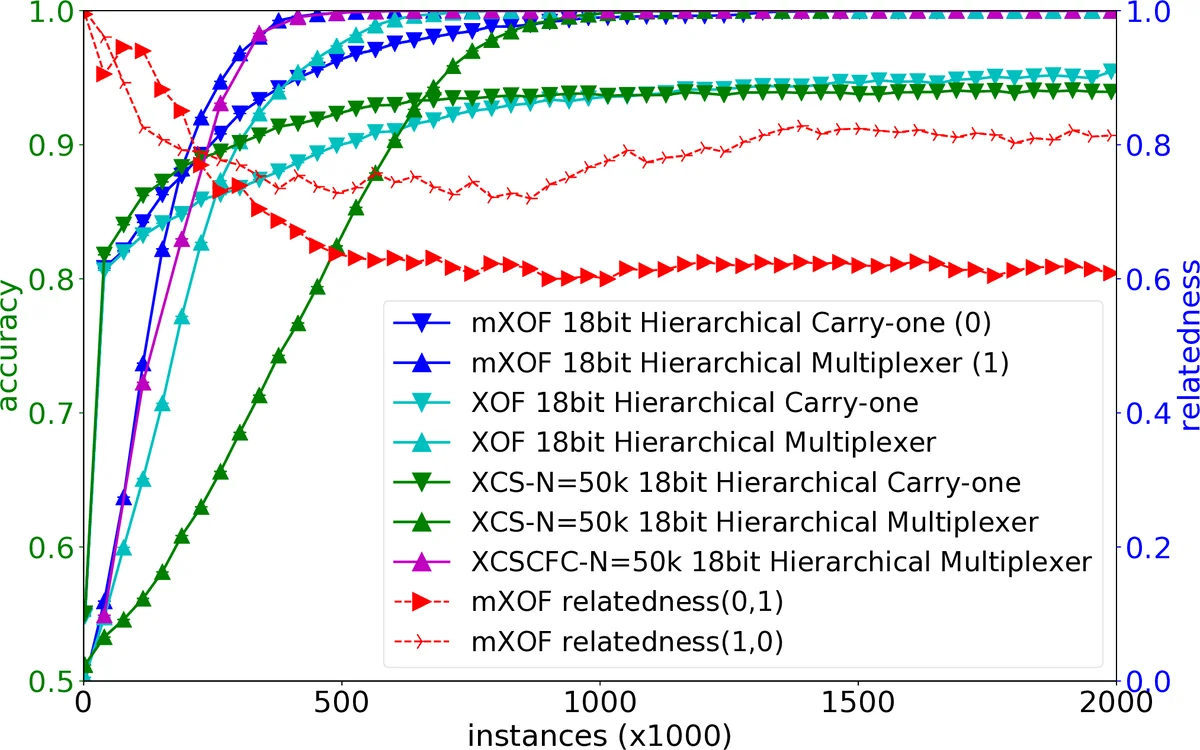

The paper introduces mXOF, a multi‑task learning framework built on top of the XOF learning classifier system (LCS), which itself extends XCS with an Online‑Feature generation (OF) module that creates tree‑based code fragments (CFs) as discriminative features. The central challenge addressed is the automatic control of knowledge transfer among tasks: while multi‑task learning (MTL) can boost performance by sharing useful knowledge, indiscriminate sharing can degrade performance when tasks are unrelated.

The authors propose to use each task’s Observed List (OL) – the set of CFs that have the highest discriminative power for that task – as a compact representation of the task’s “characteristic patterns”. By comparing two OLs, they estimate an asymmetric relatedness measure RelS(a,b), defined as the proportion of the total CF‑fitness of task a’s OL that is contributed by CFs also present in task b’s OL. The measure is asymmetric because the applicability of features from task a to task b may differ from the reverse direction (e.g., when one task is a subset of another).
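The definition of RelS(a,b) above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the representation of an OL as a dictionary from a CF identifier to that CF's fitness in the owning task, the function name `rel_s`, and the example CF names are all assumptions made for illustration.

```python
from typing import Dict


def rel_s(ol_a: Dict[str, float], ol_b: Dict[str, float]) -> float:
    """Asymmetric relatedness of task a towards task b (illustrative sketch).

    ol_a and ol_b map a CF identifier to that CF's fitness in the owning
    task (an assumed representation of the Observed List). The result is
    the share of task a's total OL fitness carried by CFs that also
    appear in task b's OL.
    """
    total_fitness = sum(ol_a.values())
    if total_fitness == 0:
        return 0.0  # an empty or zero-fitness OL carries no evidence
    shared_fitness = sum(f for cf, f in ol_a.items() if cf in ol_b)
    return shared_fitness / total_fitness


# Hypothetical OLs: the CF names and fitness values are made up.
ol_a = {"AND(x0,x1)": 0.5, "OR(x2,x3)": 0.25, "NOT(x4)": 0.25}
ol_b = {"AND(x0,x1)": 0.25, "XOR(x5,x6)": 0.75}

print(rel_s(ol_a, ol_b))  # share of a's OL fitness also found in b's OL
print(rel_s(ol_b, ol_a))  # generally different: the measure is asymmetric
```

Because the denominator is always the fitness total of the first argument's OL, swapping the arguments changes the result whenever the shared CFs carry different weight in the two lists.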

All XOF instances in mXOF share a common CF population, which enables tracking of each CF’s fitness across tasks. The relatedness values are transformed into transfer probabilities p₍i,j₎, governing how likely CFs generated by task i are offered to task j during rule construction. Transfer is driven automatically: high relatedness leads to frequent sharing, while low relatedness suppresses sharing, preventing harmful interference.
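The summary does not state how relatedness values are turned into transfer probabilities, so the following Python sketch assumes the simplest possible mapping: p₍i,j₎ is the relatedness value clipped to [0, 1]. The function names `transfer_probabilities` and `offer_cfs`, and the choice to test each CF independently against p₍i,j₎, are hypothetical and only illustrate the gating idea.

```python
import random
from typing import Dict, List, Tuple

Pair = Tuple[str, str]  # (source task i, target task j)


def transfer_probabilities(relatedness: Dict[Pair, float]) -> Dict[Pair, float]:
    """Map relatedness values to probabilities (assumed mapping: clip to [0, 1]).

    Self-pairs (i, i) are dropped because a task does not transfer to itself.
    """
    return {
        (i, j): min(1.0, max(0.0, r))
        for (i, j), r in relatedness.items()
        if i != j
    }


def offer_cfs(source_cfs: List[str], p: float, rng: random.Random) -> List[str]:
    """Offer each CF of the source task to the target task with probability p."""
    return [cf for cf in source_cfs if rng.random() < p]


# Hypothetical relatedness values between two tasks.
p = transfer_probabilities({("a", "b"): 0.5, ("b", "a"): 0.25, ("a", "a"): 1.0})
rng = random.Random(0)  # seeded only to make the example repeatable
offered = offer_cfs(["AND(x0,x1)", "OR(x2,x3)", "NOT(x4)"], p[("a", "b")], rng)
print(p)
print(offered)
```

Under this assumed mapping, a pair with near-zero relatedness almost never offers a CF, which matches the behaviour described for unrelated tasks.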

The system is evaluated on three experimental settings:

- Hierarchical Boolean problems – multiple tasks share low‑level Boolean sub‑patterns (e.g., AND, OR) but differ in higher‑level composition. The high overlap in OLs yields strong relatedness, and mXOF achieves 5‑8 % higher classification accuracy compared with independent XOF runs, demonstrating effective reuse of low‑level building blocks.

- Unrelated tasks – an 11‑bit Even Parity problem and a 10‑bit Carry problem are learned simultaneously. The OLs share virtually no CFs, resulting in near‑zero transfer probabilities; performance matches that of separate single‑task learners, confirming that the framework does not force detrimental transfer.

- UCI Zoo multi‑class classification – the original 7‑class dataset is transformed into seven binary classification tasks without any prior knowledge of inter‑class similarity. mXOF automatically discovers moderate relatedness between biologically similar classes (e.g., mammals vs. birds) and transfers useful CFs, yielding about a 3 % accuracy gain for those pairs, while unrelated class pairs see negligible transfer.
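The transformation of a multi‑class dataset into one binary task per class, as in the Zoo setting, can be sketched as a one‑vs‑rest relabelling. This is an assumption about how the split is done, since the summary does not spell it out; the function name `one_vs_rest_tasks` and the toy label vector are hypothetical.

```python
from typing import Dict, Hashable, List, Sequence


def one_vs_rest_tasks(labels: Sequence[Hashable]) -> Dict[Hashable, List[int]]:
    """Build one binary label vector per class (assumed one-vs-rest split).

    For class c, an instance is labelled 1 if its original label is c
    and 0 otherwise. The feature vectors are shared by all tasks.
    """
    classes = sorted(set(labels))
    return {c: [1 if y == c else 0 for y in labels] for c in classes}


# Toy label vector with three classes; a 7-class dataset would yield 7 tasks.
labels = [1, 2, 1, 3]
tasks = one_vs_rest_tasks(labels)
for cls, binary_labels in tasks.items():
    print(cls, binary_labels)
```

Each resulting task would then be handed to its own learner, with relatedness between tasks estimated from their OLs rather than from any prior class hierarchy.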

These results validate the hypothesis that the most discriminative features of a task encode its essential characteristics, and that OL‑based relatedness can guide selective feature transfer. Moreover, because the OL can be recomputed whenever a new task is introduced, mXOF naturally supports continual learning: new tasks can be added without retraining existing ones, and relatedness is instantly estimated to decide whether to share existing CFs.

The paper contributes a novel, scalable method for automatic, asymmetric knowledge transfer in MTL, bridging the gap between human‑like selective reuse of knowledge and algorithmic multi‑task systems. Future work suggested includes extending the approach to non‑Boolean domains (e.g., image or time‑series data), integrating deep feature extractors as CF analogues, and exploring multi‑objective transfer policies that balance accuracy, computational cost, and memory usage.
