Data Driven Estimation of Stochastic Switched Linear Systems of Unknown Order

We address the problem of learning the parameters of a mean square stable switched linear systems (SLS) with unknown latent space dimension, or \textit{order}, from its noisy input–output data. In particular, we focus on learning a good lower order approximation of the underlying model allowed by finite data. Motivated by subspace-based algorithms in system theory, we construct a Hankel-like matrix from finite noisy data using ordinary least squares. Such a formulation circumvents the non-convexities that arise in system identification, and allows for accurate estimation of the underlying SLS as data size increases. Since the model order is unknown, the key idea of our approach is model order selection based on purely data dependent quantities. We construct Hankel-like matrices from data of dimension obtained from the order selection procedure. By exploiting tools from theory of model reduction for SLS, we obtain suitable approximations via singular value decomposition (SVD) and show that the system parameter estimates are close to a balanced truncated realization of the underlying system with high probability.

💡 Research Summary

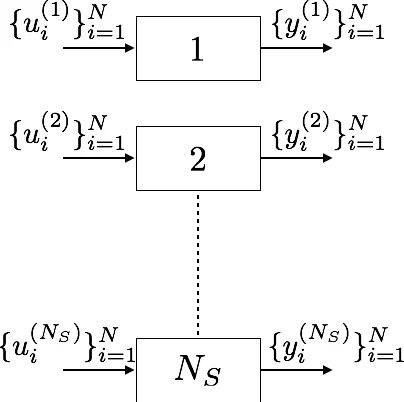

The paper tackles the challenging problem of identifying a mean‑square stable switched linear system (SLS) when the latent state dimension (order) is completely unknown and only noisy input‑output data together with the observed switching sequence are available. Classical system identification for linear time‑invariant (LTI) systems cannot be directly applied because, in an SLS, the number of possible switching patterns grows exponentially with the rollout length, causing the associated Hankel matrix to explode in size.

To overcome this, the authors first construct a “Hankel‑like” matrix from the data using ordinary least squares (OLS). For each observed switching pattern they estimate the corresponding input‑output block (C A_{\ell} B) (and (C A_{\ell})) and stack these blocks into a finite‑time estimator (\hat H(bN)). This estimator is purely linear‑algebraic; no non‑convex optimization is required, and the OLS step enjoys high‑probability error bounds under sub‑Gaussian noise assumptions.

A central contribution is a data‑dependent model‑order selection rule. The authors decompose the error (|H(\infty)-\hat H(bN)|_F) into an estimation error (which shrinks with the number of samples (N_S) and rollout length (N)) and a truncation error (which depends on the singular values of the true infinite‑dimensional Hankel matrix). By balancing these two terms they select a dimension (b) that guarantees, with high probability, a bound of the form

\

Comments & Academic Discussion

Loading comments...

Leave a Comment