Joint Subcarrier and Power Allocation in NOMA: Optimal and Approximate Algorithms

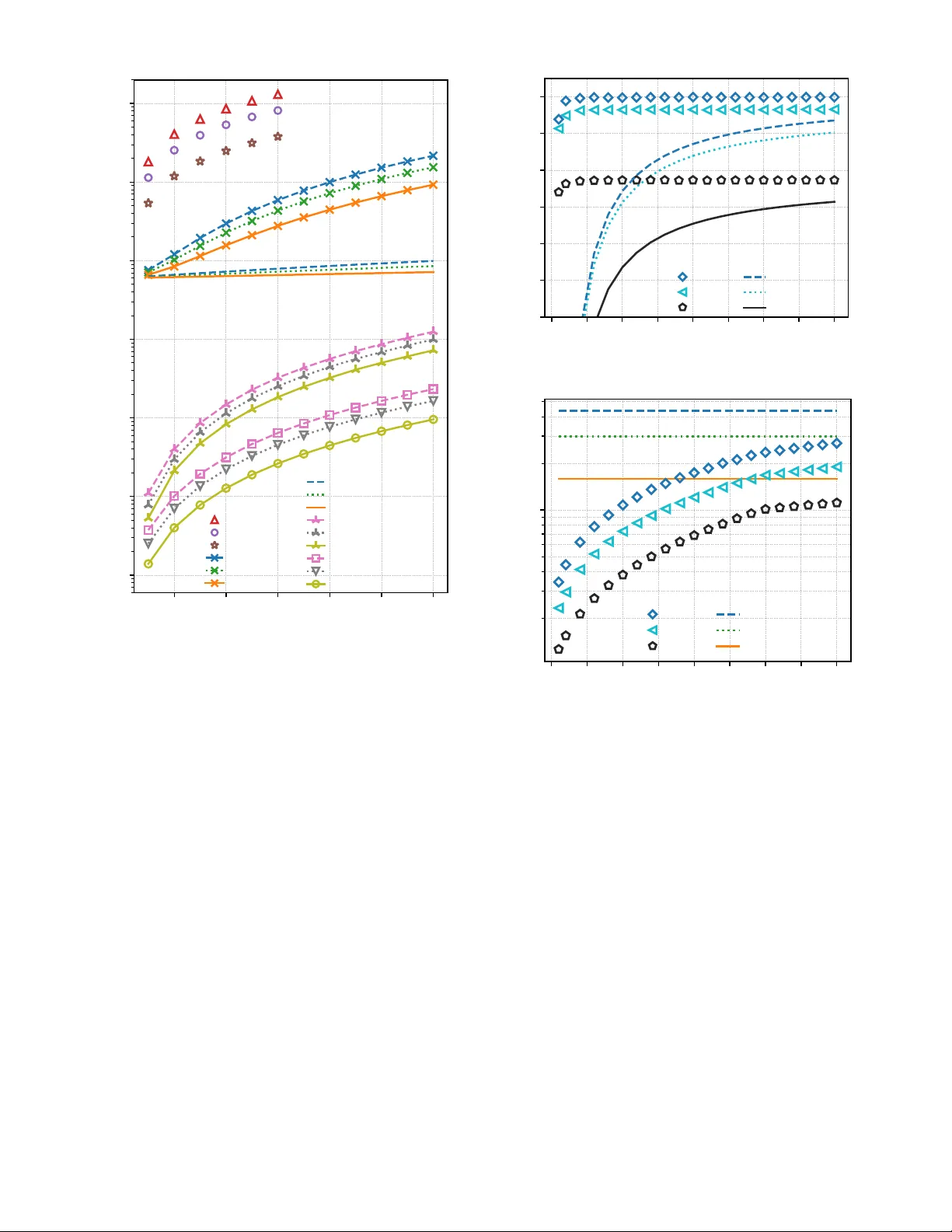

Non-orthogonal multiple access (NOMA) is a promising technology to increase the spectral efficiency and enable massive connectivity in 5G and future wireless networks. In contrast to orthogonal schemes, such as OFDMA, NOMA multiplexes several users o…

Authors: Lou Salaün, Marceau Coupechoux, Chung Shue Chen