Facial Electromyography-based Adaptive Virtual Reality Gaming for Cognitive Training

Cognitive training has shown promising results for delivering improvements in human cognition related to attention, problem solving, reading comprehension and information retrieval. However, two frequently cited problems in cognitive training literature are a lack of user engagement with the training programme, and a failure of developed skills to generalise to daily life. This paper introduces a new cognitive training (CT) paradigm designed to address these two limitations by combining the benefits of gamification, virtual reality (VR), and affective adaptation in the development of an engaging, ecologically valid CT task. Additionally, it incorporates facial electromyography (EMG) as a means of determining user affect while engaged in the CT task. This information is then utilised to dynamically adjust the game’s difficulty in real-time as users play, with the aim of leading them into a state of flow. Affect recognition rates of 64.1% and 76.2%, for valence and arousal respectively, were achieved by classifying a DWT-Haar approximation of the input signal using kNN. The affect-aware VR cognitive training intervention was then evaluated with a control group of older adults. The results obtained substantiate the notion that adaptation techniques can lead to greater feelings of competence and a more appropriate challenge of the user’s skills.

💡 Research Summary

The paper addresses two persistent shortcomings of cognitive training (CT) – low adherence and poor transfer of gains to everyday life – by integrating gamified virtual reality (VR) with affect‑driven difficulty adaptation based on facial electromyography (EMG). Two ecologically valid virtual environments were created: a supermarket for a working‑memory (WM) task and a multi‑room museum for an episodic‑memory (EM) task. Both tasks feature three preset difficulty levels (easy, medium, hard) to elicit a range of emotional responses.

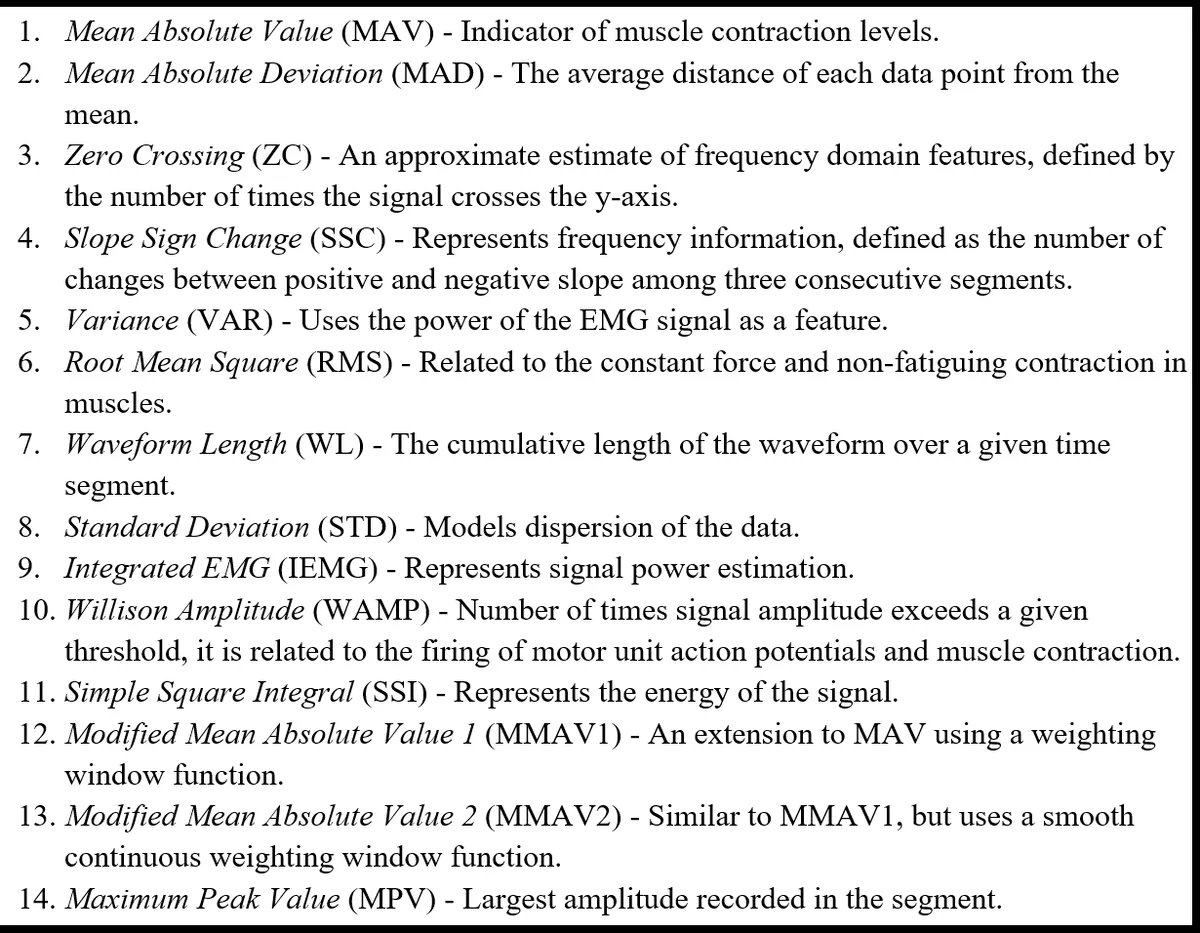

Facial EMG is recorded via surface electrodes (Faceteq device) while participants play. Every 45 seconds the game pauses, fades to black, and presents an in‑VR slider that lets users self‑report their experienced valence and arousal for the preceding interval. The EMG segment recorded during that interval is saved together with the self‑report, which serves as its ground‑truth label. For affect recognition, the raw EMG signal is decomposed using a discrete wavelet transform with a Haar basis; the approximation coefficients serve as temporal features. A k‑nearest‑neighbors (kNN) classifier, trained on these features and the self‑reports, yields recognition accuracies of 64.1 % for valence and 76.2 % for arousal. Although modest, these rates are comparable to prior EMG‑based emotion studies and are sufficient for real‑time adaptation in VR, where the head‑mounted display covers much of the face and makes camera‑based facial expression analysis impractical.
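The labelling, feature-extraction and classification steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the sampling rate, the number of wavelet levels (3), the odd-length padding rule, binarising slider ratings at their midpoint, and k = 3 are all hypothetical choices, and the helper names (`segment_epochs`, `haar_approximation`, `binarize_rating`, `knn_predict`) are illustrative only. In practice a wavelet library such as PyWavelets would replace the hand-rolled transform.

```python
import math
from collections import Counter
from typing import List, Sequence


def segment_epochs(signal: Sequence[float], fs: int, epoch_s: float = 45.0) -> List[List[float]]:
    """Split a continuous EMG stream into consecutive, non-overlapping epochs.

    A trailing partial epoch is discarded. `fs` (samples per second) is an assumption.
    """
    n = int(fs * epoch_s)
    return [list(signal[i:i + n]) for i in range(0, len(signal) - n + 1, n)]


def haar_approximation(signal: Sequence[float], levels: int = 3) -> List[float]:
    """Return the Haar DWT approximation coefficients after `levels` decompositions."""
    approx = [float(x) for x in signal]
    for _ in range(levels):
        if len(approx) < 2:
            break
        if len(approx) % 2:  # odd length: repeat the last sample (assumed padding rule)
            approx.append(approx[-1])
        # Haar low-pass step: scaled sum of each non-overlapping pair of samples.
        approx = [(approx[i] + approx[i + 1]) / math.sqrt(2)
                  for i in range(0, len(approx), 2)]
    return approx


def binarize_rating(rating: float, midpoint: float = 0.5) -> str:
    """Map a slider rating in [0, 1] to a 'low'/'high' class (hypothetical scheme)."""
    return "high" if rating >= midpoint else "low"


def knn_predict(train_x: Sequence[Sequence[float]], train_y: Sequence[str],
                query: Sequence[float], k: int = 3) -> str:
    """Majority vote among the k training vectors closest to `query` (Euclidean)."""
    ranked = sorted(zip(train_x, train_y), key=lambda xy: math.dist(xy[0], query))
    votes = Counter(label for _, label in ranked[:k])
    return votes.most_common(1)[0][0]


# Illustrative run on synthetic data (not real EMG): two quiet and two active epochs.
FS = 4  # hypothetical sampling rate in Hz, kept tiny so the demo is fast
quiet = segment_epochs([0.1, -0.1] * (FS * 45), fs=FS)
active = segment_epochs([0.9, 0.7] * (FS * 45), fs=FS)
epochs = quiet + active
ratings = [0.2] * len(quiet) + [0.8] * len(active)  # invented self-reports
features = [haar_approximation(e) for e in epochs]
labels = [binarize_rating(r) for r in ratings]
prediction = knn_predict(features, labels, haar_approximation([0.9, 0.7] * 90))
```

Each 45-second epoch paired with its self-report becomes one training example. Because all epochs have the same length, every feature vector has the same length too, which the Euclidean distance in `knn_predict` requires.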

The adaptive loop combines the affect estimate with performance metrics (accuracy, response time) to adjust game difficulty on the fly: low valence/high arousal (indicative of frustration) triggers a reduction in difficulty, while high valence with moderate arousal (indicative of flow) prompts an increase. This closed‑loop design aims to keep users in a flow‑like state, thereby improving motivation and learning efficiency.
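The adaptation rule above can be sketched as a small decision function. The summary gives only the qualitative rule, so this is a hypothetical sketch: every threshold, the assumption that valence and arousal arrive as scores in [0, 1] (for instance, the fraction of kNN neighbours voting "high"), and the way accuracy is folded in are all invented for illustration; response time is left out for brevity but could be added as a further condition. The three levels mirror the easy/medium/hard presets of each task.

```python
from enum import IntEnum


class Difficulty(IntEnum):
    """The three preset difficulty levels of each task."""
    EASY = 0
    MEDIUM = 1
    HARD = 2


# Hypothetical thresholds: the summary does not report the values actually used.
LOW_VALENCE = 0.4
HIGH_VALENCE = 0.6
HIGH_AROUSAL = 0.7
MODERATE_AROUSAL = (0.3, 0.7)
LOW_ACCURACY = 0.5
HIGH_ACCURACY = 0.8


def adjust_difficulty(current: Difficulty, valence: float, arousal: float,
                      accuracy: float) -> Difficulty:
    """Return the next difficulty level from affect estimates and task accuracy.

    valence, arousal and accuracy are assumed to lie in [0, 1].
    """
    frustrated = valence < LOW_VALENCE and arousal > HIGH_AROUSAL
    in_flow = (valence > HIGH_VALENCE
               and MODERATE_AROUSAL[0] <= arousal <= MODERATE_AROUSAL[1])
    if frustrated or accuracy < LOW_ACCURACY:
        step = -1  # frustration or poor accuracy: ease off
    elif in_flow and accuracy >= HIGH_ACCURACY:
        step = 1   # flow-like state and good accuracy: raise the challenge
    else:
        step = 0   # otherwise keep the current level
    # Clamp to the available presets.
    return Difficulty(min(max(int(current) + step, Difficulty.EASY), Difficulty.HARD))
```

Clamping at `EASY` and `HARD` means repeated frustration on the easiest level, or sustained flow on the hardest, leaves the level unchanged.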

A controlled user study with older adults (30 participants, mean age > 65) compared the affect‑aware adaptive condition against a non‑adaptive (fixed‑difficulty) control. Over four weeks (three 30‑minute sessions per week), participants completed both WM and EM tasks. Outcome measures included standard cognitive tests (WM span, spatial recall), self‑efficacy questionnaires, presence/flow scales, and in‑game performance statistics.

Results showed that the adaptive group achieved significantly higher gains on cognitive tests (≈12 % improvement over control, p < 0.05), reported greater self‑efficacy and immersion, and displayed better in‑game performance. These findings support the hypothesis that affect‑driven dynamic difficulty adjustment (DDA) can mitigate disengagement (the adherence problem) and that VR’s ecological validity can enhance transfer of trained skills to real‑world contexts (the transfer problem).

The authors acknowledge several limitations: (1) affect recognition accuracy remains limited, potentially leading to sub‑optimal difficulty adjustments; (2) the sample size and study duration are modest, preventing conclusions about long‑term retention; (3) EMG electrode placement may cause discomfort and could hinder adoption in broader populations. Future work is proposed to (a) explore deep‑learning feature extraction (e.g., CNN/LSTM) to boost affect classification; (b) incorporate multimodal biosignals (heart rate, skin conductance, eye tracking) for more robust affect inference; (c) test contact‑less EMG or alternative facial muscle sensing to improve user comfort; and (d) conduct longitudinal trials with larger, more diverse cohorts, including individuals with mild cognitive impairment or early‑stage dementia.

In summary, this study demonstrates a novel, affect‑aware VR cognitive training platform that leverages facial EMG to dynamically tailor task difficulty, thereby enhancing engagement, perceived competence, and measurable cognitive gains in older adults. The approach opens a promising avenue for personalized, immersive neurorehabilitation that bridges the gap between laboratory training and everyday functional abilities.
