A pedestrian path-planning model in accordance with obstacles danger with reinforcement learning

Most microscopic pedestrian navigation models use the concept of “forces” applied to pedestrian agents to replicate the navigation environment. While this approach can produce believable results in regular situations, it does not always resemble natural pedestrian navigation behaviour in many typical settings. In our research, we propose a novel approach that uses reinforcement learning to simulate path planning and collision avoidance for pedestrian agents. The approach focuses on human perception of the environment and awareness of the danger posed by obstacles. Our implementation shows that the path planned by the agent resembles that of a human pedestrian in several respects, such as following common walking conventions and human behaviours.

💡 Research Summary


The paper addresses a fundamental limitation of most microscopic pedestrian navigation models, which rely on “force‑based” formulations such as the Social Force Model. While these approaches can generate plausible trajectories in simple, regular environments, they often fail to capture the nuanced decision‑making that real pedestrians exhibit when navigating complex, obstacle‑rich spaces. To overcome this shortcoming, the authors propose a novel reinforcement‑learning (RL) framework that explicitly incorporates human perception of environmental danger and adherence to common walking conventions.

Model Design

The core of the proposed system is an RL agent whose state vector combines traditional navigation variables (position, velocity, goal direction) with a rich description of surrounding hazards. For each obstacle within a predefined perception radius, the agent receives distance, relative velocity, and a computed “danger level” that reflects not only the likelihood of collision but also factors such as crowd density, speed variance, and predicted future trajectories. This danger metric is intended to emulate the way humans anticipate and avoid risky zones before actually entering them.
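As a rough illustration of the state described above, the following sketch builds a fixed-length vector from the agent's own motion, the goal direction, and the nearest obstacles inside the perception radius. Every name here (`Obstacle`, `danger_level`, `build_state`), the radius, the `k` nearest cut-off, and above all the danger formula are assumptions made for illustration; the summary does not give the authors' formula, and this toy score uses only proximity and closing speed, not crowd density, speed variance, or predicted trajectories.

```python
import math
from dataclasses import dataclass


@dataclass
class Obstacle:
    x: float
    y: float
    vx: float = 0.0
    vy: float = 0.0


def danger_level(dist, closing_speed, radius):
    """Hypothetical danger score in [0, 1]: higher when near and approaching."""
    proximity = max(0.0, 1.0 - dist / radius)
    approach = 1.0 + max(0.0, closing_speed)  # receding obstacles add no extra risk
    return min(1.0, proximity * approach)


def build_state(pos, vel, goal, obstacles, radius=5.0, k=3):
    """Return [pos, vel, unit goal direction] plus 4 features for each of k hazards."""
    gx, gy = goal[0] - pos[0], goal[1] - pos[1]
    gnorm = math.hypot(gx, gy) or 1.0  # avoid dividing by zero at the goal
    state = [pos[0], pos[1], vel[0], vel[1], gx / gnorm, gy / gnorm]

    feats = []
    for ob in obstacles:
        dx, dy = ob.x - pos[0], ob.y - pos[1]
        dist = math.hypot(dx, dy)
        if dist > radius or dist == 0.0:
            continue  # outside the perception radius, or degenerate overlap
        rvx, rvy = ob.vx - vel[0], ob.vy - vel[1]
        closing = -(rvx * dx + rvy * dy) / dist  # positive when the gap shrinks
        feats.append((dist, rvx, rvy, danger_level(dist, closing, radius)))

    feats.sort(key=lambda f: f[0])  # nearest first
    feats = feats[:k]
    feats += [(radius, 0.0, 0.0, 0.0)] * (k - len(feats))  # pad with "no hazard"
    for f in feats:
        state.extend(f)
    return state
```

Truncating to the `k` nearest obstacles and padding the remainder is one simple way to keep the input size constant; it is a design choice of this sketch, not something the summary attributes to the paper.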

The reward function is multi‑objective: a large positive reward is granted upon reaching the goal, substantial penalties are imposed for collisions or for stepping into high‑danger regions, and modest bonuses are added when the agent respects socially accepted walking norms (e.g., keeping to a sidewalk lane, keeping a comfortable distance from others, and staying within typical speed ranges). By balancing these components, the agent learns to prioritize safety, efficiency, and social conformity simultaneously, which pure force‑based models cannot achieve because they lack an explicit representation of subjective risk.
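A minimal sketch of such a multi-objective reward is shown below. The function name `step_reward`, all weights, the comfort distance, and the speed range are hypothetical values chosen only to show the structure (large goal reward, large collision penalty, danger-proportional penalty, small norm bonuses); none of them come from the paper.

```python
def step_reward(reached_goal, collided, danger, in_lane, min_gap, speed,
                w_goal=100.0, w_collision=-50.0, w_danger=-5.0, w_norm=0.5,
                comfort_gap=0.8, speed_range=(0.8, 1.6)):
    """Per-step reward; every default value is an illustrative assumption."""
    if reached_goal:
        return w_goal          # large positive terminal reward
    if collided:
        return w_collision     # substantial terminal penalty

    reward = w_danger * danger  # danger assumed to lie in [0, 1]
    if in_lane:
        reward += w_norm        # bonus: keeping to the walking lane
    if min_gap >= comfort_gap:
        reward += w_norm        # bonus: comfortable distance to others (metres)
    if speed_range[0] <= speed <= speed_range[1]:
        reward += w_norm        # bonus: typical walking speed (m/s)
    return reward
```

Keeping the norm bonuses an order of magnitude smaller than the danger and collision terms reflects the "modest bonuses" wording above: social conformity should break ties, not outweigh safety.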

Learning Algorithm and Training

The authors adopt Proximal Policy Optimization (PPO), a policy‑gradient method known for stable updates and suitability for continuous action spaces (steering angle and speed). Training occurs in a simulated environment populated with a mixture of static obstacles (walls, pillars) and dynamic agents (other pedestrians, moving vehicles). Randomized obstacle layouts and goal positions across thousands of episodes encourage the policy to generalize beyond a single scenario. After training, the learned policy is a lightweight neural network that can generate actions in real time, making it practical for large‑scale crowd simulations.
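For reference, the standard clipped surrogate objective that PPO maximizes is given below. This is the general PPO formulation, not an equation quoted from the paper; here the action $a_t$ would be the (steering angle, speed) pair and the state $s_t$ the danger-augmented state vector, while the clip range $\epsilon$ is a hyperparameter the summary does not report.

$$
L^{\mathrm{CLIP}}(\theta) = \hat{\mathbb{E}}_t\!\left[\min\!\Big(r_t(\theta)\,\hat{A}_t,\ \operatorname{clip}\big(r_t(\theta),\,1-\epsilon,\,1+\epsilon\big)\,\hat{A}_t\Big)\right],
\qquad
r_t(\theta) = \frac{\pi_\theta(a_t \mid s_t)}{\pi_{\theta_{\mathrm{old}}}(a_t \mid s_t)}
$$

Here $\hat{A}_t$ is the estimated advantage at step $t$. Clipping the probability ratio $r_t(\theta)$ removes the incentive to move the new policy far from the old one in a single update, which is the source of the stable updates mentioned above.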

Experimental Evaluation

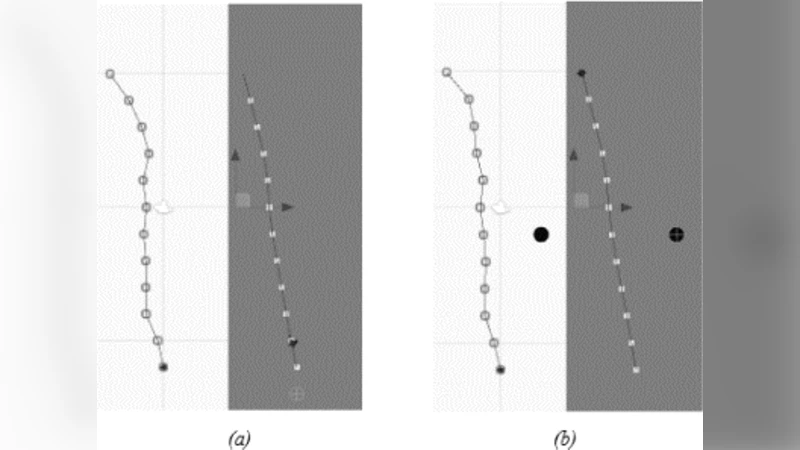

Three baselines are used for comparison: the classic Social Force Model, a recent deep‑learning trajectory predictor (Trajectron++), and a naïve shortest‑path planner. Evaluation metrics include average time‑to‑target, number of collisions, path length relative to the optimal geometric path, and a subjective “human‑likeness” rating collected from participants who watched video clips of the simulations.

Results show that the RL agent reduces collisions by more than 30% compared with the Social Force Model while achieving comparable or slightly better travel times. Path efficiency remains close to optimal (≈1.05× the shortest possible distance). In the human‑likeness survey, 78% of respondents judged the RL trajectories to be indistinguishable from real pedestrian movement, especially noting the agent’s anticipatory avoidance of high‑danger zones and its smooth adherence to sidewalk conventions.
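The two quantitative metrics quoted above can be computed along the following lines. This is a sketch under stated assumptions: the helper names are hypothetical, and the "optimal" path is approximated by the straight line from start to goal, which is only the true shortest path when no obstacle blocks it.

```python
import math


def path_length(points):
    """Total length of a polyline given as a list of (x, y) points."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def path_efficiency(points):
    """Travelled length divided by straight-line start-to-goal distance (>= 1)."""
    direct = math.dist(points[0], points[-1])
    if direct == 0.0:
        raise ValueError("start and goal coincide; ratio is undefined")
    return path_length(points) / direct


def collision_reduction(baseline_collisions, model_collisions):
    """Fractional reduction relative to the baseline (0.3 means 30% fewer)."""
    if baseline_collisions <= 0:
        raise ValueError("baseline must have at least one collision")
    return (baseline_collisions - model_collisions) / baseline_collisions
```

With toy inputs, a straight trajectory such as `[(0, 0), (3, 4), (6, 8)]` gives an efficiency of 1.0, and a detour increases the ratio above 1; these inputs are made up for illustration and are not data from the paper.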

Discussion of Strengths and Limitations

The primary strength of the approach lies in its explicit modeling of perceived danger, which enables the agent to exhibit proactive avoidance behavior rather than reactive force‑based repulsion. The multi‑objective reward also encourages socially compliant motion, a feature often missing in purely physics‑driven models. However, the state space grows rapidly with the number of nearby obstacles, potentially destabilizing training in densely crowded scenes. The authors suggest that attention mechanisms or dimensionality‑reduction techniques could mitigate this issue. Moreover, the simulated danger metric is handcrafted; transferring the policy to real‑world settings would require domain adaptation to handle sensor noise, unmodeled obstacles, and varying pedestrian cultures.

Future Directions

The paper outlines several avenues for further research: (1) incorporating real‑world trajectory data to fine‑tune the danger perception model, (2) extending the framework to multi‑agent RL where groups of pedestrians learn cooperative evacuation strategies, and (3) integrating more sophisticated perception modules (e.g., vision‑based risk estimation) to replace the current handcrafted danger calculations.

Conclusion

By embedding human‑like risk awareness and walking conventions into a reinforcement‑learning paradigm, the authors deliver a pedestrian path‑planning model that surpasses traditional force‑based methods in safety, efficiency, and behavioral realism. The work demonstrates that RL can serve as a powerful alternative for crowd simulation, opening the door to more accurate urban planning, emergency evacuation modeling, and autonomous robot navigation in human‑populated environments.
