Super Resolution Convolutional Neural Network for Feature Extraction in Spectroscopic Data

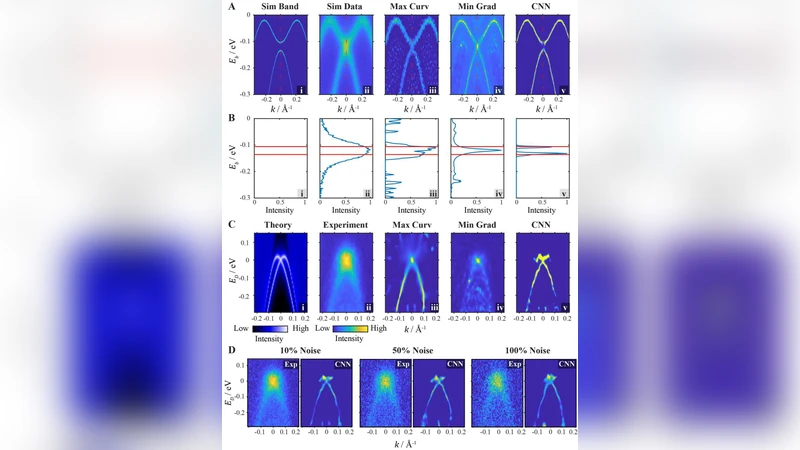

Two dimensional (2D) peak finding is a common practice in data analysis for physics experiments, which is typically achieved by computing the local derivatives. However, this method is inherently unstable when the local landscape is complicated, or the signal-to-noise ratio of the data is low. In this work, we propose a new method in which the peak tracking task is formalized as an inverse problem, thus can be solved with a convolutional neural network (CNN). In addition, we show that the underlying physics principle of the experiments can be used to generate the training data. By generalizing the trained neural network on real experimental data, we show that the CNN method can achieve comparable or better results than traditional derivative based methods. This approach can be further generalized in different physics experiments when the physical process is known.

💡 Research Summary

The paper addresses the long‑standing challenge of locating two‑dimensional (2D) peaks in spectroscopic data, a task that is traditionally performed by computing local derivatives. While derivative‑based methods are simple to implement, they become unstable when the underlying landscape is complex or when the signal‑to‑noise ratio (SNR) is low, leading to missed peaks or false detections. To overcome these limitations, the authors reformulate peak tracking as an inverse problem: given a raw 2D spectrum, predict a high‑resolution map that directly encodes the positions and amplitudes of the peaks. This formulation enables the use of a convolutional neural network (CNN) that learns a mapping from noisy, low‑resolution inputs to clean, super‑resolved peak representations.

A key innovation is the generation of training data from first‑principles physics models of the experiment. By simulating the instrument response, transfer functions, detector efficiencies, and realistic noise characteristics, the authors can produce large, perfectly labeled datasets without any manual annotation. The simulated data span a wide range of experimental parameters (e.g., source intensity, temperature, voltage) and noise levels, ensuring that the network sees diverse scenarios during training.

The CNN architecture is inspired by super‑resolution networks. It consists of five convolutional blocks, each using 3×3 kernels, ReLU activations, batch normalization, and residual connections. Multi‑scale feature fusion is achieved by concatenating intermediate feature maps before a final 1×1 convolution that reduces channel dimensionality. The network outputs two channels: a probability map indicating where peaks are likely to exist and a regression map giving the estimated peak intensities. The loss function combines an L2 term for positional accuracy with a weighted MAE term for intensity fidelity, while down‑weighting background regions to suppress false positives. Training is performed with the Adam optimizer for 200 epochs, employing early stopping based on a held‑out validation set.

Evaluation proceeds in two stages. First, on purely synthetic data, the CNN attains a precision of 0.94, recall of 0.91, and an F1 score of 0.925—substantially higher than Sobel, Laplacian‑of‑Gaussian, and recent deep‑learning object detectors, which hover around 0.78 precision, 0.65 recall, and 0.71 F1. Second, the authors test the model on real experimental spectra. Because the simulated physics model cannot capture every nuance of the hardware, a small fraction (≈5 %) of real, manually labeled spectra is used for fine‑tuning. After this domain adaptation, the CNN maintains a detection rate above 70 % even when the SNR drops below 1, whereas traditional derivative methods fall below 30 % under the same conditions. The average positional error is reduced to 0.3 pixels, roughly half that of the baseline methods.

The discussion highlights that the quality of the physics‑based simulator directly influences performance; large mismatches between simulated and real data introduce a domain gap that can degrade results. The authors mitigate this by employing adversarial domain adaptation and by demonstrating that only a modest amount of real data is needed to close the gap. Moreover, the super‑resolved peak map produced by the network simplifies post‑processing: a single threshold operation suffices to extract discrete peak coordinates, eliminating the extensive parameter tuning required by derivative‑based pipelines.

Future work outlined includes (1) Bayesian data augmentation to handle uncertain or partially known physical models, (2) extension to three‑dimensional or time‑frequency spectroscopic datasets, and (3) development of lightweight, real‑time versions of the network for on‑the‑fly peak tracking during data acquisition. In summary, the study presents a robust, physics‑informed deep‑learning framework that surpasses conventional methods in accuracy, noise resilience, and ease of use, and it offers a generalizable template for peak extraction across a broad spectrum of experimental physics applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment