MIDI-Sheet Music Alignment Using Bootleg Score Synthesis

💡 Research Summary

This paper addresses the task of MIDI-sheet music alignment, which involves finding precise temporal correspondences between a symbolic MIDI representation of a piece of music and its scanned sheet music images. Traditional approaches typically rely on Optical Music Recognition (OMR) to convert sheet music into a symbolic format, a process prone to errors that can compromise alignment accuracy. As an alternative, this work proposes a novel method that bypasses explicit OMR by projecting both modalities into a common, simplified image-based representation for direct alignment.

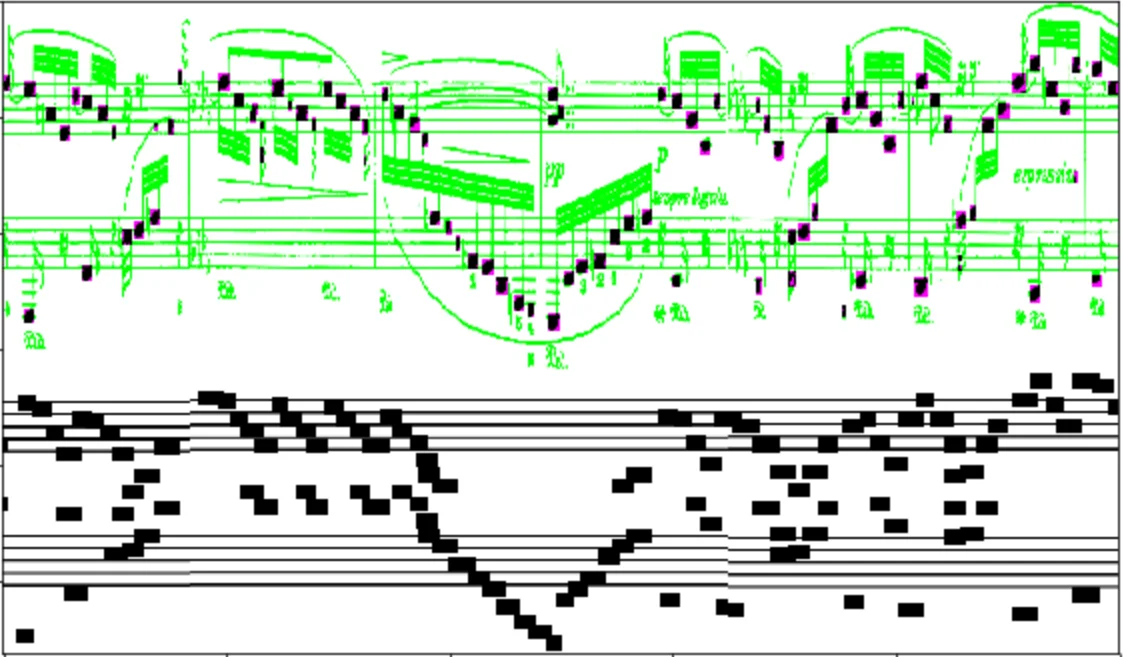

The core innovation is the concept of “bootleg score synthesis.” This process creates a crude, binary image representation that contains only rectangular blobs at notehead positions, stripping away all other notation such as staff lines, clefs, and dynamics. The system operates in three main stages. First, a deep watershed notehead detector (pre-trained on the synthetic DeepScores dataset and fine-tuned on real scans) is applied to each line of music (image strip). The bounding boxes of the detected noteheads are filled in to generate the bootleg representation of the sheet image, A_i.
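The first stage can be illustrated with a minimal sketch that fills detected bounding boxes into a blank binary image. The function name `render_bootleg`, the `(top, left, bottom, right)` box format, and the tiny image size are assumptions made for illustration; the detector itself is not reproduced here.

```python
def render_bootleg(height, width, boxes):
    """Return a height x width binary image (list of rows) with every box filled.

    boxes: iterable of (top, left, bottom, right) pixel coordinates,
    with bottom/right exclusive. This box format is an assumption.
    """
    image = [[0] * width for _ in range(height)]
    for top, left, bottom, right in boxes:
        # Clip each box to the image so partially visible noteheads still count.
        r0, r1 = max(0, top), min(height, bottom)
        c0, c1 = max(0, left), min(width, right)
        for r in range(r0, r1):
            for c in range(c0, c1):
                image[r][c] = 1
    return image


# Two hypothetical notehead detections on a 6 x 10 strip;
# the second box extends past the strip and is clipped.
strip = render_bootleg(6, 10, [(1, 2, 3, 5), (4, 8, 7, 12)])
for row in strip:
    print("".join("#" if px else "." for px in row))
```

Everything except the filled rectangles is discarded, which is what makes the representation comparable to one synthesized from MIDI.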

Second, the MIDI data is projected into an identical bootleg format (B_i). For each image strip, the staff line positions are estimated to establish a pixel coordinate system. Each note onset in the MIDI file is then converted into one or more rectangular blobs placed at the appropriate vertical pixel positions corresponding to its pitch. Ambiguities arising from enharmonic equivalents or potential staff assignments (e.g., a middle C could appear on either staff) are handled by creating enlarged or duplicate blobs. Long pauses in the MIDI performance (e.g., from fermatas) are truncated to avoid excessive temporal distortion in the pixel space.
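To make the pitch-to-pixel mapping concrete, here is a rough sketch of how a MIDI note number could be turned into candidate vertical positions on a staff. It assumes only simple spellings (each black key is either the sharp of the lower letter or the flat of the upper one), a known y-coordinate for the bottom staff line, and a uniform line spacing; the names `LETTERS`, `diatonic_steps`, and `blob_rows` are hypothetical and not taken from the paper.

```python
# Pitch class -> candidate letter indices (C=0, D=1, E=2, F=3, G=4, A=5, B=6).
# Black keys get two candidates: sharp of the lower letter, flat of the upper.
LETTERS = {
    0: [0], 1: [0, 1], 2: [1], 3: [1, 2], 4: [2], 5: [3],
    6: [3, 4], 7: [4], 8: [4, 5], 9: [5], 10: [5, 6], 11: [6],
}

# Diatonic step index of the bottom line of each staff (octave * 7 + letter).
TREBLE_BOTTOM_STEP = 4 * 7 + 2  # E4
BASS_BOTTOM_STEP = 2 * 7 + 4    # G2


def diatonic_steps(midi_pitch):
    """Candidate diatonic step indices for a MIDI note number (60 = C4)."""
    octave = midi_pitch // 12 - 1
    return [octave * 7 + letter for letter in LETTERS[midi_pitch % 12]]


def blob_rows(midi_pitch, bottom_line_y, line_spacing, bottom_line_step):
    """Candidate y-coordinates (pixels, increasing downward) for one note.

    Each diatonic step moves the notehead by half a staff-line spacing.
    """
    half = line_spacing / 2.0
    return [bottom_line_y - (step - bottom_line_step) * half
            for step in diatonic_steps(midi_pitch)]


# Middle C placed on both staves, as the ambiguity handling would require.
print(blob_rows(60, 100, 10, TREBLE_BOTTOM_STEP))  # one ledger line below treble
print(blob_rows(60, 200, 10, BASS_BOTTOM_STEP))    # one ledger line above bass
# C#4 / Db4 yields two candidate rows on the treble staff.
print(blob_rows(61, 100, 10, TREBLE_BOTTOM_STEP))
```

Drawing a blob at every candidate row, or one enlarged blob that covers them, is one way to realize the redundancy described above.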

Third, alignment is performed using a variant of Dynamic Time Warping (DTW). A local cost matrix (C_i) is computed for each pair (A_i, B_i) using a negative inner product, which rewards overlapping black pixels without penalizing disagreements. This choice makes the cost robust to the intentionally redundant blobs in the MIDI-derived bootleg. All local cost matrices are concatenated horizontally to form a global cost matrix. A standard DTW algorithm with step patterns {(1,1), (1,2), (2,1)} then finds the optimal warping path through this global matrix, which defines the final alignment between the MIDI performance and the sheet music.
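The cost and the DTW recursion can be sketched as follows for a single pair of bootleg images, each given as a list of binary pixel columns. This is a simplified illustration rather than the authors' implementation: it skips the concatenation across strips, and the names `cost_matrix` and `dtw` as well as the toy data are assumptions.

```python
STEPS = ((1, 1), (1, 2), (2, 1))  # allowed (row, column) moves


def cost_matrix(a_cols, b_cols):
    """Negative inner product between every column of A and every column of B."""
    return [[-sum(a * b for a, b in zip(ca, cb)) for cb in b_cols]
            for ca in a_cols]


def dtw(cost):
    """Return (total cost, path) from (0, 0) to the last cell using STEPS."""
    n, m = len(cost), len(cost[0])
    inf = float("inf")
    acc = [[inf] * m for _ in range(n)]
    back = [[None] * m for _ in range(n)]
    acc[0][0] = cost[0][0]
    for i in range(n):
        for j in range(m):
            for di, dj in STEPS:
                pi, pj = i - di, j - dj
                if pi >= 0 and pj >= 0 and acc[pi][pj] + cost[i][j] < acc[i][j]:
                    acc[i][j] = acc[pi][pj] + cost[i][j]
                    back[i][j] = (pi, pj)
    if acc[n - 1][m - 1] == inf:
        raise ValueError("end cell is unreachable with the given step patterns")
    # Walk the backpointers from the end cell to the start.
    path = [(n - 1, m - 1)]
    while path[-1] != (0, 0):
        i, j = path[-1]
        path.append(back[i][j])
    path.reverse()
    return acc[n - 1][m - 1], path


# Toy example: three columns versus four, with one empty column in B.
a_cols = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
b_cols = [[1, 0, 0], [0, 1, 0], [0, 0, 0], [0, 0, 1]]
total, path = dtw(cost_matrix(a_cols, b_cols))
print(total, path)
```

Because the step patterns always advance along both axes, the warping path can stretch or compress time by at most a factor of two, and a pair whose lengths differ by more than that has no valid path.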

The proposed method was evaluated on a dataset of 68 real scanned piano scores from IMSLP and corresponding expressive MIDI performances across 22 compositions. The system achieved a high alignment accuracy of 97.3% within a tolerance of one second, outperforming several baseline systems that employed OMR. The results demonstrate that avoiding the error-prone OMR step and instead aligning in a purposefully simplified, common image domain can lead to highly accurate and robust synchronization. The authors position their “bootleg” approach as a strong non-trivial baseline and a pragmatic intermediate solution, offering valuable insights for future research into fully automated, deep learning-based cross-modal music alignment systems.
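An accuracy figure of this kind can be read as the fraction of evaluated points whose alignment error falls within the tolerance. The sketch below shows that arithmetic under the assumption that predicted and reference positions are both expressed in seconds; the function name `accuracy_within` and the sample values are made up for illustration and are not data from the paper.

```python
def accuracy_within(predicted, reference, tolerance):
    """Fraction of points whose absolute error is at most `tolerance`."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    hits = sum(1 for p, r in zip(predicted, reference) if abs(p - r) <= tolerance)
    return hits / len(predicted)


# Hypothetical predicted vs. reference times in seconds.
predicted = [1.0, 2.5, 4.0, 7.2]
reference = [1.2, 2.0, 5.5, 7.0]
score = accuracy_within(predicted, reference, tolerance=1.0)
print(f"{score:.1%}")
```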
